Quick Start Guide: NVIDIA Jetson with Ultralytics YOLO26

This guide walks through deploying Ultralytics YOLO26 on NVIDIA Jetson devices and presents performance benchmarks that show what YOLO26 can do on these small but powerful devices.

This guide now covers the latest NVIDIA Jetson AGX Thor Developer Kit, which delivers up to 2070 FP4 TFLOPS of AI compute and 128 GB of memory, with power configurable between 40 W and 130 W. It delivers over 7.5x higher AI compute than NVIDIA Jetson AGX Orin, with 3.5x better energy efficiency, to run the most popular AI models.


This guide has been tested with the following devices:

- NVIDIA Jetson AGX Thor Developer Kit (Jetson T5000) running the latest stable JetPack release, JP7.0

- NVIDIA Jetson AGX Orin Developer Kit (64GB) running JP6.2

- NVIDIA Jetson Orin Nano Super Developer Kit running JP6.1

- Seeed Studio reComputer J4012, based on NVIDIA Jetson Orin NX 16GB, running JP6.0 and JP5.1.3

- Seeed Studio reComputer J1020 v2, based on NVIDIA Jetson Nano 4GB, running JP4.6.1

It is expected to work across the entire NVIDIA Jetson hardware lineup, including the latest and legacy devices.

What is NVIDIA Jetson?

NVIDIA Jetson is a series of embedded computing boards designed to bring accelerated AI (artificial intelligence) computing to edge devices. These compact and powerful devices are built around NVIDIA's GPU architecture and can run complex AI algorithms and deep learning models directly on the device, without relying on cloud computing resources. Jetson boards are often used in robotics, autonomous vehicles, industrial automation, and other applications where AI inference needs to be performed locally with low latency and high efficiency. Additionally, these boards are based on the ARM64 architecture and run at lower power compared to traditional GPU computing devices.

NVIDIA Jetson Series Comparison

NVIDIA Jetson AGX Thor is the latest iteration of the NVIDIA Jetson family. It is based on the NVIDIA Blackwell architecture, which brings drastically improved AI performance compared to previous generations. The table below compares a few of the Jetson devices in the ecosystem.

| | Jetson AGX Thor (T5000) | Jetson AGX Orin 64GB | Jetson Orin NX 16GB | Jetson Orin Nano Super | Jetson AGX Xavier | Jetson Xavier NX | Jetson Nano |
|---|---|---|---|---|---|---|---|
| AI Performance | 2070 TFLOPS (FP4) | 275 TOPS | 100 TOPS | 67 TOPS | 32 TOPS | 21 TOPS | 472 GFLOPS |
| GPU | 2560-core NVIDIA Blackwell architecture GPU with 96 Tensor Cores | 2048-core NVIDIA Ampere architecture GPU with 64 Tensor Cores | 1024-core NVIDIA Ampere architecture GPU with 32 Tensor Cores | 1024-core NVIDIA Ampere architecture GPU with 32 Tensor Cores | 512-core NVIDIA Volta architecture GPU with 64 Tensor Cores | 384-core NVIDIA Volta™ architecture GPU with 48 Tensor Cores | 128-core NVIDIA Maxwell™ architecture GPU |
| GPU Max Frequency | 1.57 GHz | 1.3 GHz | 918 MHz | 1020 MHz | 1377 MHz | 1100 MHz | 921 MHz |
| CPU | 14-core Arm® Neoverse®-V3AE 64-bit CPU 1MB L2 + 16MB L3 | 12-core NVIDIA Arm® Cortex A78AE v8.2 64-bit CPU 3MB L2 + 6MB L3 | 8-core NVIDIA Arm® Cortex A78AE v8.2 64-bit CPU 2MB L2 + 4MB L3 | 6-core Arm® Cortex®-A78AE v8.2 64-bit CPU 1.5MB L2 + 4MB L3 | 8-core NVIDIA Carmel Arm®v8.2 64-bit CPU 8MB L2 + 4MB L3 | 6-core NVIDIA Carmel Arm®v8.2 64-bit CPU 6MB L2 + 4MB L3 | Quad-Core Arm® Cortex®-A57 MPCore processor |
| CPU Max Frequency | 2.6 GHz | 2.2 GHz | 2.0 GHz | 1.7 GHz | 2.2 GHz | 1.9 GHz | 1.43 GHz |
| Memory | 128GB 256-bit LPDDR5X 273GB/s | 64GB 256-bit LPDDR5 204.8GB/s | 16GB 128-bit LPDDR5 102.4GB/s | 8GB 128-bit LPDDR5 102 GB/s | 32GB 256-bit LPDDR4x 136.5GB/s | 8GB 128-bit LPDDR4x 59.7GB/s | 4GB 64-bit LPDDR4 25.6GB/s |

For a more detailed comparison table, visit the Compare Specifications section of the official NVIDIA Jetson page.

What is NVIDIA JetPack?

The NVIDIA JetPack SDK, which powers the Jetson modules, provides a full development environment for building end-to-end accelerated AI applications and shortens time to market. JetPack includes Jetson Linux with bootloader, Linux kernel, and Ubuntu desktop environment, plus a complete set of libraries for acceleration of GPU computing, multimedia, graphics, and computer vision. It also includes samples, documentation, and developer tools for both the host computer and the developer kit, and supports higher-level SDKs such as DeepStream for streaming video analytics, Isaac for robotics, and Riva for conversational AI.

Flash JetPack to NVIDIA Jetson

The first step after getting your hands on an NVIDIA Jetson device is to flash NVIDIA JetPack to the device. There are several different ways of flashing NVIDIA Jetson devices.

1. If you own an official NVIDIA Development Kit such as the Jetson AGX Thor Developer Kit, you can download an image and prepare a bootable USB stick to flash JetPack to the included SSD.

2. If you own an official NVIDIA Development Kit such as the Jetson Orin Nano Developer Kit, you can download an image and prepare an SD card with JetPack for booting the device.

3. If you own any other NVIDIA Development Kit, you can flash JetPack to the device using SDK Manager.

4. If you own a Seeed Studio reComputer J4012 device, you can flash JetPack to the included SSD, and if you own a Seeed Studio reComputer J1020 v2 device, you can flash JetPack to the eMMC/SSD.

5. If you own any other third-party device powered by an NVIDIA Jetson module, it is recommended to follow command-line flashing.

For methods 1, 4 and 5 above, after flashing the system and booting the device, please enter "sudo apt update && sudo apt install nvidia-jetpack -y" on the device terminal to install all the remaining JetPack components needed.

JetPack Support Based on Jetson Device

The table below shows the NVIDIA JetPack versions supported by different NVIDIA Jetson devices.

| | JetPack 4 | JetPack 5 | JetPack 6 | JetPack 7 |
|---|---|---|---|---|
| Jetson Nano | ✅ | ❌ | ❌ | ❌ |
| Jetson TX2 | ✅ | ❌ | ❌ | ❌ |
| Jetson Xavier NX | ✅ | ✅ | ❌ | ❌ |
| Jetson AGX Xavier | ✅ | ✅ | ❌ | ❌ |
| Jetson AGX Orin | ❌ | ✅ | ✅ | ❌ |
| Jetson Orin NX | ❌ | ✅ | ✅ | ❌ |
| Jetson Orin Nano | ❌ | ✅ | ✅ | ❌ |
| Jetson AGX Thor | ❌ | ❌ | ❌ | ✅ |
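To find out which column applies to a device that is already flashed, one option is to read the Jetson Linux (L4T) release string. The sketch below assumes the release file lives at /etc/nv_tegra_release and that its first line looks like `# R36 (release), REVISION: 4.0, ...`. The mapping from L4T major release to JetPack major version and the helper names are assumptions for illustration, not part of this guide, so check them against NVIDIA's release notes.

```python
import re
from pathlib import Path
from typing import Optional

# Assumed mapping from L4T major release to JetPack major version.
L4T_TO_JETPACK = {32: 4, 34: 5, 35: 5, 36: 6, 38: 7}


def jetpack_major_from_release(text: str) -> Optional[int]:
    """Return the JetPack major version for a release string, or None if unknown."""
    match = re.search(r"R(\d+)\s*\(release\)", text)
    if match is None:
        return None
    return L4T_TO_JETPACK.get(int(match.group(1)))


def detect_jetpack_major(path: str = "/etc/nv_tegra_release") -> Optional[int]:
    """Read the release file if present (it is absent on non-Jetson hosts)."""
    release_file = Path(path)
    if not release_file.is_file():
        return None
    return jetpack_major_from_release(release_file.read_text())


if __name__ == "__main__":
    print("Detected JetPack major version:", detect_jetpack_major())
```

On a machine that is not a Jetson, `detect_jetpack_major()` simply returns `None`.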

Quick Start with Docker

The fastest way to get started with Ultralytics YOLO26 on NVIDIA Jetson is to run one of the pre-built Docker images for Jetson. Refer to the table above and choose the JetPack version that matches your Jetson device.

t=ultralytics/ultralytics:latest-jetson-jetpack4

sudo docker pull $t && sudo docker run -it --ipc=host --runtime=nvidia $t

After this is done, skip to the Use TensorRT on NVIDIA Jetson section.
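As a rough illustration of choosing an image from the support table, the sketch below maps a device to its supported JetPack major versions and builds a tag. Only the `latest-jetson-jetpack4` tag appears in this guide; the assumption that other JetPack versions follow the same `jetpackN` suffix is unverified (a JetPack 7 image in particular may be named differently or not exist), and `docker_tag` is a hypothetical helper. Check the image registry before relying on it.

```python
from typing import Optional

# JetPack major versions per device, copied from the support table in this guide.
SUPPORTED_JETPACK = {
    "Jetson Nano": [4],
    "Jetson TX2": [4],
    "Jetson Xavier NX": [4, 5],
    "Jetson AGX Xavier": [4, 5],
    "Jetson AGX Orin": [5, 6],
    "Jetson Orin NX": [5, 6],
    "Jetson Orin Nano": [5, 6],
    "Jetson AGX Thor": [7],
}


def docker_tag(device: str, jetpack: Optional[int] = None) -> str:
    """Build an image tag for a device, defaulting to its newest supported JetPack."""
    supported = SUPPORTED_JETPACK[device]
    if jetpack is None:
        jetpack = max(supported)
    if jetpack not in supported:
        raise ValueError(f"{device} does not support JetPack {jetpack}")
    # ASSUMPTION: the tag suffix pattern is inferred from the single tag shown above.
    return f"ultralytics/ultralytics:latest-jetson-jetpack{jetpack}"


if __name__ == "__main__":
    print(docker_tag("Jetson Nano"))
```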

Start with Native Installation

For a native installation without Docker, please refer to the steps below.

Run on JetPack 7.0

Install Ultralytics Package

Here we install the Ultralytics package on the Jetson with optional dependencies so that we can export PyTorch models to other formats. We focus mainly on NVIDIA TensorRT exports because TensorRT gets the most performance out of Jetson devices.

- Update the package list, install pip, and upgrade it to the latest version

sudo apt update

sudo apt install python3-pip -y

pip install -U pip

- Install the ultralytics pip package with optional dependencies

pip install ultralytics[export]

- Reboot the device

sudo reboot

Install PyTorch and Torchvision

The ultralytics installation above also installs Torch and Torchvision. However, the versions installed via pip are not compatible with the Jetson AGX Thor, which ships with JetPack 7.0 and CUDA 13, so we need to install them manually.

Install torch and torchvision according to JP7.0

pip install torch torchvision --index-url https://download.pytorch.org/whl/cu130

Install onnxruntime-gpu

The onnxruntime-gpu package hosted in PyPI does not have aarch64 binaries for the Jetson. So we need to manually install this package. This package is needed for some of the exports.

Here we will download and install onnxruntime-gpu 1.24.0 with Python3.12 support.

pip install https://github.com/ultralytics/assets/releases/download/v0.0.0/onnxruntime_gpu-1.24.0-cp312-cp312-linux_aarch64.whl

Run on JetPack 6.1

Install Ultralytics Package

Here we install the Ultralytics package on the Jetson with optional dependencies so that we can export PyTorch models to other formats. We focus mainly on NVIDIA TensorRT exports because TensorRT gets the most performance out of Jetson devices.

- Update the package list, install pip, and upgrade it to the latest version

sudo apt update

sudo apt install python3-pip -y

pip install -U pip

- Install the ultralytics pip package with optional dependencies

pip install ultralytics[export]

- Reboot the device

sudo reboot

Install PyTorch and Torchvision

The above ultralytics installation will install Torch and Torchvision. However, these two packages installed via pip are not compatible with the Jetson platform, which is based on ARM64 architecture. Therefore, we need to manually install a pre-built PyTorch pip wheel and compile or install Torchvision from source.

Install torch 2.10.0 and torchvision 0.25.0 according to JP6.1

pip install https://github.com/ultralytics/assets/releases/download/v0.0.0/torch-2.10.0-cp310-cp310-linux_aarch64.whl

pip install https://github.com/ultralytics/assets/releases/download/v0.0.0/torchvision-0.25.0-cp310-cp310-linux_aarch64.whl

Visit the PyTorch for Jetson page to access all versions of PyTorch for different JetPack versions. For a more detailed list of PyTorch and Torchvision compatibility, visit the PyTorch and Torchvision compatibility page.

Install cuDSS to fix a dependency issue with torch 2.10.0

wget https://developer.download.nvidia.com/compute/cudss/0.7.1/local_installers/cudss-local-tegra-repo-ubuntu2204-0.7.1_0.7.1-1_arm64.deb

sudo dpkg -i cudss-local-tegra-repo-ubuntu2204-0.7.1_0.7.1-1_arm64.deb

sudo cp /var/cudss-local-tegra-repo-ubuntu2204-0.7.1/cudss-*-keyring.gpg /usr/share/keyrings/

sudo apt-get update

sudo apt-get -y install cudss

Install onnxruntime-gpu

The onnxruntime-gpu package hosted in PyPI does not have aarch64 binaries for the Jetson. So we need to manually install this package. This package is needed for some of the exports.

You can find all available onnxruntime-gpu packages—organized by JetPack version, Python version, and other compatibility details—in the Jetson Zoo ONNX Runtime compatibility matrix.

For JetPack 6 with Python 3.10 support, you can install onnxruntime-gpu 1.23.0:

pip install https://github.com/ultralytics/assets/releases/download/v0.0.0/onnxruntime_gpu-1.23.0-cp310-cp310-linux_aarch64.whl

Alternatively, for onnxruntime-gpu 1.20.0:

pip install https://github.com/ultralytics/assets/releases/download/v0.0.0/onnxruntime_gpu-1.20.0-cp310-cp310-linux_aarch64.whl

Run on JetPack 5.1.2

Install Ultralytics Package

Here we install the Ultralytics package on the Jetson with optional dependencies so that we can export PyTorch models to other formats. We focus mainly on NVIDIA TensorRT exports because TensorRT gets the most performance out of Jetson devices.

- Update the package list, install pip, and upgrade it to the latest version

sudo apt update

sudo apt install python3-pip -y

pip install -U pip

- Install the ultralytics pip package with optional dependencies

pip install ultralytics[export]

- Reboot the device

sudo reboot

Install PyTorch and Torchvision

The above ultralytics installation will install Torch and Torchvision. However, these two packages installed via pip are not compatible with the Jetson platform, which is based on ARM64 architecture. Therefore, we need to manually install a pre-built PyTorch pip wheel and compile or install Torchvision from source.

- Uninstall currently installed PyTorch and Torchvision

pip uninstall torch torchvision

- Install torch 2.1.0 and torchvision 0.16.2 according to JP5.1.2

pip install https://github.com/ultralytics/assets/releases/download/v0.0.0/torch-2.1.0a0+41361538.nv23.06-cp38-cp38-linux_aarch64.whl

pip install https://github.com/ultralytics/assets/releases/download/v0.0.0/torchvision-0.16.2+c6f3977-cp38-cp38-linux_aarch64.whl

Visit the PyTorch for Jetson page to access all different versions of PyTorch for different JetPack versions. For a more detailed list on the PyTorch, Torchvision compatibility, visit the PyTorch and Torchvision compatibility page.

Install onnxruntime-gpu

The onnxruntime-gpu package hosted in PyPI does not have aarch64 binaries for the Jetson. So we need to manually install this package. This package is needed for some of the exports.

You can find all available onnxruntime-gpu packages—organized by JetPack version, Python version, and other compatibility details—in the Jetson Zoo ONNX Runtime compatibility matrix. Here we will download and install onnxruntime-gpu 1.17.0 with Python3.8 support.

wget https://nvidia.box.com/shared/static/zostg6agm00fb6t5uisw51qi6kpcuwzd.whl -O onnxruntime_gpu-1.17.0-cp38-cp38-linux_aarch64.whl

pip install onnxruntime_gpu-1.17.0-cp38-cp38-linux_aarch64.whl

Installing onnxruntime-gpu automatically changes the NumPy version to the latest release, so we need to reinstall NumPy 1.23.5 to fix an issue by executing:

pip install numpy==1.23.5
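Whichever JetPack path you followed, it can help to confirm which versions actually ended up installed before moving on. The following is a minimal sketch using only the standard library plus an optional `torch` import; the package list and helper names are illustrative assumptions, not something this guide prescribes.

```python
from importlib import metadata

# Distribution names as installed by the pip commands in this guide.
PACKAGES = ["ultralytics", "torch", "torchvision", "onnxruntime-gpu", "numpy"]


def installed_versions(packages=PACKAGES):
    """Map each distribution name to its installed version, or None if missing."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


def cuda_available():
    """Return torch.cuda.is_available(), or None when torch cannot be imported."""
    try:
        import torch
    except (ImportError, OSError):  # OSError covers missing shared libraries
        return None
    return torch.cuda.is_available()


if __name__ == "__main__":
    for name, version in installed_versions().items():
        print(f"{name:16s} {version or 'not installed'}")
    print("CUDA available:", cuda_available())
```

If `CUDA available` prints `False` on a Jetson, the pip-installed PyTorch build is the first thing to re-check.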

Use TensorRT on NVIDIA Jetson

Among all the model export formats supported by Ultralytics, TensorRT offers the highest inference performance on NVIDIA Jetson devices, making it our top recommendation for Jetson deployments. For setup instructions and advanced usage, see our dedicated TensorRT integration guide.

Convert Model to TensorRT and Run Inference

The YOLO26n model in PyTorch format is converted to TensorRT to run inference with the exported model.

from ultralytics import YOLO

# Load a YOLO26n PyTorch model

model = YOLO("yolo26n.pt")

# Export the model to TensorRT

model.export(format="engine") # creates 'yolo26n.engine'

# Load the exported TensorRT model

trt_model = YOLO("yolo26n.engine")

# Run inference

results = trt_model("https://ultralytics.com/images/bus.jpg")

Visit the Export page to access additional arguments when exporting models to different model formats.
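The benchmark tables later in this guide list TensorRT at FP32, FP16, and INT8. As a hedged sketch of how those precisions might be selected, the helper below builds keyword arguments for `model.export` using the `half`, `int8`, and `data` export arguments. The helper names and the `coco8.yaml` calibration dataset are assumptions for illustration; `ultralytics` is imported lazily so the argument builder can be tried on any machine.

```python
def trt_export_kwargs(precision: str = "fp32", data: str = "coco8.yaml") -> dict:
    """Build export arguments for a TensorRT engine at the requested precision."""
    precision = precision.lower()
    kwargs = {"format": "engine"}
    if precision == "fp16":
        kwargs["half"] = True
    elif precision == "int8":
        kwargs["int8"] = True
        kwargs["data"] = data  # ASSUMPTION: placeholder calibration dataset
    elif precision != "fp32":
        raise ValueError(f"Unsupported precision: {precision}")
    return kwargs


def export_engine(weights: str = "yolo26n.pt", precision: str = "fp16"):
    """Export the given weights to TensorRT (requires ultralytics and a Jetson GPU)."""
    from ultralytics import YOLO  # lazy import: only needed when actually exporting

    return YOLO(weights).export(**trt_export_kwargs(precision))


if __name__ == "__main__":
    print(trt_export_kwargs("int8"))
```

Calling `export_engine("yolo26n.pt", "fp16")` on the device would then produce the engine file used in the inference example above.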

Use NVIDIA Deep Learning Accelerator (DLA)

NVIDIA Deep Learning Accelerator (DLA) is a specialized hardware component built into NVIDIA Jetson devices that optimizes deep learning inference for energy efficiency and performance. By offloading tasks from the GPU (freeing it up for more intensive processes), DLA enables models to run with lower power consumption while maintaining high throughput, ideal for embedded systems and real-time AI applications.

DLA is not supported in TensorRT 11.0 and is planned to return in a later release, so DLA export requires TensorRT 10.x. On JetPack 6.x/7.x, export with a TensorRT 10.x build to use DLA, or use the GPU for TensorRT 11.0 engines.

The following Jetson devices are equipped with DLA hardware:

| Jetson Device | DLA Cores | DLA Max Frequency |
|---|---|---|
| Jetson AGX Orin Series | 2 | 1.6 GHz |
| Jetson Orin NX 16GB | 2 | 614 MHz |
| Jetson Orin NX 8GB | 1 | 614 MHz |
| Jetson AGX Xavier Series | 2 | 1.4 GHz |
| Jetson Xavier NX Series | 2 | 1.1 GHz |

from ultralytics import YOLO

# Load a YOLO26n PyTorch model

model = YOLO("yolo26n.pt")

# Export the model to TensorRT with DLA enabled (only works with FP16 or INT8)

model.export(format="engine", device="dla:0", half=True) # dla:0 or dla:1 corresponds to the DLA cores

# Load the exported TensorRT model

trt_model = YOLO("yolo26n.engine")

# Run inference

results = trt_model("https://ultralytics.com/images/bus.jpg")

When using DLA exports, some layers may not be supported on DLA and will fall back to the GPU for execution. This fallback can introduce additional latency and reduce overall inference performance. DLA is therefore not primarily designed to reduce inference latency compared to TensorRT running entirely on the GPU. Instead, its primary purpose is to increase throughput and improve energy efficiency.
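Because the number of DLA cores differs between devices, the valid `device` strings differ too. The sketch below derives them from the table above; the dictionary simply restates that table, and `dla_devices` is a hypothetical helper rather than an Ultralytics API.

```python
# DLA core counts per device, copied from the table in this section.
DLA_CORES = {
    "Jetson AGX Orin Series": 2,
    "Jetson Orin NX 16GB": 2,
    "Jetson Orin NX 8GB": 1,
    "Jetson AGX Xavier Series": 2,
    "Jetson Xavier NX Series": 2,
}


def dla_devices(jetson_device: str) -> list:
    """Return the device strings ("dla:0", "dla:1", ...) valid for a Jetson device."""
    return [f"dla:{core}" for core in range(DLA_CORES.get(jetson_device, 0))]


if __name__ == "__main__":
    for name in DLA_CORES:
        print(name, dla_devices(name))
```

Each returned string can be passed as the `device` argument of the export call shown above; devices not in the table yield an empty list.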

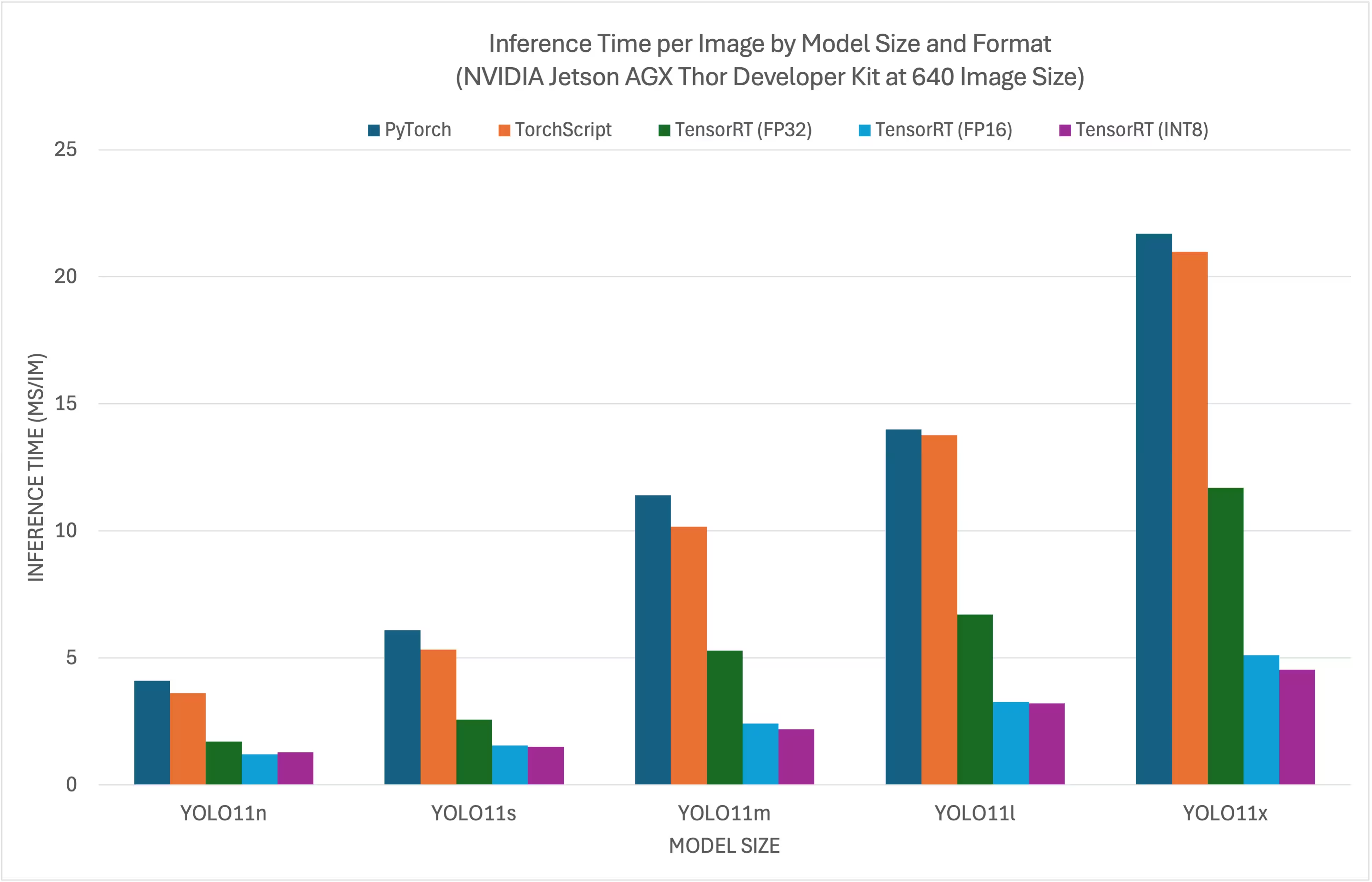

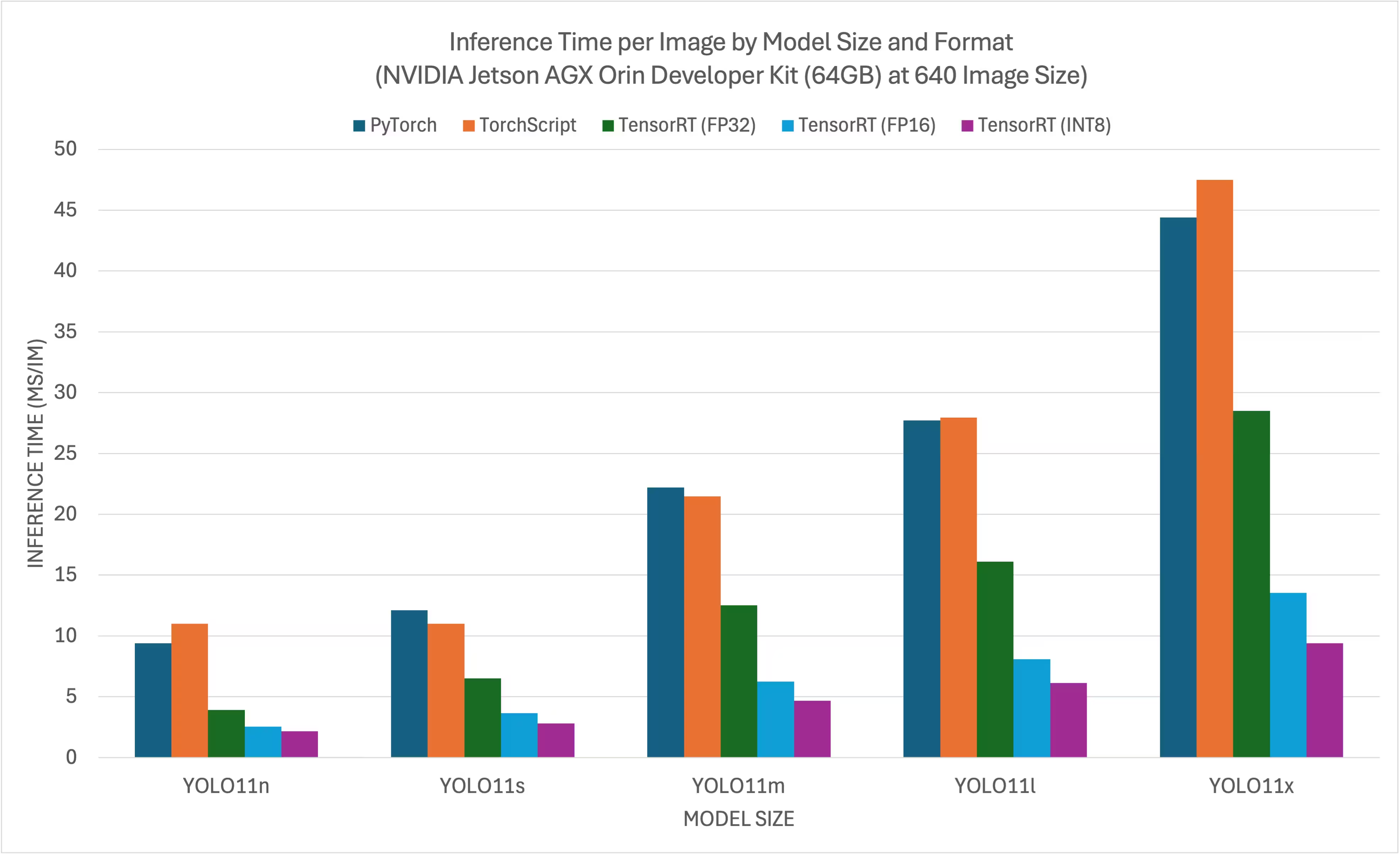

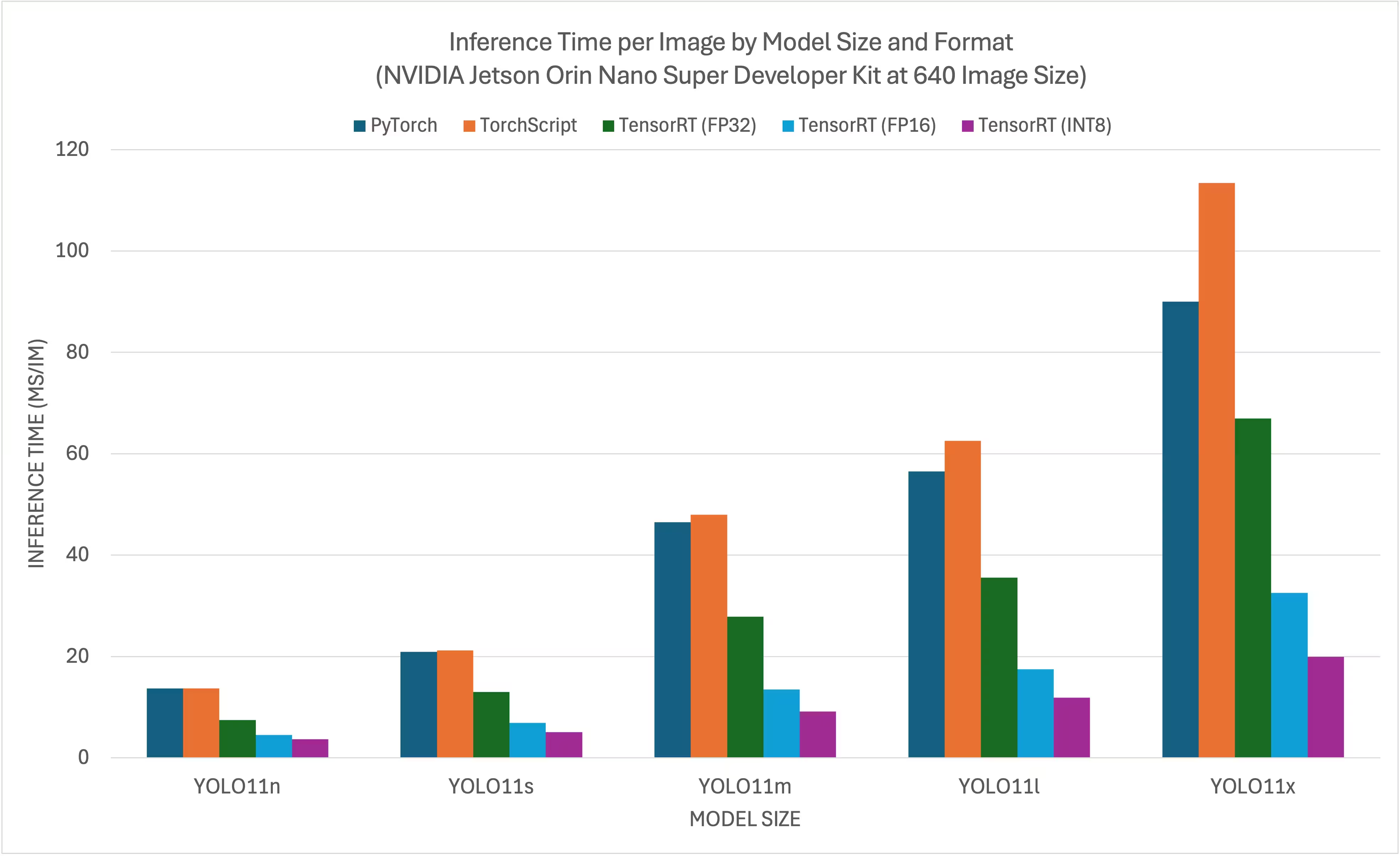

Link to this sectionNVIDIA Jetson YOLO11/ YOLO26 Benchmarks#

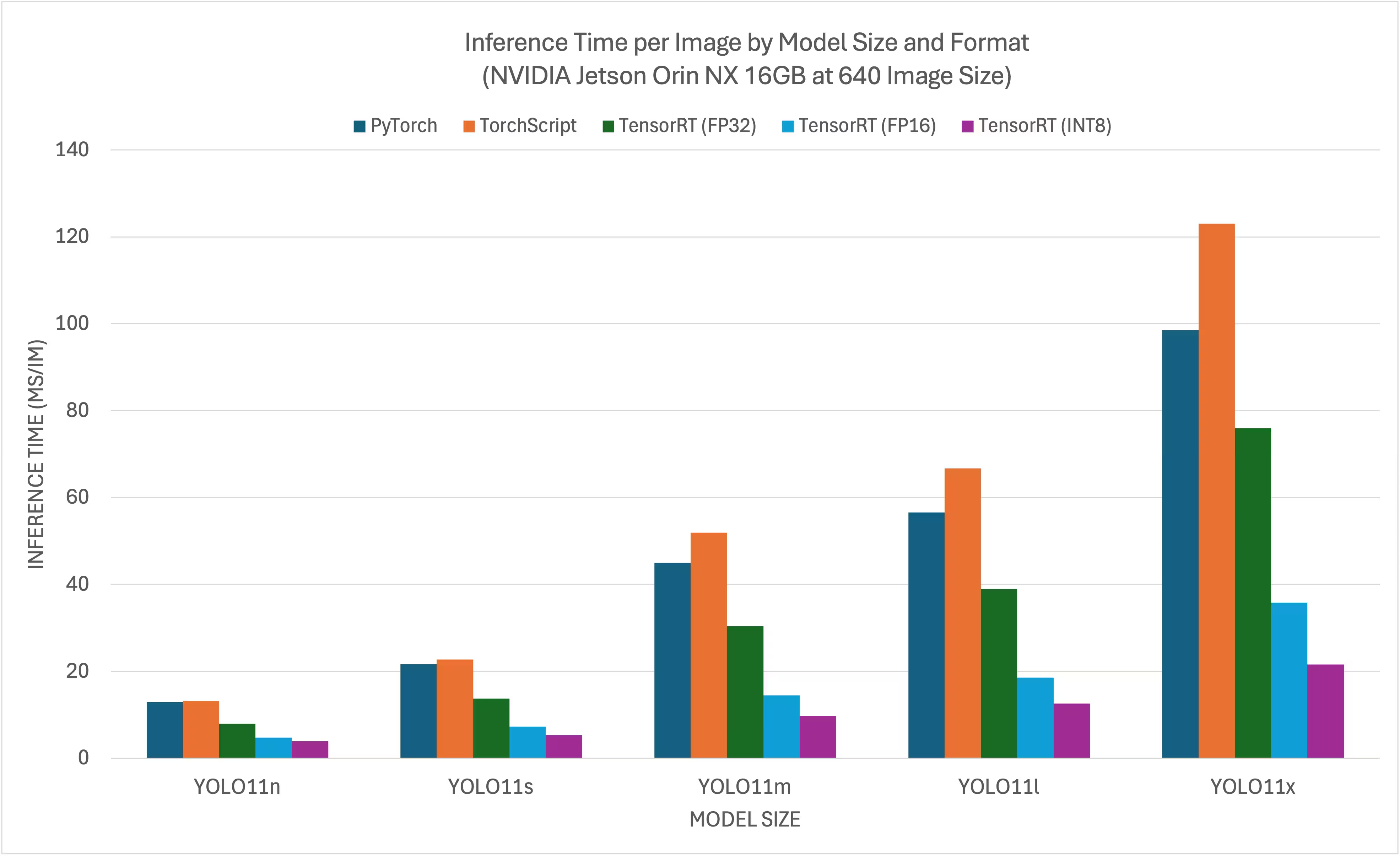

YOLO11/ YOLO26 benchmarks were run by the Ultralytics team on 11 different model formats measuring speed and accuracy: PyTorch, TorchScript, ONNX, OpenVINO, TensorRT, TF SavedModel, TF GraphDef, TF Lite, MNN, NCNN, ExecuTorch. Benchmarks were run on NVIDIA Jetson AGX Thor Developer Kit, NVIDIA Jetson AGX Orin Developer Kit (64GB), NVIDIA Jetson Orin Nano Super Developer Kit and Seeed Studio reComputer J4012 powered by Jetson Orin NX 16GB device at FP32 precision with default input image size of 640.

Comparison Charts

Even though all model exports work on NVIDIA Jetson, the comparison charts below include only PyTorch, TorchScript, and TensorRT because these formats use the GPU on the Jetson and therefore deliver the best performance. All other exports use only the CPU and are slower than these three. You can find benchmarks for all exports in the section after the charts.

NVIDIA Jetson AGX Thor Developer Kit

NVIDIA Jetson AGX Orin Developer Kit (64GB)

NVIDIA Jetson Orin Nano Super Developer Kit

NVIDIA Jetson Orin NX 16GB

Detailed Comparison Tables

The tables below show the benchmark results for five different models (YOLO11n, YOLO11s, YOLO11m, YOLO11l, YOLO11x) across 11 different formats (PyTorch, TorchScript, ONNX, OpenVINO, TensorRT, TF SavedModel, TF GraphDef, TF Lite, MNN, NCNN, ExecuTorch), giving the status, size, mAP50-95(B) metric, and inference time for each combination.

NVIDIA Jetson AGX Thor Developer Kit

| Format | Status | Size on disk (MB) | mAP50-95(B) | Inference time (ms/im) |
|---|---|---|---|---|
| PyTorch | ✅ | 5.3 | 0.4798 | 7.39 |
| TorchScript | ✅ | 9.8 | 0.4789 | 4.21 |
| ONNX | ✅ | 9.5 | 0.4767 | 6.58 |
| OpenVINO | ✅ | 10.1 | 0.4794 | 17.50 |
| TensorRT (FP32) | ✅ | 13.9 | 0.4791 | 1.90 |
| TensorRT (FP16) | ✅ | 7.6 | 0.4797 | 1.39 |
| TensorRT (INT8) | ✅ | 6.5 | 0.4273 | 1.52 |
| TF SavedModel | ✅ | 25.7 | 0.4764 | 47.24 |
| TF GraphDef | ✅ | 9.5 | 0.4764 | 45.98 |
| TF Lite | ✅ | 9.9 | 0.4764 | 182.04 |
| MNN | ✅ | 9.4 | 0.4784 | 21.83 |

Benchmarked with Ultralytics 8.4.7

Inference time does not include pre/ post-processing.

NVIDIA Jetson AGX Orin Developer Kit (64GB)

| Format | Status | Size on disk (MB) | mAP50-95(B) | Inference time (ms/im) |
|---|---|---|---|---|
| PyTorch | ✅ | 5.3 | 0.4790 | 11.58 |
| TorchScript | ✅ | 9.8 | 0.4770 | 4.60 |
| ONNX | ✅ | 9.5 | 0.4770 | 9.87 |
| OpenVINO | ✅ | 9.6 | 0.4820 | 28.80 |
| TensorRT (FP32) | ✅ | 11.5 | 0.0450 | 4.18 |
| TensorRT (FP16) | ✅ | 7.9 | 0.0450 | 2.62 |
| TensorRT (INT8) | ✅ | 5.4 | 0.4640 | 2.30 |
| TF SavedModel | ✅ | 24.6 | 0.4760 | 71.10 |
| TF GraphDef | ✅ | 9.5 | 0.4760 | 70.02 |
| TF Lite | ✅ | 9.9 | 0.4760 | 227.94 |
| MNN | ✅ | 9.4 | 0.4760 | 32.46 |
| NCNN | ✅ | 9.3 | 0.4810 | 29.93 |

Benchmarked with Ultralytics 8.4.32

Inference time does not include pre/ post-processing.

NVIDIA Jetson Orin Nano Super Developer Kit

| Format | Status | Size on disk (MB) | mAP50-95(B) | Inference time (ms/im) |
|---|---|---|---|---|
| PyTorch | ✅ | 5.3 | 0.4790 | 15.60 |
| TorchScript | ✅ | 9.8 | 0.4770 | 12.60 |
| ONNX | ✅ | 9.5 | 0.4760 | 15.76 |
| OpenVINO | ✅ | 9.6 | 0.4820 | 56.23 |
| TensorRT (FP32) | ✅ | 11.3 | 0.4770 | 7.53 |
| TensorRT (FP16) | ✅ | 8.1 | 0.4800 | 4.57 |
| TensorRT (INT8) | ✅ | 5.3 | 0.4490 | 3.80 |
| TF SavedModel | ✅ | 24.6 | 0.4760 | 118.33 |
| TF GraphDef | ✅ | 9.5 | 0.4760 | 116.30 |
| TF Lite | ✅ | 9.9 | 0.4760 | 286.00 |
| MNN | ✅ | 9.4 | 0.4760 | 68.77 |
| NCNN | ✅ | 9.3 | 0.4810 | 47.50 |

Benchmarked with Ultralytics 8.4.33

Inference time does not include pre/ post-processing.

NVIDIA Jetson Orin NX 16GB

| Format | Status | Size on disk (MB) | mAP50-95(B) | Inference time (ms/im) |
|---|---|---|---|---|
| PyTorch | ✅ | 5.3 | 0.4799 | 13.90 |
| TorchScript | ✅ | 9.8 | 0.4787 | 11.60 |
| ONNX | ✅ | 9.5 | 0.4763 | 14.18 |
| OpenVINO | ✅ | 9.6 | 0.4819 | 40.19 |
| TensorRT (FP32) | ✅ | 11.4 | 0.4770 | 7.01 |
| TensorRT (FP16) | ✅ | 8.0 | 0.4789 | 4.13 |
| TensorRT (INT8) | ✅ | 5.5 | 0.4489 | 3.49 |
| TF SavedModel | ✅ | 24.6 | 0.4764 | 92.34 |
| TF GraphDef | ✅ | 9.5 | 0.4764 | 92.06 |
| TF Lite | ✅ | 9.9 | 0.4764 | 254.43 |
| MNN | ✅ | 9.4 | 0.4760 | 48.55 |
| NCNN | ✅ | 9.3 | 0.4805 | 34.31 |

Benchmarked with Ultralytics 8.4.33

Inference time does not include pre/ post-processing.
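To put the latency columns in more familiar terms, the sketch below converts milliseconds per image into frames per second and computes the speedup of each format over PyTorch. The numbers are copied from the Jetson AGX Thor table above; the helper names are illustrative, and the results cover model inference only, since pre/post-processing is excluded from the measurements.

```python
# Inference times (ms/im) copied from the Jetson AGX Thor table above.
THOR_LATENCY_MS = {
    "PyTorch": 7.39,
    "TorchScript": 4.21,
    "TensorRT (FP32)": 1.90,
    "TensorRT (FP16)": 1.39,
    "TensorRT (INT8)": 1.52,
}


def fps(latency_ms: float) -> float:
    """Convert per-image latency in milliseconds to frames per second."""
    return 1000.0 / latency_ms


def speedup(baseline_ms: float, latency_ms: float) -> float:
    """How many times faster a format is than the baseline."""
    return baseline_ms / latency_ms


if __name__ == "__main__":
    baseline = THOR_LATENCY_MS["PyTorch"]
    for name, ms in THOR_LATENCY_MS.items():
        print(f"{name:16s} {fps(ms):7.1f} FPS  {speedup(baseline, ms):4.2f}x vs PyTorch")
```

The same two functions apply unchanged to the rows of the other device tables.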

Explore more benchmarking efforts by Seeed Studio running on different versions of NVIDIA Jetson hardware.

Reproduce Our Results

To reproduce the above Ultralytics benchmarks on all export formats, run this code:

from ultralytics import YOLO

# Load a YOLO11n PyTorch model

model = YOLO("yolo11n.pt")

# Benchmark YOLO11n speed and accuracy on the COCO128 dataset for all export formats

results = model.benchmark(data="coco128.yaml", imgsz=640)

Note that benchmarking results might vary based on the exact hardware and software configuration of a system, as well as the current workload of the system at the time the benchmarks are run. For the most reliable results, use a dataset with a large number of images, e.g., data='coco.yaml' (5000 val images).

Link to this sectionBest Practices when using NVIDIA Jetson#

When using NVIDIA Jetson, there are a couple of best practices to follow in order to enable maximum performance on the NVIDIA Jetson running YOLO26.

-

Enable MAX Power Mode

Enabling MAX Power Mode on the Jetson will make sure all CPU, GPU cores are turned on.

sudo nvpmodel -m 0 -

Enable Jetson Clocks

Enabling Jetson Clocks will make sure all CPU, GPU cores are clocked at their maximum frequency.

sudo jetson_clocks -

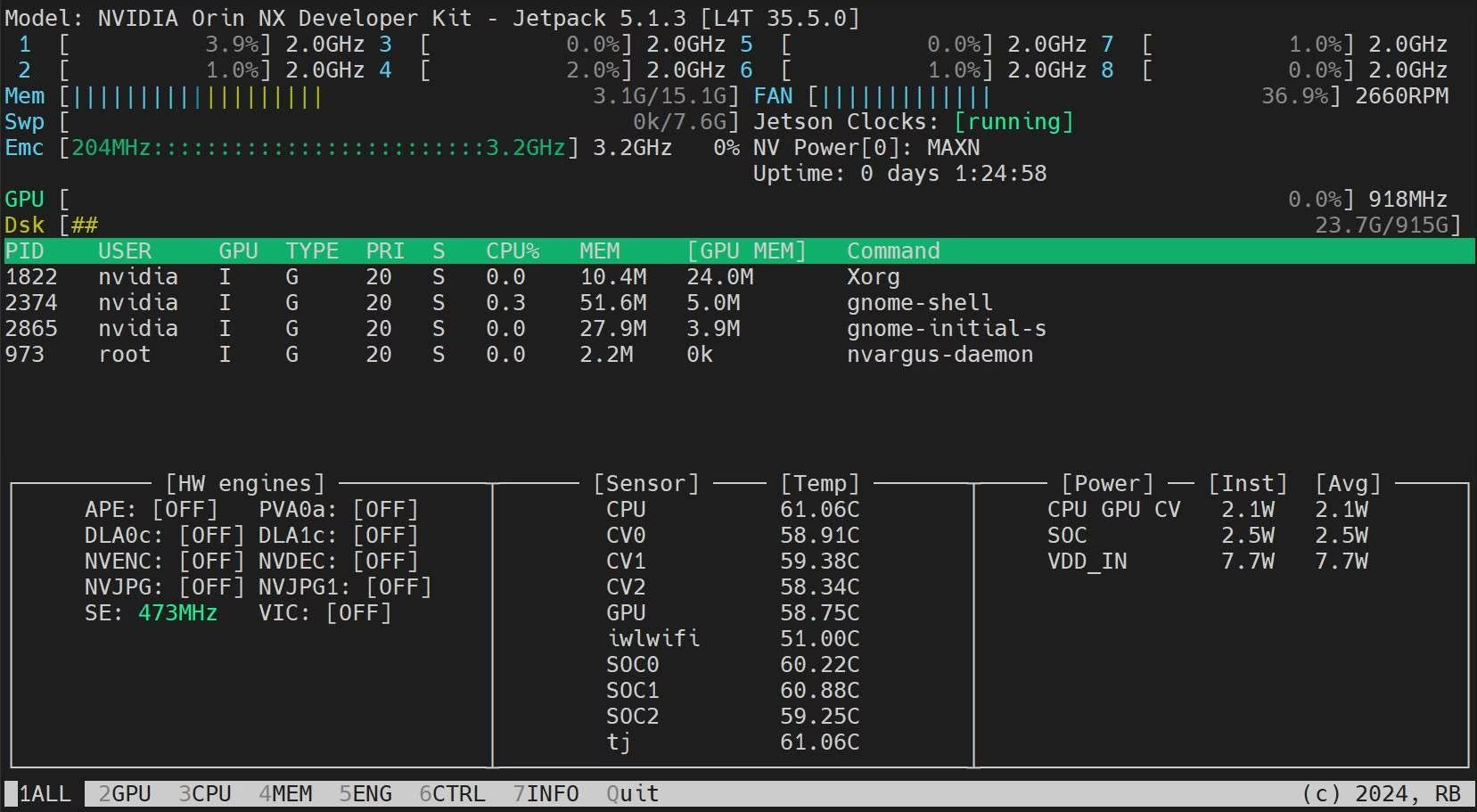

Install Jetson Stats Application

We can use jetson stats application to monitor the temperatures of the system components and check other system details such as view CPU, GPU, RAM utilization, change power modes, set to max clocks, check JetPack information

sudo apt update sudo pip install jetson-stats sudo reboot jtop
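To confirm from a script that the power mode set in the first step is active, one option is to query `nvpmodel -q` and parse its output. This sketch assumes the output contains a line of the form `NV Power Mode: <name>`; that format, and the helper names, are assumptions and may differ between JetPack releases. The query may also need `sudo` on some systems.

```python
import shutil
import subprocess
from typing import Optional


def parse_power_mode(output: str) -> Optional[str]:
    """Extract the mode name from nvpmodel query output (assumed format)."""
    for line in output.splitlines():
        if line.startswith("NV Power Mode:"):
            return line.split(":", 1)[1].strip()
    return None


def current_power_mode() -> Optional[str]:
    """Return the active power mode name, or None when nvpmodel is unavailable."""
    if shutil.which("nvpmodel") is None:
        return None  # not a Jetson, or the tool is not on PATH
    result = subprocess.run(["nvpmodel", "-q"], capture_output=True, text=True)
    return parse_power_mode(result.stdout)


if __name__ == "__main__":
    print("Current power mode:", current_power_mode())
```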

Memory Optimization Tips for NVIDIA Jetson

Available memory is often the limiting factor on Jetson devices, particularly on lower-memory variants such as the Jetson Orin Nano (8 GB) or Orin NX 8 GB. The tips below are practical, low-risk changes that can collectively free several hundred megabytes and let you run larger YOLO models or support additional parallel workloads. For a comprehensive treatment see the NVIDIA blog on maximizing memory efficiency on Jetson.

1. Switch to Headless (No-GUI) Boot

If your Jetson is connected over SSH or running as a production appliance without a display attached, eliminating the desktop environment and display server can recover up to 865 MB of RAM:

sudo systemctl set-default multi-user.target

sudo reboot

To restore the desktop later:

sudo systemctl set-default graphical.target

sudo reboot

2. Disable Unused System Services

Non-essential background services (Bluetooth, connectivity managers, unused hardware daemons) consume around 32 MB combined. List active services and disable anything your deployment doesn't require:

# List running services

systemctl list-units --type=service --state=running

# Disable a service

sudo systemctl disable SERVICE_NAME

3. Profile Memory Usage

Before optimizing, identify which processes are actually consuming RAM. procrank sorts processes by PSS (Proportional Set Size), which reflects the true per-process memory footprint more accurately than RSS (Resident Set Size, the total physical RAM pages mapped by a process, including pages shared with other processes):

git clone https://github.com/csimmonds/procrank_linux.git

cd procrank_linux && make

sudo ./procrank

To see per-process GPU and NvMap (CUDA/video pipeline) allocations:

sudo cat /sys/kernel/debug/nvmap/iovmm/clients

4. Run Inference Without a Display in Production

For inference pipelines that have no live-preview requirement, disabling display-related components (Tiler, OSD, DisplaySink) can save 200+ MB from the pipeline alone. With Ultralytics YOLO, suppress the viewer and write results to disk instead:

from ultralytics import YOLO

model = YOLO("yolo11n.engine")

# show=False prevents any display window; save=True writes annotated output to disk

results = model.predict(source="video.mp4", show=False, save=True)

Cumulative Impact

| Optimization | Approx. Memory Saved |
|---|---|
| Disable desktop GUI | ~865 MB |
| Disable unused OS services | ~32 MB |
| Headless inference pipeline (no display) | ~200+ MB |
| Total (easy wins) | ~1 GB+ |

Combining these changes is especially valuable when targeting TensorRT INT8 models on memory-constrained devices — it can be the difference between fitting a larger model variant in memory or not.
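To check what these changes actually recover on a given device, one simple approach is to record `MemAvailable` from /proc/meminfo before and after applying them and compare the difference with the estimates in the table. The sketch below is a minimal illustration under that assumption; the helper names are hypothetical, and the savings figures are the approximate values from the table, so real numbers will vary.

```python
import re
from pathlib import Path
from typing import Optional

# Approximate savings in MB, copied from the table above.
ESTIMATED_SAVINGS_MB = {
    "Disable desktop GUI": 865,
    "Disable unused OS services": 32,
    "Headless inference pipeline (no display)": 200,
}


def mem_available_mb(meminfo_text: str) -> Optional[float]:
    """Parse MemAvailable (reported in kB) from /proc/meminfo text and return MB."""
    match = re.search(r"^MemAvailable:\s+(\d+)\s+kB", meminfo_text, re.MULTILINE)
    return int(match.group(1)) / 1024 if match else None


def read_mem_available_mb(path: str = "/proc/meminfo") -> Optional[float]:
    """Read the current MemAvailable value, or None if the file is missing."""
    meminfo = Path(path)
    return mem_available_mb(meminfo.read_text()) if meminfo.is_file() else None


if __name__ == "__main__":
    total = sum(ESTIMATED_SAVINGS_MB.values())
    print(f"Estimated total savings: {total} MB ({total / 1024:.2f} GiB)")
    print("MemAvailable now (MB):", read_mem_available_mb())
```

Running it once before and once after the changes gives a direct measurement to set against the estimated total.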

Next Steps

For further learning and support, see the Ultralytics YOLO26 Docs.

FAQ

How do I deploy Ultralytics YOLO26 on NVIDIA Jetson devices?

Deploying Ultralytics YOLO26 on NVIDIA Jetson devices is a straightforward process. First, flash your Jetson device with the NVIDIA JetPack SDK. Then, either use a pre-built Docker image for quick setup or manually install the required packages. Detailed steps for each approach can be found in sections Quick Start with Docker and Start with Native Installation.

What performance benchmarks can I expect from YOLO11 models on NVIDIA Jetson devices?

YOLO11 models have been benchmarked on several NVIDIA Jetson devices, and the TensorRT format delivers the best inference performance. For example, on the Jetson AGX Thor Developer Kit, TensorRT (FP16) runs at 1.39 ms/im compared to 7.39 ms/im for PyTorch. The tables in the Detailed Comparison Tables section list metrics such as mAP50-95 and inference time across the different model formats.

Why should I use TensorRT for deploying YOLO26 on NVIDIA Jetson?

TensorRT is recommended for deploying YOLO26 models on NVIDIA Jetson because, among the export formats supported by Ultralytics, it offers the highest inference performance on these devices. It accelerates inference by making use of the Jetson's GPU. Learn more about how to convert to TensorRT and run inference in the Use TensorRT on NVIDIA Jetson section.

How can I install PyTorch and Torchvision on NVIDIA Jetson?

To install PyTorch and Torchvision on NVIDIA Jetson, first uninstall any existing versions that may have been installed via pip. Then, manually install the compatible PyTorch and Torchvision versions for the Jetson's ARM64 architecture. Detailed instructions for this process are provided in the Install PyTorch and Torchvision section.

What are the best practices for maximizing performance on NVIDIA Jetson when using YOLO26?

To maximize performance on NVIDIA Jetson with YOLO26, follow these best practices:

- Enable MAX Power Mode to utilize all CPU and GPU cores.

- Enable Jetson Clocks to run all cores at their maximum frequency.

- Install the Jetson Stats application for monitoring system metrics.

For commands and additional details, refer to the Best Practices when using NVIDIA Jetson section.

How do I free up memory on NVIDIA Jetson to run larger YOLO models?

Available RAM is often the bottleneck on lower-memory Jetson devices. Three easy wins that together can recover over 1 GB:

- Switch to headless boot (sudo systemctl set-default multi-user.target) to eliminate the desktop GUI (~865 MB saved).

- Disable unused services such as Bluetooth or connectivity managers (~32 MB saved).

- Run inference without a display by setting show=False in your YOLO predict call, which avoids allocating display pipeline memory (~200+ MB saved).

Use procrank to profile per-process RAM usage and sudo cat /sys/kernel/debug/nvmap/iovmm/clients to inspect GPU allocations. See the Memory Optimization Tips section for full details.

Why does my TensorRT INT8 export disable end2end on JetPack 6?

TensorRT 10.3.0 shipped with JetPack 6 has a known issue that prevents INT8 engine builds when end2end=True is enabled. When Ultralytics detects this combination, it automatically disables the end2end branch to ensure the export succeeds.

To restore end2end INT8 exports, upgrade TensorRT to a newer version (e.g., 10.7.0+):

wget https://developer.download.nvidia.com/compute/cuda/repos/ubuntu2204/arm64/cuda-keyring_1.1-1_all.deb

sudo dpkg -i cuda-keyring_1.1-1_all.deb

sudo apt-get update

sudo apt-get install -y tensorrt

After upgrading, re-run your export. For more details, see GitHub issue #23841.
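Before and after the upgrade, it can be useful to check which TensorRT version Python actually sees. The sketch below compares a version string against 10.7.0, the example threshold mentioned above; treating that value as the exact cutoff is an assumption, and the helper names are illustrative. The `tensorrt` module is imported lazily so the comparison logic can be tried anywhere.

```python
import re
from typing import Optional, Tuple


def parse_version(version: str) -> Tuple[int, int, int]:
    """Turn a version string such as "10.3.0.26" into a (major, minor, patch) tuple."""
    numbers = [int(m.group()) for m in (re.match(r"\d+", p) for p in version.split(".")[:3]) if m]
    numbers += [0] * (3 - len(numbers))  # pad short versions such as "10.7"
    return numbers[0], numbers[1], numbers[2]


def end2end_int8_ok(trt_version: str, minimum: Tuple[int, int, int] = (10, 7, 0)) -> bool:
    """True if the version meets the ASSUMED minimum for end2end INT8 exports."""
    return parse_version(trt_version) >= minimum


def installed_tensorrt_version() -> Optional[str]:
    """Return tensorrt.__version__, or None when TensorRT is not importable."""
    try:
        import tensorrt
    except (ImportError, OSError):
        return None
    return tensorrt.__version__


if __name__ == "__main__":
    version = installed_tensorrt_version()
    print("TensorRT:", version, "| end2end INT8 expected to work:",
          end2end_int8_ok(version) if version else "unknown")
```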