Understand How to Export to TF SavedModel Format From YOLO26

Deploying machine learning models can be challenging, but an efficient and flexible model format makes the job easier. TF SavedModel is the open-source serialization format that TensorFlow uses to save and load models in a consistent way. It works like a suitcase for TensorFlow models, making them easy to carry and use across different devices and systems.

Learning how to export Ultralytics YOLO26 models to the TF SavedModel format can help you deploy them across different platforms and environments. This guide walks through converting your models to TF SavedModel, which simplifies running inference with them on a variety of devices.

Why Should You Export to TF SavedModel?

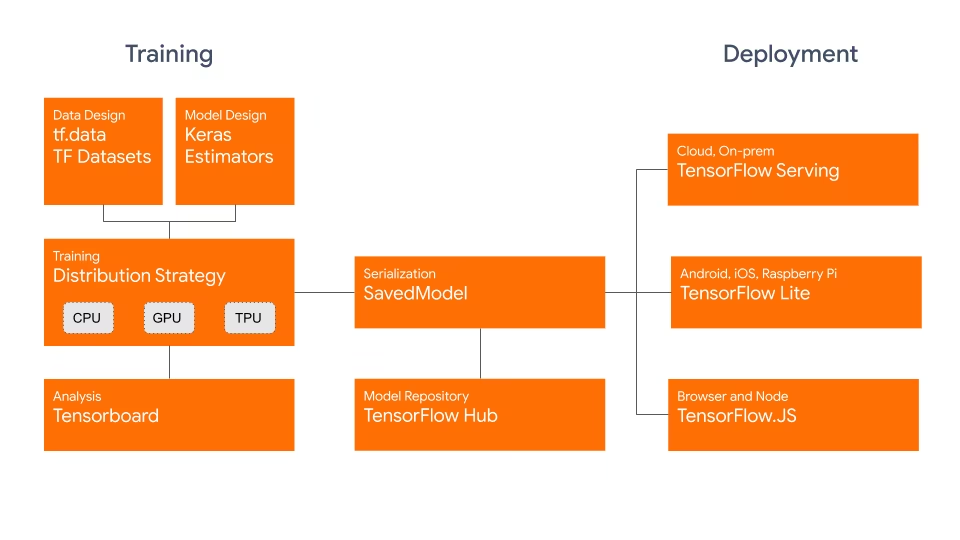

The TensorFlow SavedModel format is part of the TensorFlow ecosystem developed by Google, as shown in the figure above. It is designed to save and serialize TensorFlow models seamlessly. It encapsulates the complete details of a model, such as the architecture, the weights, and even the compilation information. This makes it straightforward to share, deploy, and continue training across different environments.

TF SavedModel has one key advantage: compatibility. It works with TensorFlow Serving, TensorFlow Lite, and TensorFlow.js, which makes it easier to share and deploy models across platforms, including web and mobile applications. The format is useful for both research and production, and it gives you a single, unified way to manage your models.

Key Features of TF SavedModels

Here are the key features that make TF SavedModel a strong option for AI developers:

- Portability: TF SavedModel provides a language-neutral, recoverable, hermetic serialization format. It enables higher-level systems and tools to produce, consume, and transform TensorFlow models. SavedModels can be easily shared and deployed across different platforms and environments.

- Ease of deployment: TF SavedModel bundles the computational graph, trained parameters, and necessary metadata into a single package. A SavedModel can be loaded and used for inference without the original code that built the model, which makes deploying TensorFlow models straightforward in various production environments.

- Asset management: TF SavedModel supports including external assets such as vocabularies, embeddings, or lookup tables. These assets are stored alongside the graph definition and variables, so they are available when the model is loaded. This simplifies managing and distributing models that rely on external resources.
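To make the "single package" idea concrete, here is a minimal sketch that checks whether a directory has the standard SavedModel layout: a `saved_model.pb` file, a `variables/` subdirectory, and an optional `assets/` subdirectory. The helper name `describe_saved_model` is hypothetical, and it only looks at names on disk; it does not parse or load the model.

```python
from pathlib import Path


def describe_saved_model(path):
    """Report which standard SavedModel parts exist under `path`.

    Hypothetical helper: it only checks names on disk and never loads the model.
    """
    root = Path(path)
    variables_dir = root / "variables"
    assets_dir = root / "assets"
    return {
        # Serialized graph definition and signatures.
        "graph": (root / "saved_model.pb").is_file(),
        # Trained weights are stored as checkpoint files under variables/.
        "variables": variables_dir.is_dir() and any(variables_dir.glob("variables.*")),
        # Optional external files such as vocabularies or lookup tables.
        "assets": sorted(p.name for p in assets_dir.iterdir()) if assets_dir.is_dir() else [],
    }
```

Calling `describe_saved_model("yolo26n_saved_model")` on an exported model directory would then show at a glance which parts are present.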

Deployment Options With TF SavedModel

Before diving into the process of exporting YOLO26 models to the TF SavedModel format, let's look at some typical deployment scenarios where this format is used.

TF SavedModel provides a range of options for deploying your machine learning models:

- TensorFlow Serving: TensorFlow Serving is a flexible, high-performance serving system designed for production environments. It natively supports TF SavedModels, which makes it easy to deploy and serve your models on cloud platforms, on-premises servers, or edge devices.

- Cloud platforms: Major cloud providers such as Google Cloud Platform (GCP), Amazon Web Services (AWS), and Microsoft Azure offer services for deploying and running TensorFlow models, including TF SavedModels. These services provide scalable, managed infrastructure, so you can deploy and scale your models easily.

- Mobile and embedded devices: TensorFlow Lite, a lightweight solution for running machine learning models on mobile, embedded, and IoT devices, supports converting TF SavedModels to the TensorFlow Lite format. This lets you deploy your models on a wide range of devices, from smartphones and tablets to microcontrollers and edge devices.

- TensorFlow Runtime: TensorFlow Runtime (`tfrt`) is a high-performance runtime for executing TensorFlow graphs. It provides lower-level APIs for loading and running TF SavedModels in C++ environments, and it is designed to offer better performance than the standard TensorFlow runtime. It suits deployment scenarios that require low-latency inference and tight integration with existing C++ codebases.
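As a rough illustration of the TensorFlow Serving option, the sketch below builds a request for TensorFlow Serving's REST predict endpoint without sending it. The model name `yolo26n`, the host, and the helper name `build_predict_request` are assumptions for illustration; port 8501 is TensorFlow Serving's usual REST port, and all three must match how the serving instance was actually started.

```python
import json
from urllib import request


def build_predict_request(instances, model_name="yolo26n", host="localhost", port=8501):
    """Build (but do not send) a TensorFlow Serving REST predict request.

    `model_name`, `host`, and `port` are placeholder assumptions.
    """
    url = f"http://{host}:{port}/v1/models/{model_name}:predict"
    body = json.dumps({"instances": instances}).encode("utf-8")
    return request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# One tiny 2x2 RGB "image" as nested lists; a real request would send a
# preprocessed image matching the exported model's input shape.
dummy_image = [[[0.0, 0.0, 0.0], [1.0, 1.0, 1.0]], [[0.5, 0.5, 0.5], [0.25, 0.25, 0.25]]]
req = build_predict_request([dummy_image])
print(req.full_url)
```

With a server running, `request.urlopen(req)` would send the request; the response format depends on the exported model's signature.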

Exporting YOLO26 Models to TF SavedModel

By exporting your YOLO26 models to the TF SavedModel format, you make them more adaptable and easier to deploy across various platforms.

Installation

To install the required package, run:

```bash
# Install the required package for YOLO26
pip install ultralytics
```

For detailed instructions and best practices related to the installation process, check our Ultralytics installation guide. If you run into difficulties while installing the required packages for YOLO26, consult our common issues guide for solutions and tips.
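Once the install finishes, a quick check can confirm that the package is visible to the interpreter you plan to use. This is a minimal sketch using only the standard library; the helper name `package_status` is hypothetical.

```python
import importlib.util
from importlib import metadata


def package_status(name="ultralytics"):
    """Return the installed version of `name`, or None if it cannot be found."""
    if importlib.util.find_spec(name) is None:
        return None
    try:
        return metadata.version(name)
    except metadata.PackageNotFoundError:
        # Importable but without distribution metadata (e.g. a stdlib module).
        return "unknown"


version = package_status()
print("ultralytics:", version if version else "not installed")
```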

Usage

All Ultralytics YOLO26 models are designed to support export out of the box, which makes it easy to integrate them into your preferred deployment workflow. You can view the full list of supported export formats and configuration options to choose the best setup for your application.

The TF SavedModel format supports the Export, Predict, and Validate modes. Export your model, then load the exported model to run inference or validate its accuracy.

```python
from ultralytics import YOLO

# Load a YOLO26 model
model = YOLO("yolo26n.pt")

# Export the model to TF SavedModel format
model.export(format="saved_model")  # creates 'yolo26n_saved_model/'
```

```python
from ultralytics import YOLO

# Load the exported TF SavedModel model
model = YOLO("./yolo26n_saved_model")

# Run inference
results = model("https://ultralytics.com/images/bus.jpg")
```

```python
from ultralytics import YOLO

# Load the exported TF SavedModel model
model = YOLO("./yolo26n_saved_model")

# Validate accuracy on the COCO8 dataset
metrics = model.val(data="coco8.yaml")
```

Export Arguments

| Argument | Type | Default | Description |
|---|---|---|---|
| `format` | `str` | `'saved_model'` | Target format for the exported model, defining compatibility with various deployment environments. |
| `imgsz` | `int` or `tuple` | `640` | Desired image size for the model input. Can be an integer for square images or a tuple `(height, width)` for specific dimensions. |
| `keras` | `bool` | `False` | Enables export to Keras format, providing compatibility with TensorFlow Serving and APIs. |
| `int8` | `bool` | `False` | Activates INT8 quantization, further compressing the model and speeding up inference with minimal accuracy loss, primarily for edge devices. |
| `nms` | `bool` | `False` | Adds Non-Maximum Suppression (NMS), essential for accurate and efficient detection post-processing. |
| `batch` | `int` | `1` | Specifies the batch inference size of the exported model, or the maximum number of images the exported model will process concurrently in predict mode. |
| `data` | `str` | `'coco8.yaml'` | Path to the dataset configuration file (default: `coco8.yaml`), essential for quantization. |
| `fraction` | `float` | `1.0` | Specifies the fraction of the dataset to use for INT8 quantization calibration. Allows calibrating on a subset of the full dataset, useful for experiments or when resources are limited. If not specified with INT8 enabled, the full dataset is used. |
| `device` | `str` | `None` | Specifies the device for exporting: CPU (`device=cpu`), MPS for Apple silicon (`device=mps`). |

For more details about the export process, visit the Ultralytics documentation page on exporting.
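The arguments in the table are passed as keyword arguments to `model.export()`. As a minimal sketch, the hypothetical helper `build_export_kwargs` below assembles such a dictionary and applies a few sanity checks. The checks themselves (for example, requiring `fraction` to lie in (0, 1]) are assumptions made for illustration, not rules taken from the Ultralytics documentation.

```python
def build_export_kwargs(imgsz=640, int8=False, nms=False, batch=1, data="coco8.yaml", fraction=1.0):
    """Assemble keyword arguments for model.export(format="saved_model").

    Hypothetical convenience wrapper; the defaults mirror the table above.
    """
    if isinstance(imgsz, tuple):
        if len(imgsz) != 2:
            raise ValueError("imgsz tuple must be (height, width)")
    elif not isinstance(imgsz, int):
        raise TypeError("imgsz must be an int or a (height, width) tuple")
    # Assumed range check: a calibration fraction outside (0, 1] makes no sense.
    if not 0.0 < fraction <= 1.0:
        raise ValueError("fraction must be in (0, 1]")
    kwargs = {"format": "saved_model", "imgsz": imgsz, "nms": nms, "batch": batch}
    if int8:
        # data and fraction only matter for INT8 calibration.
        kwargs.update(int8=True, data=data, fraction=fraction)
    return kwargs


kwargs = build_export_kwargs(imgsz=(480, 640), int8=True, fraction=0.5)
print(kwargs)

# With ultralytics installed, the dict can be passed straight to export:
# from ultralytics import YOLO
# YOLO("yolo26n.pt").export(**kwargs)
```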

Deploying Exported YOLO26 TF SavedModel Models

Now that you have exported your YOLO26 model to the TF SavedModel format, the next step is to deploy it. The recommended first step for running a TF SavedModel model is to load it with YOLO("yolo26n_saved_model/"), as shown earlier in the usage code snippet.

For in-depth instructions on deploying your TF SavedModel models, take a look at the following resources:

- TensorFlow Serving: The developer documentation on how to deploy your TF SavedModel models using TensorFlow Serving.

- Run a TensorFlow SavedModel in Node.js: A TensorFlow blog post on running a TensorFlow SavedModel directly in Node.js without conversion.

- Deploying on Cloud: A TensorFlow blog post on deploying a TensorFlow SavedModel model on the Cloud AI Platform.

Summary

In this guide, we explored how to export Ultralytics YOLO26 models to the TF SavedModel format. Exporting to TF SavedModel gives you the flexibility to optimize, deploy, and scale your YOLO26 models on a wide range of platforms.

For further details on usage, visit the official TF SavedModel documentation.

For more information on integrating Ultralytics YOLO26 with other platforms and frameworks, check out our integration guide page. It contains many resources to help you make the most of YOLO26 in your projects.

FAQ

How do I export an Ultralytics YOLO model to TensorFlow SavedModel format?

Exporting an Ultralytics YOLO model to the TensorFlow SavedModel format is straightforward. You can do it from Python or from the CLI; the Python route looks like this:

```python
from ultralytics import YOLO

# Load a YOLO26 model
model = YOLO("yolo26n.pt")

# Export the model to TF SavedModel format
model.export(format="saved_model")  # creates 'yolo26n_saved_model/'

# Load the exported TF SavedModel for inference
tf_savedmodel_model = YOLO("./yolo26n_saved_model")
results = tf_savedmodel_model("https://ultralytics.com/images/bus.jpg")
```

See the Ultralytics export documentation for more details.
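The CLI route mentioned above can also be driven from Python. The sketch below builds the `yolo export` command as an argument list using the `key=value` syntax of the Ultralytics CLI; the helper name `yolo_export_command` is hypothetical, and actually running the command assumes `ultralytics` is installed so that the `yolo` entry point is on your PATH.

```python
import shlex


def yolo_export_command(weights="yolo26n.pt", fmt="saved_model", **overrides):
    """Return the `yolo export` CLI invocation as an argument list."""
    args = ["yolo", "export", f"model={weights}", f"format={fmt}"]
    # Extra export arguments use the same key=value syntax on the CLI.
    args += [f"{key}={value}" for key, value in overrides.items()]
    return args


cmd = yolo_export_command(imgsz=640)
print(shlex.join(cmd))  # yolo export model=yolo26n.pt format=saved_model imgsz=640

# To actually run it (assumes ultralytics is installed):
# import subprocess
# subprocess.run(cmd, check=True)
```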

Why should I use the TensorFlow SavedModel format?

The TensorFlow SavedModel format offers several advantages for model deployment:

- Portability: It provides a language-neutral format, making it easy to share and deploy models across different environments.

- Compatibility: It integrates with tools such as TensorFlow Serving, TensorFlow Lite, and TensorFlow.js, which are essential for deploying models on various platforms, including web and mobile applications.

- Complete encapsulation: It encodes the model architecture, weights, and compilation information, which simplifies sharing and continued training.

For more benefits and deployment options, check out the Ultralytics YOLO model deployment options.

What are the typical deployment scenarios for TF SavedModel?

TF SavedModel can be deployed in various environments, including:

- TensorFlow Serving: Ideal for production environments that require scalable, high-performance model serving.

- Cloud platforms: Supported by major cloud services such as Google Cloud Platform (GCP), Amazon Web Services (AWS), and Microsoft Azure for scalable model deployment.

- Mobile and embedded devices: Converting TF SavedModels with TensorFlow Lite allows deployment on mobile devices, IoT devices, and microcontrollers.

- TensorFlow Runtime: For C++ environments that need low-latency inference with better performance.

For detailed deployment options, visit the official guides on deploying TensorFlow models.

How can I install the necessary packages to export YOLO26 models?

To export YOLO26 models, you need to install the `ultralytics` package. Run the following command in your terminal:

```bash
pip install ultralytics
```

For more detailed installation instructions and best practices, refer to our Ultralytics installation guide. If you encounter any issues, consult our common issues guide.

What are the key features of the TensorFlow SavedModel format?

The TF SavedModel format benefits AI developers through the following features:

- Portability: Allows sharing and deployment across various environments with little effort.

- Ease of deployment: Encapsulates the computational graph, trained parameters, and metadata in a single package, which simplifies loading and inference.

- Asset management: Supports external assets such as vocabularies, ensuring they are available when the model loads.

For further details, explore the official TensorFlow documentation.