Instance Segmentation

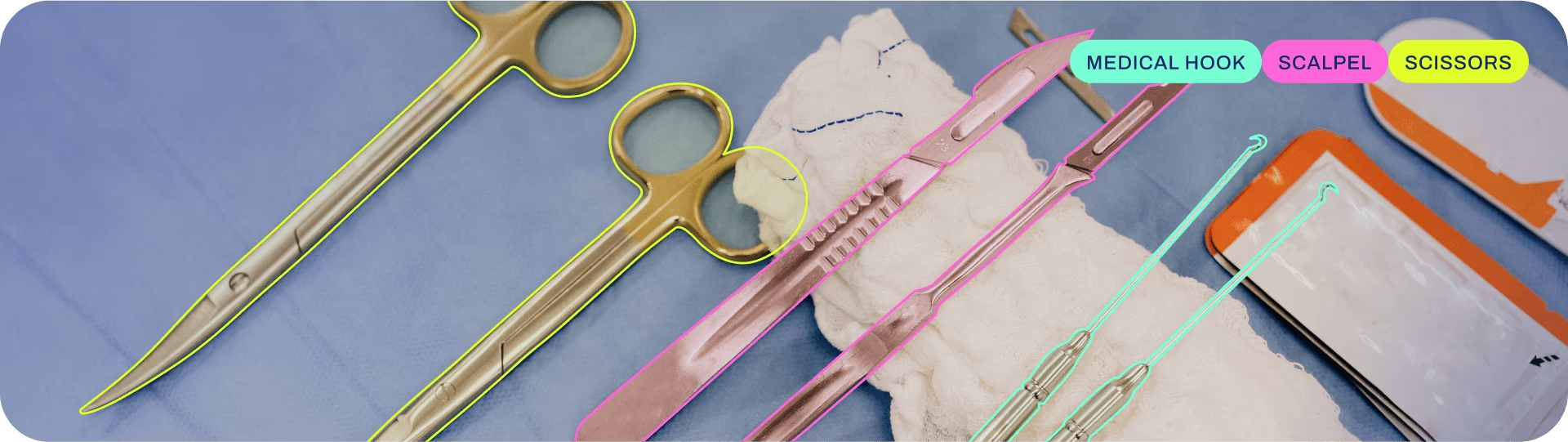

Instance segmentation goes a step further than object detection: it identifies individual objects in an image and segments them from the rest of the image.

The output of an instance segmentation model is a set of masks or contours that outline each object in the image, along with a class label and a confidence score for each object. Instance segmentation is useful when you need to know not only where objects are in an image, but also what their exact shape is.

Watch: Run Segmentation with Pre-Trained Ultralytics YOLO Model in Python.

Tip

YOLO26 Segment models use the -seg suffix, for example yolo26n-seg.pt, and are pretrained on COCO.
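The naming rule in the tip can be sketched as a small helper. This is only an illustration: `seg_checkpoint` and `SCALES` are hypothetical names that are not part of the Ultralytics API, and the scale letters are taken from the model names listed on this page.

```python
# Hypothetical helper (not part of Ultralytics): compose a segmentation
# checkpoint name from a scale letter and the -seg suffix.
SCALES = ("n", "s", "m", "l", "x")  # scale letters used by the models on this page


def seg_checkpoint(scale: str, family: str = "yolo26") -> str:
    """Return a checkpoint file name such as 'yolo26n-seg.pt'."""
    if scale not in SCALES:
        raise ValueError(f"unknown scale {scale!r}; expected one of {SCALES}")
    return f"{family}{scale}-seg.pt"


names = [seg_checkpoint(s) for s in SCALES]
print(names)
```

Any of the resulting names can be passed to YOLO(...) in the examples on this page.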

Models

Pretrained YOLO26 Segment models are shown here. Detect, Segment, and Pose models are pretrained on the COCO dataset, while Classify models are pretrained on the ImageNet dataset.

Models download automatically from the latest Ultralytics release on first use.

| Model | size (pixels) | mAPbox 50-95(e2e) | mAPmask 50-95(e2e) | Speed CPU ONNX (ms) | Speed T4 TensorRT10 (ms) | params (M) | FLOPs (B) |
|---|---|---|---|---|---|---|---|
| YOLO26n-seg | 640 | 39.6 | 33.9 | 53.3 ± 0.5 | 2.1 ± 0.0 | 2.7 | 9.1 |
| YOLO26s-seg | 640 | 47.3 | 40.0 | 118.4 ± 0.9 | 3.3 ± 0.0 | 10.4 | 34.2 |
| YOLO26m-seg | 640 | 52.5 | 44.1 | 328.2 ± 2.4 | 6.7 ± 0.1 | 23.6 | 121.5 |
| YOLO26l-seg | 640 | 54.4 | 45.5 | 387.0 ± 3.7 | 8.0 ± 0.1 | 28.0 | 139.8 |
| YOLO26x-seg | 640 | 56.5 | 47.0 | 787.0 ± 6.8 | 16.4 ± 0.1 | 62.8 | 313.5 |

- mAPval values are for single-model, single-scale evaluation on the COCO val2017 dataset. Reproduce with yolo val segment data=coco.yaml device=0
- Speed is averaged over COCO val images using an Amazon EC2 P4d instance. Reproduce with yolo val segment data=coco.yaml batch=1 device=0|cpu
- Params and FLOPs values are for the fused model after model.fuse(), which merges Conv and BatchNorm layers and, for end-to-end models, removes the auxiliary one-to-many detection head. Pretrained checkpoints retain the full training architecture and may show higher counts.
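To make the Conv and BatchNorm merge concrete, here is a minimal pure-Python sketch of the standard inference-time folding for a single output channel. It is an illustration under stated assumptions, not the Ultralytics implementation: the function names and the numeric values are hypothetical, and a convolution at one spatial position is modelled as a plain dot product.

```python
import math


def conv(x, weights, bias):
    """One output channel at one spatial position: dot product plus bias."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


def batchnorm(y, gamma, beta, mean, var, eps=1e-5):
    """Inference-time BatchNorm using the stored running statistics."""
    return gamma * (y - mean) / math.sqrt(var + eps) + beta


def fuse_conv_bn(weights, bias, gamma, beta, mean, var, eps=1e-5):
    """Fold the BatchNorm scale and shift into the conv weights and bias."""
    scale = gamma / math.sqrt(var + eps)
    fused_weights = [w * scale for w in weights]
    fused_bias = (bias - mean) * scale + beta
    return fused_weights, fused_bias


# Hypothetical values chosen only for the demonstration.
x = [0.5, -1.0, 2.0]
w, b = [0.2, 0.4, -0.1], 0.05
gamma, beta, mean, var = 1.5, 0.1, 0.3, 0.8

separate = batchnorm(conv(x, w, b), gamma, beta, mean, var)
fw, fb = fuse_conv_bn(w, b, gamma, beta, mean, var)
fused = conv(x, fw, fb)
print(abs(separate - fused) < 1e-9)
```

The fused layer produces the same output with one operation instead of two, and the BatchNorm parameters no longer need to be stored, which is one reason a fused model can report fewer parameters and FLOPs than the training checkpoint.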

Train

Train YOLO26n-seg on the COCO8-seg dataset for 100 epochs at image size 640. For a full list of available arguments, see the Configuration page.

Example

from ultralytics import YOLO

# Load a model

model = YOLO("yolo26n-seg.yaml") # build a new model from YAML

model = YOLO("yolo26n-seg.pt") # load a pretrained model (recommended for training)

model = YOLO("yolo26n-seg.yaml").load("yolo26n-seg.pt") # build from YAML and transfer weights

# Train the model

results = model.train(data="coco8-seg.yaml", epochs=100, imgsz=640)

# Build a new model from YAML and start training from scratch

yolo segment train data=coco8-seg.yaml model=yolo26n-seg.yaml epochs=100 imgsz=640

# Start training from a pretrained *.pt model

yolo segment train data=coco8-seg.yaml model=yolo26n-seg.pt epochs=100 imgsz=640

# Build a new model from YAML, transfer pretrained weights to it and start training

yolo segment train data=coco8-seg.yaml model=yolo26n-seg.yaml pretrained=yolo26n-seg.pt epochs=100 imgsz=640

See full train mode details on the Train page. Segmentation models can also be trained on cloud GPUs with Ultralytics Platform.

Dataset format

The YOLO dataset format is described in detail in the Dataset Guide. To convert an existing dataset from another format (such as COCO) to YOLO format, use the JSON2YOLO tool by Ultralytics. You can also create segmentation masks on Ultralytics with polygon tools and SAM smart annotation.
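As a rough illustration of the label format, the sketch below assumes the usual YOLO segmentation layout: one text line per object, holding the class index followed by the polygon's x and y coordinates normalized to the 0-1 range. The helper names and sample values are hypothetical; the Dataset Guide remains the authoritative description.

```python
def polygon_to_yolo_line(class_id, polygon_px, img_w, img_h):
    """Convert a pixel-space polygon [(x, y), ...] into one label line."""
    if len(polygon_px) < 3:
        raise ValueError("a polygon needs at least 3 points")
    coords = []
    for x, y in polygon_px:
        coords.append(f"{x / img_w:.6f}")  # normalize x by image width
        coords.append(f"{y / img_h:.6f}")  # normalize y by image height
    return " ".join([str(class_id), *coords])


def parse_yolo_line(line):
    """Parse a label line back into (class_id, [(x, y), ...])."""
    parts = line.split()
    class_id = int(parts[0])
    values = [float(v) for v in parts[1:]]
    points = list(zip(values[0::2], values[1::2]))
    return class_id, points


# Hypothetical triangle on a 640x480 image, class index 0.
line = polygon_to_yolo_line(0, [(64, 48), (320, 48), (320, 240)], img_w=640, img_h=480)
print(line)
class_id, points = parse_yolo_line(line)
```

Each image would have a text file containing one such line per annotated object.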

Val

Validate the accuracy of the trained YOLO26n-seg model on the COCO8-seg dataset. No arguments are needed, as the model retains its training data and arguments as model attributes.

Example

from ultralytics import YOLO

# Load a model

model = YOLO("yolo26n-seg.pt") # load an official model

model = YOLO("path/to/best.pt") # load a custom model

# Validate the model

metrics = model.val() # no arguments needed, dataset and settings remembered

metrics.box.map # map50-95(B)

metrics.box.map50 # map50(B)

metrics.box.map75 # map75(B)

metrics.box.maps # a list containing mAP50-95(B) for each category

metrics.box.image_metrics # per-image metrics dictionary for det with precision, recall, F1, TP, FP, and FN

metrics.seg.map # map50-95(M)

metrics.seg.map50 # map50(M)

metrics.seg.map75 # map75(M)

metrics.seg.maps # a list containing mAP50-95(M) for each category

metrics.seg.image_metrics # per-image metrics dictionary for seg with precision, recall, F1, TP, FP, and FN

yolo segment val model=yolo26n-seg.pt # val official model

yolo segment val model=path/to/best.pt # val custom model
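The mask metrics above are built on mask IoU, and mAP50-95 averages over ten IoU thresholds from 0.50 to 0.95 in steps of 0.05. The sketch below only illustrates those two ingredients on hypothetical toy masks. It is not the evaluation code used by model.val(), which also ranks predictions by confidence and integrates precision over recall.

```python
def mask_iou(mask_a, mask_b):
    """IoU of two binary masks given as equally sized nested lists of 0/1."""
    inter = union = 0
    for row_a, row_b in zip(mask_a, mask_b):
        for a, b in zip(row_a, row_b):
            inter += 1 if (a and b) else 0
            union += 1 if (a or b) else 0
    return inter / union if union else 0.0


# IoU thresholds 0.50, 0.55, ..., 0.95 used by the 50-95 metric.
thresholds = [round(0.50 + 0.05 * i, 2) for i in range(10)]

# Hypothetical 3x4 masks: the prediction misses one column of the object.
pred = [[0, 1, 1, 0], [0, 1, 1, 0], [0, 0, 0, 0]]
truth = [[0, 1, 1, 1], [0, 1, 1, 1], [0, 0, 0, 0]]

iou = mask_iou(pred, truth)
matched_at = [t for t in thresholds if iou >= t]  # thresholds where this counts as a match
print(iou, matched_at)
```

A prediction that overlaps the ground truth loosely counts as a match only at the lower thresholds, which is why mAP50-95 is stricter than mAP50.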

Predict

Use a trained YOLO26n-seg model to run predictions on images.

Example

from ultralytics import YOLO

# Load a model

model = YOLO("yolo26n-seg.pt") # load an official model

model = YOLO("path/to/best.pt") # load a custom model

# Predict with the model

results = model("https://ultralytics.com/images/bus.jpg") # predict on an image

# Access the results

for result in results:
    xy = result.masks.xy  # mask in polygon format
    xyn = result.masks.xyn  # normalized
    masks = result.masks.data  # mask in matrix format (num_objects x H x W)

yolo segment predict model=yolo26n-seg.pt source='https://ultralytics.com/images/bus.jpg' # predict with official model

yolo segment predict model=path/to/best.pt source='https://ultralytics.com/images/bus.jpg' # predict with custom model

See full predict mode details on the Predict page.
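The relationship between the pixel and normalized polygon formats in the example can be sketched as follows. This assumes the normalized coordinates are relative to the width and height of the original image; `denormalize_polygon` and the sample polygon are hypothetical and stand in for one entry of result.masks.xyn.

```python
def denormalize_polygon(polygon_n, img_w, img_h):
    """Scale normalized (0-1) polygon points to pixel coordinates."""
    return [(x * img_w, y * img_h) for x, y in polygon_n]


# Hypothetical normalized triangle on a 640x480 image.
polygon_n = [(0.25, 0.5), (0.75, 0.5), (0.5, 1.0)]
polygon_px = denormalize_polygon(polygon_n, img_w=640, img_h=480)
print(polygon_px)
```

Normalized polygons are convenient when the same annotation has to be drawn on a resized copy of the image.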

Export

Export a YOLO26n-seg model to a different format such as ONNX, CoreML, etc.

Example

from ultralytics import YOLO

# Load a model

model = YOLO("yolo26n-seg.pt") # load an official model

model = YOLO("path/to/best.pt") # load a custom-trained model

# Export the model

model.export(format="onnx")

yolo export model=yolo26n-seg.pt format=onnx # export official model

yolo export model=path/to/best.pt format=onnx # export custom-trained model

Available YOLO26-seg export formats are listed in the table below. You can export to any format using the format argument, for example format='onnx' or format='engine'. You can predict or validate directly on exported models, for example yolo predict model=yolo26n-seg.onnx. Usage examples are shown for your model after export completes.

| Format | format Argument | Model | Metadata | Arguments |
|---|---|---|---|---|
| PyTorch | - | yolo26n-seg.pt | ✅ | - |
| TorchScript | torchscript | yolo26n-seg.torchscript | ✅ | imgsz, half, dynamic, optimize, nms, batch, device |
| ONNX | onnx | yolo26n-seg.onnx | ✅ | imgsz, half, dynamic, simplify, opset, nms, batch, device |
| OpenVINO | openvino | yolo26n-seg_openvino_model/ | ✅ | imgsz, half, dynamic, int8, nms, batch, data, fraction, device |
| TensorRT | engine | yolo26n-seg.engine | ✅ | imgsz, half, dynamic, simplify, workspace, int8, nms, batch, data, fraction, device |
| CoreML | coreml | yolo26n-seg.mlpackage | ✅ | imgsz, dynamic, half, int8, nms, batch, device |
| TF SavedModel | saved_model | yolo26n-seg_saved_model/ | ✅ | imgsz, keras, int8, nms, batch, data, fraction, device |
| TF GraphDef | pb | yolo26n-seg.pb | ❌ | imgsz, batch, device |
| TF Lite | tflite | yolo26n-seg.tflite | ✅ | imgsz, half, int8, nms, batch, data, fraction, device |
| TF Edge TPU | edgetpu | yolo26n-seg_edgetpu.tflite | ✅ | imgsz, int8, data, fraction, device |
| TF.js | tfjs | yolo26n-seg_web_model/ | ✅ | imgsz, half, int8, nms, batch, data, fraction, device |
| PaddlePaddle | paddle | yolo26n-seg_paddle_model/ | ✅ | imgsz, batch, device |
| MNN | mnn | yolo26n-seg.mnn | ✅ | imgsz, batch, int8, half, device |
| NCNN | ncnn | yolo26n-seg_ncnn_model/ | ✅ | imgsz, half, batch, device |
| IMX500 | imx | yolo26n-seg_imx_model/ | ✅ | imgsz, int8, data, fraction, nms, device |
| RKNN | rknn | yolo26n-seg_rknn_model/ | ✅ | imgsz, batch, name, device |
| ExecuTorch | executorch | yolo26n-seg_executorch_model/ | ✅ | imgsz, batch, device |
| Axelera | axelera | yolo26n-seg_axelera_model/ | ✅ | imgsz, batch, int8, data, fraction, device |
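When scripting around exports, it can help to predict the artifact name that the Model column lists for a given format argument. The lookup below is a hypothetical convenience, not an Ultralytics function: the suffixes are copied from a subset of the table rows, and in practice the export call itself reports where the file was written.

```python
# Suffixes copied from the "Model" column of the export table (subset only).
EXPORT_SUFFIXES = {
    "torchscript": ".torchscript",
    "onnx": ".onnx",
    "openvino": "_openvino_model/",
    "engine": ".engine",
    "coreml": ".mlpackage",
    "saved_model": "_saved_model/",
    "tflite": ".tflite",
    "ncnn": "_ncnn_model/",
}


def exported_name(weights: str, fmt: str) -> str:
    """Hypothetical helper: map 'yolo26n-seg.pt' + 'onnx' to 'yolo26n-seg.onnx'."""
    if not weights.endswith(".pt"):
        raise ValueError("expected a PyTorch *.pt checkpoint name")
    if fmt not in EXPORT_SUFFIXES:
        raise KeyError(f"format {fmt!r} is not in this sketch's lookup table")
    return weights[: -len(".pt")] + EXPORT_SUFFIXES[fmt]


print(exported_name("yolo26n-seg.pt", "onnx"))
print(exported_name("yolo26n-seg.pt", "openvino"))
```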

See full export details on the Export page.

FAQ

How do I train a YOLO26 segmentation model on a custom dataset?

To train a YOLO26 segmentation model on a custom dataset, first prepare your dataset in the YOLO segmentation format. You can use tools such as JSON2YOLO to convert datasets from other formats. Once your dataset is ready, you can train the model using Python or CLI commands:

Example

from ultralytics import YOLO

# Load a pretrained YOLO26 segment model

model = YOLO("yolo26n-seg.pt")

# Train the model

results = model.train(data="path/to/your_dataset.yaml", epochs=100, imgsz=640)

yolo segment train data=path/to/your_dataset.yaml model=yolo26n-seg.pt epochs=100 imgsz=640

See the Configuration page for additional arguments.

What is the difference between object detection and instance segmentation in YOLO26?

Object detection identifies and localizes objects in an image by drawing bounding boxes around them, whereas instance segmentation not only identifies the bounding boxes but also delineates the exact shape of each object. YOLO26 instance segmentation models provide masks or contours that outline each detected object, which is particularly useful for tasks where the precise shape of objects matters, such as medical imaging or autonomous driving.
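A small worked example may make the difference tangible. The sketch below takes a hypothetical triangular contour, derives its bounding box, and compares the two areas with the shoelace formula. The polygon and helper names are assumptions for illustration only.

```python
def polygon_area(points):
    """Area of a simple polygon via the shoelace formula."""
    area = 0.0
    n = len(points)
    for i in range(n):
        x1, y1 = points[i]
        x2, y2 = points[(i + 1) % n]  # wrap around to close the polygon
        area += x1 * y2 - x2 * y1
    return abs(area) / 2.0


def bounding_box(points):
    """Axis-aligned box (x_min, y_min, x_max, y_max) enclosing the polygon."""
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    return min(xs), min(ys), max(xs), max(ys)


# Hypothetical contour of a triangular object, in pixels.
triangle = [(100, 300), (300, 300), (200, 100)]

x1, y1, x2, y2 = bounding_box(triangle)
box_area = (x2 - x1) * (y2 - y1)    # what a detector's box would cover
mask_area = polygon_area(triangle)  # what the segmentation contour covers
fill_ratio = mask_area / box_area
print(box_area, mask_area, fill_ratio)
```

Here the box covers twice the area of the object itself, which is the kind of overestimate that matters when measuring size or shape rather than just location.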

Why use YOLO26 for instance segmentation?

Ultralytics YOLO26 is a state-of-the-art model known for high accuracy and real-time performance, which makes it well suited to instance segmentation tasks. YOLO26 Segment models come pretrained on the COCO dataset, giving robust performance across a variety of objects. In addition, YOLO supports training, validation, prediction, and export with seamless integration, making it versatile for both research and industry applications.

How do I load and validate a pretrained YOLO segmentation model?

Loading and validating a pretrained YOLO segmentation model is straightforward. Here is how to do it with both Python and CLI:

Example

from ultralytics import YOLO

# Load a pretrained model

model = YOLO("yolo26n-seg.pt")

# Validate the model

metrics = model.val()

print("Mean Average Precision for boxes:", metrics.box.map)

print("Mean Average Precision for masks:", metrics.seg.map)

yolo segment val model=yolo26n-seg.pt

These steps give you validation metrics such as mean Average Precision (mAP), which are essential for assessing model performance.

How do I export a YOLO segmentation model to ONNX format?

Exporting a YOLO segmentation model to ONNX format is simple and can be done with Python or CLI commands:

Example

from ultralytics import YOLO

# Load a pretrained model

model = YOLO("yolo26n-seg.pt")

# Export the model to ONNX format

model.export(format="onnx")

yolo export model=yolo26n-seg.pt format=onnx

For more details on exporting to various formats, refer to the Export page.