COCO-Pose Dataset

The COCO-Pose dataset is a specialized version of the COCO (Common Objects in Context) dataset designed for pose estimation. It uses the COCO Keypoints 2017 images and labels to train models such as YOLO on this task.

COCO-Pose Pretrained Models

| Model | Size (pixels) | mAPpose 50-95 (e2e) | mAPpose 50 (e2e) | Speed CPU ONNX (ms) | Speed T4 TensorRT10 (ms) | Params (M) | FLOPs (B) |
|---|---|---|---|---|---|---|---|
| YOLO26n-pose | 640 | 57.2 | 83.3 | 40.3 ± 0.5 | 1.8 ± 0.0 | 2.9 | 7.5 |
| YOLO26s-pose | 640 | 63.0 | 86.6 | 85.3 ± 0.9 | 2.7 ± 0.0 | 10.4 | 23.9 |
| YOLO26m-pose | 640 | 68.8 | 89.6 | 218.0 ± 1.5 | 5.0 ± 0.1 | 21.5 | 73.1 |
| YOLO26l-pose | 640 | 70.4 | 90.5 | 275.4 ± 2.4 | 6.5 ± 0.1 | 25.9 | 91.3 |
| YOLO26x-pose | 640 | 71.6 | 91.6 | 565.4 ± 3.0 | 12.2 ± 0.2 | 57.6 | 201.7 |

Key Features

- COCO-Pose builds upon the COCO Keypoints 2017 dataset, which contains 200K images labeled with keypoints for pose estimation tasks.

- The dataset supports 17 keypoints for human figures, facilitating detailed pose estimation.

- Like COCO, it provides standardized evaluation metrics, including Object Keypoint Similarity (OKS) for pose estimation tasks, making it suitable for comparing model performance.
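
To make the OKS metric mentioned above concrete, here is a minimal sketch of how it can be computed for a single person. It assumes the per-keypoint sigma constants used by the reference COCO keypoint evaluation and takes the ground-truth object area as the scale term. The function name `object_keypoint_similarity` is a hypothetical helper for illustration and is not part of the Ultralytics API.

```python
import math

# Per-keypoint sigmas as used by the reference COCO keypoint evaluation,
# in the order of the 17 COCO keypoints (nose, eyes, ears, shoulders, ...).
COCO_SIGMAS = [
    0.026, 0.025, 0.025, 0.035, 0.035, 0.079, 0.079, 0.072, 0.072,
    0.062, 0.062, 0.107, 0.107, 0.087, 0.087, 0.089, 0.089,
]


def object_keypoint_similarity(pred, gt, area, sigmas=COCO_SIGMAS):
    """Return the OKS between predicted and ground-truth keypoints.

    pred: list of (x, y) predictions in pixels.
    gt:   list of (x, y, v) ground truth; v > 0 means the keypoint is labeled.
    area: ground-truth object area in squared pixels (the scale term).
    """
    total, labeled = 0.0, 0
    for (px, py), (gx, gy, v), sigma in zip(pred, gt, sigmas):
        if v <= 0:
            continue  # unlabeled keypoints do not contribute
        d2 = (px - gx) ** 2 + (py - gy) ** 2  # squared pixel distance
        k2 = (2 * sigma) ** 2  # per-keypoint tolerance
        total += math.exp(-d2 / (2 * area * k2 + 1e-12))
        labeled += 1
    return total / labeled if labeled else 0.0


# Illustrative data only: a synthetic person and two synthetic predictions.
gt = [(100.0 + 5 * i, 200.0 + 3 * i, 2) for i in range(17)]
perfect = [(x, y) for x, y, _ in gt]
shifted = [(x + 10.0, y) for x, y, _ in gt]
print(object_keypoint_similarity(perfect, gt, area=5000.0))  # 1.0 for a perfect match
print(object_keypoint_similarity(shifted, gt, area=5000.0))  # strictly between 0 and 1
```

Because each keypoint has its own sigma, the same pixel error is penalized more for small, precise keypoints such as the eyes than for hips or knees.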

Dataset Structure

The COCO-Pose dataset is split into three subsets:

- Train2017: This subset contains 56599 images from the COCO dataset, annotated for training pose estimation models.

- Val2017: This subset has 2346 images used for validation purposes during model training.

- Test2017: This subset consists of images used for testing and benchmarking the trained models. Ground truth annotations for this subset are not publicly available, and the results are submitted to the COCO evaluation server for performance evaluation.

Applications

The COCO-Pose dataset is used to train and evaluate deep learning models for keypoint detection and pose estimation, such as OpenPose. Its large number of annotated images and standardized evaluation metrics make it an essential resource for computer vision researchers and practitioners focused on pose estimation.

Dataset YAML

A YAML (YAML Ain't Markup Language) file defines the dataset configuration. It contains the dataset's paths, classes, and keypoint settings. For the COCO-Pose dataset, the coco-pose.yaml file is maintained at https://github.com/ultralytics/ultralytics/blob/main/ultralytics/cfg/datasets/coco-pose.yaml.

ultralytics/cfg/datasets/coco-pose.yaml

# Ultralytics 🚀 AGPL-3.0 License - https://ultralytics.com/license

# COCO 2017 Keypoints dataset https://cocodataset.org by Microsoft
# Documentation: https://docs.ultralytics.com/datasets/pose/coco/
# Example usage: yolo train data=coco-pose.yaml
# parent
# ├── ultralytics
# └── datasets
#     └── coco-pose ← downloads here (20.1 GB)

# Train/val/test sets as 1) dir: path/to/imgs, 2) file: path/to/imgs.txt, or 3) list: [path/to/imgs1, path/to/imgs2, ..]
path: coco-pose # dataset root dir
train: train2017.txt # train images (relative to 'path') 56599 images
val: val2017.txt # val images (relative to 'path') 2346 images
test: test-dev2017.txt # 20288 of 40670 images, submit to https://codalab.lisn.upsaclay.fr/competitions/7403

# Keypoints
kpt_shape: [17, 3] # number of keypoints, number of dims (2 for x,y or 3 for x,y,visible)
flip_idx: [0, 2, 1, 4, 3, 6, 5, 8, 7, 10, 9, 12, 11, 14, 13, 16, 15]

# Classes
names:
  0: person

# Keypoint names per class
kpt_names:
  0:
    - nose
    - left_eye
    - right_eye
    - left_ear
    - right_ear
    - left_shoulder
    - right_shoulder
    - left_elbow
    - right_elbow
    - left_wrist
    - right_wrist
    - left_hip
    - right_hip
    - left_knee
    - right_knee
    - left_ankle
    - right_ankle

# Download script/URL (optional)
download: |
  from pathlib import Path

  from ultralytics.utils import ASSETS_URL
  from ultralytics.utils.downloads import download

  # Download labels
  dir = Path(yaml["path"])  # dataset root dir
  urls = [f"{ASSETS_URL}/coco2017labels-pose.zip"]
  download(urls, dir=dir.parent)

  # Download data
  urls = [
      "http://images.cocodataset.org/zips/train2017.zip",  # 19G, 118k images
      "http://images.cocodataset.org/zips/val2017.zip",  # 1G, 5k images
      "http://images.cocodataset.org/zips/test2017.zip",  # 7G, 41k images (optional)
  ]
  download(urls, dir=dir / "images", threads=3)
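
The `kpt_shape` and `flip_idx` entries are easier to follow with a small example. The sketch below assumes each label line holds a class index, a normalized box (x center, y center, width, height), and then 17 triples of (x, y, visible), matching `kpt_shape: [17, 3]`. It then shows how `flip_idx` can be applied so that left and right keypoints swap places when an image is mirrored horizontally. The helpers `parse_pose_label` and `hflip_keypoints` are hypothetical names for illustration, not Ultralytics functions.

```python
# flip_idx from coco-pose.yaml: slot i receives the keypoint stored at flip_idx[i].
FLIP_IDX = [0, 2, 1, 4, 3, 6, 5, 8, 7, 10, 9, 12, 11, 14, 13, 16, 15]
KPT_SHAPE = (17, 3)  # 17 keypoints, each stored as (x, y, visible)


def parse_pose_label(line, kpt_shape=KPT_SHAPE):
    """Split one label line into (class_id, box, keypoints).

    Assumed layout: class xc yc w h, then x y v per keypoint, all normalized to [0, 1].
    """
    values = line.split()
    n_kpts, n_dims = kpt_shape
    expected = 5 + n_kpts * n_dims
    if len(values) != expected:
        raise ValueError(f"expected {expected} values, got {len(values)}")
    class_id = int(values[0])
    box = tuple(float(v) for v in values[1:5])
    flat = [float(v) for v in values[5:]]
    keypoints = [tuple(flat[i:i + n_dims]) for i in range(0, len(flat), n_dims)]
    return class_id, box, keypoints


def hflip_keypoints(keypoints, flip_idx=FLIP_IDX):
    """Mirror normalized keypoints horizontally and swap left/right slots."""
    mirrored = [(1.0 - x, y, v) for x, y, v in keypoints]
    return [mirrored[j] for j in flip_idx]


# Synthetic label line for illustration only.
line = "0 0.5 0.5 0.4 0.8 " + " ".join(f"{0.30 + 0.01 * i:.2f} 0.50 2" for i in range(17))
class_id, box, kpts = parse_pose_label(line)
flipped = hflip_keypoints(kpts)
# After the flip, slot 1 (left_eye) holds the mirrored original right_eye (slot 2).
print(kpts[2], flipped[1])
```

Without the index swap, a mirrored image would still label the person's (now visually right) eye as `left_eye`, which is why the configuration pairs every left keypoint with its right counterpart.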

Usage

To train a YOLO26n-pose model on the COCO-Pose dataset for 100 epochs with an image size of 640, you can use the following code snippets. For a comprehensive list of available arguments, refer to the model Training page.

Train Example

Python

from ultralytics import YOLO

# Load a model
model = YOLO("yolo26n-pose.pt")  # load a pretrained model (recommended for training)

# Train the model
results = model.train(data="coco-pose.yaml", epochs=100, imgsz=640)

CLI

# Start training from a pretrained *.pt model
yolo pose train data=coco-pose.yaml model=yolo26n-pose.pt epochs=100 imgsz=640

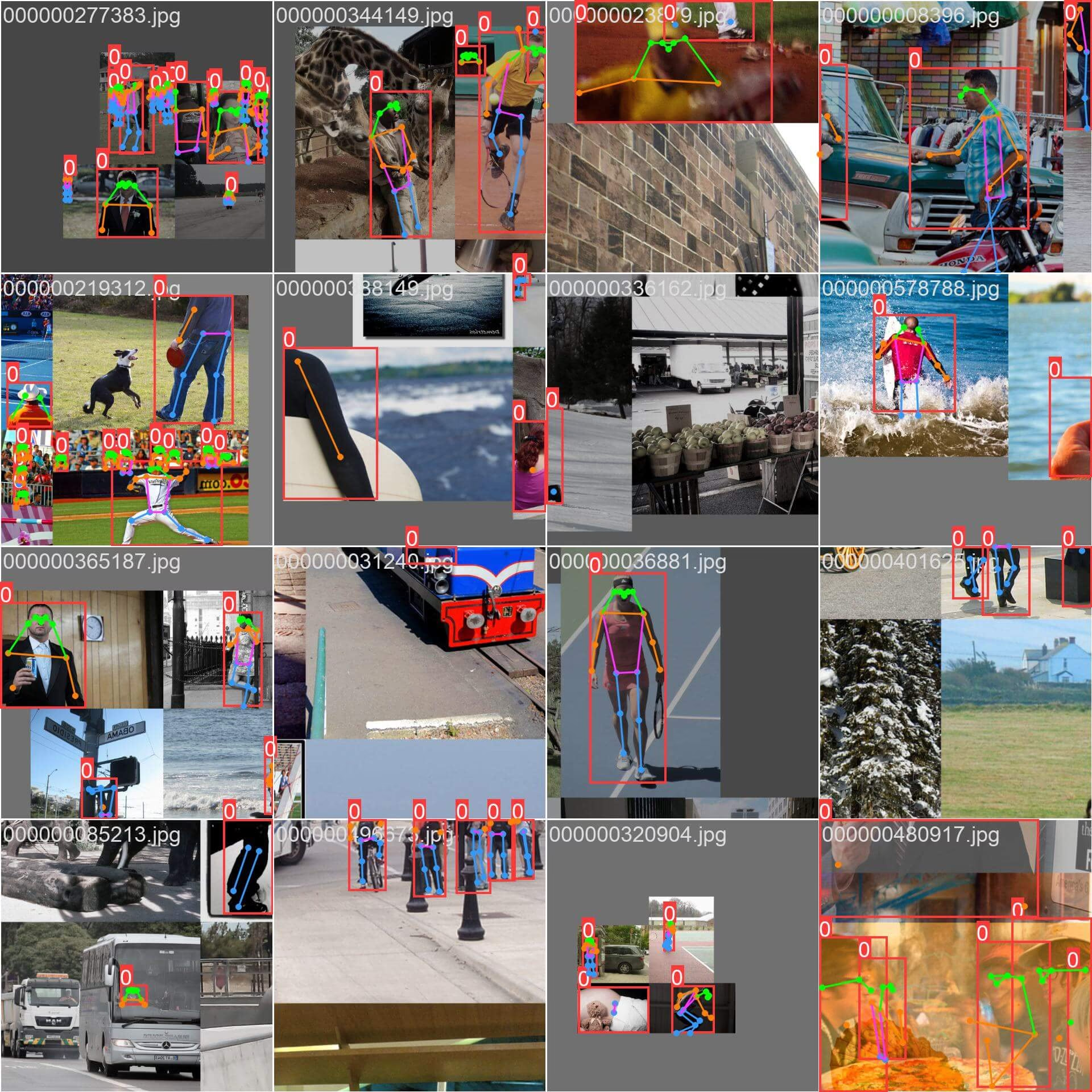

Sample Images and Annotations

The COCO-Pose dataset contains a diverse set of images with human figures annotated with keypoints. Here is an example from the dataset, along with its corresponding annotations:

- Mosaiced Image: This image demonstrates a training batch composed of mosaiced dataset images. Mosaicing is a technique used during training that combines multiple images into a single image to increase the variety of objects and scenes within each training batch. This helps improve the model's ability to generalize to different object sizes, aspect ratios, and contexts.

The example showcases the variety and complexity of the images in the COCO-Pose dataset and the benefits of using mosaicing during the training process.
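
As a rough illustration of the bookkeeping that mosaicing involves, the sketch below places four samples on a fixed 2x2 canvas and shifts each sample's keypoints by the offset of its tile. This is a deliberately simplified version: real training pipelines typically also randomize the mosaic center and crop or rescale the result. The function `mosaic_keypoints` is a hypothetical helper and does not reflect the Ultralytics implementation.

```python
def mosaic_keypoints(tiles, tile_size):
    """Place four samples on a 2x2 canvas and shift their keypoints accordingly.

    tiles:     four lists of (x, y, v) keypoints in each tile's own pixel coordinates.
    tile_size: (width, height) shared by all four tiles.
    Returns one keypoint list per tile, expressed in canvas pixel coordinates.
    """
    if len(tiles) != 4:
        raise ValueError("a 2x2 mosaic needs exactly four tiles")
    tile_w, tile_h = tile_size
    combined = []
    for index, keypoints in enumerate(tiles):
        # index 0: top-left, 1: top-right, 2: bottom-left, 3: bottom-right
        row, col = divmod(index, 2)
        dx, dy = col * tile_w, row * tile_h
        combined.append([(x + dx, y + dy, v) for x, y, v in keypoints])
    return combined


# The same keypoint lands at a different canvas position in each tile.
tiles = [[(10, 20, 2)], [(10, 20, 2)], [(10, 20, 2)], [(10, 20, 2)]]
print(mosaic_keypoints(tiles, tile_size=(640, 640)))
# [[(10, 20, 2)], [(650, 20, 2)], [(10, 660, 2)], [(650, 660, 2)]]
```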

Citations and Acknowledgments

If you use the COCO-Pose dataset in your research or development work, please cite the following paper:

@misc{lin2015microsoft,
  title={Microsoft COCO: Common Objects in Context},
  author={Tsung-Yi Lin and Michael Maire and Serge Belongie and Lubomir Bourdev and Ross Girshick and James Hays and Pietro Perona and Deva Ramanan and C. Lawrence Zitnick and Piotr Dollár},
  year={2015},
  eprint={1405.0312},
  archivePrefix={arXiv},
  primaryClass={cs.CV}
}

We would like to acknowledge the COCO Consortium for creating and maintaining this valuable resource for the computer vision community. For more information about the COCO-Pose dataset and its creators, visit the COCO dataset website.

FAQ

What is the COCO-Pose dataset and how is it used with Ultralytics YOLO for pose estimation?

The COCO-Pose dataset is a specialized version of the COCO (Common Objects in Context) dataset designed for pose estimation tasks. It builds upon the COCO Keypoints 2017 images and annotations, allowing for the training of models like Ultralytics YOLO for detailed pose estimation. For instance, you can use the COCO-Pose dataset to train a YOLO26n-pose model by loading a pretrained model and training it with a YAML configuration. For training examples, refer to the Training documentation.

How can I train a YOLO26 model on the COCO-Pose dataset?

Training a YOLO26 model on the COCO-Pose dataset can be accomplished using either Python or CLI commands. For example, to train a YOLO26n-pose model for 100 epochs with an image size of 640, you can follow the steps below:

Train Example

Python

from ultralytics import YOLO

# Load a model
model = YOLO("yolo26n-pose.pt")  # load a pretrained model (recommended for training)

# Train the model
results = model.train(data="coco-pose.yaml", epochs=100, imgsz=640)

CLI

# Start training from a pretrained *.pt model
yolo pose train data=coco-pose.yaml model=yolo26n-pose.pt epochs=100 imgsz=640

For more details on the training process and available arguments, check the training page.

What are the different metrics provided by the COCO-Pose dataset for evaluating model performance?

The COCO-Pose dataset provides several standardized evaluation metrics for pose estimation tasks, similar to the original COCO dataset. Key metrics include the Object Keypoint Similarity (OKS), which evaluates the accuracy of predicted keypoints against ground truth annotations. These metrics allow for thorough performance comparisons between different models. For instance, the COCO-Pose pretrained models such as YOLO26n-pose, YOLO26s-pose, and others have specific performance metrics listed in the documentation, like mAPpose50-95 and mAPpose50.

How is the dataset structured and split for the COCO-Pose dataset?

The COCO-Pose dataset is split into three subsets:

- Train2017: Contains 56599 COCO images, annotated for training pose estimation models.

- Val2017: 2346 images for validation purposes during model training.

- Test2017: Images used for testing and benchmarking trained models. Ground truth annotations for this subset are not publicly available; results are submitted to the COCO evaluation server for performance evaluation.

These subsets help organize the training, validation, and testing phases effectively. For configuration details, explore the coco-pose.yaml file available on GitHub.

What are the key features and applications of the COCO-Pose dataset?

The COCO-Pose dataset builds on the COCO Keypoints 2017 annotations, which label 17 keypoints per human figure, enabling detailed pose estimation. Standardized evaluation metrics (e.g., OKS) facilitate comparisons across models. Applications span domains such as sports analytics, healthcare, and human-computer interaction, wherever detailed pose estimation of human figures is required. In practice, starting from the pretrained models listed in the documentation (e.g., YOLO26n-pose) can significantly streamline the process. See the Key Features section for details.

If you use the COCO-Pose dataset in your research or development work, please cite the paper using the BibTeX entry in the Citations and Acknowledgments section.