SAM 2: Segment Anything Model 2

SAM 2, the successor to Meta's Segment Anything Model (SAM), is a cutting-edge tool designed for comprehensive object segmentation in both images and videos. It excels in handling complex visual data through a unified, promptable model architecture that supports real-time processing and zero-shot generalization.

SAM 2.1 models power the smart annotation feature on Ultralytics Platform, enabling click-based segmentation for fast dataset labeling. See the annotation guide for details.

Key Features

Watch: How to Run Inference with Meta's SAM2 using Ultralytics | Step-by-Step Guide 🎉

Unified Model Architecture

SAM 2 combines the capabilities of image and video segmentation in a single model. This unification simplifies deployment and allows for consistent performance across different media types. It leverages a flexible prompt-based interface, enabling users to specify objects of interest through various prompt types, such as points, bounding boxes, or masks.

Real-Time Performance

The model achieves real-time inference speeds, processing approximately 44 frames per second. This makes SAM 2 suitable for applications requiring immediate feedback, such as video editing and augmented reality.

Zero-Shot Generalization

SAM 2 can segment objects it has never encountered before, demonstrating strong zero-shot generalization. This is particularly useful in diverse or evolving visual domains where pre-defined categories may not cover all possible objects.

Interactive Refinement

Users can iteratively refine the segmentation results by providing additional prompts, allowing for precise control over the output. This interactivity is essential for fine-tuning results in applications like video annotation or medical imaging.

Advanced Handling of Visual Challenges

SAM 2 includes mechanisms to manage common video segmentation challenges, such as object occlusion and reappearance. It uses a sophisticated memory mechanism to keep track of objects across frames, ensuring continuity even when objects are temporarily obscured or exit and re-enter the scene.

For a deeper understanding of SAM 2's architecture and capabilities, explore the SAM 2 research paper.

Performance and Technical Details

SAM 2 sets a new benchmark in the field, outperforming previous models on various metrics:

| Metric | SAM 2 | Previous SOTA |

|---|---|---|

| Interactive Video Segmentation | Best | - |

| Human Interactions Required | 3x fewer | Baseline |

| Image Segmentation Accuracy | Improved | SAM |

| Inference Speed | 6x faster | SAM |

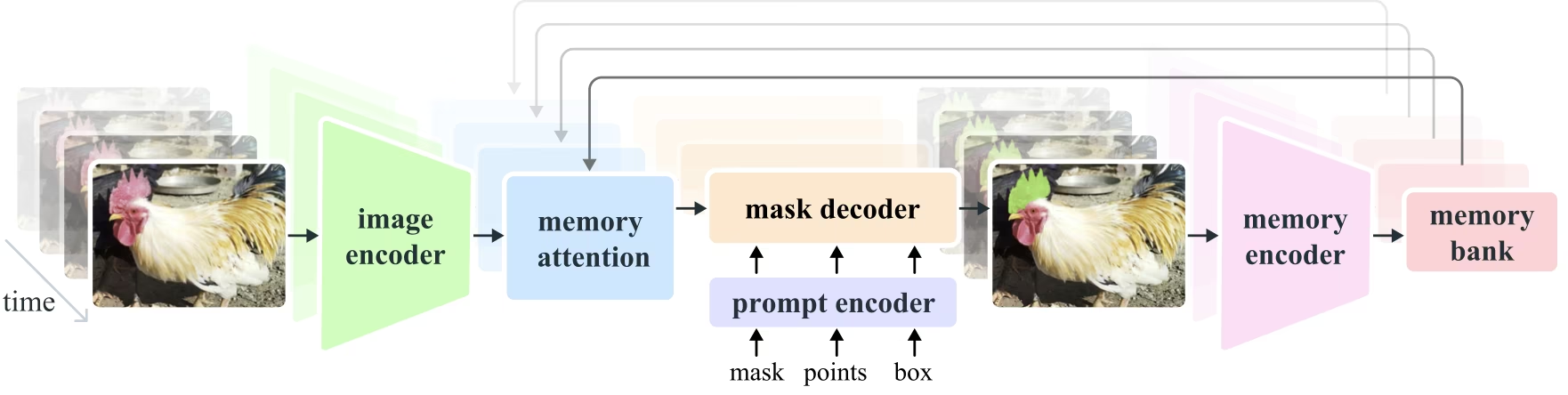

Model Architecture

Core Components

- Image and Video Encoder: Utilizes a transformer-based architecture to extract high-level features from both images and video frames. This component is responsible for understanding the visual content at each timestep.

- Prompt Encoder: Processes user-provided prompts (points, boxes, masks) to guide the segmentation task. This allows SAM 2 to adapt to user input and target specific objects within a scene.

- Memory Mechanism: Includes a memory encoder, memory bank, and memory attention module. These components collectively store and utilize information from past frames, enabling the model to maintain consistent object tracking over time.

- Mask Decoder: Generates the final segmentation masks based on the encoded image features and prompts. In video, it also uses memory context to ensure accurate tracking across frames.

Memory Mechanism and Occlusion Handling

The memory mechanism allows SAM 2 to handle temporal dependencies and occlusions in video data. As objects move and interact, SAM 2 records their features in a memory bank. When an object becomes occluded, the model can rely on this memory to predict its position and appearance when it reappears. The occlusion head specifically handles scenarios where objects are not visible, predicting the likelihood of an object being occluded.

Multi-Mask Ambiguity Resolution

In situations with ambiguity (e.g., overlapping objects), SAM 2 can generate multiple mask predictions. This feature is crucial for accurately representing complex scenes where a single mask might not sufficiently describe the scene's nuances.

SA-V Dataset

The SA-V dataset, developed for SAM 2's training, is one of the largest and most diverse video segmentation datasets available. It includes:

- 51,000+ Videos: Captured across 47 countries, providing a wide range of real-world scenarios.

- 600,000+ Mask Annotations: Detailed spatio-temporal mask annotations, referred to as "masklets," covering whole objects and parts.

- Dataset Scale: It features 4.5 times more videos and 53 times more annotations than previous largest datasets, offering unprecedented diversity and complexity.

Benchmarks

Video Object Segmentation

SAM 2 has demonstrated superior performance across major video segmentation benchmarks:

| Dataset | J&F | J | F |

|---|---|---|---|

| DAVIS 2017 | 82.5 | 79.8 | 85.2 |

| YouTube-VOS | 81.2 | 78.9 | 83.5 |

Interactive Segmentation

In interactive segmentation tasks, SAM 2 shows significant efficiency and accuracy:

| Dataset | NoC@90 | AUC |

|---|---|---|

| DAVIS Interactive | 1.54 | 0.872 |

Installation

To install SAM 2, use the following command. All SAM 2 models will automatically download on first use.

pip install ultralyticsHow to Use SAM 2: Versatility in Image and Video Segmentation

The following table details the available SAM 2 models, their pretrained weights, supported tasks, and compatibility with different operating modes like Inference, Validation, Training, and Export.

| Model Type | Pretrained Weights | Tasks Supported | Inference | Validation | Training | Export |

|---|---|---|---|---|---|---|

| SAM 2 tiny | sam2_t.pt | Instance Segmentation | ✅ | ❌ | ❌ | ❌ |

| SAM 2 small | sam2_s.pt | Instance Segmentation | ✅ | ❌ | ❌ | ❌ |

| SAM 2 base | sam2_b.pt | Instance Segmentation | ✅ | ❌ | ❌ | ❌ |

| SAM 2 large | sam2_l.pt | Instance Segmentation | ✅ | ❌ | ❌ | ❌ |

| SAM 2.1 tiny | sam2.1_t.pt | Instance Segmentation | ✅ | ❌ | ❌ | ❌ |

| SAM 2.1 small | sam2.1_s.pt | Instance Segmentation | ✅ | ❌ | ❌ | ❌ |

| SAM 2.1 base | sam2.1_b.pt | Instance Segmentation | ✅ | ❌ | ❌ | ❌ |

| SAM 2.1 large | sam2.1_l.pt | Instance Segmentation | ✅ | ❌ | ❌ | ❌ |

SAM 2 Prediction Examples

SAM 2 can be utilized across a broad spectrum of tasks, including real-time video editing, medical imaging, and autonomous systems. Its ability to segment both static and dynamic visual data makes it a versatile tool for researchers and developers.

Segment with Prompts

Use prompts to segment specific objects in images or videos.

from ultralytics import SAM

# Load a model

model = SAM("sam2.1_b.pt")

# Display model information (optional)

model.info()

# Run inference with bboxes prompt

results = model("path/to/image.jpg", bboxes=[100, 100, 200, 200])

# Run inference with single point

results = model(points=[900, 370], labels=[1])

# Run inference with multiple points

results = model(points=[[400, 370], [900, 370]], labels=[1, 1])

# Run inference with multiple points prompt per object

results = model(points=[[[400, 370], [900, 370]]], labels=[[1, 1]])

# Run inference with negative points prompt

results = model(points=[[[400, 370], [900, 370]]], labels=[[1, 0]])Segment Everything

Segment the entire image or video content without specific prompts.

from ultralytics import SAM

# Load a model

model = SAM("sam2.1_b.pt")

# Display model information (optional)

model.info()

# Run inference

model("path/to/video.mp4")Segment Video and Track objects

Segment the entire video content with specific prompts and track objects.

from ultralytics.models.sam import SAM2VideoPredictor

# Create SAM2VideoPredictor

overrides = dict(conf=0.25, task="segment", mode="predict", imgsz=1024, model="sam2_b.pt")

predictor = SAM2VideoPredictor(overrides=overrides)

# Run inference with single point

results = predictor(source="test.mp4", points=[920, 470], labels=[1])

# Run inference with multiple points

results = predictor(source="test.mp4", points=[[920, 470], [909, 138]], labels=[1, 1])

# Run inference with multiple points prompt per object

results = predictor(source="test.mp4", points=[[[920, 470], [909, 138]]], labels=[[1, 1]])

# Run inference with negative points prompt

results = predictor(source="test.mp4", points=[[[920, 470], [909, 138]]], labels=[[1, 0]])- This example demonstrates how SAM 2 can be used to segment the entire content of an image or video if no prompts (bboxes/points/masks) are provided.

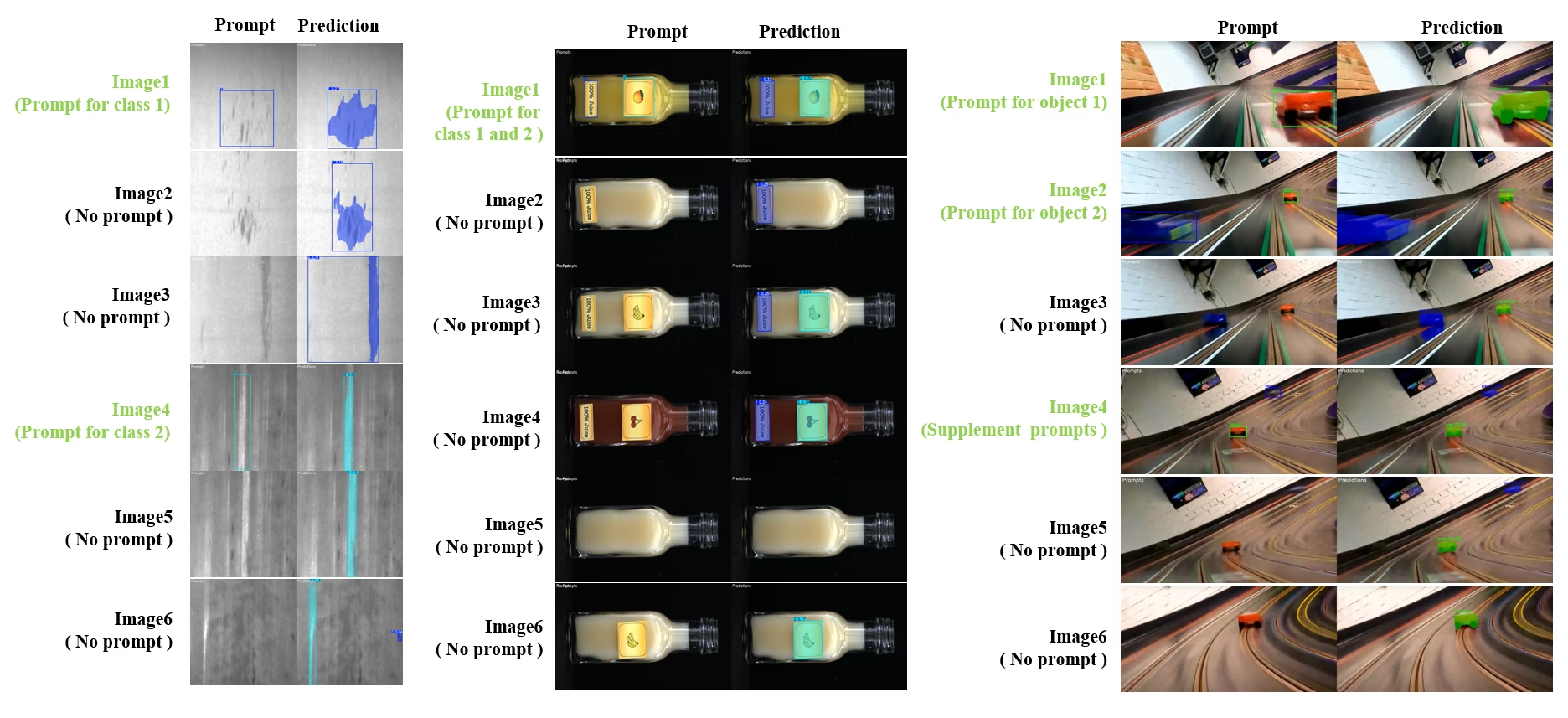

Dynamic Interactive Segment and Track

SAM2DynamicInteractivePredictor is an advanced training-free extension of SAM2 that enables dynamic interaction with multiple frames and continual learning capabilities. This predictor supports real-time prompt updates and memory management for improved tracking performance across a sequence of images. Compared to the original SAM2, SAM2DynamicInteractivePredictor rebuilds the inference flow to make the best use of pretrained SAM2 models without requiring additional training.

Key Features

It offers three significant enhancements:

- Dynamic Interactive: Add new prompts for merging/untracked new instances in following frames anytime during video processing

- Continual Learning: Add new prompts for existing instances to improve the model performance over time

- Independent Multi-Image Support: Process multiple independent images (not necessarily from a video sequence) with memory sharing and cross-image object tracking

Core Capabilities

- Prompt Flexibility: Accepts bounding boxes, points, and masks as prompts

- Memory Bank Management: Maintains a dynamic memory bank to store object states across frames

- Multi-Object Tracking: Supports tracking multiple objects simultaneously with individual object IDs

- Real-Time Updates: Allows adding new prompts during inference without reprocessing previous frames

- Independent Image Processing: Process standalone images with shared memory context for cross-image object consistency

from ultralytics.models.sam import SAM2DynamicInteractivePredictor

# Create SAM2DynamicInteractivePredictor

overrides = dict(conf=0.01, task="segment", mode="predict", imgsz=1024, model="sam2_t.pt", save=False)

predictor = SAM2DynamicInteractivePredictor(overrides=overrides, max_obj_num=10)

# Define a category by box prompt

predictor(source="image1.jpg", bboxes=[[100, 100, 200, 200]], obj_ids=[0], update_memory=True)

# Detect this particular object in a new image

results = predictor(source="image2.jpg")

# Add new category with a new object ID

results = predictor(

source="image4.jpg",

bboxes=[[300, 300, 400, 400]], # New object

obj_ids=[1], # New object ID

update_memory=True, # Add to memory

)

# Perform inference

results = predictor(source="image5.jpg")

# Add refinement prompts to the same category to boost performance

# This helps when object appearance changes significantly

results = predictor(

source="image6.jpg",

points=[[150, 150]], # Refinement point

labels=[1], # Positive point

obj_ids=[1], # Same object ID

update_memory=True, # Update memory with new information

)

# Perform inference on new image

results = predictor(source="image7.jpg")The SAM2DynamicInteractivePredictor is designed to work with SAM2 models, and support adding/refining categories by all the box/point/mask prompts natively that SAM2 supports. It is particularly useful for scenarios where objects appear or change over time, such as in video annotation or interactive editing tasks.

Arguments

| Name | Default Value | Data Type | Description |

|---|---|---|---|

max_obj_num | 3 | int | The preset maximum number of categories |

update_memory | False | bool | Whether to update memory with new prompts |

obj_ids | None | List[int] | List of object IDs corresponding to prompts |

Use Cases

SAM2DynamicInteractivePredictor is ideal for:

- Video annotation workflows where new objects appear during the sequence

- Interactive video editing requiring real-time object addition and refinement

- Surveillance applications with dynamic object tracking needs

- Medical imaging for tracking anatomical structures across time series

- Autonomous systems requiring adaptive object detection and tracking

- Multi-image datasets for consistent object segmentation across independent images

- Image collection analysis where objects need to be tracked across different scenes

- Cross-domain segmentation leveraging memory from diverse image contexts

- Semi-automatic annotation for efficient dataset creation with minimal manual intervention

SAM Comparison vs YOLO

Here we compare Meta's SAM 2 models, including the smallest SAM2-t variant, with Ultralytics segmentation models including YOLO26n-seg:

| Model | Size (MB) | Parameters (M) | Speed (CPU) (ms/im) |

|---|---|---|---|

| Meta SAM-b | 375 | 93.7 | 41703 |

| Meta SAM2-b | 162 | 80.8 | 28867 |

| Meta SAM2-t | 78.1 | 38.9 | 23430 |

| MobileSAM | 40.7 | 10.1 | 23802 |

| FastSAM-s with YOLOv8 backbone | 23.9 | 11.8 | 58.0 |

| Ultralytics YOLOv8n-seg | 7.1 (11.0x smaller) | 3.4 (11.4x less) | 24.8 (945x faster) |

| Ultralytics YOLO11n-seg | 6.2 (12.6x smaller) | 2.9 (13.4x less) | 24.3 (964x faster) |

| Ultralytics YOLO26n-seg | 6.7 (11.7x smaller) | 2.7 (14.4x less) | 25.2 (930x faster) |

This comparison demonstrates the substantial differences in model sizes and speeds between SAM variants and YOLO segmentation models. While SAM provides unique automatic segmentation capabilities, YOLO models, particularly YOLOv8n-seg, YOLO11n-seg and YOLO26n-seg, are significantly smaller, faster, and more computationally efficient.

SAM speeds measured with PyTorch, YOLO speeds measured with ONNX Runtime. Tests run on a 2025 Apple M4 Air with 16GB of RAM using torch==2.10.0, ultralytics==8.4.31, and onnxruntime==1.24.4. To reproduce this test:

from ultralytics import ASSETS, SAM, YOLO, FastSAM

# Profile SAM2-t, SAM2-b, SAM-b, MobileSAM

for file in ["sam_b.pt", "sam2_b.pt", "sam2_t.pt", "mobile_sam.pt"]:

model = SAM(file)

model.info()

model(ASSETS)

# Profile FastSAM-s

model = FastSAM("FastSAM-s.pt")

model.info()

model(ASSETS)

# Profile YOLO models (ONNX)

for file_name in ["yolov8n-seg.pt", "yolo11n-seg.pt", "yolo26n-seg.pt"]:

model = YOLO(file_name)

model.info()

onnx_path = model.export(format="onnx", dynamic=True)

model = YOLO(onnx_path)

model(ASSETS)Auto-Annotation: Efficient Dataset Creation

Auto-annotation is a powerful feature of SAM 2, enabling users to generate segmentation datasets quickly and accurately by leveraging pretrained models. This capability is particularly useful for creating large, high-quality datasets without extensive manual effort.

How to Auto-Annotate with SAM 2

Watch: Auto Annotation with Meta's Segment Anything 2 Model using Ultralytics | Data Labeling

To auto-annotate your dataset using SAM 2, follow this example:

from ultralytics.data.annotator import auto_annotate

auto_annotate(data="path/to/images", det_model="yolo26x.pt", sam_model="sam2_b.pt")| Argument | Type | Default | Description |

|---|---|---|---|

data | str | required | Path to directory containing target images for annotation or segmentation. |

det_model | str | 'yolo26x.pt' | YOLO detection model path for initial object detection. |

sam_model | str | 'sam_b.pt' | SAM model path for segmentation (supports SAM, SAM2 variants, and MobileSAM models). |

device | str | '' | Computation device (e.g., 'cuda:0', 'cpu', or '' for automatic device detection). |

conf | float | 0.25 | YOLO detection confidence threshold for filtering weak detections. |

iou | float | 0.45 | IoU threshold for Non-Maximum Suppression to filter overlapping boxes. |

imgsz | int | 640 | Input size for resizing images (must be multiple of 32). |

max_det | int | 300 | Maximum number of detections per image for memory efficiency. |

classes | list[int] | None | List of class indices to detect (e.g., [0, 1] for person & bicycle). |

output_dir | str | None | Save directory for annotations (defaults to './labels' relative to data path). |

This function facilitates the rapid creation of high-quality segmentation datasets, ideal for researchers and developers aiming to accelerate their projects.

Limitations

Despite its strengths, SAM 2 has certain limitations:

- Tracking Stability: SAM 2 may lose track of objects during extended sequences or significant viewpoint changes.

- Object Confusion: The model can sometimes confuse similar-looking objects, particularly in crowded scenes.

- Efficiency with Multiple Objects: Segmentation efficiency decreases when processing multiple objects simultaneously due to the lack of inter-object communication.

- Detail Accuracy: May miss fine details, especially with fast-moving objects. Additional prompts can partially address this issue, but temporal smoothness is not guaranteed.

Citations and Acknowledgments

If SAM 2 is a crucial part of your research or development work, please cite it using the following reference:

@article{ravi2024sam2,

title={SAM 2: Segment Anything in Images and Videos},

author={Ravi, Nikhila and Gabeur, Valentin and Hu, Yuan-Ting and Hu, Ronghang and Ryali, Chaitanya and Ma, Tengyu and Khedr, Haitham and R{\"a}dle, Roman and Rolland, Chloe and Gustafson, Laura and Mintun, Eric and Pan, Junting and Alwala, Kalyan Vasudev and Carion, Nicolas and Wu, Chao-Yuan and Girshick, Ross and Doll{\'a}r, Piotr and Feichtenhofer, Christoph},

journal={arXiv preprint},

year={2024}

}We extend our gratitude to Meta AI for their contributions to the AI community with this groundbreaking model and dataset.

FAQ

What is SAM 2 and how does it improve upon the original Segment Anything Model (SAM)?

SAM 2, the successor to Meta's Segment Anything Model (SAM), is a cutting-edge tool designed for comprehensive object segmentation in both images and videos. It excels in handling complex visual data through a unified, promptable model architecture that supports real-time processing and zero-shot generalization. SAM 2 offers several improvements over the original SAM, including:

- Unified Model Architecture: Combines image and video segmentation capabilities in a single model.

- Real-Time Performance: Processes approximately 44 frames per second, making it suitable for applications requiring immediate feedback.

- Zero-Shot Generalization: Segments objects it has never encountered before, useful in diverse visual domains.

- Interactive Refinement: Allows users to iteratively refine segmentation results by providing additional prompts.

- Advanced Handling of Visual Challenges: Manages common video segmentation challenges like object occlusion and reappearance.

For more details on SAM 2's architecture and capabilities, explore the SAM 2 research paper.

How can I use SAM 2 for real-time video segmentation?

SAM 2 can be utilized for real-time video segmentation by leveraging its promptable interface and real-time inference capabilities. Here's a basic example:

Use prompts to segment specific objects in images or videos.

from ultralytics import SAM

# Load a model

model = SAM("sam2_b.pt")

# Display model information (optional)

model.info()

# Segment with bounding box prompt

results = model("path/to/image.jpg", bboxes=[100, 100, 200, 200])

# Segment with point prompt

results = model("path/to/image.jpg", points=[150, 150], labels=[1])For more comprehensive usage, refer to the How to Use SAM 2 section.

What datasets are used to train SAM 2, and how do they enhance its performance?

SAM 2 is trained on the SA-V dataset, one of the largest and most diverse video segmentation datasets available. The SA-V dataset includes:

- 51,000+ Videos: Captured across 47 countries, providing a wide range of real-world scenarios.

- 600,000+ Mask Annotations: Detailed spatio-temporal mask annotations, referred to as "masklets," covering whole objects and parts.

- Dataset Scale: Features 4.5 times more videos and 53 times more annotations than previous largest datasets, offering unprecedented diversity and complexity.

This extensive dataset allows SAM 2 to achieve superior performance across major video segmentation benchmarks and enhances its zero-shot generalization capabilities. For more information, see the SA-V Dataset section.

How does SAM 2 handle occlusions and object reappearances in video segmentation?

SAM 2 includes a sophisticated memory mechanism to manage temporal dependencies and occlusions in video data. The memory mechanism consists of:

- Memory Encoder and Memory Bank: Stores features from past frames.

- Memory Attention Module: Utilizes stored information to maintain consistent object tracking over time.

- Occlusion Head: Specifically handles scenarios where objects are not visible, predicting the likelihood of an object being occluded.

This mechanism ensures continuity even when objects are temporarily obscured or exit and re-enter the scene. For more details, refer to the Memory Mechanism and Occlusion Handling section.

How does SAM 2 compare to other segmentation models like YOLO26?

SAM 2 models, such as Meta's SAM2-t and SAM2-b, offer powerful zero-shot segmentation capabilities but are significantly larger and slower compared to YOLO models. For instance, YOLO26n-seg is approximately 24 times smaller and over 1145 times faster than SAM2-b on CPU. While SAM 2 excels in versatile, prompt-based, and zero-shot segmentation scenarios, YOLO26 is optimized for speed, efficiency, and real-time applications with NMS-free end-to-end inference, making it better suited for deployment in resource-constrained environments.