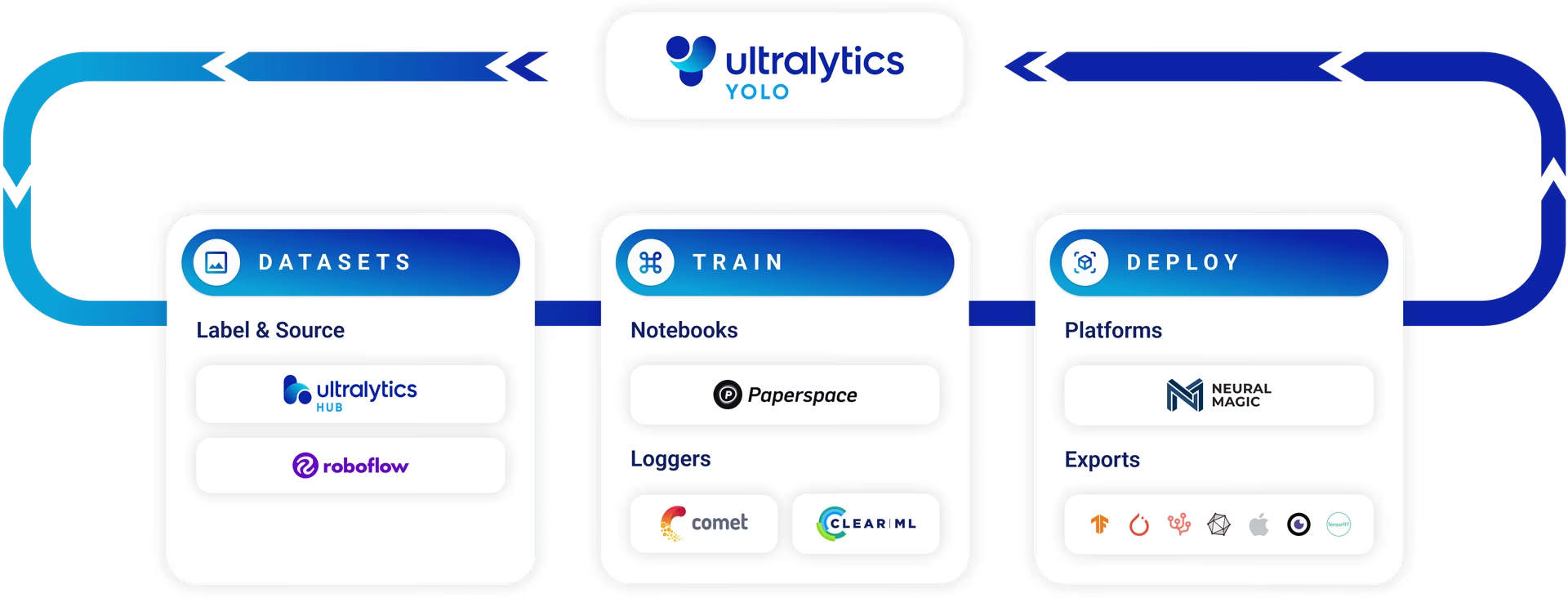

Model Prediction with Ultralytics YOLO

Introduction

In the world of machine learning and computer vision, the process of making sense of visual data is often called inference or prediction. Ultralytics YOLO26 offers a powerful feature known as predict mode, tailored for high-performance, real-time inference across a wide range of data sources.


Real-world Applications

| Manufacturing | Sports | Safety |
|---|---|---|
| Vehicle Spare Parts Detection | Football Player Detection | People Fall Detection |

Why Use Ultralytics YOLO for Inference?

Here's why you should consider YOLO26's predict mode for your various inference needs:

- Versatility: Capable of running inference on images, videos, and even live streams.

- Performance: Engineered for real-time, high-speed processing without sacrificing accuracy.

- Ease of Use: Intuitive Python and CLI interfaces for rapid deployment and testing.

- Highly Customizable: Various settings and parameters to tune the model's inference behavior according to your specific requirements.

- Production Ready: Deploy models as API endpoints on Ultralytics Platform with auto-scaling and monitoring, or run inference locally.

Key Features of Predict Mode

YOLO26's predict mode is designed to be robust and versatile, featuring:

- Multiple Data Source Compatibility: Whether your data is in the form of individual images, a collection of images, video files, or real-time video streams, predict mode has you covered.

- Streaming Mode: Use the streaming feature to generate a memory-efficient generator of Results objects. Enable this by setting stream=True in the predictor's call method.

- Batch Processing: Process multiple images or video frames in a single batch, further reducing total inference time.

- Integration Friendly: Easily integrate with existing data pipelines and other software components, thanks to its flexible API.

Ultralytics YOLO models return either a Python list of Results objects or a memory-efficient generator of Results objects when stream=True is passed to the model during inference:

```python
from ultralytics import YOLO

# Load a model
model = YOLO("yolo26n.pt")  # pretrained YOLO26n model

# Run batched inference on a list of images
results = model(["image1.jpg", "image2.jpg"])  # return a list of Results objects

# Process results list
for result in results:
    boxes = result.boxes  # Boxes object for bounding box outputs
    masks = result.masks  # Masks object for segmentation masks outputs
    keypoints = result.keypoints  # Keypoints object for pose outputs
    probs = result.probs  # Probs object for classification outputs
    obb = result.obb  # Oriented boxes object for OBB outputs
    result.show()  # display to screen
    result.save(filename="result.jpg")  # save to disk
```

Inference Sources

YOLO26 can process different types of input sources for inference, as shown in the table below. The sources include static images, video streams, and various data formats. The table also indicates whether each source can be used in streaming mode with the argument stream=True ✅. Streaming mode is beneficial for processing videos or live streams as it creates a generator of results instead of loading all frames into memory.

Use stream=True for processing long videos or large datasets to efficiently manage memory. When stream=False, the results for all frames or data points are stored in memory, which can quickly add up and cause out-of-memory errors for large inputs. In contrast, stream=True utilizes a generator, which only keeps the results of the current frame or data point in memory, significantly reducing memory consumption and preventing out-of-memory issues.
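The memory difference can be illustrated without any model at all. The sketch below uses two hypothetical stand-ins, fake_predict_list and fake_predict_stream, which are not part of Ultralytics. They only mimic the two return styles (a list versus a generator) so that the lazy behavior of stream=True is visible.

```python
from typing import Iterator, List


def fake_predict_list(frames: List[str]) -> List[str]:
    """Hypothetical stand-in for stream=False: every result is built up front."""
    return [f"result for {frame}" for frame in frames]


def fake_predict_stream(frames: List[str]) -> Iterator[str]:
    """Hypothetical stand-in for stream=True: results are produced one at a time."""
    for frame in frames:
        yield f"result for {frame}"


frames = [f"frame{i}" for i in range(3)]

all_results = fake_predict_list(frames)  # all 3 results are held in memory at once
lazy_results = fake_predict_stream(frames)  # nothing is computed until iteration starts

first = next(lazy_results)  # only now is the first result produced
remaining = list(lazy_results)  # consuming the rest exhausts the generator
```

A generator can be iterated only once, so results that are needed again later must be stored explicitly.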

| Source | Example | Type | Notes |

|---|---|---|---|

| image | 'image.jpg' | str or Path | Single image file. |

| URL | 'https://ultralytics.com/images/bus.jpg' | str | URL to an image. |

| screenshot | 'screen' | str | Capture a screenshot. |

| PIL | Image.open('image.jpg') | PIL.Image | HWC format with RGB channels. |

| OpenCV | cv2.imread('image.jpg') | np.ndarray | HWC format with BGR channels uint8 (0-255). |

| numpy | np.zeros((640,1280,3)) | np.ndarray | HWC format with BGR channels uint8 (0-255). |

| torch | torch.zeros(16,3,320,640) | torch.Tensor | BCHW format with RGB channels float32 (0.0-1.0). |

| CSV | 'sources.csv' | str or Path | CSV file containing paths to images, videos, or directories. |

| video ✅ | 'video.mp4' | str or Path | Video file in formats like MP4, AVI, etc. |

| directory ✅ | 'path/' | str or Path | Path to a directory containing images or videos. |

| glob ✅ | 'path/*.jpg' | str | Glob pattern to match multiple files. Use the * character as a wildcard. |

| YouTube ✅ | 'https://youtu.be/LNwODJXcvt4' | str | URL to a YouTube video. |

| stream ✅ | 'rtsp://example.com/media.mp4' | str | URL for streaming protocols such as RTSP, RTMP, TCP, or an IP address. |

| multi-stream ✅ | 'list.streams' | str or Path | *.streams text file with one stream URL per row, i.e., 8 streams will run at batch-size 8. |

| webcam ✅ | 0 | int | Index of the connected camera device to run inference on. |

Below are code examples for using each source type:

Run inference on an image file.

```python
from ultralytics import YOLO

# Load a pretrained YOLO26n model
model = YOLO("yolo26n.pt")

# Define path to the image file
source = "path/to/image.jpg"

# Run inference on the source
results = model(source)  # list of Results objects
```

Inference Arguments

model.predict() accepts multiple arguments that can be passed at inference time to override defaults:

Fixed shape vs minimum rectangle (rect)

By default, predict uses rect=True, which enables minimum-rectangle padding when possible. The image is scaled to fit inside imgsz and padded only to the nearest stride multiple, so the final tensor may be smaller than imgsz. Minimum-rectangle padding is only used when all images in the batch have the same shape and the backend supports it (PyTorch .pt, or dynamic ONNX / Triton). Otherwise, images are padded to the full imgsz target.

Use rect=False to always pad to the full imgsz target. This is recommended when you need a fixed input size to match exported models (ONNX, TensorRT, etc.).

Integer vs tuple imgsz

- An integer imgsz=640 becomes a square target (640, 640) after stride rounding.

- A tuple imgsz=(384, 672) sets a rectangular target. With rect=True and auto=True, the actual tensor can be smaller than this target.
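The arithmetic behind these rules can be sketched in a few lines. This is an illustration of the description above, not the library's own code: the helper names are hypothetical, a stride of 32 is assumed, and sizes are assumed to round up to the next stride multiple.

```python
import math
from typing import Tuple, Union


def round_up_to_stride(size: int, stride: int = 32) -> int:
    """Round a size up to the next multiple of the stride (assumed behavior)."""
    return math.ceil(size / stride) * stride


def letterbox_shape(
    orig_hw: Tuple[int, int],
    imgsz: Union[int, Tuple[int, int]] = 640,
    rect: bool = True,
    stride: int = 32,
) -> Tuple[int, int]:
    """Estimate the (height, width) of the padded input tensor."""
    if isinstance(imgsz, int):
        imgsz = (imgsz, imgsz)  # an integer becomes a square target
    target_h = round_up_to_stride(imgsz[0], stride)
    target_w = round_up_to_stride(imgsz[1], stride)
    if not rect:
        return target_h, target_w  # always pad to the full target
    # Scale so the image fits inside the target while keeping its aspect ratio
    ratio = min(target_h / orig_hw[0], target_w / orig_hw[1])
    new_h, new_w = round(orig_hw[0] * ratio), round(orig_hw[1] * ratio)
    # Pad each side only up to the next stride multiple
    return round_up_to_stride(new_h, stride), round_up_to_stride(new_w, stride)


print(letterbox_shape((720, 1280), 640))  # (384, 640)
print(letterbox_shape((720, 1280), 640, rect=False))  # (640, 640)
```

For a 720×1280 frame, minimum-rectangle padding yields a 384×640 tensor, while rect=False pads to the full 640×640 target.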

Training vs predict/export

Training accepts only a single integer imgsz (a [h, w] list is coerced to the largest value). Predict and export accept either an integer or a (height, width) tuple.

```python
from ultralytics import YOLO

# Load a pretrained YOLO26n model
model = YOLO("yolo26n.pt")

# Run inference on 'bus.jpg' with arguments
model.predict("https://ultralytics.com/images/bus.jpg", save=True, imgsz=320, conf=0.25)
```

Inference arguments:

| Argument | Type | Default | Description |
|---|---|---|---|
| source | str or int or None | None | Specifies the data source for inference. Can be an image path, video file, directory, URL, or device ID for live feeds. If omitted, a warning is logged and the model falls back to the built-in demo assets (ultralytics/assets, or a demo URL for OBB). Supports a wide range of formats and sources, enabling flexible application across different types of input. |
| conf | float | 0.25 | Sets the minimum confidence threshold for detections. Objects detected with confidence below this threshold will be disregarded. Adjusting this value can help reduce false positives. |
| iou | float | 0.7 | Intersection Over Union (IoU) threshold for Non-Maximum Suppression (NMS). Lower values result in fewer detections by eliminating overlapping boxes, useful for reducing duplicates. |
| imgsz | int or tuple | 640 | Letterbox target. An integer gives a square N×N; a tuple gives (height, width). With rect=True, the actual tensor may be smaller than this target due to minimum-rectangle padding. Use rect=False for a fixed size. See Fixed shape vs minimum rectangle. |
| rect | bool | True | If True, use minimum-rectangle padding when possible (same-shape batch and supported backend). If False, always pad to the full imgsz. See Fixed shape vs minimum rectangle. |
| half | bool | False | Enables half-precision (FP16) inference, which can speed up model inference on supported GPUs with minimal impact on accuracy. |
| device | str | None | Specifies the device for inference (e.g., cpu, cuda:0, 0, npu or npu:0). Allows users to select between CPU, a specific GPU, Huawei Ascend NPU, or other compute devices for model execution. |
| batch | int | 1 | Specifies the batch size for inference (only works when the source is a directory, video file, or .txt file). A larger batch size can provide higher throughput, shortening the total amount of time required for inference. |
| max_det | int | 300 | Maximum number of detections allowed per image. Limits the total number of objects the model can detect in a single inference, preventing excessive outputs in dense scenes. |
| vid_stride | int | 1 | Frame stride for video inputs. Allows skipping frames in videos to speed up processing at the cost of temporal resolution. A value of 1 processes every frame, higher values skip frames. |
| stream_buffer | bool | False | Determines whether to queue incoming frames for video streams. If False, old frames get dropped to accommodate new frames (optimized for real-time applications). If True, queues new frames in a buffer, ensuring no frames get skipped, but will cause latency if inference FPS is lower than stream FPS. |
| visualize | bool | False | Activates visualization of model features during inference, providing insights into what the model is "seeing". Useful for debugging and model interpretation. |
| augment | bool | False | Enables test-time augmentation (TTA) for predictions, potentially improving detection robustness at the cost of inference speed. |
| agnostic_nms | bool | False | Enables class-agnostic Non-Maximum Suppression (NMS), which merges overlapping boxes of different classes. Useful in multi-class detection scenarios where class overlap is common. For end-to-end models (YOLO26, YOLOv10), this only prevents the same detection from appearing with multiple class labels (IoU=1.0 duplicates) and does not perform IoU-threshold-based suppression between distinct boxes. |
| classes | list[int] | None | Filters predictions to a set of class IDs. Only detections belonging to the specified classes will be returned. Useful for focusing on relevant objects in multi-class detection tasks. |
| retina_masks | bool | False | Returns high-resolution segmentation masks. The returned masks (masks.data) will match the original image size if enabled. If disabled, they have the image size used during inference. |
| embed | list[int] | None | Specifies the layers from which to extract feature vectors or embeddings. Useful for downstream tasks like clustering or similarity search. |
| project | str | None | Name of the project directory where prediction outputs are saved if save is enabled. |
| name | str | None | Name of the prediction run. Used for creating a subdirectory within the project folder, where prediction outputs are stored if save is enabled. |
| stream | bool | False | Enables memory-efficient processing for long videos or numerous images by returning a generator of Results objects instead of loading all frames into memory at once. |
| verbose | bool | True | Controls whether to display detailed inference logs in the terminal, providing real-time feedback on the prediction process. |
| compile | bool or str | False | Enables PyTorch 2.x torch.compile graph compilation with backend='inductor'. Accepts True → "default", False → disables, or a string mode such as "default", "reduce-overhead", "max-autotune-no-cudagraphs". Falls back to eager with a warning if unsupported. |
| end2end | bool | None | Overrides the end-to-end mode in YOLO models that support NMS-free inference (YOLO26, YOLOv10). Setting it to False lets you run prediction using the traditional NMS pipeline, additionally allowing you to make use of the iou argument. See the End-to-End Detection guide for details. |
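To make the interplay of conf, iou, and max_det concrete, here is a minimal single-class sketch of confidence filtering followed by greedy NMS. It is written in plain Python for illustration only and is not the library's implementation; the function names are hypothetical, and end-to-end models such as YOLO26 skip this step unless end2end=False is set.

```python
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def filter_detections(
    boxes: Sequence[Box],
    scores: Sequence[float],
    conf: float = 0.25,
    iou_thres: float = 0.7,
    max_det: int = 300,
) -> List[int]:
    """Return indices of kept boxes: confidence filter, then greedy NMS."""
    # Drop low-confidence boxes and visit the rest from highest to lowest score
    order = sorted((i for i, s in enumerate(scores) if s >= conf), key=lambda i: scores[i], reverse=True)
    keep: List[int] = []
    for i in order:
        # Keep a box only if it does not overlap a kept box by more than iou_thres
        if all(iou(boxes[i], boxes[j]) <= iou_thres for j in keep):
            keep.append(i)
        if len(keep) == max_det:
            break
    return keep


boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (50, 50, 60, 60), (0, 0, 10, 10)]
scores = [0.9, 0.8, 0.7, 0.1]
print(filter_detections(boxes, scores, iou_thres=0.7))  # [0, 1, 2]
print(filter_detections(boxes, scores, iou_thres=0.5))  # [0, 2]
```

The first two boxes overlap with an IoU of about 0.68, so lowering the threshold from 0.7 to 0.5 removes the lower-scoring one, while the last box is dropped by the confidence filter in both cases.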

Visualization arguments:

| Argument | Type | Default | Description |
|---|---|---|---|
| show | bool | False | If True, displays the annotated images or videos in a window. Useful for immediate visual feedback during development or testing. |
| save | bool | False or True | Enables saving of the annotated images or videos to files. Useful for documentation, further analysis, or sharing results. Defaults to True when using CLI & False when used in Python. |
| save_frames | bool | False | When processing videos, saves individual frames as images. Useful for extracting specific frames or for detailed frame-by-frame analysis. |
| save_txt | bool | False | Saves detection results in a text file, following the format [class] [x_center] [y_center] [width] [height] [confidence]. Useful for integration with other analysis tools. |
| save_conf | bool | False | Includes confidence scores in the saved text files. Enhances the detail available for post-processing and analysis. |
| save_crop | bool | False | Saves cropped images of detections. Useful for dataset augmentation, analysis, or creating focused datasets for specific objects. |
| show_labels | bool | True | Displays labels for each detection in the visual output. Provides immediate understanding of detected objects. |
| show_conf | bool | True | Displays the confidence score for each detection alongside the label. Gives insight into the model's certainty for each detection. |
| show_boxes | bool | True | Draws bounding boxes around detected objects. Essential for visual identification and location of objects in images or video frames. |
| line_width | int or None | None | Specifies the line width of bounding boxes. If None, the line width is automatically adjusted based on the image size. Provides visual customization for clarity. |
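When the text files written by save_txt feed another tool, each line has to be parsed back into a box. The sketch below is one way to do that under two assumptions: that the coordinates are normalized to the 0-1 range, and that the trailing confidence is present only when save_conf is enabled. The TxtDetection type and parse_txt_line helper are hypothetical names.

```python
from typing import NamedTuple, Optional, Tuple


class TxtDetection(NamedTuple):
    cls: int
    xyxy: Tuple[float, float, float, float]  # pixel coordinates (x1, y1, x2, y2)
    conf: Optional[float]


def parse_txt_line(line: str, img_w: int, img_h: int) -> TxtDetection:
    """Parse one 'class x_center y_center width height [confidence]' line."""
    parts = line.split()
    cls = int(parts[0])
    xc, yc, w, h = (float(v) for v in parts[1:5])
    conf = float(parts[5]) if len(parts) > 5 else None  # assumed optional field
    # Convert normalized center/size values to pixel corner coordinates
    x1, y1 = (xc - w / 2) * img_w, (yc - h / 2) * img_h
    x2, y2 = (xc + w / 2) * img_w, (yc + h / 2) * img_h
    return TxtDetection(cls, (x1, y1, x2, y2), conf)


det = parse_txt_line("5 0.5 0.5 0.25 0.5 0.9", img_w=800, img_h=600)
print(det.xyxy)  # (300.0, 150.0, 500.0, 450.0)
```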

Image and Video Formats

YOLO26 supports various image and video formats, as specified in ultralytics/data/utils.py. See the tables below for the valid suffixes and example predict commands.

Images

The table below lists the valid Ultralytics image formats.

HEIC/HEIF formats require pi-heif, which is installed automatically on first use. AVIF is supported natively by Pillow.

| Image Suffixes | Example Predict Command | Reference |
|---|---|---|
| .avif | yolo predict source=image.avif | AV1 Image File Format |
| .bmp | yolo predict source=image.bmp | Microsoft BMP File Format |
| .dng | yolo predict source=image.dng | Adobe DNG |
| .heic | yolo predict source=image.heic | High Efficiency Image Format |
| .heif | yolo predict source=image.heif | High Efficiency Image Format |
| .jp2 | yolo predict source=image.jp2 | JPEG 2000 |
| .jpeg | yolo predict source=image.jpeg | JPEG |
| .jpg | yolo predict source=image.jpg | JPEG |
| .mpo | yolo predict source=image.mpo | Multi Picture Object |
| .png | yolo predict source=image.png | Portable Network Graphics |
| .tif | yolo predict source=image.tif | Tag Image File Format |
| .tiff | yolo predict source=image.tiff | Tag Image File Format |
| .webp | yolo predict source=image.webp | WebP |

Videos

The table below lists the valid Ultralytics video formats.

| Video Suffixes | Example Predict Command | Reference |
|---|---|---|
| .asf | yolo predict source=video.asf | Advanced Systems Format |
| .avi | yolo predict source=video.avi | Audio Video Interleave |
| .gif | yolo predict source=video.gif | Graphics Interchange Format |
| .m4v | yolo predict source=video.m4v | MPEG-4 Part 14 |
| .mkv | yolo predict source=video.mkv | Matroska |
| .mov | yolo predict source=video.mov | QuickTime File Format |
| .mp4 | yolo predict source=video.mp4 | MPEG-4 Part 14 |
| .mpeg | yolo predict source=video.mpeg | MPEG-1 Part 2 |
| .mpg | yolo predict source=video.mpg | MPEG-1 Part 2 |
| .ts | yolo predict source=video.ts | MPEG Transport Stream |
| .wmv | yolo predict source=video.wmv | Windows Media Video |
| .webm | yolo predict source=video.webm | WebM Project |
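Before handing a batch of files to predict, it can be handy to check their suffixes against the two tables above. The sketch below copies the suffix lists from those tables by hand rather than importing them from ultralytics. The media_kind helper is a hypothetical name, and the case-insensitive match is an assumption.

```python
from pathlib import Path

# Suffixes copied from the image and video tables above (not imported from ultralytics)
IMAGE_SUFFIXES = {"avif", "bmp", "dng", "heic", "heif", "jp2", "jpeg", "jpg", "mpo", "png", "tif", "tiff", "webp"}
VIDEO_SUFFIXES = {"asf", "avi", "gif", "m4v", "mkv", "mov", "mp4", "mpeg", "mpg", "ts", "wmv", "webm"}


def media_kind(path: str) -> str:
    """Classify a file path as 'image', 'video', or 'unsupported' by its suffix."""
    suffix = Path(path).suffix.lower().lstrip(".")
    if suffix in IMAGE_SUFFIXES:
        return "image"
    if suffix in VIDEO_SUFFIXES:
        return "video"
    return "unsupported"


for name in ["photo.JPG", "clip.webm", "notes.txt"]:
    print(name, "->", media_kind(name))
```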

Working with Results

Ultralytics predict() calls return a list of Results objects by default, or a generator of Results objects when stream=True is set:

```python
from ultralytics import YOLO

# Load a pretrained YOLO26n model
model = YOLO("yolo26n.pt")

# Run inference on an image
results = model("https://ultralytics.com/images/bus.jpg")
results = model(
    [
        "https://ultralytics.com/images/bus.jpg",
        "https://ultralytics.com/images/zidane.jpg",
    ]
)  # batch inference
```

Results objects have the following attributes:

| Attribute | Type | Description |
|---|---|---|
| orig_img | np.ndarray | The original image as a numpy array. |
| orig_shape | tuple | The original image shape in (height, width) format. |
| boxes | Boxes, optional | A Boxes object containing the detection bounding boxes. |
| masks | Masks, optional | A Masks object containing the detection masks. |
| probs | Probs, optional | A Probs object containing probabilities of each class for classification task. |
| keypoints | Keypoints, optional | A Keypoints object containing detected keypoints for each object. |
| obb | OBB, optional | An OBB object containing oriented bounding boxes. |
| speed | dict | A dictionary of preprocess, inference, and postprocess speeds in milliseconds per image. |
| names | dict | A dictionary mapping class indices to class names. |
| path | str | The path to the image file. |
| save_dir | str, optional | Directory to save results. |

Results objects have the following methods:

| Method | Return Type | Description |
|---|---|---|
| update() | None | Updates the Results object with new detection data (boxes, masks, probs, obb, keypoints). |
| cpu() | Results | Returns a copy of the Results object with all tensors moved to CPU memory. |
| numpy() | Results | Returns a copy of the Results object with all tensors converted to numpy arrays. |
| cuda() | Results | Returns a copy of the Results object with all tensors moved to GPU memory. |
| to() | Results | Returns a copy of the Results object with tensors moved to specified device and dtype. |
| new() | Results | Creates a new Results object with the same image, path, names, and speed attributes. |
| plot() | np.ndarray | Plots detection results on an input RGB image and returns the annotated image. |
| show() | None | Displays the image with annotated inference results. |
| save() | str | Saves annotated inference results image to file and returns the filename. |
| verbose() | str | Returns a log string for each task, detailing detection and classification outcomes. |
| save_txt() | str | Saves detection results to a text file and returns the path to the saved file. |
| save_crop() | None | Saves cropped detection images to specified directory. |
| summary() | List[Dict[str, Any]] | Converts inference results to a summarized dictionary with optional normalization. |
| to_df() | DataFrame | Converts detection results to a Polars DataFrame. |
| to_csv() | str | Converts detection results to CSV format. |
| to_json() | str | Converts detection results to JSON format. |

For more details see the Results class documentation.
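A common first step with a Results object is to turn class indices into readable names using the names dictionary. The sketch below shows the idea on plain Python values. The count_by_class helper is a hypothetical name, and the hard-coded names and cls_ids stand in for what result.names and the class tensor of result.boxes would provide.

```python
from collections import Counter
from typing import Dict, List


def count_by_class(cls_ids: List[int], names: Dict[int, str]) -> Dict[str, int]:
    """Count detections per class name."""
    return dict(Counter(names[i] for i in cls_ids))


# Hypothetical values standing in for result.names and the class IDs in result.boxes.cls
names = {0: "person", 5: "bus"}
cls_ids = [0, 0, 5, 0]

counts = count_by_class(cls_ids, names)
print(counts)  # {'person': 3, 'bus': 1}
```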

Boxes

The Boxes object can be used to index, manipulate, and convert bounding boxes to different formats.

```python
from ultralytics import YOLO

# Load a pretrained YOLO26n model
model = YOLO("yolo26n.pt")

# Run inference on an image
results = model("https://ultralytics.com/images/bus.jpg")  # results list

# View results
for r in results:
    print(r.boxes)  # print the Boxes object containing the detection bounding boxes
```

Here is a table for the Boxes class methods and properties, including their name, type, and description:

| Name | Type | Description |
|---|---|---|
| cpu() | Method | Move the object to CPU memory. |
| numpy() | Method | Convert the object to a numpy array. |
| cuda() | Method | Move the object to CUDA memory. |
| to() | Method | Move the object to the specified device. |
| xyxy | Property (torch.Tensor) | Return the boxes in xyxy format. |
| conf | Property (torch.Tensor) | Return the confidence values of the boxes. |
| cls | Property (torch.Tensor) | Return the class values of the boxes. |
| id | Property (torch.Tensor) | Return the track IDs of the boxes (if available). |
| xywh | Property (torch.Tensor) | Return the boxes in xywh format. |
| xyxyn | Property (torch.Tensor) | Return the boxes in xyxy format normalized by original image size. |
| xywhn | Property (torch.Tensor) | Return the boxes in xywh format normalized by original image size. |

For more details see the Boxes class documentation.
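The relationship between the box formats is simple arithmetic. The sketch below reproduces it for a single box in plain Python, assuming that xywh stores the box center followed by width and height, and that normalization divides by the original (height, width) shape. The helper names are hypothetical, and the real properties operate on tensors of many boxes at once.

```python
from typing import Tuple

Box = Tuple[float, float, float, float]


def xyxy_to_xywh(box: Box) -> Box:
    """Convert corner format (x1, y1, x2, y2) to (x_center, y_center, width, height)."""
    x1, y1, x2, y2 = box
    return ((x1 + x2) / 2, (y1 + y2) / 2, x2 - x1, y2 - y1)


def normalize_xyxy(box: Box, orig_shape: Tuple[int, int]) -> Box:
    """Scale pixel corners to the 0-1 range using the original (height, width)."""
    h, w = orig_shape
    x1, y1, x2, y2 = box
    return (x1 / w, y1 / h, x2 / w, y2 / h)


box = (100, 200, 300, 600)
print(xyxy_to_xywh(box))  # (200.0, 400.0, 200, 400)
print(normalize_xyxy(box, orig_shape=(800, 400)))  # (0.25, 0.25, 0.75, 0.75)
```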

Masks

The Masks object can be used to index, manipulate, and convert masks to segments.

```python
from ultralytics import YOLO

# Load a pretrained YOLO26n-seg Segment model
model = YOLO("yolo26n-seg.pt")

# Run inference on an image
results = model("https://ultralytics.com/images/bus.jpg")  # results list

# View results
for r in results:
    print(r.masks)  # print the Masks object containing the detected instance masks
```

Here is a table for the Masks class methods and properties, including their name, type, and description:

| Name | Type | Description |
|---|---|---|
| cpu() | Method | Returns the masks tensor on CPU memory. |
| numpy() | Method | Returns the masks tensor as a numpy array. |
| cuda() | Method | Returns the masks tensor on GPU memory. |
| to() | Method | Returns the masks tensor with the specified device and dtype. |
| xyn | Property (torch.Tensor) | A list of normalized segments represented as tensors. |
| xy | Property (torch.Tensor) | A list of segments in pixel coordinates represented as tensors. |

For more details see the Masks class documentation.
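A segment is an ordered list of polygon vertices, so the pixel and normalized forms differ only by a division by the image size. The sketch below shows that step, plus a shoelace-formula area as one example of working with a segment. It uses plain Python lists with hypothetical helper names, whereas the library returns array-like objects.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def normalize_segment(segment_xy: List[Point], orig_shape: Tuple[int, int]) -> List[Point]:
    """Scale pixel vertices to the 0-1 range using the original (height, width)."""
    h, w = orig_shape
    return [(x / w, y / h) for x, y in segment_xy]


def polygon_area(points: List[Point]) -> float:
    """Area of a simple polygon via the shoelace formula."""
    total = 0.0
    for (x1, y1), (x2, y2) in zip(points, points[1:] + points[:1]):
        total += x1 * y2 - x2 * y1
    return abs(total) / 2


segment = [(0, 0), (200, 0), (200, 100), (0, 100)]  # a 200x100 pixel rectangle
print(normalize_segment(segment, orig_shape=(400, 800)))
print(polygon_area(segment))  # 20000.0
```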

Keypoints

The Keypoints object can be used to index, manipulate, and normalize coordinates.

```python
from ultralytics import YOLO

# Load a pretrained YOLO26n-pose Pose model
model = YOLO("yolo26n-pose.pt")

# Run inference on an image
results = model("https://ultralytics.com/images/bus.jpg")  # results list

# View results
for r in results:
    print(r.keypoints)  # print the Keypoints object containing the detected keypoints
```

Here is a table for the Keypoints class methods and properties, including their name, type, and description:

| Name | Type | Description |
|---|---|---|
| cpu() | Method | Returns the keypoints tensor on CPU memory. |
| numpy() | Method | Returns the keypoints tensor as a numpy array. |
| cuda() | Method | Returns the keypoints tensor on GPU memory. |
| to() | Method | Returns the keypoints tensor with the specified device and dtype. |
| xyn | Property (torch.Tensor) | A list of normalized keypoints represented as tensors. |
| xy | Property (torch.Tensor) | A list of keypoints in pixel coordinates represented as tensors. |
| conf | Property (torch.Tensor) | Returns confidence values of keypoints if available, else None. |

For more details see the Keypoints class documentation.
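Because conf can be None or low for occluded joints, downstream code often filters keypoints before using them. The sketch below shows one way to do that for a single object with plain Python lists. The visible_keypoints helper is a hypothetical name, and the 0.5 cutoff is an arbitrary illustrative choice, not a library default.

```python
from typing import List, Optional, Tuple

Point = Tuple[float, float]


def visible_keypoints(
    xy: List[Point], conf: Optional[List[float]], min_conf: float = 0.5
) -> List[Tuple[int, Point]]:
    """Return (index, point) pairs whose confidence reaches min_conf."""
    if conf is None:  # no confidences available: keep every keypoint
        return list(enumerate(xy))
    return [(i, pt) for i, (pt, c) in enumerate(zip(xy, conf)) if c >= min_conf]


xy = [(10.0, 20.0), (30.0, 40.0), (0.0, 0.0)]
conf = [0.9, 0.4, 0.05]
print(visible_keypoints(xy, conf))  # [(0, (10.0, 20.0))]
```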

Probs

The Probs object can be used to index classification outputs and to get the top-1 and top-5 class indices and scores.

```python
from ultralytics import YOLO

# Load a pretrained YOLO26n-cls Classify model
model = YOLO("yolo26n-cls.pt")

# Run inference on an image
results = model("https://ultralytics.com/images/bus.jpg")  # results list

# View results
for r in results:
    print(r.probs)  # print the Probs object containing the detected class probabilities
```

Here's a table summarizing the methods and properties for the Probs class:

| Name | Type | Description |
|---|---|---|
| cpu() | Method | Returns a copy of the probs tensor on CPU memory. |
| numpy() | Method | Returns a copy of the probs tensor as a numpy array. |
| cuda() | Method | Returns a copy of the probs tensor on GPU memory. |
| to() | Method | Returns a copy of the probs tensor with the specified device and dtype. |
| top1 | Property (int) | Index of the top 1 class. |
| top5 | Property (list[int]) | Indices of the top 5 classes. |
| top1conf | Property (torch.Tensor) | Confidence of the top 1 class. |
| top5conf | Property (torch.Tensor) | Confidences of the top 5 classes. |

For more details see the Probs class documentation.
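The top1 and top5 properties amount to sorting class indices by probability. The sketch below reproduces that idea on a plain Python list. The top_k helper is a hypothetical name and the probabilities are made-up values for illustration.

```python
from typing import List, Tuple


def top_k(probs: List[float], k: int = 5) -> Tuple[List[int], List[float]]:
    """Return the indices and values of the k largest probabilities, highest first."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    return order, [probs[i] for i in order]


probs = [0.05, 0.6, 0.1, 0.02, 0.2, 0.03]  # made-up class probabilities
top5, top5conf = top_k(probs, k=5)
top1, top1conf = top5[0], top5conf[0]

print(top1, top1conf)  # 1 0.6
print(top5)  # [1, 4, 2, 0, 5]
```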

OBB

The OBB object can be used to index, manipulate, and convert oriented bounding boxes to different formats.

```python
from ultralytics import YOLO

# Load a pretrained YOLO26n-obb OBB model
model = YOLO("yolo26n-obb.pt")

# Run inference on an image
results = model("https://ultralytics.com/images/boats.jpg")  # results list

# View results
for r in results:
    print(r.obb)  # print the OBB object containing the oriented detection bounding boxes
```

Here is a table for the OBB class methods and properties, including their name, type, and description:

| Name | Type | Description |
|---|---|---|
| cpu() | Method | Move the object to CPU memory. |
| numpy() | Method | Convert the object to a numpy array. |
| cuda() | Method | Move the object to CUDA memory. |
| to() | Method | Move the object to the specified device. |
| conf | Property (torch.Tensor) | Return the confidence values of the boxes. |
| cls | Property (torch.Tensor) | Return the class values of the boxes. |
| id | Property (torch.Tensor) | Return the track IDs of the boxes (if available). |
| xyxy | Property (torch.Tensor) | Return the horizontal boxes in xyxy format. |
| xywhr | Property (torch.Tensor) | Return the rotated boxes in xywhr format. |
| xyxyxyxy | Property (torch.Tensor) | Return the rotated boxes in xyxyxyxy format. |
| xyxyxyxyn | Property (torch.Tensor) | Return the rotated boxes in xyxyxyxy format normalized by image size. |

For more details see the OBB class documentation.
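The xywhr and xyxyxyxy formats describe the same rotated rectangle, and converting from one to the other is a small piece of trigonometry. The sketch below does it for one box, assuming the rotation angle is in radians. The function name is hypothetical, and the corner order is a choice made for this example that may differ from the order the library returns.

```python
import math
from typing import List, Tuple


def xywhr_to_corners(xc: float, yc: float, w: float, h: float, angle: float) -> List[Tuple[float, float]]:
    """Convert a rotated box (center, size, angle in radians) to its four corners."""
    cos_a, sin_a = math.cos(angle), math.sin(angle)
    # Half-extent vectors along the box's width and height axes
    wx, wy = w / 2 * cos_a, w / 2 * sin_a
    hx, hy = -h / 2 * sin_a, h / 2 * cos_a
    return [
        (xc + wx + hx, yc + wy + hy),
        (xc + wx - hx, yc + wy - hy),
        (xc - wx - hx, yc - wy - hy),
        (xc - wx + hx, yc - wy + hy),
    ]


axis_aligned = xywhr_to_corners(50, 50, 20, 10, angle=0.0)
print(axis_aligned)  # [(60.0, 55.0), (60.0, 45.0), (40.0, 45.0), (40.0, 55.0)]

rotated = xywhr_to_corners(50, 50, 20, 10, angle=math.pi / 2)  # quarter turn
```

With a zero angle the result is the ordinary axis-aligned box, which makes a convenient sanity check.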

Plotting Results

The plot() method in Results objects facilitates visualization of predictions by overlaying detected objects (such as bounding boxes, masks, keypoints, and probabilities) onto the original image. This method returns the annotated image as a NumPy array, allowing for easy display or saving.

```python
from PIL import Image

from ultralytics import YOLO

# Load a pretrained YOLO26n model
model = YOLO("yolo26n.pt")

# Run inference on a list of two images
results = model(["https://ultralytics.com/images/bus.jpg", "https://ultralytics.com/images/zidane.jpg"])  # results list

# Visualize the results
for i, r in enumerate(results):
    # Plot results image
    im_bgr = r.plot()  # BGR-order numpy array
    im_rgb = Image.fromarray(im_bgr[..., ::-1])  # RGB-order PIL image

    # Show results to screen (in supported environments)
    r.show()

    # Save results to disk
    r.save(filename=f"results{i}.jpg")
```

plot() Method Parameters

The plot() method supports various arguments to customize the output:

| Argument | Type | Description | Default |
|---|---|---|---|
| conf | bool | Include detection confidence scores. | True |
| line_width | float | Line width of bounding boxes. Scales with image size if None. | None |
| font_size | float | Text font size. Scales with image size if None. | None |
| font | str | Font name for text annotations. | 'Arial.ttf' |
| pil | bool | Return image as a PIL Image object. | False |
| img | np.ndarray | Alternative image for plotting. Uses the original image if None. | None |
| im_gpu | torch.Tensor | GPU-accelerated image for faster mask plotting. Shape: (1, 3, 640, 640). | None |
| kpt_radius | int | Radius for drawn keypoints. | 5 |
| kpt_line | bool | Connect keypoints with lines. | True |
| labels | bool | Include class labels in annotations. | True |
| boxes | bool | Overlay bounding boxes on the image. | True |
| masks | bool | Overlay masks on the image. | True |
| probs | bool | Include classification probabilities. | True |
| show | bool | Display the annotated image directly using the default image viewer. | False |
| save | bool | Save the annotated image to a file specified by filename. | False |
| filename | str | Path and name of the file to save the annotated image if save is True. | None |
| color_mode | str | Specify the color mode, e.g., 'instance' or 'class'. | 'class' |
| txt_color | tuple[int, int, int] | RGB text color for bounding box and image classification label. | (255, 255, 255) |

Thread-Safe Inference

Ensuring thread safety during inference is crucial when you are running multiple YOLO models in parallel across different threads. Thread-safe inference guarantees that each thread's predictions are isolated and do not interfere with one another, avoiding race conditions and ensuring consistent and reliable outputs.

When using YOLO models in a multi-threaded application, it's important to instantiate separate model objects for each thread or employ thread-local storage to prevent conflicts:

Instantiate a single model inside each thread for thread-safe inference:

```python
from threading import Thread

from ultralytics import YOLO


def thread_safe_predict(model, image_path):
    """Performs thread-safe prediction on an image using a locally instantiated YOLO model."""
    model = YOLO(model)
    results = model.predict(image_path)
    # Process results


# Starting threads that each have their own model instance
Thread(target=thread_safe_predict, args=("yolo26n.pt", "image1.jpg")).start()
Thread(target=thread_safe_predict, args=("yolo26n.pt", "image2.jpg")).start()
```

For an in-depth look at thread-safe inference with YOLO models and step-by-step instructions, please refer to our YOLO Thread-Safe Inference Guide. This guide will provide you with all the necessary information to avoid common pitfalls and ensure that your multi-threaded inference runs smoothly.
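The thread-local storage alternative mentioned above can be sketched with threading.local from the standard library. To keep the example runnable without downloading weights, it uses a hypothetical DummyModel class in place of YOLO. In real code the get_model helper (also a hypothetical name) would construct YOLO("yolo26n.pt") instead.

```python
import threading

_local = threading.local()  # each thread sees its own attributes on this object


class DummyModel:
    """Hypothetical stand-in for a YOLO model, used so the sketch runs anywhere."""

    def __init__(self, weights: str):
        self.weights = weights

    def predict(self, source: str) -> str:
        return f"{self.weights} -> {source}"


def get_model(weights: str = "yolo26n.pt") -> DummyModel:
    """Create the model once per thread and reuse it on later calls."""
    if not hasattr(_local, "model"):
        _local.model = DummyModel(weights)
    return _local.model


seen = {}


def worker(name: str, sources: list) -> None:
    model = get_model()
    results = [model.predict(s) for s in sources]
    # A second lookup in the same thread returns the same instance
    seen[name] = (model, get_model() is model, results)


threads = [threading.Thread(target=worker, args=(f"t{i}", [f"image{i}.jpg"])) for i in range(2)]
for t in threads:
    t.start()
for t in threads:
    t.join()
```

Compared with instantiating a model inside every call, this pattern pays the loading cost once per thread, which matters when a worker thread handles many images.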

Streaming Source for-loop

Here's a Python script using OpenCV (cv2) and YOLO to run inference on video frames. This script assumes you have already installed the necessary packages (opencv-python and ultralytics).

```python
import cv2

from ultralytics import YOLO

# Load the YOLO model
model = YOLO("yolo26n.pt")

# Open the video file
video_path = "path/to/your/video/file.mp4"
cap = cv2.VideoCapture(video_path)

# Loop through the video frames
while cap.isOpened():
    # Read a frame from the video
    success, frame = cap.read()

    if success:
        # Run YOLO inference on the frame
        results = model(frame)

        # Visualize the results on the frame
        annotated_frame = results[0].plot()

        # Display the annotated frame
        cv2.imshow("YOLO Inference", annotated_frame)

        # Break the loop if 'q' is pressed
        if cv2.waitKey(1) & 0xFF == ord("q"):
            break
    else:
        # Break the loop if the end of the video is reached
        break

# Release the video capture object and close the display window
cap.release()
cv2.destroyAllWindows()
```

This script will run predictions on each frame of the video, visualize the results, and display them in a window. The loop can be exited by pressing 'q'.

FAQ

What is Ultralytics YOLO and its predict mode for real-time inference?

Ultralytics YOLO is a state-of-the-art model for real-time object detection, segmentation, and classification. Its predict mode allows users to perform high-speed inference on various data sources such as images, videos, and live streams. Designed for performance and versatility, it also offers batch processing and streaming modes. For more details on its features, check out the Ultralytics YOLO predict mode.

How can I run inference using Ultralytics YOLO on different data sources?

Ultralytics YOLO can process a wide range of data sources, including individual images, videos, directories, URLs, and streams. You can specify the data source in the model.predict() call. For example, use 'image.jpg' for a local image or 'https://ultralytics.com/images/bus.jpg' for a URL. Check out the detailed examples for various inference sources in the documentation.

How do I optimize YOLO inference speed and memory usage?

To optimize inference speed and manage memory efficiently, you can use the streaming mode by setting stream=True in the predictor's call method. The streaming mode generates a memory-efficient generator of Results objects instead of loading all frames into memory. For processing long videos or large datasets, streaming mode is particularly useful. Learn more about streaming mode.

What inference arguments does Ultralytics YOLO support?

The model.predict() method in YOLO supports various arguments such as conf, iou, imgsz, device, and more. These arguments allow you to customize the inference process, setting parameters like confidence thresholds, image size, and the device used for computation. Detailed descriptions of these arguments can be found in the inference arguments section.

How can I visualize and save the results of YOLO predictions?

After running inference with YOLO, the Results objects contain methods for displaying and saving annotated images. You can use methods like result.show() and result.save(filename="result.jpg") to visualize and save the results. Any missing parent directories in the filename path are created automatically (e.g., result.save("path/to/result.jpg")). For a comprehensive list of these methods, refer to the working with results section.