# Object Detection

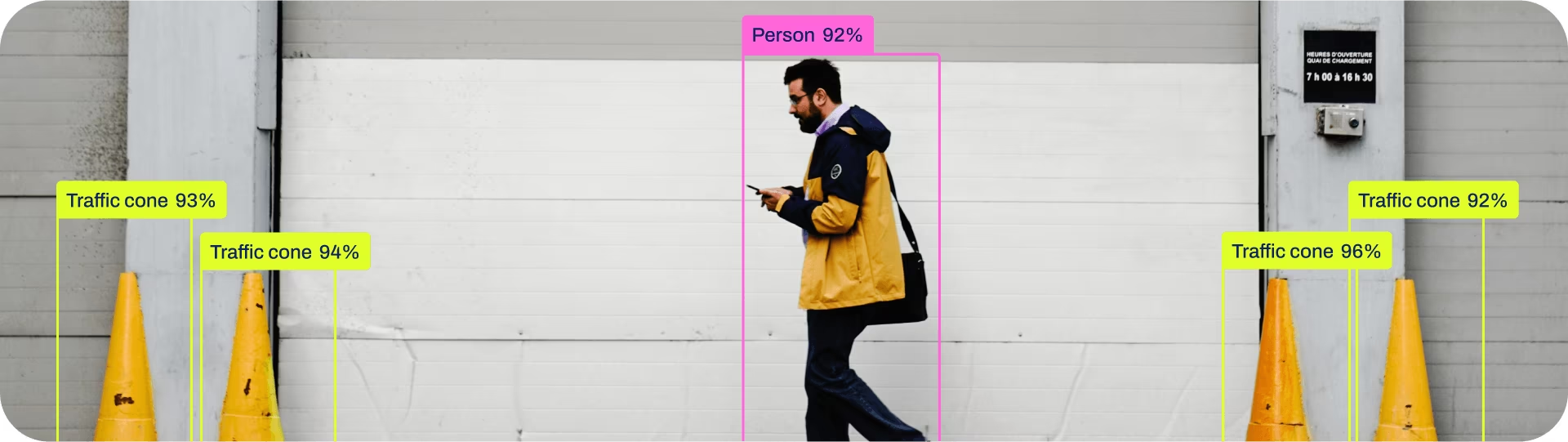

Object detection is a task that involves identifying the location and class of objects in an image or video stream.

The output of an object detector is a set of bounding boxes that enclose the objects in the image, along with a class label and a confidence score for each box. Object detection is a good choice when you need to locate and identify objects of interest in a scene but don't need their exact outlines or shapes.


YOLO26 Detect models are the default YOLO26 models (for example, `yolo26n.pt`) and are pretrained on COCO.

## Models

YOLO26 pretrained Detect models are shown here. Detect, Segment, and Pose models are pretrained on the COCO dataset, Semantic models are pretrained on Cityscapes, and Classify models are pretrained on the ImageNet dataset.

Models are downloaded automatically from the latest Ultralytics release on first use.

| Model | size (pixels) | mAPval 50-95 | mAPval 50-95 (e2e) | Speed CPU ONNX (ms) | Speed T4 TensorRT10 (ms) | params (M) | FLOPs (B) |
|---|---|---|---|---|---|---|---|
| YOLO26n | 640 | 40.9 | 40.1 | 38.9 ± 0.7 | 1.7 ± 0.0 | 2.4 | 5.4 |
| YOLO26s | 640 | 48.6 | 47.8 | 87.2 ± 0.9 | 2.5 ± 0.0 | 9.5 | 20.7 |
| YOLO26m | 640 | 53.1 | 52.5 | 220.0 ± 1.4 | 4.7 ± 0.1 | 20.4 | 68.2 |
| YOLO26l | 640 | 55.0 | 54.4 | 286.2 ± 2.0 | 6.2 ± 0.2 | 24.8 | 86.4 |
| YOLO26x | 640 | 57.5 | 56.9 | 525.8 ± 4.0 | 11.8 ± 0.2 | 55.7 | 193.9 |

- mAPval values are for single-model single-scale on the COCO val2017 dataset. Reproduce by `yolo val detect data=coco.yaml device=0`
- Speed is averaged over COCO val images using an Amazon EC2 P4d instance. Reproduce by `yolo val detect data=coco.yaml batch=1 device=0|cpu`
- Params and FLOPs values are for the fused model after `model.fuse()`, which merges Conv and BatchNorm layers and, for end2end models, removes the auxiliary one-to-many detection head. Pretrained checkpoints retain the full training architecture and may show higher counts.
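To compare the speed columns more intuitively, a per-image latency can be turned into a rough throughput figure. The sketch below uses only the T4 TensorRT10 means from the table; `latency_to_fps` is a hypothetical helper, and it is an assumption that the reported latencies translate directly into end-to-end throughput on your hardware.

```python
# Mean batch-1 latencies in milliseconds, copied from the Models table.
T4_TENSORRT_MS = {
    "YOLO26n": 1.7,
    "YOLO26s": 2.5,
    "YOLO26m": 4.7,
    "YOLO26l": 6.2,
    "YOLO26x": 11.8,
}


def latency_to_fps(latency_ms: float) -> float:
    """Convert a per-image latency in milliseconds to images per second."""
    if latency_ms <= 0:
        raise ValueError("latency must be positive")
    return 1000.0 / latency_ms


for name, ms in T4_TENSORRT_MS.items():
    # Rough estimate only: real pipelines add image loading and other overhead.
    print(f"{name}: about {latency_to_fps(ms):.0f} images/s")
```

Treat the result as an optimistic estimate; measured throughput depends on batch size, input pipeline, and hardware.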

## Train

Train YOLO26n on the COCO8 dataset for 100 epochs at image size 640. For a full list of available arguments see the Configuration page.

```python
from ultralytics import YOLO

# Load a model
model = YOLO("yolo26n.yaml")  # build a new model from YAML
model = YOLO("yolo26n.pt")  # load a pretrained model (recommended for training)
model = YOLO("yolo26n.yaml").load("yolo26n.pt")  # build from YAML and transfer weights

# Train the model
results = model.train(data="coco8.yaml", epochs=100, imgsz=640)
```

See full train mode details in the Train page. Detection models can also be trained on cloud GPUs through Ultralytics Platform.

### Dataset format

The YOLO detection dataset format is described in detail in the Dataset Guide. To convert an existing dataset from another format (such as COCO) to YOLO format, use the JSON2YOLO tool by Ultralytics. You can also annotate and manage detection datasets directly on Ultralytics Platform with AI-assisted labeling tools.
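To give a feel for what such a conversion involves, here is a minimal sketch of turning one COCO-style box into a YOLO label line. It assumes the usual YOLO detection layout of `class x_center y_center width height`, normalized to the image size, and it assumes `class_id` has already been remapped to a zero-based index. `coco_box_to_yolo_line` is a hypothetical helper, not part of JSON2YOLO.

```python
def coco_box_to_yolo_line(class_id, box, img_w, img_h):
    """Convert a COCO-style [x_min, y_min, width, height] pixel box to a YOLO label line."""
    x_min, y_min, w, h = box
    # YOLO stores the box center, not the top-left corner, scaled to the 0-1 range.
    cx = (x_min + w / 2) / img_w
    cy = (y_min + h / 2) / img_h
    return f"{class_id} {cx:.6f} {cy:.6f} {w / img_w:.6f} {h / img_h:.6f}"


# A 320x240 box whose top-left corner is at (0, 120) in a 640x480 image.
print(coco_box_to_yolo_line(0, [0, 120, 320, 240], img_w=640, img_h=480))
# prints: 0 0.250000 0.500000 0.500000 0.500000
```

For real datasets, prefer the JSON2YOLO tool, which also handles category remapping and file layout.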

## Val

Validate trained YOLO26n model accuracy on the COCO8 dataset. No arguments are needed as the model retains its training data and arguments as model attributes.

```python
from ultralytics import YOLO

# Load a model
model = YOLO("yolo26n.pt")  # load an official model
model = YOLO("path/to/best.pt")  # load a custom model

# Validate the model
metrics = model.val()  # no arguments needed, dataset and settings remembered
metrics.box.map  # mAP50-95
metrics.box.map50  # mAP50
metrics.box.map75  # mAP75
metrics.box.maps  # a list containing mAP50-95 for each category
metrics.box.image_metrics  # per-image metrics dictionary with precision, recall, F1, TP, FP, and FN
```

## Predict

Use a trained YOLO26n model to run predictions on images.

```python
from ultralytics import YOLO

# Load a model
model = YOLO("yolo26n.pt")  # load an official model
model = YOLO("path/to/best.pt")  # load a custom model

# Predict with the model
results = model("https://ultralytics.com/images/bus.jpg")  # predict on an image

# Access the results
for result in results:
    xywh = result.boxes.xywh  # center-x, center-y, width, height
    xywhn = result.boxes.xywhn  # normalized
    xyxy = result.boxes.xyxy  # top-left-x, top-left-y, bottom-right-x, bottom-right-y
    xyxyn = result.boxes.xyxyn  # normalized
    names = [result.names[cls.item()] for cls in result.boxes.cls.int()]  # class name of each box
    confs = result.boxes.conf  # confidence score of each box
```

See full predict mode details in the Predict page.
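The four box formats differ only in their reference point and scaling. The sketch below shows the relationship in plain Python so it runs without the library; `xywh_to_xyxy` and `normalize_xyxy` are hypothetical helpers, and it is an assumption that the normalized variants divide by the original image width and height.

```python
def xywh_to_xyxy(box):
    """Convert (center-x, center-y, width, height) to (x1, y1, x2, y2)."""
    cx, cy, w, h = box
    return (cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2)


def normalize_xyxy(box, img_w, img_h):
    """Scale pixel corner coordinates to the 0-1 range."""
    x1, y1, x2, y2 = box
    return (x1 / img_w, y1 / img_h, x2 / img_w, y2 / img_h)


corners = xywh_to_xyxy((320, 240, 100, 50))
print(corners)  # (270.0, 215.0, 370.0, 265.0)
print(normalize_xyxy((160, 120, 480, 360), img_w=640, img_h=480))  # (0.25, 0.25, 0.75, 0.75)
```

In practice, read the format you need directly from `result.boxes` rather than converting by hand.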

## Results Output

Object detection returns one Results object per image. The primary prediction field is `result.boxes`, which contains box coordinates, class IDs, and confidence scores for each detected object.

| Attribute | Type | Shape | Description |
|---|---|---|---|
| `result.boxes` | Boxes | (N) | Detection boxes. |
| `result.boxes.data` | torch.float32 | (N, 6) or (N, 7) | Raw [x1,y1,x2,y2,conf,cls], plus optional track ID. |
| `result.boxes.xyxy` | torch.float32 | (N, 4) | xyxy pixel boxes. |
| `result.boxes.conf` | torch.float32 | (N,) | Confidence scores. |
| `result.boxes.cls` | torch.float32 | (N,) | Class IDs; cast to int for names. |

For task-specific Results fields across every task, see the Predict Results by Task section.
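As an illustration of the raw row layout, the sketch below decodes rows like those in `result.boxes.data` after converting them to plain lists (for example with `.tolist()`). It assumes confidence and class are always the last two columns and that, when a track ID is present, it sits in the fifth column; that column position is an assumption, so check it against the Results reference. `Detection`, `decode_row`, `filter_by_conf`, and the sample rows are hypothetical.

```python
from typing import NamedTuple, Optional, Sequence


class Detection(NamedTuple):
    xyxy: tuple
    conf: float
    cls: int
    track_id: Optional[int]


def decode_row(row: Sequence[float]) -> Detection:
    """Decode one raw detection row of length 6 (no tracking) or 7 (with track ID)."""
    if len(row) not in (6, 7):
        raise ValueError(f"expected 6 or 7 values, got {len(row)}")
    # Assumption: the track ID, when present, is the fifth column.
    track_id = int(row[4]) if len(row) == 7 else None
    # Class IDs are stored as floats, so cast to int before using them as keys.
    return Detection(tuple(row[:4]), float(row[-2]), int(row[-1]), track_id)


def filter_by_conf(rows, min_conf=0.5):
    """Keep only detections at or above a confidence threshold."""
    return [d for d in map(decode_row, rows) if d.conf >= min_conf]


# Made-up rows standing in for result.boxes.data.tolist()
rows = [
    [10.0, 20.0, 110.0, 220.0, 0.91, 0.0],
    [50.0, 60.0, 90.0, 100.0, 0.30, 5.0],
]
names = {0: "person", 5: "bus"}  # stand-in for result.names
for det in filter_by_conf(rows, min_conf=0.5):
    print(names[det.cls], det.conf, det.xyxy)
```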

## Export

Export a YOLO26n model to a different format, such as ONNX or CoreML.

```python
from ultralytics import YOLO

# Load a model
model = YOLO("yolo26n.pt")  # load an official model
model = YOLO("path/to/best.pt")  # load a custom-trained model

# Export the model
model.export(format="onnx")
```

Available YOLO26 export formats are in the table below. You can export to any format using the `format` argument, e.g., `format='onnx'` or `format='engine'`. You can predict or validate directly on exported models, e.g., `yolo predict model=yolo26n.onnx`. Usage examples are shown for your model after export completes.

| Format | `format` Argument | Model | Metadata | Arguments |
|---|---|---|---|---|
| PyTorch | - | yolo26n.pt | ✅ | - |
| TorchScript | torchscript | yolo26n.torchscript | ✅ | imgsz, half, dynamic, optimize, nms, batch, device |
| ONNX | onnx | yolo26n.onnx | ✅ | imgsz, half, dynamic, simplify, opset, nms, batch, device |
| OpenVINO | openvino | yolo26n_openvino_model/ | ✅ | imgsz, half, dynamic, int8, nms, batch, data, fraction, device |
| TensorRT | engine | yolo26n.engine | ✅ | imgsz, half, dynamic, simplify, workspace, int8, nms, batch, data, fraction, device |
| CoreML | coreml | yolo26n.mlpackage | ✅ | imgsz, dynamic, half, int8, nms, batch, device |
| TF SavedModel | saved_model | yolo26n_saved_model/ | ✅ | imgsz, keras, int8, nms, batch, data, fraction, device |
| TF GraphDef | pb | yolo26n.pb | ❌ | imgsz, batch, device |
| TF Lite | tflite | yolo26n.tflite | ✅ | imgsz, half, int8, nms, batch, data, fraction, device |
| TF Edge TPU | edgetpu | yolo26n_edgetpu.tflite | ✅ | imgsz, int8, data, fraction, device |
| TF.js | tfjs | yolo26n_web_model/ | ✅ | imgsz, half, int8, nms, batch, data, fraction, device |
| PaddlePaddle | paddle | yolo26n_paddle_model/ | ✅ | imgsz, batch, device |
| MNN | mnn | yolo26n.mnn | ✅ | imgsz, batch, int8, half, device |
| NCNN | ncnn | yolo26n_ncnn_model/ | ✅ | imgsz, half, batch, device |
| IMX500 | imx | yolo26n_imx_model/ | ✅ | imgsz, int8, data, fraction, nms, device |
| RKNN | rknn | yolo26n_rknn_model/ | ✅ | imgsz, batch, name, device |
| ExecuTorch | executorch | yolo26n_executorch_model/ | ✅ | imgsz, batch, device |
| Axelera | axelera | yolo26n_axelera_model/ | ✅ | imgsz, batch, int8, data, fraction, device |
| DEEPX | deepx | yolo26n_deepx_model/ | ✅ | imgsz, int8, data, optimize, device |
| Qualcomm QNN | qnn | yolo26n_qnn_model/ | ✅ | imgsz, batch, name, int8, data, fraction, device |

See full export details in the Export page.

## FAQ

### Can I train and deploy detection models without coding?

Yes. Ultralytics Platform provides a browser-based workflow for annotating datasets, training detection models on cloud GPUs, and deploying them to inference endpoints. See the Platform quickstart to get started.

### How do I train a YOLO26 model on my custom dataset?

Training a YOLO26 model on a custom dataset involves a few steps:

- Prepare the Dataset: Ensure your dataset is in the YOLO format. For guidance, refer to our Dataset Guide.

- Load the Model: Use the Ultralytics YOLO library to load a pretrained model or create a new model from a YAML file.

- Train the Model: Execute the `train` method in Python or the `yolo detect train` command in CLI.

```python
from ultralytics import YOLO

# Load a pretrained model
model = YOLO("yolo26n.pt")

# Train the model on your custom dataset
model.train(data="my_custom_dataset.yaml", epochs=100, imgsz=640)
```

For detailed configuration options, visit the Configuration page.
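The contents of `my_custom_dataset.yaml` are not shown on this page. As a rough sketch, the snippet below builds one as text, assuming the `path`, `train`, `val`, and `names` keys described in the Dataset Guide. The helper `build_dataset_yaml`, the directory names, and the class names are hypothetical placeholders.

```python
from typing import Sequence


def build_dataset_yaml(root: str, class_names: Sequence[str]) -> str:
    """Return dataset YAML text with a root path, image folders, and indexed class names."""
    lines = [
        f"path: {root}",  # dataset root directory
        "train: images/train",  # training images, relative to path (placeholder layout)
        "val: images/val",  # validation images, relative to path (placeholder layout)
        "names:",
    ]
    # Class indices are zero-based and must match the IDs used in the label files.
    lines += [f"  {i}: {name}" for i, name in enumerate(class_names)]
    return "\n".join(lines) + "\n"


print(build_dataset_yaml("datasets/my_custom_dataset", ["cat", "dog"]))
```

Save the returned text as `my_custom_dataset.yaml` and check it against the Dataset Guide before training.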

### What pretrained models are available in YOLO26?

Ultralytics YOLO26 offers pretrained models for object detection, instance segmentation, semantic segmentation, pose estimation, and image classification. Detect, Segment, and Pose models are pretrained on the COCO dataset, Semantic models on Cityscapes, and Classify models on ImageNet. The detection models come in five sizes: YOLO26n, YOLO26s, YOLO26m, YOLO26l, and YOLO26x.

For a detailed list and performance metrics, refer to the Models section.

### How can I validate the accuracy of my trained YOLO model?

To validate the accuracy of your trained YOLO26 model, you can use the .val() method in Python or the yolo detect val command in CLI. This will provide metrics like mAP50-95, mAP50, and more.

```python
from ultralytics import YOLO

# Load the model
model = YOLO("path/to/best.pt")

# Validate the model
metrics = model.val()
print(metrics.box.map)  # mAP50-95
```

For more validation details, visit the Val page.
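Both metrics rest on Intersection over Union (IoU) between a predicted box and a ground-truth box: mAP50 counts a match at IoU ≥ 0.50, while mAP50-95 averages over the ten thresholds from 0.50 to 0.95 in steps of 0.05, following the COCO convention. The sketch below illustrates only that matching criterion, not the full mAP computation; `box_iou` and `thresholds_met` are hypothetical helpers.

```python
def box_iou(a, b):
    """IoU of two boxes given as (x1, y1, x2, y2)."""
    ax1, ay1, ax2, ay2 = a
    bx1, by1, bx2, by2 = b
    # Width and height of the overlap region, clamped at zero when boxes are disjoint.
    iw = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    ih = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = iw * ih
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


# The ten COCO-style IoU thresholds: 0.50, 0.55, ..., 0.95
IOU_THRESHOLDS = [round(0.5 + 0.05 * i, 2) for i in range(10)]


def thresholds_met(iou):
    """Return the thresholds at which a prediction with this IoU counts as a match."""
    return [t for t in IOU_THRESHOLDS if iou >= t]


iou = box_iou((0, 0, 100, 100), (50, 0, 150, 100))
print(round(iou, 3))  # 0.333, below even the 0.50 threshold
print(thresholds_met(0.72))  # [0.5, 0.55, 0.6, 0.65, 0.7]
```

A prediction with a loose box can therefore contribute to mAP50 while counting as a miss at the stricter thresholds that mAP50-95 also includes.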

### What formats can I export a YOLO26 model to?

Ultralytics YOLO26 allows exporting models to various formats such as ONNX, TensorRT, CoreML, and more to ensure compatibility across different platforms and devices.

```python
from ultralytics import YOLO

# Load the model
model = YOLO("yolo26n.pt")

# Export the model to ONNX format
model.export(format="onnx")
```

Check the full list of supported formats and instructions on the Export page.

### Why should I use Ultralytics YOLO26 for object detection?

Ultralytics YOLO26 is designed to offer state-of-the-art performance for object detection, instance segmentation, semantic segmentation, and pose estimation. Here are some key advantages:

- Pretrained Models: Utilize models pretrained on popular datasets like COCO and ImageNet for faster development.

- High Accuracy: The detection models reach 40.9 to 57.5 mAPval 50-95 on COCO val2017, depending on model size (see the Models table).

- Speed: Optimized for real-time inference, with reported latencies of 1.7 to 11.8 ms per image on a T4 GPU with TensorRT10.

- Flexibility: Export models to various formats like ONNX and TensorRT for deployment across multiple platforms.

Explore our Blog for use cases and success stories showcasing YOLO26 in action.