Object Detection Datasets Overview

Training a robust and accurate object detection model requires a comprehensive dataset. This guide introduces various formats of datasets that are compatible with the Ultralytics YOLO model and provides insights into their structure, usage, and how to convert between different formats.

Supported Dataset Formats

Ultralytics YOLO format

The Ultralytics YOLO format is a dataset configuration format that allows you to define the dataset root directory, the relative paths to training/validation/testing image directories or *.txt files containing image paths, and a dictionary of class names. Here is an example:

# Ultralytics 🚀 AGPL-3.0 License - https://ultralytics.com/license

# COCO8 dataset (first 8 images from COCO train2017) by Ultralytics

# Documentation: https://docs.ultralytics.com/datasets/detect/coco8/

# Example usage: yolo train data=coco8.yaml

# parent

# ├── ultralytics

# └── datasets

# └── coco8 ← downloads here (1 MB)

# Train/val/test sets as 1) dir: path/to/imgs, 2) file: path/to/imgs.txt, or 3) list: [path/to/imgs1, path/to/imgs2, ..]

path: coco8 # dataset root dir

train: images/train # train images (relative to 'path') 4 images

val: images/val # val images (relative to 'path') 4 images

test: # test images (optional)

# Classes

names:

0: person

1: bicycle

2: car

3: motorcycle

4: airplane

5: bus

6: train

7: truck

8: boat

9: traffic light

10: fire hydrant

11: stop sign

12: parking meter

13: bench

14: bird

15: cat

16: dog

17: horse

18: sheep

19: cow

20: elephant

21: bear

22: zebra

23: giraffe

24: backpack

25: umbrella

26: handbag

27: tie

28: suitcase

29: frisbee

30: skis

31: snowboard

32: sports ball

33: kite

34: baseball bat

35: baseball glove

36: skateboard

37: surfboard

38: tennis racket

39: bottle

40: wine glass

41: cup

42: fork

43: knife

44: spoon

45: bowl

46: banana

47: apple

48: sandwich

49: orange

50: broccoli

51: carrot

52: hot dog

53: pizza

54: donut

55: cake

56: chair

57: couch

58: potted plant

59: bed

60: dining table

61: toilet

62: tv

63: laptop

64: mouse

65: remote

66: keyboard

67: cell phone

68: microwave

69: oven

70: toaster

71: sink

72: refrigerator

73: book

74: clock

75: vase

76: scissors

77: teddy bear

78: hair drier

79: toothbrush

# Download script/URL (optional)

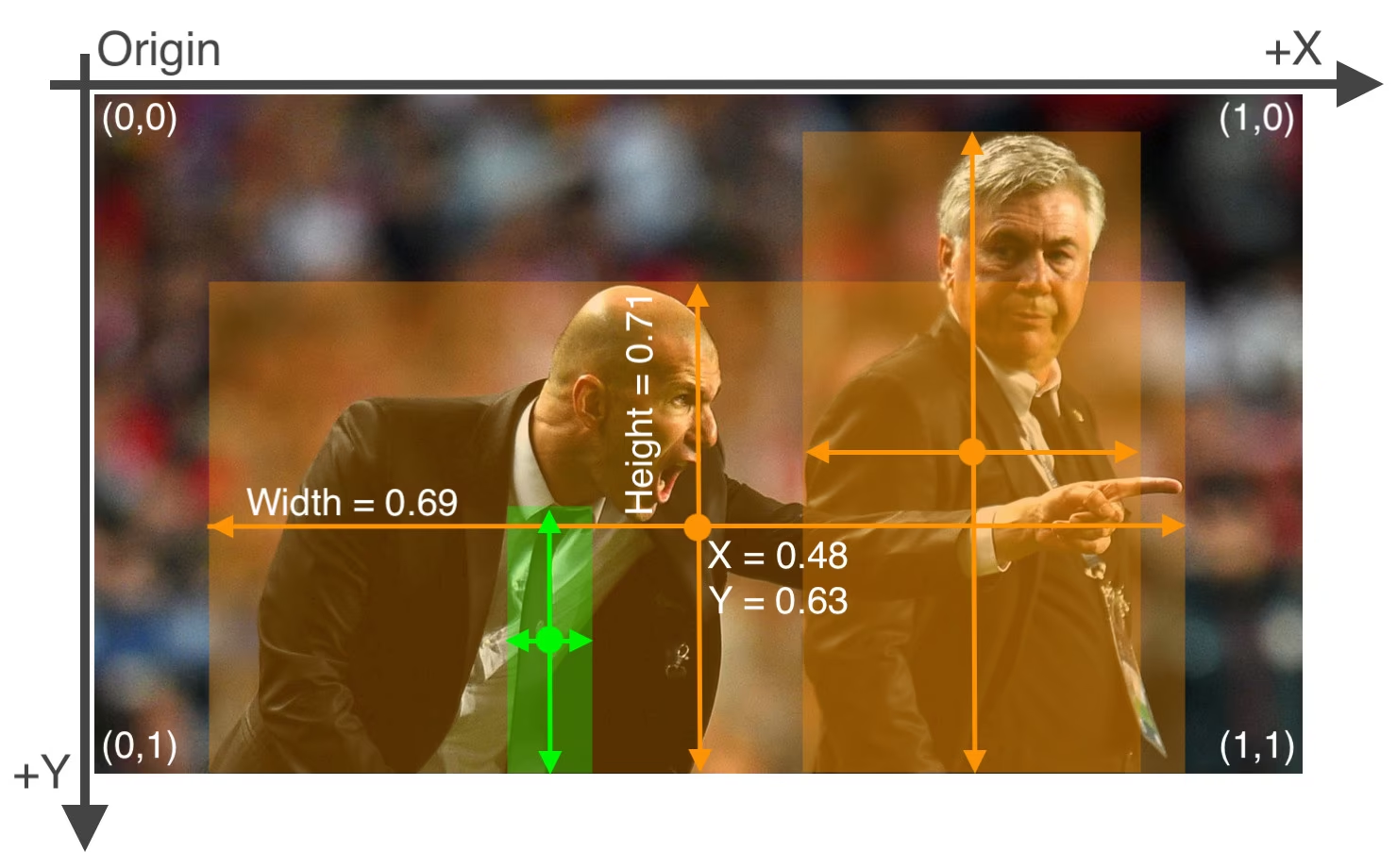

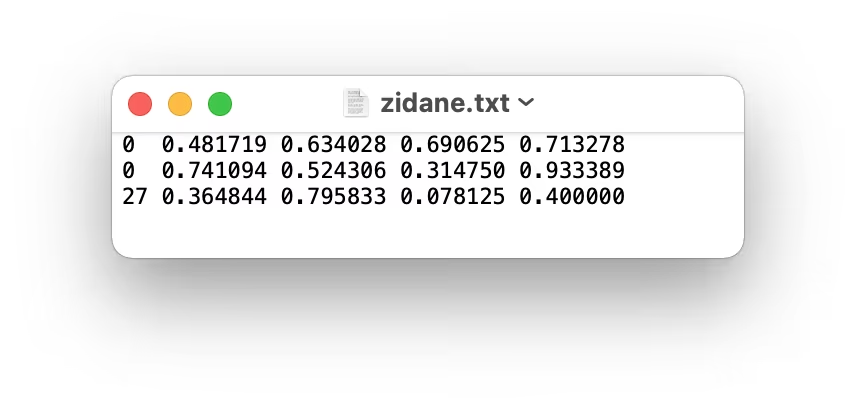

download: https://github.com/ultralytics/assets/releases/download/v0.0.0/coco8.zipLabels for this format should be exported to YOLO format with one *.txt file per image. If there are no objects in an image, no *.txt file is required. The *.txt file should be formatted with one row per object in class x_center y_center width height format. Box coordinates must be in normalized xywh format (from 0 to 1). If your boxes are in pixels, you should divide x_center and width by image width, and y_center and height by image height. Class numbers should be zero-indexed (start with 0).

The label file corresponding to the above image contains 2 persons (class 0) and a tie (class 27):

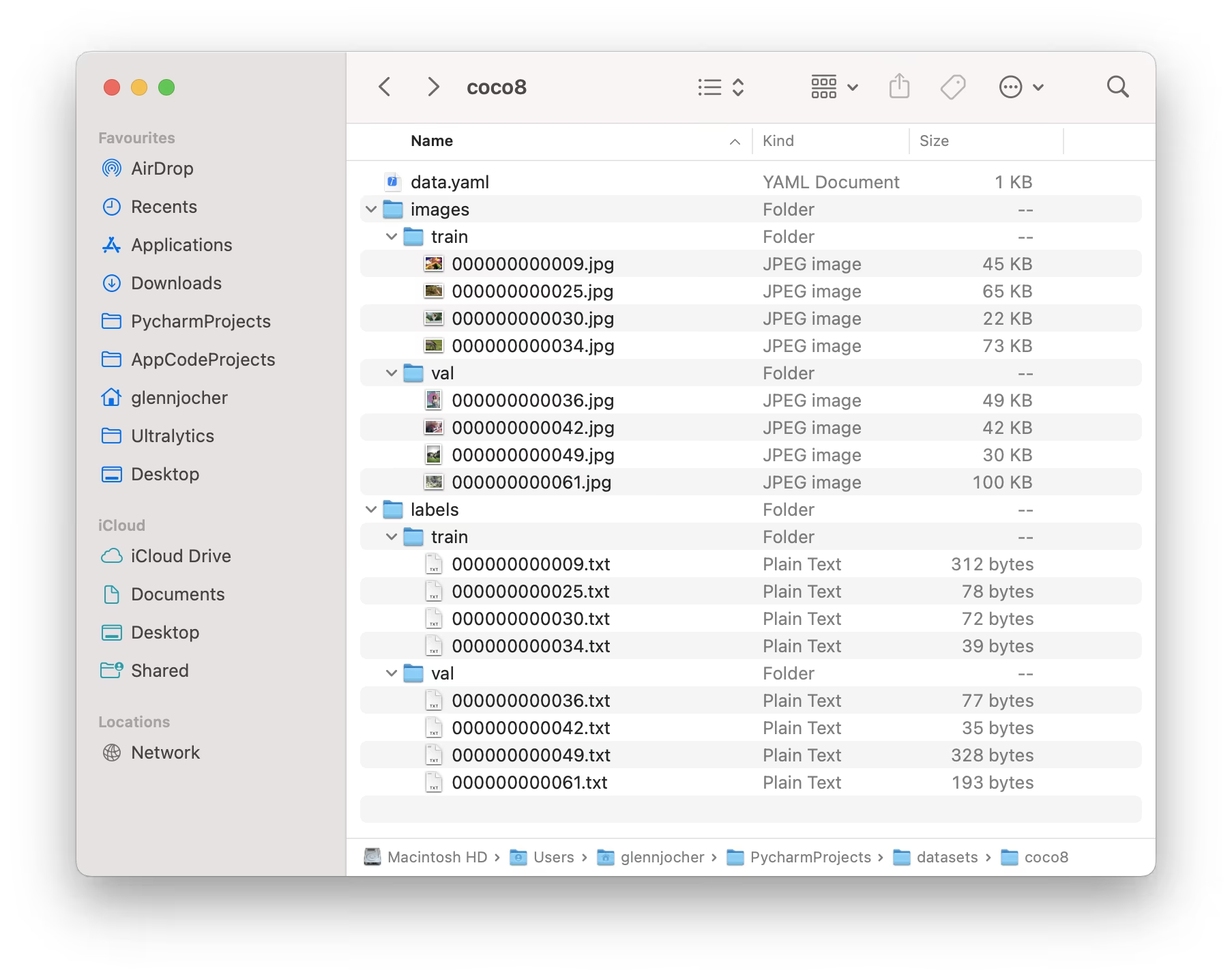

When using the Ultralytics YOLO format, organize your training and validation images and labels as shown in the COCO8 dataset example below.

Usage Example

Here's how you can use YOLO format datasets to train your model:

from ultralytics import YOLO

# Load a model

model = YOLO("yolo26n.pt") # load a pretrained model (recommended for training)

# Train the model

results = model.train(data="coco8.yaml", epochs=100, imgsz=640)Ultralytics NDJSON format

The NDJSON (Newline Delimited JSON) format provides an alternative way to define datasets for Ultralytics YOLO models. This format stores dataset metadata and annotations in a single file where each line contains a separate JSON object.

An NDJSON dataset file contains:

- Dataset record (first line): Contains dataset metadata including task type, class names, and general information

- Image records (subsequent lines): Contains individual image data including dimensions, annotations, and file paths

{

"type": "dataset",

"task": "detect",

"name": "Example",

"description": "COCO NDJSON example dataset",

"url": "https://app.ultralytics.com/user/datasets/example",

"class_names": { "0": "person", "1": "bicycle", "2": "car" },

"bytes": 426342,

"version": 0,

"created_at": "2024-01-01T00:00:00Z",

"updated_at": "2025-01-01T00:00:00Z"

}Usage Example

To use an NDJSON dataset with YOLO26, simply specify the path to the .ndjson file:

from ultralytics import YOLO

# Load a model

model = YOLO("yolo26n.pt")

# Train using NDJSON dataset

results = model.train(data="path/to/dataset.ndjson", epochs=100, imgsz=640)Advantages of NDJSON format

- Single file: All dataset information contained in one file

- Streaming: Can process large datasets line-by-line without loading everything into memory

- Cloud integration: Supports remote image URLs for cloud-based training

- Extensible: Easy to add custom metadata fields

- Version control: Single file format works well with git and version control systems

Supported Datasets

Here is a list of the supported datasets and a brief description for each:

- African-wildlife: A dataset featuring images of African wildlife, including buffalo, elephant, rhino, and zebras.

- Argoverse: A dataset containing 3D tracking and motion forecasting data from urban environments with rich annotations.

- Brain-tumor: A dataset for detecting brain tumors includes MRI or CT scan images with details on tumor presence, location, and characteristics.

- COCO: Common Objects in Context (COCO) is a large-scale object detection, segmentation, and captioning dataset with 80 object categories.

- COCO8: A smaller subset of the first 4 images from COCO train and COCO val, suitable for quick tests.

- COCO8-Grayscale: A grayscale version of COCO8 created by converting RGB to grayscale, useful for single-channel model evaluation.

- COCO8-Multispectral: A 10-channel multispectral version of COCO8 created by interpolating RGB wavelengths, useful for spectral-aware model evaluation.

- COCO12-Formats: A test dataset with 12 images covering all supported image formats (AVIF, BMP, DNG, HEIC, JP2, JPEG, JPG, MPO, PNG, TIF, TIFF, WebP) for validating image loading pipelines.

- COCO128: A smaller subset of the first 128 images from COCO train and COCO val, suitable for tests.

- Construction-PPE: A dataset featuring construction site workers with labeled safety gear such as helmets, vests, gloves, boots, and goggles, including missing-equipment annotations like no_helmet, no_googles for real-world compliance monitoring.

- Global Wheat 2020: A dataset containing images of wheat heads for the Global Wheat Challenge 2020.

- HomeObjects-3K: A dataset of indoor household items including beds, chairs, TVs, and more—ideal for applications in smart home automation, robotics, augmented reality, and room layout analysis.

- KITTI: A dataset featuring real-world driving scenes with stereo, LiDAR, and GPS/IMU data, used here for 2D object detection tasks such as identifying cars, pedestrians, and cyclists in urban, rural, and highway environments.

- LVIS: A large-scale object detection, segmentation, and captioning dataset with 1203 object categories.

- Medical-pills: A dataset featuring images of medical-pills, annotated for applications such as pharmaceutical quality assurance, pill sorting, and regulatory compliance.

- Objects365: A high-quality, large-scale dataset for object detection with 365 object categories and over 600K annotated images.

- OpenImagesV7: A comprehensive dataset by Google with 1.7M train images and 42k validation images.

- Roboflow 100: A diverse object detection benchmark with 100 datasets spanning seven imagery domains for comprehensive model evaluation.

- Signature: A dataset featuring images of various documents with annotated signatures, supporting document verification and fraud detection research.

- SKU-110K: A dataset featuring dense object detection in retail environments with over 11K images and 1.7 million bounding boxes.

- TT100K: Explore the Tsinghua-Tencent 100K (TT100K) traffic sign dataset with 100,000 street view images and 30,000+ annotated traffic signs for robust detection and classification.

- VisDrone: A dataset containing object detection and multi-object tracking data from drone-captured imagery with over 10K images and video sequences.

- VOC: The Pascal Visual Object Classes (VOC) dataset for object detection and segmentation with 20 object classes and over 11K images.

- xView: A dataset for object detection in overhead imagery with 60 object categories and over 1 million annotated objects.

Adding your own dataset

If you have your own dataset and would like to use it for training detection models with Ultralytics YOLO format, ensure that it follows the format specified above under "Ultralytics YOLO format". Convert your annotations to the required format and specify the paths, number of classes, and class names in the YAML configuration file.

Port or Convert Label Formats

COCO Dataset Format to YOLO Format

You can easily convert labels from the popular COCO dataset format to the YOLO format using the following code snippet:

from ultralytics.data.converter import convert_coco

convert_coco(labels_dir="path/to/coco/annotations/")This conversion tool can be used to convert the COCO dataset or any dataset in the COCO format to the Ultralytics YOLO format. The process transforms the JSON-based COCO annotations into the simpler text-based YOLO format, making it compatible with Ultralytics YOLO models.

Remember to double-check if the dataset you want to use is compatible with your model and follows the necessary format conventions. Properly formatted datasets are crucial for training successful object detection models.

FAQ

What is the Ultralytics YOLO dataset format and how to structure it?

The Ultralytics YOLO format is a structured configuration for defining datasets in your training projects. It involves setting paths to your training, validation, and testing images and corresponding labels. For example:

# Ultralytics 🚀 AGPL-3.0 License - https://ultralytics.com/license

# COCO8 dataset (first 8 images from COCO train2017) by Ultralytics

# Documentation: https://docs.ultralytics.com/datasets/detect/coco8/

# Example usage: yolo train data=coco8.yaml

# parent

# ├── ultralytics

# └── datasets

# └── coco8 ← downloads here (1 MB)

# Train/val/test sets as 1) dir: path/to/imgs, 2) file: path/to/imgs.txt, or 3) list: [path/to/imgs1, path/to/imgs2, ..]

path: coco8 # dataset root dir

train: images/train # train images (relative to 'path') 4 images

val: images/val # val images (relative to 'path') 4 images

test: # test images (optional)

# Classes

names:

0: person

1: bicycle

2: car

3: motorcycle

4: airplane

5: bus

6: train

7: truck

8: boat

9: traffic light

10: fire hydrant

11: stop sign

12: parking meter

13: bench

14: bird

15: cat

16: dog

17: horse

18: sheep

19: cow

20: elephant

21: bear

22: zebra

23: giraffe

24: backpack

25: umbrella

26: handbag

27: tie

28: suitcase

29: frisbee

30: skis

31: snowboard

32: sports ball

33: kite

34: baseball bat

35: baseball glove

36: skateboard

37: surfboard

38: tennis racket

39: bottle

40: wine glass

41: cup

42: fork

43: knife

44: spoon

45: bowl

46: banana

47: apple

48: sandwich

49: orange

50: broccoli

51: carrot

52: hot dog

53: pizza

54: donut

55: cake

56: chair

57: couch

58: potted plant

59: bed

60: dining table

61: toilet

62: tv

63: laptop

64: mouse

65: remote

66: keyboard

67: cell phone

68: microwave

69: oven

70: toaster

71: sink

72: refrigerator

73: book

74: clock

75: vase

76: scissors

77: teddy bear

78: hair drier

79: toothbrush

# Download script/URL (optional)

download: https://github.com/ultralytics/assets/releases/download/v0.0.0/coco8.zipLabels are saved in *.txt files with one file per image, formatted as class x_center y_center width height with normalized coordinates. For a detailed guide, see the COCO8 dataset example.

How do I convert a COCO dataset to the YOLO format?

You can convert a COCO dataset to the YOLO format using the Ultralytics conversion tools. Here's a quick method:

from ultralytics.data.converter import convert_coco

convert_coco(labels_dir="path/to/coco/annotations/")This code will convert your COCO annotations to YOLO format, enabling seamless integration with Ultralytics YOLO models. For additional details, visit the Port or Convert Label Formats section.

Which datasets are supported by Ultralytics YOLO for object detection?

Ultralytics YOLO supports a wide range of datasets, including:

Each dataset page provides detailed information on the structure and usage tailored for efficient YOLO26 training. Explore the full list in the Supported Datasets section.

How do I start training a YOLO26 model using my dataset?

To start training a YOLO26 model, ensure your dataset is formatted correctly and the paths are defined in a YAML file. Use the following script to begin training:

from ultralytics import YOLO

model = YOLO("yolo26n.pt") # Load a pretrained model

results = model.train(data="path/to/your_dataset.yaml", epochs=100, imgsz=640)Refer to the Usage section for more details on utilizing different modes, including CLI commands.

Where can I find practical examples of using Ultralytics YOLO for object detection?

Ultralytics provides numerous examples and practical guides for using YOLO26 in diverse applications. For a comprehensive overview, visit the Ultralytics Blog where you can find case studies, detailed tutorials, and community stories showcasing object detection, segmentation, and more with YOLO26. For specific examples, check the Usage section in the documentation.