VOC Dataset

The PASCAL VOC (Visual Object Classes) dataset is a well-known dataset for object detection, segmentation, and classification. It was designed to encourage research on a wide variety of object categories and remains a common benchmark for computer vision models, which makes it a standard reference for researchers and developers working on these tasks.


Key Features

- The VOC dataset includes two main challenges: VOC2007 and VOC2012.

- The dataset comprises 20 object categories, including common objects like cars, bicycles, and animals, as well as more specific categories such as boats, sofas, and dining tables.

- Annotations include object bounding boxes and class labels for object detection and classification tasks, plus segmentation masks (provided for a subset of images) for segmentation tasks.

- VOC provides standardized evaluation metrics like mean Average Precision (mAP) for object detection and classification, making it suitable for comparing model performance.
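VOC stores its annotations as one XML file per image, with a `size` element and one `object` element per labeled instance (class `name`, a `difficult` flag, and a `bndbox` with `xmin`/`ymin`/`xmax`/`ymax`). The sketch below reads such a file with the standard library; the XML snippet and the `parse_voc_objects` helper are hypothetical illustrations, not part of the dataset or of Ultralytics, and the real files carry more fields (such as `pose` and `truncated`) that are ignored here.

```python
import xml.etree.ElementTree as ET

# Hypothetical, trimmed VOC-style annotation for illustration only (not a real file from the dataset)
SAMPLE_XML = """
<annotation>
  <filename>example.jpg</filename>
  <size><width>353</width><height>500</height><depth>3</depth></size>
  <object>
    <name>dog</name>
    <difficult>0</difficult>
    <bndbox><xmin>48</xmin><ymin>240</ymin><xmax>195</xmax><ymax>371</ymax></bndbox>
  </object>
  <object>
    <name>person</name>
    <difficult>1</difficult>
    <bndbox><xmin>8</xmin><ymin>12</ymin><xmax>352</xmax><ymax>498</ymax></bndbox>
  </object>
</annotation>
"""


def parse_voc_objects(xml_text, skip_difficult=True):
    """Return (image_size, objects), where each object is (class_name, (xmin, ymin, xmax, ymax))."""
    root = ET.fromstring(xml_text.strip())
    size = root.find("size")
    image_size = (int(size.find("width").text), int(size.find("height").text))
    objects = []
    for obj in root.iter("object"):
        difficult = obj.find("difficult")
        # Objects flagged as difficult are excluded from standard VOC evaluation
        if skip_difficult and difficult is not None and int(difficult.text) == 1:
            continue
        box = obj.find("bndbox")
        coords = tuple(float(box.find(k).text) for k in ("xmin", "ymin", "xmax", "ymax"))
        objects.append((obj.find("name").text, coords))
    return image_size, objects


size, objs = parse_voc_objects(SAMPLE_XML)
print(size, objs)  # (353, 500) [('dog', (48.0, 240.0, 195.0, 371.0))]
```

Skipping difficult objects by default mirrors what the Ultralytics download script does when it writes YOLO labels.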

Dataset Structure

The VOC dataset is split into three subsets:

- Train: This subset contains images for training object detection, segmentation, and classification models.

- Validation: This subset has images used for validation purposes during model training.

- Test: This subset consists of images used for testing and benchmarking trained models. Ground truth annotations for the VOC2012 test set are not publicly available; results were historically submitted to the PASCAL VOC evaluation server for scoring. The VOC2007 test annotations, by contrast, were released publicly, which is why the Ultralytics configuration uses test2007 as its validation set.

Applications

The VOC dataset is widely used for training and evaluating deep learning models in object detection (such as Ultralytics YOLO, Faster R-CNN, and SSD), instance segmentation (such as Mask R-CNN), and image classification. The dataset's diverse set of object categories, large number of annotated images, and standardized evaluation metrics make it an essential resource for computer vision researchers and practitioners.

Dataset YAML

A YAML (YAML Ain't Markup Language) file defines the dataset configuration, including the dataset's paths, class names, and other relevant information. For the VOC dataset, the VOC.yaml file is maintained at https://github.com/ultralytics/ultralytics/blob/main/ultralytics/cfg/datasets/VOC.yaml.
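In VOC.yaml, `path` names the dataset root and each `train`/`val`/`test` entry is a directory relative to it. Ultralytics then locates the label file for each image by replacing the last `images` directory in the image path with `labels` and switching the extension to `.txt`. The sketch below mimics both conventions with `pathlib`; `resolve_split` and `image_to_label_path` are hypothetical helpers rather than Ultralytics functions, and the `datasets/VOC` root is an assumed location (a relative `path` is typically resolved against the configured datasets directory).

```python
from pathlib import PurePosixPath

# Hypothetical helpers illustrating the layout conventions; not Ultralytics' own functions.


def resolve_split(root, entries):
    """Resolve split entries (a single str or a list of str) relative to the dataset root."""
    if isinstance(entries, str):
        entries = [entries]
    return [PurePosixPath(root) / e for e in entries]


def image_to_label_path(image_path):
    """Swap the last 'images' directory for 'labels' and use a .txt suffix (raises ValueError if absent)."""
    parts = list(PurePosixPath(image_path).parts)
    # Index of the last 'images' component, found by searching the reversed parts
    idx = len(parts) - 1 - parts[::-1].index("images")
    parts[idx] = "labels"
    return PurePosixPath(*parts).with_suffix(".txt")


# Assumed dataset root "datasets/VOC"; split entries copied from the train list in VOC.yaml
train_dirs = resolve_split("datasets/VOC", ["images/train2012", "images/train2007", "images/val2012", "images/val2007"])
print(train_dirs[0])  # datasets/VOC/images/train2012
print(image_to_label_path("datasets/VOC/images/train2012/2008_000008.jpg"))
# datasets/VOC/labels/train2012/2008_000008.txt
```

This pairing is why the download script writes its label files into a `labels/` tree that mirrors the `images/` tree.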

ultralytics/cfg/datasets/VOC.yaml

```yaml
# Ultralytics 🚀 AGPL-3.0 License - https://ultralytics.com/license

# PASCAL VOC dataset http://host.robots.ox.ac.uk/pascal/VOC by University of Oxford
# Documentation: https://docs.ultralytics.com/datasets/detect/voc/
# Example usage: yolo train data=VOC.yaml
# parent
# ├── ultralytics
# └── datasets
#     └── VOC ← downloads here (2.8 GB)

# Train/val/test sets as 1) dir: path/to/imgs, 2) file: path/to/imgs.txt, or 3) list: [path/to/imgs1, path/to/imgs2, ..]
path: VOC
train: # train images (relative to 'path') 16551 images
  - images/train2012
  - images/train2007
  - images/val2012
  - images/val2007
val: # val images (relative to 'path') 4952 images
  - images/test2007
test: # test images (optional)
  - images/test2007

# Classes
names:
  0: aeroplane
  1: bicycle
  2: bird
  3: boat
  4: bottle
  5: bus
  6: car
  7: cat
  8: chair
  9: cow
  10: diningtable
  11: dog
  12: horse
  13: motorbike
  14: person
  15: pottedplant
  16: sheep
  17: sofa
  18: train
  19: tvmonitor

# Download script/URL (optional) ---------------------------------------------------------------------------------------
download: |
  import xml.etree.ElementTree as ET
  from pathlib import Path

  from ultralytics.utils.downloads import download
  from ultralytics.utils import ASSETS_URL, TQDM

  def convert_label(path, lb_path, year, image_id):
      """Converts XML annotations from VOC format to YOLO format by extracting bounding boxes and class IDs."""
      def convert_box(size, box):
          dw, dh = 1.0 / size[0], 1.0 / size[1]
          x, y, w, h = (box[0] + box[1]) / 2.0 - 1, (box[2] + box[3]) / 2.0 - 1, box[1] - box[0], box[3] - box[2]
          return x * dw, y * dh, w * dw, h * dh

      with open(path / f"VOC{year}/Annotations/{image_id}.xml") as in_file, open(lb_path, "w", encoding="utf-8") as out_file:
          tree = ET.parse(in_file)
          root = tree.getroot()
          size = root.find("size")
          w = int(size.find("width").text)
          h = int(size.find("height").text)

          names = list(yaml["names"].values())  # names list
          for obj in root.iter("object"):
              cls = obj.find("name").text
              if cls in names and int(obj.find("difficult").text) != 1:
                  xmlbox = obj.find("bndbox")
                  bb = convert_box((w, h), [float(xmlbox.find(x).text) for x in ("xmin", "xmax", "ymin", "ymax")])
                  cls_id = names.index(cls)  # class id
                  out_file.write(" ".join(str(a) for a in (cls_id, *bb)) + "\n")

  # Download
  dir = Path(yaml["path"])  # dataset root dir
  urls = [
      f"{ASSETS_URL}/VOCtrainval_06-Nov-2007.zip",  # 446MB, 5012 images
      f"{ASSETS_URL}/VOCtest_06-Nov-2007.zip",  # 438MB, 4953 images
      f"{ASSETS_URL}/VOCtrainval_11-May-2012.zip",  # 1.95GB, 17126 images
  ]
  download(urls, dir=dir / "images", threads=3, exist_ok=True)  # download and unzip over existing (required)

  # Convert
  path = dir / "images/VOCdevkit"
  for year, image_set in ("2012", "train"), ("2012", "val"), ("2007", "train"), ("2007", "val"), ("2007", "test"):
      imgs_path = dir / "images" / f"{image_set}{year}"
      lbs_path = dir / "labels" / f"{image_set}{year}"
      imgs_path.mkdir(exist_ok=True, parents=True)
      lbs_path.mkdir(exist_ok=True, parents=True)

      with open(path / f"VOC{year}/ImageSets/Main/{image_set}.txt") as f:
          image_ids = f.read().strip().split()
      for id in TQDM(image_ids, desc=f"{image_set}{year}"):
          f = path / f"VOC{year}/JPEGImages/{id}.jpg"  # old img path
          lb_path = (lbs_path / f.name).with_suffix(".txt")  # new label path
          f.rename(imgs_path / f.name)  # move image
          convert_label(path, lb_path, year, id)  # convert labels to YOLO format
```
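The `convert_box` helper in the download script turns VOC corner coordinates (passed in `xmin, xmax, ymin, ymax` order) into YOLO's normalized `x_center, y_center, width, height` format. The `- 1` on the center reflects the fact that VOC pixel coordinates are 1-based. Below is a standalone copy of that logic, split into steps with comments, plus a worked example on made-up box values.

```python
def convert_box(size, box):
    """Convert a VOC box (xmin, xmax, ymin, ymax) into normalized YOLO (x_center, y_center, width, height)."""
    dw, dh = 1.0 / size[0], 1.0 / size[1]
    # Box center in pixels; subtracting 1 shifts VOC's 1-based coordinates to 0-based
    x = (box[0] + box[1]) / 2.0 - 1
    y = (box[2] + box[3]) / 2.0 - 1
    # Width and height in pixels; a shared offset on both corners cancels out, so none is applied
    w = box[1] - box[0]
    h = box[3] - box[2]
    # Normalize by image width and height so the values are relative to the image size
    return x * dw, y * dh, w * dw, h * dh


# Worked example (hypothetical numbers): a 500x400 image with a box spanning x 101..301 and y 51..251
print(convert_box((500, 400), (101.0, 301.0, 51.0, 251.0)))  # approximately (0.4, 0.375, 0.4, 0.5)
```

Here the center is (200, 150) pixels and the box is 200x200 pixels, so dividing by the 500x400 image size gives the normalized values.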

Usage

To train a YOLO26n model on the VOC dataset for 100 epochs with an image size of 640, you can use the following code snippets. For a comprehensive list of available arguments, refer to the model Training page.

Train Example

```python
from ultralytics import YOLO

# Load a model
model = YOLO("yolo26n.pt")  # load a pretrained model (recommended for training)

# Train the model
results = model.train(data="VOC.yaml", epochs=100, imgsz=640)
```

```bash
# Start training from a pretrained *.pt model
yolo detect train data=VOC.yaml model=yolo26n.pt epochs=100 imgsz=640
```

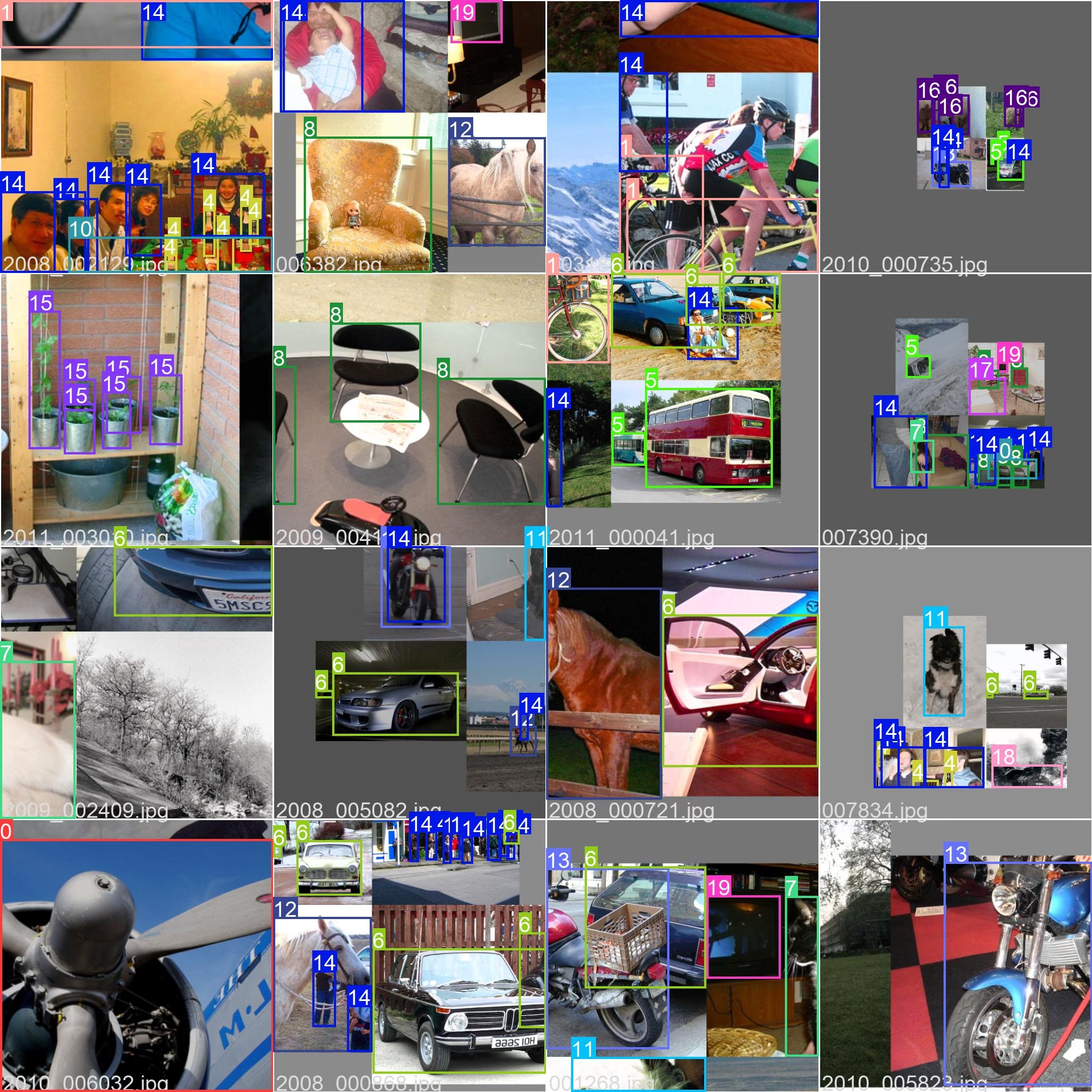

Sample Images and Annotations

The VOC dataset contains a diverse set of images with various object categories and complex scenes. Here are some examples of images from the dataset, along with their corresponding annotations:

- Mosaiced Image: This image demonstrates a training batch composed of mosaiced dataset images. Mosaicing is a technique used during training that combines multiple images into a single image to increase the variety of objects and scenes within each training batch. This helps improve the model's ability to generalize to different object sizes, aspect ratios, and contexts.

The example showcases the variety and complexity of the images in the VOC dataset and the benefits of using mosaicing during the training process.
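The core idea of mosaicing can be shown with a fixed 2x2 grid: four tiles are pasted into one larger canvas, and each tile's boxes are shifted by that tile's offset. This is a minimal sketch assuming equal-size square tiles represented as nested lists; `mosaic_2x2` is a hypothetical helper, and the actual Ultralytics mosaic transform also randomizes the mosaic center and crops the result, which is omitted here.

```python
# Simplified 2x2 mosaic: fixed grid, no random center, scaling, or cropping (illustration only).


def mosaic_2x2(images, boxes_per_image, tile):
    """Tile four tile x tile images into a 2*tile x 2*tile canvas and shift their pixel boxes.

    images: four 2D lists (rows of pixel values), each tile x tile.
    boxes_per_image: four lists of (cls, x1, y1, x2, y2) in pixel coordinates.
    """
    canvas = [[0] * (2 * tile) for _ in range(2 * tile)]
    # (dx, dy) offsets for top-left, top-right, bottom-left, bottom-right tiles
    offsets = [(0, 0), (tile, 0), (0, tile), (tile, tile)]
    merged = []
    for img, boxes, (dx, dy) in zip(images, boxes_per_image, offsets):
        # Paste the tile row by row into its quadrant
        for r in range(tile):
            canvas[dy + r][dx : dx + tile] = img[r]
        # Move each box into canvas coordinates
        for cls, x1, y1, x2, y2 in boxes:
            merged.append((cls, x1 + dx, y1 + dy, x2 + dx, y2 + dy))
    return canvas, merged


# Four 2x2 "images" filled with 1, 2, 3, 4; class ids 14 (person) and 6 (car) follow VOC.yaml
tiles = [[[i] * 2 for _ in range(2)] for i in range(1, 5)]
boxes = [[(14, 0, 0, 1, 1)], [], [(6, 0, 0, 2, 2)], []]
canvas, merged = mosaic_2x2(tiles, boxes, tile=2)
for row in canvas:
    print(row)
print(merged)  # [(14, 0, 0, 1, 1), (6, 0, 2, 2, 4)]
```

Because every training sample now contains objects from several source images at different positions, a single batch exposes the model to more object placements and contexts.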

Citations and Acknowledgments

If you use the VOC dataset in your research or development work, please cite the following paper:

```bibtex
@misc{everingham2010pascal,
      title={The PASCAL Visual Object Classes (VOC) Challenge},
      author={Mark Everingham and Luc Van Gool and Christopher K. I. Williams and John Winn and Andrew Zisserman},
      year={2010},
      eprint={0909.5206},
      archivePrefix={arXiv},
      primaryClass={cs.CV}
}
```

We would like to acknowledge the PASCAL VOC Consortium for creating and maintaining this valuable resource for the computer vision community. For more information about the VOC dataset and its creators, visit the PASCAL VOC dataset website.

FAQ

What is the PASCAL VOC dataset and why is it important for computer vision tasks?

The PASCAL VOC (Visual Object Classes) dataset is a renowned benchmark for object detection, segmentation, and classification in computer vision. It includes comprehensive annotations like bounding boxes, class labels, and segmentation masks across 20 different object categories. Researchers use it widely to evaluate the performance of models like Faster R-CNN, YOLO, and Mask R-CNN due to its standardized evaluation metrics such as mean Average Precision (mAP).

How do I train a YOLO26 model using the VOC dataset?

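To train a YOLO26 model on the VOC dataset, point training at the VOC.yaml dataset configuration, which ships with the ultralytics package; if the dataset is not found locally, the download script in that file fetches and converts it. Here's an example that trains a YOLO26n model for 100 epochs with an image size of 640: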

Train Example

```python
from ultralytics import YOLO

# Load a model
model = YOLO("yolo26n.pt")  # load a pretrained model (recommended for training)

# Train the model
results = model.train(data="VOC.yaml", epochs=100, imgsz=640)
```

```bash
# Start training from a pretrained *.pt model
yolo detect train data=VOC.yaml model=yolo26n.pt epochs=100 imgsz=640
```

What are the primary challenges included in the VOC dataset?

The VOC dataset includes two main challenges: VOC2007 and VOC2012. These challenges test object detection, segmentation, and classification across 20 diverse object categories. Every image is annotated with bounding boxes and class labels, and a subset of images also has pixel-level segmentation masks. The challenges provide standardized metrics like mAP, facilitating the comparison and benchmarking of different computer vision models.

How does the PASCAL VOC dataset enhance model benchmarking and evaluation?

The PASCAL VOC dataset supports model benchmarking and evaluation through its detailed annotations and standardized metrics like mean Average Precision (mAP). These metrics are central to assessing object detection and classification models, and the dataset's diverse, cluttered real-world scenes test how well models hold up across a range of conditions.
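Per-class average precision can be computed from ranked detections. VOC2007 used 11-point interpolated AP, while VOC2010 onward uses the area under the precision/recall curve after making precision monotonically decreasing; mAP is then the mean AP over the 20 classes. The sketch below implements both variants, assuming that detections have already been matched to ground truth (IoU of at least 0.5, each ground-truth box matched at most once, difficult objects ignored). `voc_ap` is a hypothetical helper, not the official devkit code or Ultralytics' metric implementation, whose reported mAP50 may differ slightly.

```python
def voc_ap(scored_matches, num_gt, eleven_point=False):
    """Compute PASCAL VOC-style average precision for one class.

    scored_matches: list of (confidence, is_true_positive) for every detection of this class,
        with matching to ground truth already done.
    num_gt: number of non-difficult ground-truth boxes of this class (must be > 0).
    """
    # Rank detections from most to least confident and build cumulative precision/recall
    ranked = sorted(scored_matches, key=lambda m: m[0], reverse=True)
    tp = fp = 0
    recalls, precisions = [], []
    for _, is_tp in ranked:
        tp += int(is_tp)
        fp += int(not is_tp)
        recalls.append(tp / num_gt)
        precisions.append(tp / (tp + fp))

    if eleven_point:
        # VOC2007: average the max precision at recall >= t for t in 0.0, 0.1, ..., 1.0
        total = 0.0
        for t in (i / 10 for i in range(11)):
            total += max((p for r, p in zip(recalls, precisions) if r >= t), default=0.0)
        return total / 11

    # VOC2010+: area under the precision envelope (precision made monotonically decreasing)
    mrec = [0.0] + recalls + [1.0]
    mpre = [0.0] + precisions + [0.0]
    for i in range(len(mpre) - 2, -1, -1):
        mpre[i] = max(mpre[i], mpre[i + 1])
    return sum((mrec[i + 1] - mrec[i]) * mpre[i + 1] for i in range(len(mrec) - 1))


# Three detections for a class with 2 ground-truth boxes: hit, miss, hit
dets = [(0.9, True), (0.8, False), (0.7, True)]
print(voc_ap(dets, num_gt=2))  # about 0.8333 (area under the envelope)
print(voc_ap(dets, num_gt=2, eleven_point=True))  # about 0.8485 (11-point)
```

In this example recall reaches 0.5 at precision 1.0 and then 1.0 at precision 2/3, so the all-point area is 0.5 * 1.0 + 0.5 * 2/3 = 5/6.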

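How do I use the VOC dataset for segmentation in YOLO models?

VOC.yaml is configured for object detection: its download script converts only the bounding-box annotations into YOLO labels, so it cannot be used as-is to train segmentation models. Ultralytics YOLO segmentation models such as yolo26n-seg.pt perform instance segmentation and expect labels in the YOLO segmentation format, where each object is a class index followed by normalized polygon coordinates. To train on VOC segmentation data, you would first convert the VOC segmentation masks into polygon labels in that format, then reference them from a segmentation dataset YAML file that defines the dataset paths and class names, and train with a segmentation-specific model like yolo26n-seg.pt instead of the detection model.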
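In the YOLO segmentation label format, each line describes one object: the class index followed by the polygon's x y pairs, each normalized by the image width and height. The sketch below formats one such line from pixel-space polygon points. It assumes the polygon has already been extracted from a VOC mask (for example by contour tracing, which is not shown), and `polygon_to_yolo_seg_line` is a hypothetical helper rather than an Ultralytics function.

```python
def polygon_to_yolo_seg_line(class_id, polygon, img_w, img_h):
    """Format one object as a YOLO segmentation label line: class id, then normalized x y pairs."""
    if len(polygon) < 3:
        raise ValueError("a polygon needs at least 3 points")
    coords = []
    for x, y in polygon:
        # Normalize x by width and y by height
        coords += [x / img_w, y / img_h]
    return " ".join([str(class_id)] + [f"{c:.6f}" for c in coords])


# Hypothetical triangle outline for a 'cat' (class 7 in VOC.yaml) in a 200x100 image
print(polygon_to_yolo_seg_line(7, [(20, 10), (100, 90), (180, 10)], 200, 100))
# 7 0.100000 0.100000 0.500000 0.900000 0.900000 0.100000
```

One such line per object instance, written to the `.txt` file that matches each image, gives a segmentation model the per-instance outlines it needs.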