SKU-110k Dataset

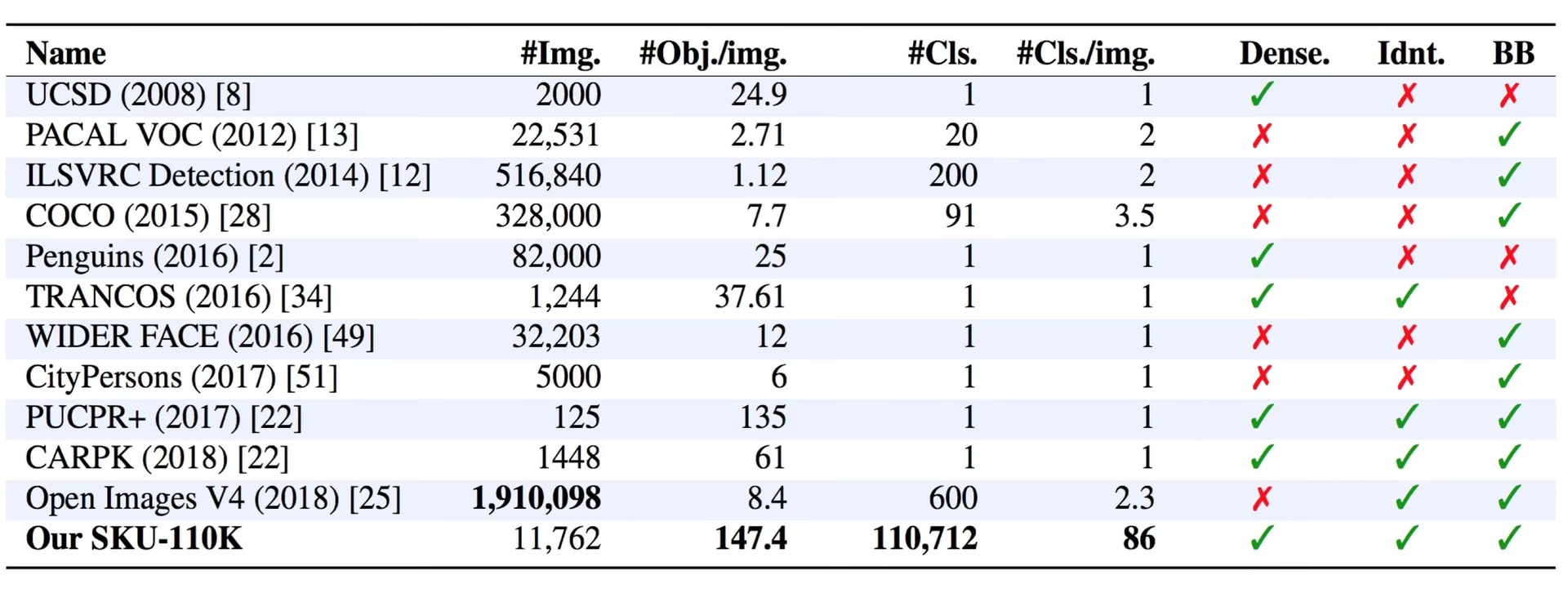

The SKU-110k dataset is a collection of densely packed retail shelf images designed to support research in object detection. Developed by Eran Goldman et al., the dataset covers over 110,000 unique stock keeping unit (SKU) categories, with objects that often look similar or even identical and sit close together.

Key Features

- SKU-110k contains images of store shelves from around the world, featuring densely packed objects that pose challenges for state-of-the-art object detectors.

- The dataset includes over 110,000 unique SKU categories, providing a diverse range of object appearances.

- Annotations provide a bounding box for each object. In the Ultralytics dataset YAML, every box is mapped to a single class, object, rather than to a per-SKU label.

Dataset Structure

The SKU-110k dataset is organized into three main subsets:

- Training set: This subset contains 8,219 images and annotations used for training object detection models.

- Validation set: This subset consists of 588 images and annotations used for model validation during training.

- Test set: This subset includes 2,936 images designed for the final evaluation of trained object detection models.
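Once the dataset has been downloaded and converted, the split sizes can be checked against the figures above. The following is a minimal sketch, assuming the dataset root contains the `train.txt`, `val.txt`, and `test.txt` image lists named in the dataset YAML, with one image path per line; the function name `count_split_images` is illustrative and not part of Ultralytics.

```python
from pathlib import Path


def count_split_images(dataset_root):
    """Count the image paths listed in each split file under dataset_root."""
    counts = {}
    for split in ("train", "val", "test"):
        list_file = Path(dataset_root) / f"{split}.txt"
        if not list_file.exists():
            continue  # split list not present, e.g. dataset not converted yet
        with open(list_file, encoding="utf-8") as f:
            # One image path per line; ignore blank lines.
            counts[split] = sum(1 for line in f if line.strip())
    return counts
```

Calling `count_split_images("datasets/SKU-110K")` (the directory name is an assumption about where the download landed) on a complete copy should report counts that match the three subsets listed above.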

Applications

The SKU-110k dataset is widely used for training and evaluating deep learning models in object detection tasks, especially in densely packed scenes such as retail shelf displays. Its applications include:

- Retail inventory management and automation

- Product recognition in e-commerce platforms

- Planogram compliance verification

- Self-checkout systems in stores

- Robotic picking and sorting in warehouses

The dataset's diverse set of SKU categories and densely packed object arrangements make it a valuable resource for researchers and practitioners in the field of computer vision.

Dataset YAML

A YAML (YAML Ain't Markup Language) file defines the dataset configuration: the dataset's paths, its classes, and an optional download script. For the SKU-110K dataset, the SKU-110K.yaml file is maintained at https://github.com/ultralytics/ultralytics/blob/main/ultralytics/cfg/datasets/SKU-110K.yaml.

ultralytics/cfg/datasets/SKU-110K.yaml

    # Ultralytics 🚀 AGPL-3.0 License - https://ultralytics.com/license

    # SKU-110K retail items dataset https://github.com/eg4000/SKU110K_CVPR19 by Trax Retail
    # Documentation: https://docs.ultralytics.com/datasets/detect/sku-110k/
    # Example usage: yolo train data=SKU-110K.yaml
    # parent
    # ├── ultralytics
    # └── datasets
    #     └── SKU-110K ← downloads here (13.6 GB)

    # Train/val/test sets as 1) dir: path/to/imgs, 2) file: path/to/imgs.txt, or 3) list: [path/to/imgs1, path/to/imgs2, ..]
    path: SKU-110K # dataset root dir
    train: train.txt # train images (relative to 'path') 8219 images
    val: val.txt # val images (relative to 'path') 588 images
    test: test.txt # test images (optional) 2936 images

    # Classes
    names:
      0: object

    # Download script/URL (optional) ---------------------------------------------------------------------------------------
    download: |
      import shutil
      from pathlib import Path

      import numpy as np
      import polars as pl

      from ultralytics.utils import TQDM
      from ultralytics.utils.downloads import download
      from ultralytics.utils.ops import xyxy2xywh

      # Download
      dir = Path(yaml["path"])  # dataset root dir
      parent = Path(dir.parent)  # download dir
      urls = ["http://trax-geometry.s3.amazonaws.com/cvpr_challenge/SKU110K_fixed.tar.gz"]
      download(urls, dir=parent)

      # Rename directories
      if dir.exists():
          shutil.rmtree(dir)
      (parent / "SKU110K_fixed").rename(dir)  # rename dir
      (dir / "labels").mkdir(parents=True, exist_ok=True)  # create labels dir

      # Convert labels
      names = "image", "x1", "y1", "x2", "y2", "class", "image_width", "image_height"  # column names
      for d in "annotations_train.csv", "annotations_val.csv", "annotations_test.csv":
          x = pl.read_csv(dir / "annotations" / d, has_header=False, new_columns=names, infer_schema_length=None).to_numpy()  # annotations
          images, unique_images = x[:, 0], np.unique(x[:, 0])
          with open((dir / d).with_suffix(".txt").__str__().replace("annotations_", ""), "w", encoding="utf-8") as f:
              f.writelines(f"./images/{s}\n" for s in unique_images)
          for im in TQDM(unique_images, desc=f"Converting {dir / d}"):
              cls = 0  # single-class dataset
              with open((dir / "labels" / im).with_suffix(".txt"), "a", encoding="utf-8") as f:
                  for r in x[images == im]:
                      w, h = r[6], r[7]  # image width, height
                      xywh = xyxy2xywh(np.array([[r[1] / w, r[2] / h, r[3] / w, r[4] / h]]))[0]  # instance
                      f.write(f"{cls} {xywh[0]:.5f} {xywh[1]:.5f} {xywh[2]:.5f} {xywh[3]:.5f}\n")  # write label
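The label-conversion step of the download script turns each corner-format box (x1, y1, x2, y2, in pixels) into a normalized center-format box (center x, center y, width, height). The arithmetic can be sketched in pure Python, without the Ultralytics `xyxy2xywh` helper. The function names `xyxy_to_yolo` and `format_label` and the sample box are illustrative assumptions, not part of the library.

```python
def xyxy_to_yolo(x1, y1, x2, y2, image_width, image_height):
    """Convert a pixel-space corner box to a normalized (xc, yc, w, h) box."""
    xc = (x1 + x2) / 2 / image_width   # box center x as a fraction of image width
    yc = (y1 + y2) / 2 / image_height  # box center y as a fraction of image height
    bw = (x2 - x1) / image_width       # box width as a fraction of image width
    bh = (y2 - y1) / image_height      # box height as a fraction of image height
    return xc, yc, bw, bh


def format_label(cls, box):
    """Format one label line: class index followed by four 5-decimal values."""
    return f"{cls} " + " ".join(f"{v:.5f}" for v in box)


# Hypothetical box in a 1000x2000 image; class 0 because the dataset is single-class.
line = format_label(0, xyxy_to_yolo(100, 200, 300, 600, 1000, 2000))
print(line)  # 0 0.20000 0.20000 0.20000 0.20000
```

In this example the box is centered at 20% of the image width and height and spans 20% of each dimension, which is what the four values on the label line encode.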

Usage

To train a YOLO26n model on the SKU-110K dataset for 100 epochs with an image size of 640, you can use the following code snippets. For a comprehensive list of available arguments, refer to the model Training page.

Train Example

Python:

    from ultralytics import YOLO

    # Load a model
    model = YOLO("yolo26n.pt")  # load a pretrained model (recommended for training)

    # Train the model
    results = model.train(data="SKU-110K.yaml", epochs=100, imgsz=640)

CLI:

    # Start training from a pretrained *.pt model
    yolo detect train data=SKU-110K.yaml model=yolo26n.pt epochs=100 imgsz=640

Sample Data and Annotations

The SKU-110k dataset contains a diverse set of retail shelf images with densely packed objects. Here is an example of data from the dataset, along with its annotations:

- Densely packed retail shelf image: This image shows densely packed objects in a retail shelf setting. Each object is annotated with a bounding box; in the Ultralytics configuration, all boxes share the single class object.

The example illustrates the variety and complexity of the data in the SKU-110k dataset. The dense arrangement of products is the main challenge for detection algorithms, which makes the dataset useful for developing robust retail-focused computer vision solutions.
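To get a feel for how dense a converted image is, its label file can be read back into pixel boxes and counted. This is a rough sketch, assuming the label format written by the download script (class index, then normalized center x, center y, width, height, separated by spaces); `parse_yolo_labels` and the sample text are hypothetical.

```python
def parse_yolo_labels(text, image_width, image_height):
    """Convert YOLO label lines into pixel-space (x1, y1, x2, y2) boxes."""
    boxes = []
    for line in text.splitlines():
        parts = line.split()
        if len(parts) != 5:
            continue  # skip blank or malformed lines
        # parts[0] is the class index, which is always 0 for this dataset.
        xc, yc, bw, bh = (float(v) for v in parts[1:])
        x1 = (xc - bw / 2) * image_width
        y1 = (yc - bh / 2) * image_height
        x2 = (xc + bw / 2) * image_width
        y2 = (yc + bh / 2) * image_height
        boxes.append((x1, y1, x2, y2))
    return boxes


# Two made-up label lines for a 1000x2000 image.
sample = "0 0.20000 0.20000 0.20000 0.20000\n0 0.50000 0.50000 0.10000 0.20000\n"
boxes = parse_yolo_labels(sample, 1000, 2000)
print(len(boxes), "objects")
```

The length of the returned list is the object count for that image. Because the label values are rounded to five decimals, the recovered pixel coordinates are approximate.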

Citations and Acknowledgments

If you use the SKU-110k dataset in your research or development work, please cite the following paper:

    @inproceedings{goldman2019dense,
      author    = {Eran Goldman and Roei Herzig and Aviv Eisenschtat and Jacob Goldberger and Tal Hassner},
      title     = {Precise Detection in Densely Packed Scenes},
      booktitle = {Proc. Conf. Comput. Vision Pattern Recognition (CVPR)},
      year      = {2019}
    }

We would like to acknowledge Eran Goldman et al. for creating and maintaining the SKU-110k dataset as a valuable resource for the computer vision research community. For more information about the SKU-110k dataset and its creators, visit the SKU-110k dataset GitHub repository at https://github.com/eg4000/SKU110K_CVPR19.

FAQ

What is the SKU-110k dataset and why is it important for object detection?

The SKU-110k dataset consists of densely packed retail shelf images designed to aid research in object detection tasks. Developed by Eran Goldman et al., it includes over 110,000 unique SKU categories. Its importance lies in its ability to challenge state-of-the-art object detectors with diverse object appearances and proximity, making it an invaluable resource for researchers and practitioners in computer vision. Learn more about the dataset's structure and applications in our SKU-110k Dataset section.

How do I train a YOLO26 model using the SKU-110k dataset?

Training a YOLO26 model on the SKU-110k dataset is straightforward. Here's an example to train a YOLO26n model for 100 epochs with an image size of 640:

Train Example

Python:

    from ultralytics import YOLO

    # Load a model
    model = YOLO("yolo26n.pt")  # load a pretrained model (recommended for training)

    # Train the model
    results = model.train(data="SKU-110K.yaml", epochs=100, imgsz=640)

CLI:

    # Start training from a pretrained *.pt model
    yolo detect train data=SKU-110K.yaml model=yolo26n.pt epochs=100 imgsz=640

For a comprehensive list of available arguments, refer to the model Training page.

What are the main subsets of the SKU-110k dataset?

The SKU-110k dataset is organized into three main subsets:

- Training set: Contains 8,219 images and annotations used for training object detection models.

- Validation set: Consists of 588 images and annotations used for model validation during training.

- Test set: Includes 2,936 images designed for the final evaluation of trained object detection models.

Refer to the Dataset Structure section for more details.

How do I configure the SKU-110k dataset for training?

The SKU-110k dataset configuration is defined in a YAML file, which includes details about the dataset's paths, classes, and other relevant information. The SKU-110K.yaml file is maintained at https://github.com/ultralytics/ultralytics/blob/main/ultralytics/cfg/datasets/SKU-110K.yaml. You can train a model using this configuration as shown in our Usage section.
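The comments in the YAML file state that the train, val, and test entries are relative to `path`. A small sketch of that resolution follows, under the assumption that a relative `path` is joined to some datasets directory. `CONFIG`, `resolve_splits`, and the `datasets_dir` argument are hypothetical names, the dictionary is copied by hand rather than parsed from the file, and the actual lookup inside Ultralytics may differ.

```python
from pathlib import Path

# Path-related keys copied by hand from SKU-110K.yaml (not parsed from the file).
CONFIG = {"path": "SKU-110K", "train": "train.txt", "val": "val.txt", "test": "test.txt"}


def resolve_splits(config, datasets_dir):
    """Return the location of each split list, resolved against the dataset root."""
    root = Path(config["path"])
    if not root.is_absolute():
        # Assumption: a relative root lives under the given datasets directory.
        root = Path(datasets_dir) / root
    # Only include splits that the config actually defines.
    return {k: root / config[k] for k in ("train", "val", "test") if config.get(k)}


for split, location in resolve_splits(CONFIG, "datasets").items():
    print(split, location)
```

With a custom copy of the YAML, changing only `path` is enough to point all three splits at a different dataset root.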

What are the key features of the SKU-110k dataset in the context of deep learning?

The SKU-110k dataset features images of store shelves from around the world, showcasing densely packed objects that pose significant challenges for object detectors:

- Over 110,000 unique SKU categories

- Diverse object appearances

- Bounding-box annotations for every object, mapped to a single class (object) in the Ultralytics configuration

These features make the SKU-110k dataset particularly valuable for training and evaluating deep learning models in object detection tasks. For more details, see the Key Features section.

How do I cite the SKU-110k dataset in my research?

If you use the SKU-110k dataset in your research or development work, please cite the following paper:

    @inproceedings{goldman2019dense,
      author    = {Eran Goldman and Roei Herzig and Aviv Eisenschtat and Jacob Goldberger and Tal Hassner},
      title     = {Precise Detection in Densely Packed Scenes},
      booktitle = {Proc. Conf. Comput. Vision Pattern Recognition (CVPR)},
      year      = {2019}
    }

More information about the dataset can be found in the Citations and Acknowledgments section.