Model Benchmarking with Ultralytics YOLO

Introduction

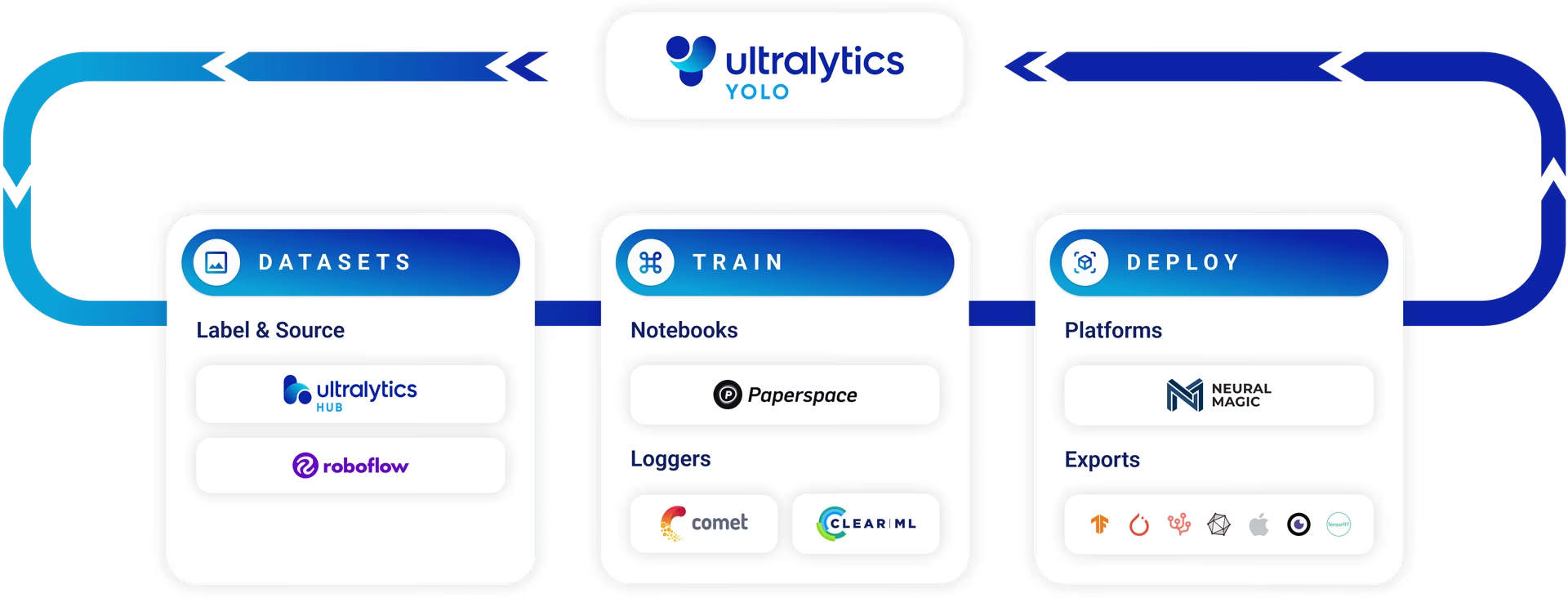

Once your model is trained and validated, the next logical step is to evaluate its performance in various real-world scenarios. Benchmark mode in Ultralytics YOLO26 serves this purpose by providing a robust framework for assessing the speed and accuracy of your model across a range of export formats.

Why Is Benchmarking Crucial?

- Informed Decisions: Gain insights into the trade-offs between speed and accuracy.

- Resource Allocation: Understand how different export formats perform on different hardware.

- Optimization: Learn which export format offers the best performance for your specific use case.

- Cost Efficiency: Make more efficient use of hardware resources based on benchmark results.

Key Metrics in Benchmark Mode

- mAP50-95: For object detection, segmentation, and pose estimation.

- accuracy_top5: For image classification.

- Inference Time: Time taken for each image in milliseconds.
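Per-image inference time is often easier to compare as throughput. The sketch below converts milliseconds per image into images per second; the helper name `ms_to_fps` and the sample latency are illustrative assumptions, not part of the Ultralytics API or a measured result.

```python
def ms_to_fps(inference_ms: float) -> float:
    """Convert per-image inference time (milliseconds) to images per second."""
    if inference_ms <= 0:
        raise ValueError("inference time must be positive")
    return 1000.0 / inference_ms


# Placeholder latency chosen for illustration only, not a benchmark result.
example_ms = 25.0
print(f"{example_ms} ms/image is about {ms_to_fps(example_ms):.1f} images/s")
```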

Supported Export Formats

- ONNX: For optimal CPU performance.

- TensorRT: For maximal GPU efficiency.

- OpenVINO: For Intel hardware optimization.

- CoreML, TensorFlow SavedModel, and more: For diverse deployment needs.

Tip

- Export to ONNX or OpenVINO for up to 3x CPU speedup.

- Export to TensorRT for up to 5x GPU speedup.
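A speedup figure such as "up to 3x" is the ratio of the baseline inference time to the exported model's inference time. A minimal sketch of that arithmetic, assuming a hypothetical helper `speedup` and placeholder timings rather than measured ones:

```python
def speedup(baseline_ms: float, exported_ms: float) -> float:
    """Return how many times faster the exported model is than the baseline."""
    if baseline_ms <= 0 or exported_ms <= 0:
        raise ValueError("timings must be positive")
    return baseline_ms / exported_ms


# Placeholder timings for illustration only; substitute your own benchmark output.
print(f"speedup: {speedup(90.0, 30.0):.1f}x")
```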

Usage Examples

Run YOLO26n benchmarks across all supported export formats (ONNX, TensorRT, etc.). See the Arguments section below for the full list of benchmark arguments, and the Export Formats section for the options each format accepts.

Example

Python

from ultralytics.utils.benchmarks import benchmark

# Benchmark on GPU
benchmark(model="yolo26n.pt", data="coco8.yaml", imgsz=640, half=False, device=0)

# Benchmark specific export format
benchmark(model="yolo26n.pt", data="coco8.yaml", imgsz=640, format="onnx")

CLI

# Benchmark on GPU
yolo benchmark model=yolo26n.pt data='coco8.yaml' imgsz=640 half=False device=0

# Benchmark specific export format
yolo benchmark model=yolo26n.pt data='coco8.yaml' imgsz=640 format=onnx
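The CLI calls above follow a `key=value` pattern. As a rough illustration, the hypothetical helper below (not part of Ultralytics) assembles such a command string from Python values, using only the arguments that appear in the examples. It does not run the command.

```python
from typing import Optional, Union


def build_benchmark_command(
    model: str,
    data: str,
    imgsz: int = 640,
    half: bool = False,
    device: Optional[Union[int, str]] = None,
    fmt: str = "",
) -> str:
    """Assemble a `yolo benchmark` command line from key=value pairs."""
    parts = [
        "yolo",
        "benchmark",
        f"model={model}",
        f"data={data}",
        f"imgsz={imgsz}",
        f"half={half}",
    ]
    # Only add optional keys when the caller supplied them.
    if device is not None:
        parts.append(f"device={device}")
    if fmt:
        parts.append(f"format={fmt}")
    return " ".join(parts)


print(build_benchmark_command("yolo26n.pt", "coco8.yaml", device=0))
print(build_benchmark_command("yolo26n.pt", "coco8.yaml", fmt="onnx"))
```

Leaving `fmt` empty omits the `format` key, which matches the documented behavior of benchmarking every supported format.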

Arguments

The model, data, imgsz, half, int8, device, verbose, and format arguments let you tailor a benchmark run to your needs and compare the performance of different export formats.

| Key | Default Value | Description |
|---|---|---|
| model | None | Specifies the path to the model file. Accepts both .pt and .yaml formats, e.g., "yolo26n.pt" for pretrained models or configuration files. |
| data | None | Path to a YAML file defining the dataset for benchmarking, typically including paths and settings for validation data. Example: "coco8.yaml". |
| imgsz | 640 | The input image size for the model. Can be a single integer for square images or a tuple (width, height) for non-square, e.g., (640, 480). |
| half | False | Enables FP16 (half-precision) inference, reducing memory usage and possibly increasing speed on compatible hardware. Use half=True to enable. |
| int8 | False | Activates INT8 quantization for further optimized performance on supported devices, especially useful for edge devices. Set int8=True to use. |
| device | None | Defines the computation device(s) for benchmarking, such as "cpu" or "cuda:0". |
| verbose | False | Controls the level of detail in logging output. Set verbose=True for detailed logs. |
| format | '' | Benchmarks only the specified export format (e.g., format=onnx). Leave it blank to test every supported format automatically. |
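The imgsz row accepts either a single integer or a two-element tuple. The sketch below shows one way such a value could be normalized to a pair before use. `normalize_imgsz` is a hypothetical helper written for this page, not the library's own validation logic, and it returns a tuple in the order given without reinterpreting it.

```python
from typing import Sequence, Tuple, Union


def normalize_imgsz(imgsz: Union[int, Sequence[int]]) -> Tuple[int, int]:
    """Return imgsz as a 2-tuple: an int is duplicated, a pair is kept in order."""
    if isinstance(imgsz, int):
        size = (imgsz, imgsz)  # square image
    else:
        size = tuple(imgsz)
        if len(size) != 2:
            raise ValueError("imgsz must be an int or a sequence of two ints")
    if any(not isinstance(v, int) or v <= 0 for v in size):
        raise ValueError("imgsz values must be positive integers")
    return size


print(normalize_imgsz(640))
print(normalize_imgsz((640, 480)))
```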

Export Formats

Benchmarks will attempt to run automatically on all possible export formats listed below. Alternatively, you can run benchmarks for a specific format by using the format argument, which accepts any of the formats mentioned below.

| Format | format Argument | Model | Metadata | Arguments |
|---|---|---|---|---|
| PyTorch | - | yolo26n.pt | ✅ | - |
| TorchScript | torchscript | yolo26n.torchscript | ✅ | imgsz, half, dynamic, optimize, nms, batch, device |
| ONNX | onnx | yolo26n.onnx | ✅ | imgsz, half, dynamic, simplify, opset, nms, batch, device |
| OpenVINO | openvino | yolo26n_openvino_model/ | ✅ | imgsz, half, dynamic, int8, nms, batch, data, fraction, device |
| TensorRT | engine | yolo26n.engine | ✅ | imgsz, half, dynamic, simplify, workspace, int8, nms, batch, data, fraction, device |
| CoreML | coreml | yolo26n.mlpackage | ✅ | imgsz, dynamic, half, int8, nms, batch, device |
| TF SavedModel | saved_model | yolo26n_saved_model/ | ✅ | imgsz, keras, int8, nms, batch, data, fraction, device |
| TF GraphDef | pb | yolo26n.pb | ❌ | imgsz, batch, device |
| TF Lite | tflite | yolo26n.tflite | ✅ | imgsz, half, int8, nms, batch, data, fraction, device |
| TF Edge TPU | edgetpu | yolo26n_edgetpu.tflite | ✅ | imgsz, int8, data, fraction, device |
| TF.js | tfjs | yolo26n_web_model/ | ✅ | imgsz, half, int8, nms, batch, data, fraction, device |
| PaddlePaddle | paddle | yolo26n_paddle_model/ | ✅ | imgsz, batch, device |
| MNN | mnn | yolo26n.mnn | ✅ | imgsz, batch, int8, half, device |
| NCNN | ncnn | yolo26n_ncnn_model/ | ✅ | imgsz, half, batch, device |
| IMX500 | imx | yolo26n_imx_model/ | ✅ | imgsz, int8, data, fraction, nms, device |
| RKNN | rknn | yolo26n_rknn_model/ | ✅ | imgsz, batch, name, device |
| ExecuTorch | executorch | yolo26n_executorch_model/ | ✅ | imgsz, batch, device |
| Axelera | axelera | yolo26n_axelera_model/ | ✅ | imgsz, batch, int8, data, fraction, device |

See full export details in the Export page.
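The Model column in the table follows a regular naming pattern: the weights stem plus a format-specific suffix. The sketch below encodes that pattern as a lookup. The dictionary is transcribed from the table, while `expected_artifact` is a hypothetical helper for illustration and does not call or reproduce any Ultralytics function.

```python
# Suffixes transcribed from the Model column of the export formats table.
ARTIFACT_SUFFIX = {
    "torchscript": ".torchscript",
    "onnx": ".onnx",
    "openvino": "_openvino_model/",
    "engine": ".engine",
    "coreml": ".mlpackage",
    "saved_model": "_saved_model/",
    "pb": ".pb",
    "tflite": ".tflite",
    "edgetpu": "_edgetpu.tflite",
    "tfjs": "_web_model/",
    "paddle": "_paddle_model/",
    "mnn": ".mnn",
    "ncnn": "_ncnn_model/",
    "imx": "_imx_model/",
    "rknn": "_rknn_model/",
    "executorch": "_executorch_model/",
    "axelera": "_axelera_model/",
}


def expected_artifact(weights: str, fmt: str) -> str:
    """Return the artifact name the table lists for a given format argument."""
    stem = weights[:-3] if weights.endswith(".pt") else weights
    if fmt not in ARTIFACT_SUFFIX:
        raise ValueError(f"unknown format argument: {fmt!r}")
    return stem + ARTIFACT_SUFFIX[fmt]


print(expected_artifact("yolo26n.pt", "onnx"))
print(expected_artifact("yolo26n.pt", "openvino"))
```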

FAQ

How do I benchmark my YOLO26 model's performance using Ultralytics?

Ultralytics YOLO26 offers a Benchmark mode to assess your model's performance across different export formats. This mode provides insights into key metrics such as mean Average Precision (mAP50-95), accuracy, and inference time in milliseconds. To run benchmarks, you can use either Python or CLI commands. For example, to benchmark on a GPU:

Example

Python

from ultralytics.utils.benchmarks import benchmark

# Benchmark on GPU
benchmark(model="yolo26n.pt", data="coco8.yaml", imgsz=640, half=False, device=0)

CLI

# Benchmark on GPU
yolo benchmark model=yolo26n.pt data='coco8.yaml' imgsz=640 half=False device=0

For more details on benchmark arguments, visit the Arguments section.

What are the benefits of exporting YOLO26 models to different formats?

Exporting YOLO26 models to different formats such as ONNX, TensorRT, and OpenVINO allows you to optimize performance based on your deployment environment. For instance:

- ONNX: Provides up to 3x CPU speedup.

- TensorRT: Offers up to 5x GPU speedup.

- OpenVINO: Specifically optimized for Intel hardware.

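These formats mainly improve inference speed and fit on the target hardware. Accuracy usually stays close to that of the original model, although reduced-precision options such as half or int8 can change it, which is why Benchmark mode reports accuracy alongside inference time. Visit the Export page for complete details.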

Why is benchmarking crucial in evaluating YOLO26 models?

Benchmarking your YOLO26 models is essential for several reasons:

- Informed Decisions: Understand the trade-offs between speed and accuracy.

- Resource Allocation: Gauge the performance across different hardware options.

- Optimization: Determine which export format offers the best performance for specific use cases.

- Cost Efficiency: Optimize hardware usage based on benchmark results.

Key metrics such as mAP50-95, Top-5 accuracy, and inference time help in making these evaluations. Refer to the Key Metrics section for more information.
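One way to act on the speed-versus-accuracy trade-off is to set an accuracy floor and pick the fastest format that clears it. The sketch below assumes results have already been collected into plain dictionaries. The field names, the helper `fastest_above`, and every number are invented placeholders for illustration, not Ultralytics output or measured results.

```python
from typing import Dict, List, Optional, Union

Row = Dict[str, Union[str, float]]


def fastest_above(results: List[Row], min_map: float) -> Optional[str]:
    """Return the lowest-latency format whose mAP50-95 is at least min_map."""
    eligible = [r for r in results if r["map50_95"] >= min_map]
    if not eligible:
        return None  # nothing meets the accuracy floor
    return min(eligible, key=lambda r: r["inference_ms"])["format"]


# Invented placeholder rows; replace with your own benchmark results.
placeholder_results = [
    {"format": "format_a", "map50_95": 0.50, "inference_ms": 40.0},
    {"format": "format_b", "map50_95": 0.50, "inference_ms": 20.0},
    {"format": "format_c", "map50_95": 0.45, "inference_ms": 10.0},
]

print(fastest_above(placeholder_results, min_map=0.48))
```

Lowering the floor admits faster but less accurate candidates, so the threshold should come from the requirements of the application rather than from the benchmark itself.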

Which export formats are supported by YOLO26, and what are their advantages?

YOLO26 supports a variety of export formats, each tailored for specific hardware and use cases:

- ONNX: Best for CPU performance.

- TensorRT: Ideal for GPU efficiency.

- OpenVINO: Optimized for Intel hardware.

- CoreML & TensorFlow: Useful for iOS and general ML applications.

For a complete list of supported formats and their respective advantages, check out the Supported Export Formats section.

What arguments can I use to fine-tune my YOLO26 benchmarks?

When running benchmarks, several arguments can be customized to suit specific needs:

- model: Path to the model file (e.g., "yolo26n.pt").

- data: Path to a YAML file defining the dataset (e.g., "coco8.yaml").

- imgsz: The input image size, either as a single integer or a tuple.

- half: Enable FP16 inference for better performance.

- int8: Activate INT8 quantization for edge devices.

- device: Specify the computation device (e.g., "cpu", "cuda:0").

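- verbose: Control the level of logging detail.

- format: Benchmark only one export format (e.g., format=onnx); leave it blank to test every supported format.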

For a full list of arguments, refer to the Arguments section.