Ottimizzazione delle inferenze YOLO26 con il DeepSparse Engine di Neural Magic

Quando si distribuiscono modelli di object detection come Ultralytics YOLO26 su hardware diversi, si possono incontrare problemi unici come l'ottimizzazione. È qui che entra in gioco l'integrazione di YOLO26 con il DeepSparse Engine di Neural Magic. Trasforma il modo in cui i modelli YOLO26 vengono eseguiti e consente prestazioni a livello di GPU direttamente sulle CPU.

Questa guida mostra come distribuire YOLO26 utilizzando DeepSparse di Neural Magic, come eseguire inferenze e come effettuare il benchmark delle prestazioni per garantirne l'ottimizzazione.

SparseML EOL

Neural Magic era acquisita da Red Hat nel gennaio 2025, e sta dismettendo le versioni community dei loro deepsparse, sparseml, sparsezoo, e sparsify librerie. Per ulteriori informazioni, consultare l'avviso pubblicato nel Readme su sparseml Repository GitHub.

DeepSparse di Neural Magic

DeepSparse di Neural Magic è un runtime di inferenza progettato per ottimizzare l'esecuzione di reti neurali su CPU. Applica tecniche avanzate come sparsity, pruning e quantizzazione per ridurre drasticamente le esigenze computazionali mantenendo al contempo la precisione. DeepSparse offre una soluzione agile per un'esecuzione efficiente e scalabile di reti neurali su vari dispositivi.

Vantaggi dell'integrazione di DeepSparse di Neural Magic con YOLO26

Prima di addentrarci nella distribuzione di YOLO26 tramite DeepSparse, cerchiamo di comprenderne i vantaggi. Alcuni dei principali vantaggi includono:

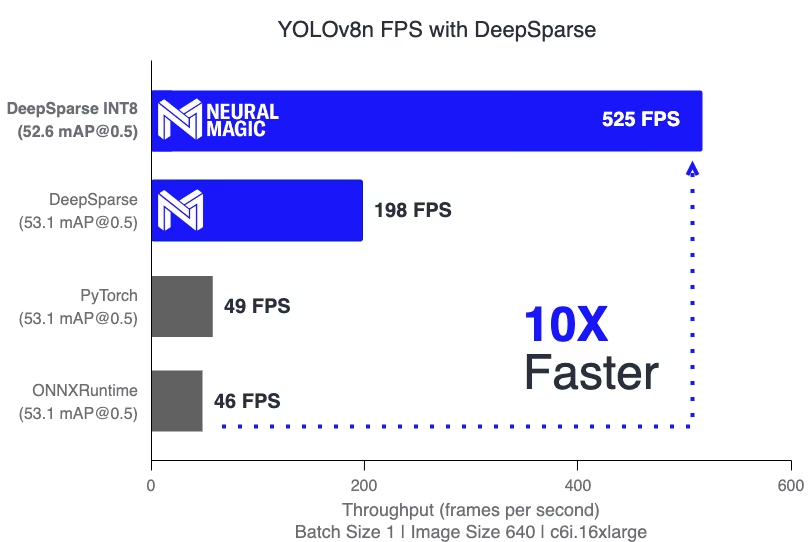

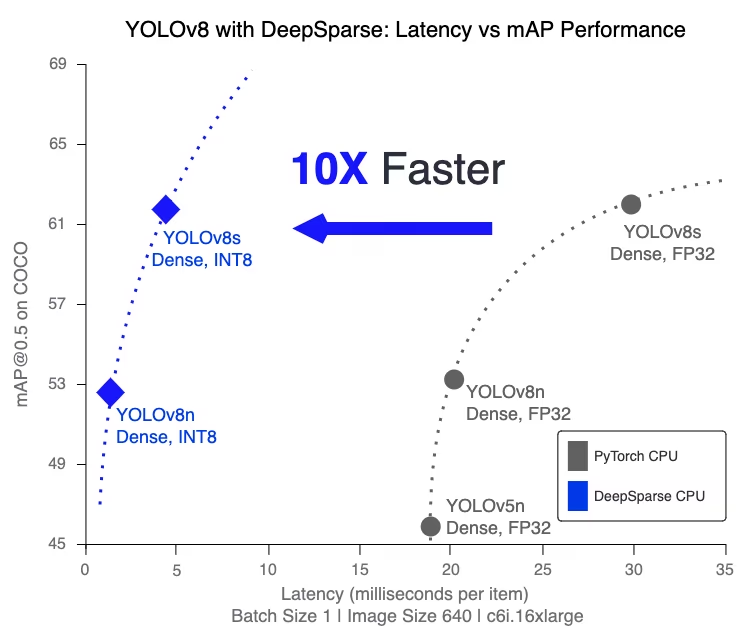

- Velocità di inferenza migliorata: Raggiunge fino a 525 FPS (su YOLO11n), accelerando significativamente le capacità di inferenza di YOLO rispetto ai metodi tradizionali.

- Efficienza del modello ottimizzata: Utilizza la potatura (pruning) e la quantizzazione per migliorare l'efficienza di YOLO26, riducendo le dimensioni del modello e i requisiti computazionali pur mantenendo l'accuratezza.

Alte prestazioni su CPU standard: Offre prestazioni simili a quelle di una GPU su CPU, fornendo un'opzione più accessibile ed economica per varie applicazioni.

Integrazione e distribuzione semplificate: Offre strumenti intuitivi per una facile integrazione di YOLO26 nelle applicazioni, incluse funzionalità di annotazione di immagini e video.

Supporto per vari tipi di modelli: Compatibile sia con i modelli YOLO26 standard che con quelli ottimizzati per la sparsità, aggiungendo flessibilità di distribuzione.

Soluzione Scalabile ed Economicamente Vantaggiosa: Riduce le spese operative e offre una distribuzione scalabile di modelli avanzati di object detection.

Come funziona la tecnologia DeepSparse di Neural Magic?

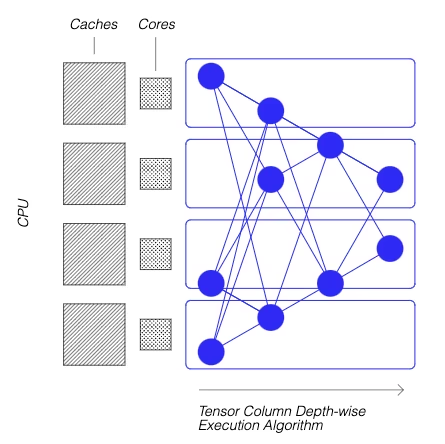

La tecnologia DeepSparse di Neural Magic si ispira all'efficienza del cervello umano nel calcolo delle reti neurali. Adotta due principi chiave dal cervello come segue:

Sparsezza: Il processo di sparsificazione comporta la rimozione di informazioni ridondanti dalle reti di deep learning, portando a modelli più piccoli e veloci senza compromettere l'accuratezza. Questa tecnica riduce significativamente le dimensioni della rete e le esigenze computazionali.

Località di riferimento: DeepSparse utilizza un metodo di esecuzione univoco, suddividendo la rete in colonne tensor. Queste colonne vengono eseguite in senso di profondità, adattandosi interamente alla cache della CPU. Questo approccio imita l'efficienza del cervello, riducendo al minimo lo spostamento dei dati e massimizzando l'uso della cache della CPU.

Creazione di una versione sparsa di YOLO26 addestrata su un dataset personalizzato

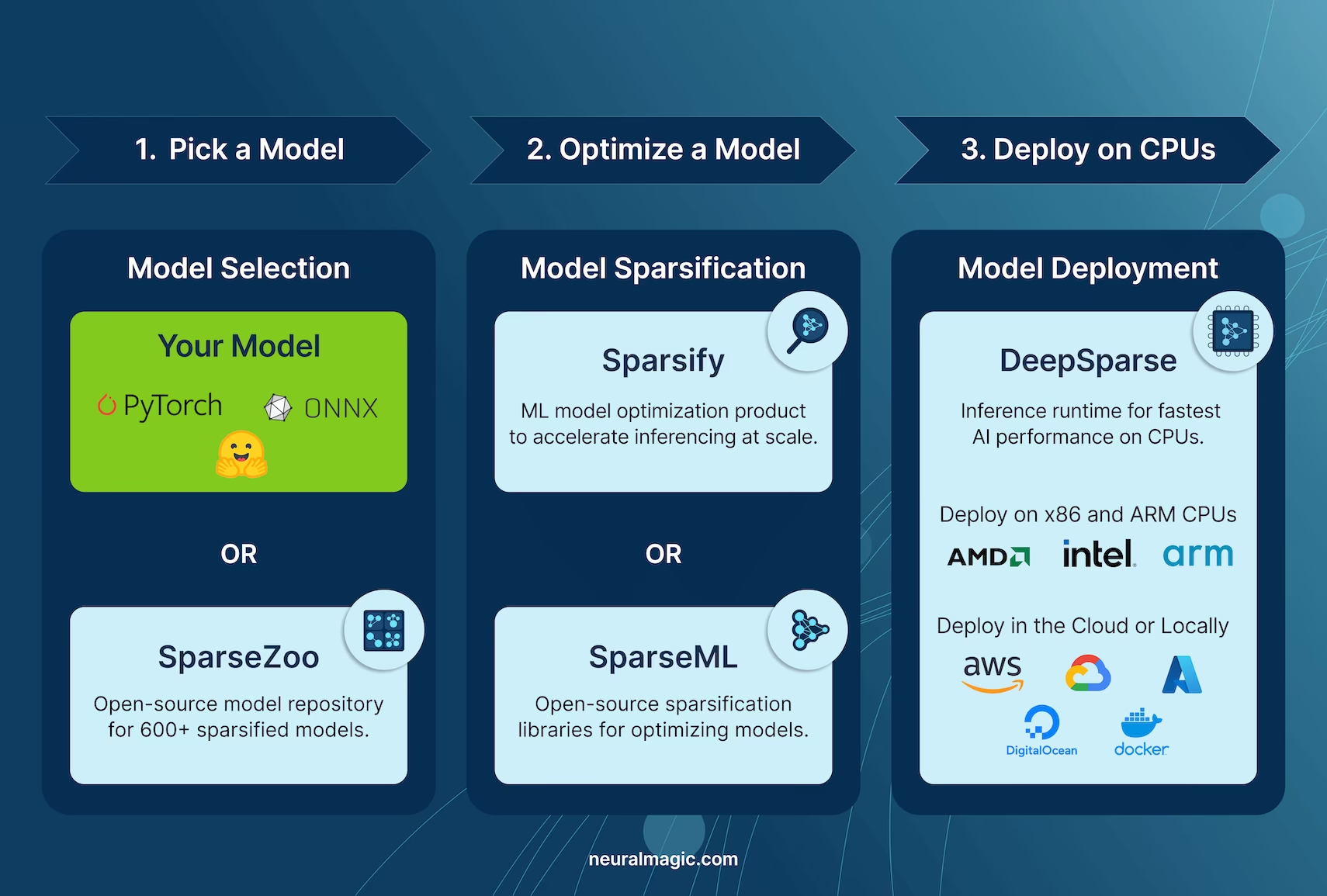

SparseZoo, un repository di modelli open-source di Neural Magic, offre una collezione di checkpoint di modelli YOLO26 pre-sparsificati. Con SparseML, perfettamente integrato con Ultralytics, gli utenti possono facilmente ottimizzare questi checkpoint sparsi sui loro dataset specifici utilizzando una semplice interfaccia a riga di comando.

Per maggiori dettagli, consulta la documentazione SparseML YOLO26 di Neural Magic.

Utilizzo: Distribuzione di YOLO26 tramite DeepSparse

La distribuzione di YOLO26 con DeepSparse di Neural Magic comporta alcuni semplici passaggi. Prima di addentrarti nelle istruzioni d'uso, assicurati di consultare la gamma di modelli YOLO26 offerti da Ultralytics. Questo ti aiuterà a scegliere il modello più appropriato per le tue esigenze di progetto. Ecco come puoi iniziare.

Passaggio 1: Installazione

Per installare i pacchetti richiesti, esegui:

Installazione

# Install the required packages

pip install deepsparse[yolov8]

Passaggio 2: Esportazione di YOLO26 in formato ONNX

Il DeepSparse Engine richiede modelli YOLO26 in formato ONNX. L'esportazione del modello in questo formato è essenziale per la compatibilità con DeepSparse. Utilizza il seguente comando per esportare i modelli YOLO26:

Esportazione del modello

# Export YOLO26 model to ONNX format

yolo task=detect mode=export model=yolo26n.pt format=onnx opset=13

Questo comando salverà il yolo26n.onnx modello sul tuo disco.

Fase 3: Distribuzione ed esecuzione delle inferenze

Con il tuo modello YOLO26 in formato ONNX, puoi distribuire ed eseguire inferenze utilizzando DeepSparse. Questo può essere fatto facilmente con la loro intuitiva API python:

Distribuzione ed esecuzione di inferenze

from deepsparse import Pipeline

# Specify the path to your YOLO26 ONNX model

model_path = "path/to/yolo26n.onnx"

# Set up the DeepSparse Pipeline

yolo_pipeline = Pipeline.create(task="yolov8", model_path=model_path)

# Run the model on your images

images = ["path/to/image.jpg"]

pipeline_outputs = yolo_pipeline(images=images)

Fase 4: Valutazione comparativa delle prestazioni

È importante verificare che il tuo modello YOLO26 stia funzionando in modo ottimale su DeepSparse. Puoi eseguire il benchmark delle prestazioni del tuo modello per analizzare throughput e latenza:

Benchmarking

# Benchmark performance

deepsparse.benchmark model_path="path/to/yolo26n.onnx" --scenario=sync --input_shapes="[1,3,640,640]"

Fase 5: Funzionalità aggiuntive

DeepSparse offre funzionalità aggiuntive per l'integrazione pratica di YOLO26 nelle applicazioni, come l'annotazione di immagini e la valutazione dei dataset.

Funzionalità aggiuntive

# For image annotation

deepsparse.yolov8.annotate --source "path/to/image.jpg" --model_filepath "path/to/yolo26n.onnx"

# For evaluating model performance on a dataset

deepsparse.yolov8.eval --model_path "path/to/yolo26n.onnx"

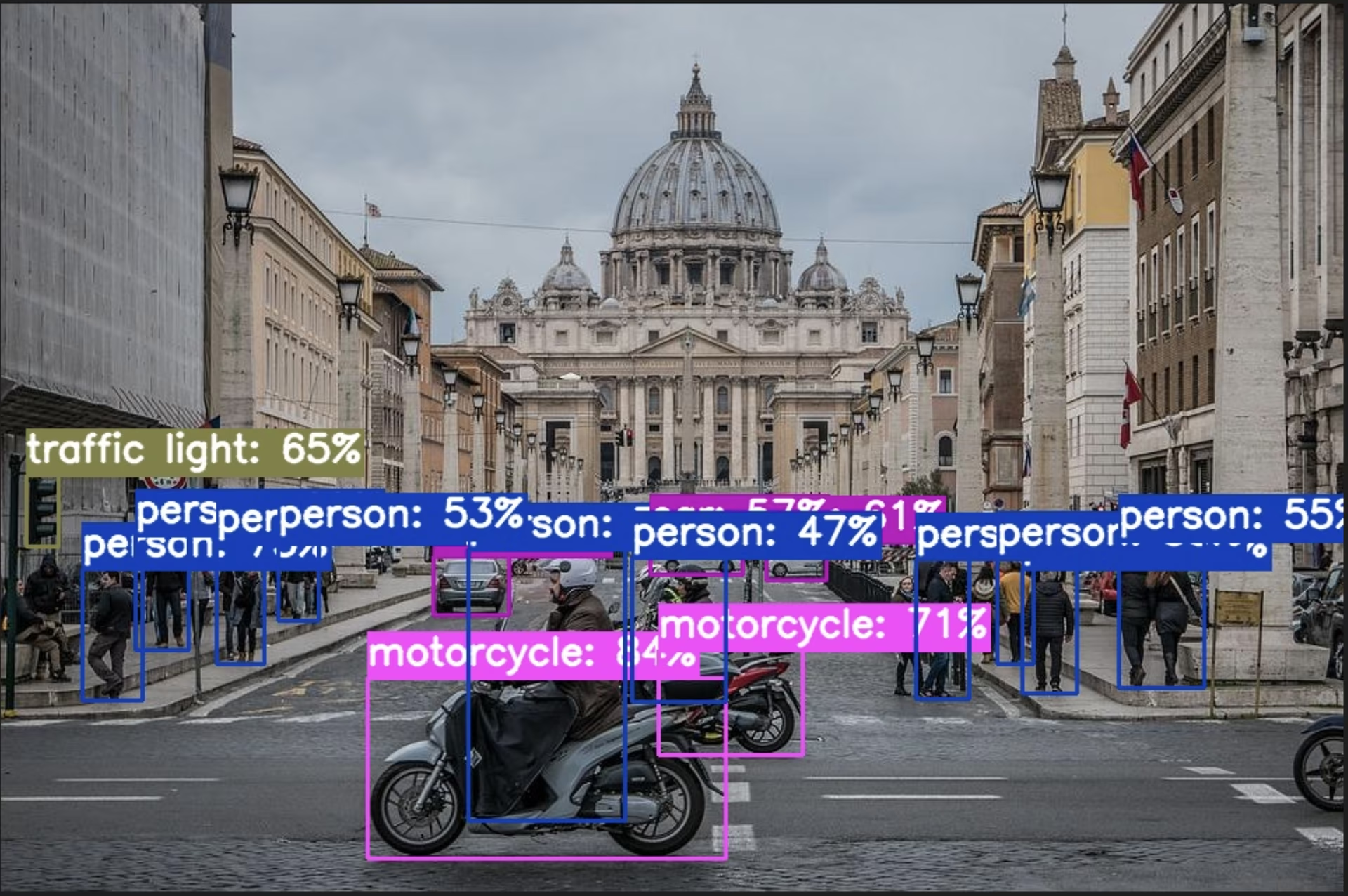

L'esecuzione del comando di annotazione elabora l'immagine specificata, rilevando gli oggetti e salvando l'immagine annotata con caselle di delimitazione e classificazioni. L'immagine annotata verrà archiviata in una cartella annotation-results. Questo aiuta a fornire una rappresentazione visiva delle capacità di rilevamento del modello.

Dopo aver eseguito il comando eval, riceverai metriche di output dettagliate come precision, recall e mAP (mean Average Precision). Questo fornisce una visione completa delle prestazioni del tuo modello sul dataset ed è particolarmente utile per la messa a punto e l'ottimizzazione dei tuoi modelli YOLO26 per casi d'uso specifici, garantendo elevata accuratezza ed efficienza.

Riepilogo

Questa guida ha esplorato l'integrazione di YOLO26 di Ultralytics con il DeepSparse Engine di Neural Magic. Ha evidenziato come questa integrazione migliori le prestazioni di YOLO26 su piattaforme CPU, offrendo un'efficienza a livello di GPU e tecniche avanzate di sparsità delle reti neurali.

Per informazioni più dettagliate e un utilizzo avanzato, visita la documentazione DeepSparse di Neural Magic. Puoi anche esplorare la guida all'integrazione di YOLO26 e guardare una sessione dimostrativa su YouTube.

Inoltre, per una comprensione più ampia delle varie integrazioni di YOLO26, visita la pagina della guida all'integrazione di Ultralytics, dove puoi scoprire una serie di altre entusiasmanti possibilità di integrazione.

FAQ

Che cos'è il DeepSparse Engine di Neural Magic e come ottimizza le prestazioni di YOLO26?

Il DeepSparse Engine di Neural Magic è un runtime di inferenza progettato per ottimizzare l'esecuzione delle reti neurali sulle CPU tramite tecniche avanzate come la sparsità, il pruning e la quantizzazione. Integrando DeepSparse con YOLO26, è possibile ottenere prestazioni simili a quelle delle GPU su CPU standard, migliorando significativamente la velocità di inferenza, l'efficienza del modello e le prestazioni complessive, mantenendo al contempo l'accuratezza. Per maggiori dettagli, consulta la sezione DeepSparse di Neural Magic.

Come posso installare i pacchetti necessari per distribuire YOLO26 utilizzando DeepSparse di Neural Magic?

L'installazione dei pacchetti necessari per il deployment di YOLO26 con DeepSparse di Neural Magic è semplice. Puoi installarli facilmente utilizzando la CLI. Ecco il comando da eseguire:

pip install deepsparse[yolov8]

Una volta installato, segui i passaggi forniti nella sezione Installazione per configurare il tuo ambiente e iniziare a usare DeepSparse con YOLO26.

Come si convertono i modelli YOLO26 in formato ONNX per l'utilizzo con DeepSparse?

Per convertire i modelli YOLO26 nel formato ONNX, necessario per la compatibilità con DeepSparse, puoi utilizzare il seguente comando CLI:

yolo task=detect mode=export model=yolo26n.pt format=onnx opset=13

Questo comando esporterà il tuo modello YOLO26 (yolo26n.pt) a un formato (yolo26n.onnx) che può essere utilizzato dal DeepSparse Engine. Ulteriori informazioni sull'esportazione del modello sono disponibili nella Sezione Esportazione del modello.

Come si esegue il benchmark delle prestazioni di YOLO26 sul DeepSparse Engine?

Il benchmarking delle prestazioni di YOLO26 su DeepSparse ti aiuta ad analizzare throughput e latenza per assicurarti che il tuo modello sia ottimizzato. Puoi utilizzare il seguente comando CLI per eseguire un benchmark:

deepsparse.benchmark model_path="path/to/yolo26n.onnx" --scenario=sync --input_shapes="[1,3,640,640]"

Questo comando ti fornirà metriche di performance vitali. Per maggiori dettagli, consulta la sezione Sezione Benchmarking Performance.

Perché dovrei usare DeepSparse di Neural Magic con YOLO26 per i task di object detection?

L'integrazione di DeepSparse di Neural Magic con YOLO26 offre numerosi vantaggi:

- Velocità di Inferenza Migliorata: Raggiunge fino a 525 FPS (su YOLO11n), dimostrando le capacità di ottimizzazione di DeepSparse.

- Efficienza del Modello Ottimizzata: Utilizza tecniche di sparsità, pruning e quantizzazione per ridurre le dimensioni del modello e le esigenze computazionali, mantenendo al contempo la precisione.

- Alte prestazioni su CPU standard: Offre prestazioni simili a quelle di una GPU su hardware CPU economico.

- Integrazione Semplificata: Strumenti intuitivi per una facile implementazione e integrazione.

- Flessibilità: Supporta modelli YOLO26 sia standard che ottimizzati per la sparsità.

- Economicamente Vantaggioso: Riduce le spese operative attraverso un utilizzo efficiente delle risorse.

Per un'analisi più approfondita di questi vantaggi, visita la sezione Vantaggi dell'integrazione di DeepSparse di Neural Magic con YOLO26.