Ultralytics YOLO11

Overview

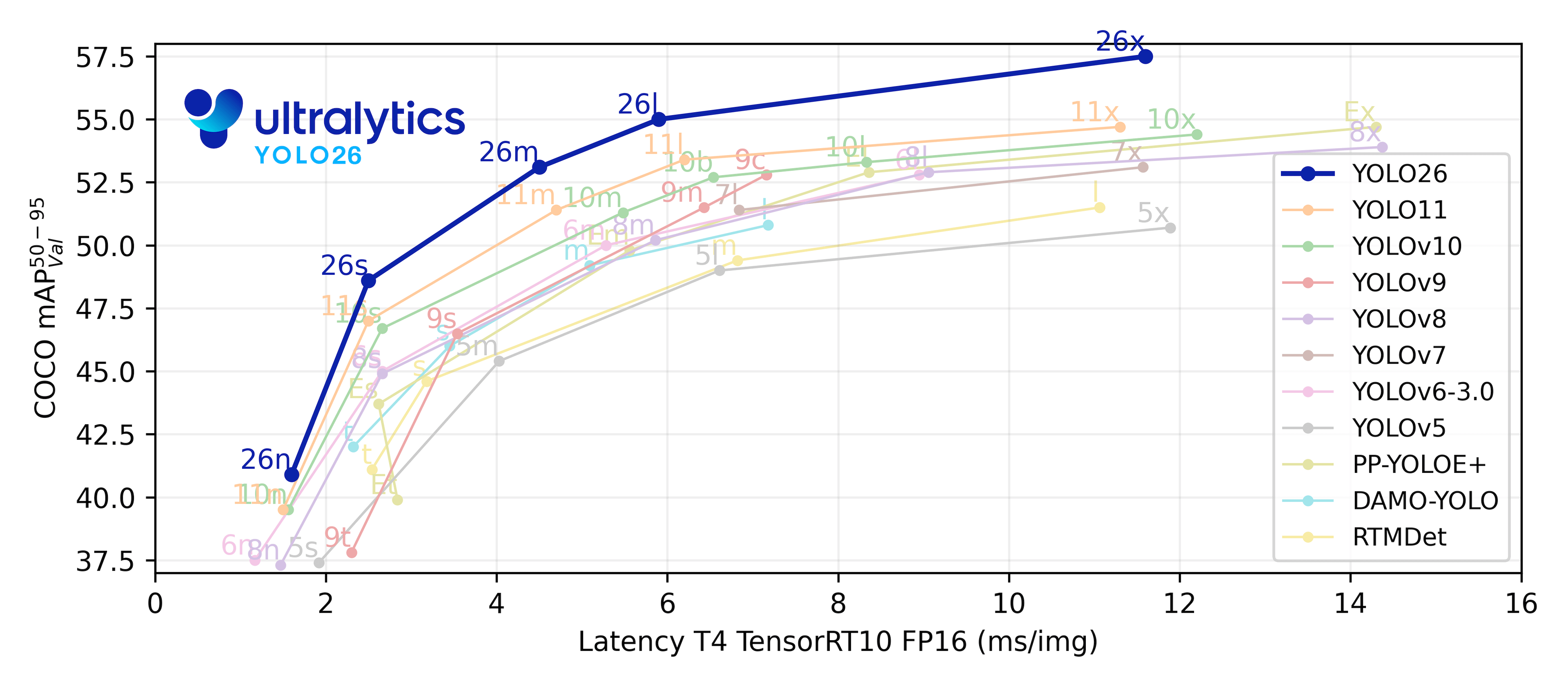

YOLO11 was released by Ultralytics on September 10, 2024, and delivers excellent accuracy, speed, and efficiency. Building on the advances of earlier YOLO versions, YOLO11 introduces significant improvements in architecture and training methods, making it a versatile choice for a wide range of computer vision tasks. For the latest Ultralytics model, which offers end-to-end NMS-free inference and optimized edge deployment, see YOLO26.

Try It on the Ultralytics Platform

Explore and run YOLO11 models directly on the Ultralytics Platform.

Key Features

- Enhanced Feature Extraction: YOLO11 uses an improved backbone and neck architecture that strengthens feature extraction, giving more precise object detection and better performance on complex tasks.

- Optimized for Efficiency and Speed: YOLO11 introduces refined architectural designs and optimized training pipelines, delivering faster processing while balancing accuracy and performance.

- Greater Accuracy with Fewer Parameters: Thanks to advances in model design, YOLO11m achieves a higher mean Average Precision (mAP) on the COCO dataset while using 22% fewer parameters than YOLOv8m, making it computationally efficient without sacrificing accuracy.

- Adaptability Across Environments: YOLO11 can be deployed seamlessly across a variety of environments, including edge devices, cloud platforms, and systems with NVIDIA GPUs, for maximum flexibility.

- Broad Range of Supported Tasks: Whether the task is object detection, instance segmentation, image classification, pose estimation, or oriented object detection (OBB), YOLO11 is designed to address a diverse set of computer vision challenges.

Supported Tasks and Modes

YOLO11 builds on the versatile model range established by earlier Ultralytics YOLO releases and offers enhanced support across various computer vision tasks:

| Model | Filenames | Task | Inference | Validation | Training | Export |
|---|---|---|---|---|---|---|
| YOLO11 | `yolo11n.pt` `yolo11s.pt` `yolo11m.pt` `yolo11l.pt` `yolo11x.pt` | Detection | ✅ | ✅ | ✅ | ✅ |
| YOLO11-seg | `yolo11n-seg.pt` `yolo11s-seg.pt` `yolo11m-seg.pt` `yolo11l-seg.pt` `yolo11x-seg.pt` | Instance Segmentation | ✅ | ✅ | ✅ | ✅ |
| YOLO11-pose | `yolo11n-pose.pt` `yolo11s-pose.pt` `yolo11m-pose.pt` `yolo11l-pose.pt` `yolo11x-pose.pt` | Pose/Keypoints | ✅ | ✅ | ✅ | ✅ |
| YOLO11-obb | `yolo11n-obb.pt` `yolo11s-obb.pt` `yolo11m-obb.pt` `yolo11l-obb.pt` `yolo11x-obb.pt` | Oriented Detection | ✅ | ✅ | ✅ | ✅ |
| YOLO11-cls | `yolo11n-cls.pt` `yolo11s-cls.pt` `yolo11m-cls.pt` `yolo11l-cls.pt` `yolo11x-cls.pt` | Classification | ✅ | ✅ | ✅ | ✅ |

This table gives an overview of the YOLO11 model variants, showing which task each one targets and its compatibility with the Inference, Validation, Training, and Export modes. This flexibility makes YOLO11 suitable for a wide range of computer vision applications, from real-time detection to complex segmentation tasks.
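The filenames in the table follow a regular pattern: `yolo11`, a size letter (`n`, `s`, `m`, `l`, `x`), an optional task suffix, and `.pt`. The following is a minimal sketch of that pattern. The helper `yolo11_weights` and the task keys are hypothetical names chosen for illustration and are not part of the `ultralytics` package.

```python
# Illustrative helper only; it is not part of the ultralytics package.
SIZES = ("n", "s", "m", "l", "x")

# Task suffixes as they appear in the filenames listed in the table.
# The dictionary keys are names chosen for this sketch.
TASK_SUFFIX = {
    "detect": "",
    "segment": "-seg",
    "pose": "-pose",
    "obb": "-obb",
    "classify": "-cls",
}


def yolo11_weights(size: str, task: str = "detect") -> str:
    """Return the pretrained weight filename for a model size and task."""
    if size not in SIZES:
        raise ValueError(f"unknown size {size!r}, expected one of {SIZES}")
    if task not in TASK_SUFFIX:
        raise ValueError(f"unknown task {task!r}, expected one of {sorted(TASK_SUFFIX)}")
    return f"yolo11{size}{TASK_SUFFIX[task]}.pt"


print(yolo11_weights("n"))             # yolo11n.pt
print(yolo11_weights("m", "segment"))  # yolo11m-seg.pt
```

The returned string is the kind of value that is passed to `YOLO(...)` in the usage examples later on this page.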

Performance Metrics

See the Detection docs. These models are trained on COCO and include 80 pretrained classes.

| Model | size (pixels) | mAP (val) 50-95 | Speed CPU ONNX (ms) | Speed T4 TensorRT10 (ms) | params (M) | FLOPs (B) |
|---|---|---|---|---|---|---|
| YOLO11n | 640 | 39.5 | 56.1 ± 0.8 | 1.5 ± 0.0 | 2.6 | 6.5 |
| YOLO11s | 640 | 47.0 | 90.0 ± 1.2 | 2.5 ± 0.0 | 9.4 | 21.5 |
| YOLO11m | 640 | 51.5 | 183.2 ± 2.0 | 4.7 ± 0.1 | 20.1 | 68.0 |
| YOLO11l | 640 | 53.4 | 238.6 ± 1.4 | 6.2 ± 0.1 | 25.3 | 86.9 |
| YOLO11x | 640 | 54.7 | 462.8 ± 6.7 | 11.3 ± 0.2 | 56.9 | 194.9 |
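One way to read the detection table is as an accuracy versus latency trade-off. The sketch below picks the most accurate detection model whose published T4 TensorRT10 latency fits a given budget. The function `pick_model` is a hypothetical helper, and the numbers are copied from the table; latency on other hardware will differ, so treat the result as a starting point rather than a guarantee.

```python
# Rows copied from the detection table:
# (model, mAP val 50-95, T4 TensorRT10 latency in ms, params in millions)
DETECTION_MODELS = [
    ("YOLO11n", 39.5, 1.5, 2.6),
    ("YOLO11s", 47.0, 2.5, 9.4),
    ("YOLO11m", 51.5, 4.7, 20.1),
    ("YOLO11l", 53.4, 6.2, 25.3),
    ("YOLO11x", 54.7, 11.3, 56.9),
]


def pick_model(max_latency_ms: float):
    """Return the most accurate model whose T4 latency fits the budget, or None."""
    candidates = [row for row in DETECTION_MODELS if row[2] <= max_latency_ms]
    if not candidates:
        return None
    # Among the models that fit, prefer the highest mAP.
    return max(candidates, key=lambda row: row[1])[0]


print(pick_model(5.0))  # YOLO11m
print(pick_model(1.0))  # None
```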

See the Segmentation docs. These models are trained on COCO and include 80 pretrained classes.

| Model | size (pixels) | mAP (box) 50-95 | mAP (mask) 50-95 | Speed CPU ONNX (ms) | Speed T4 TensorRT10 (ms) | params (M) | FLOPs (B) |
|---|---|---|---|---|---|---|---|
| YOLO11n-seg | 640 | 38.9 | 32.0 | 65.9 ± 1.1 | 1.8 ± 0.0 | 2.9 | 9.7 |
| YOLO11s-seg | 640 | 46.6 | 37.8 | 117.6 ± 4.9 | 2.9 ± 0.0 | 10.1 | 33.0 |
| YOLO11m-seg | 640 | 51.5 | 41.5 | 281.6 ± 1.2 | 6.3 ± 0.1 | 22.4 | 113.2 |
| YOLO11l-seg | 640 | 53.4 | 42.9 | 344.2 ± 3.2 | 7.8 ± 0.2 | 27.6 | 132.2 |
| YOLO11x-seg | 640 | 54.7 | 43.8 | 664.5 ± 3.2 | 15.8 ± 0.7 | 62.1 | 296.4 |

See the Classification docs. These models are trained on ImageNet and include 1000 pretrained classes.

| Model | size (pixels) | acc top1 | acc top5 | Speed CPU ONNX (ms) | Speed T4 TensorRT10 (ms) | params (M) | FLOPs (B) at 224 |
|---|---|---|---|---|---|---|---|
| YOLO11n-cls | 224 | 70.0 | 89.4 | 5.0 ± 0.3 | 1.1 ± 0.0 | 2.8 | 0.5 |
| YOLO11s-cls | 224 | 75.4 | 92.7 | 7.9 ± 0.2 | 1.3 ± 0.0 | 6.7 | 1.6 |
| YOLO11m-cls | 224 | 77.3 | 93.9 | 17.2 ± 0.4 | 2.0 ± 0.0 | 11.6 | 4.9 |
| YOLO11l-cls | 224 | 78.3 | 94.3 | 23.2 ± 0.3 | 2.8 ± 0.0 | 14.1 | 6.2 |
| YOLO11x-cls | 224 | 79.5 | 94.9 | 41.4 ± 0.9 | 3.8 ± 0.0 | 29.6 | 13.6 |

See the Pose Estimation docs. These models are trained on COCO and include one pretrained class, 'person'.

| Model | size (pixels) | mAP (pose) 50-95 | mAP (pose) 50 | Speed CPU ONNX (ms) | Speed T4 TensorRT10 (ms) | params (M) | FLOPs (B) |
|---|---|---|---|---|---|---|---|
| YOLO11n-pose | 640 | 50.0 | 81.0 | 52.4 ± 0.5 | 1.7 ± 0.0 | 2.9 | 7.4 |
| YOLO11s-pose | 640 | 58.9 | 86.3 | 90.5 ± 0.6 | 2.6 ± 0.0 | 9.9 | 23.1 |
| YOLO11m-pose | 640 | 64.9 | 89.4 | 187.3 ± 0.8 | 4.9 ± 0.1 | 20.9 | 71.4 |
| YOLO11l-pose | 640 | 66.1 | 89.9 | 247.7 ± 1.1 | 6.4 ± 0.1 | 26.1 | 90.3 |
| YOLO11x-pose | 640 | 69.5 | 91.1 | 488.0 ± 13.9 | 12.1 ± 0.2 | 58.8 | 202.8 |

See the Oriented Detection docs. These models are trained on DOTAv1 and include 15 pretrained classes.

| Model | size (pixels) | mAP (test) 50 | Speed CPU ONNX (ms) | Speed T4 TensorRT10 (ms) | params (M) | FLOPs (B) |
|---|---|---|---|---|---|---|
| YOLO11n-obb | 1024 | 78.4 | 117.6 ± 0.8 | 4.4 ± 0.0 | 2.7 | 16.8 |
| YOLO11s-obb | 1024 | 79.5 | 219.4 ± 4.0 | 5.1 ± 0.0 | 9.7 | 57.1 |
| YOLO11m-obb | 1024 | 80.9 | 562.8 ± 2.9 | 10.1 ± 0.4 | 20.9 | 182.8 |
| YOLO11l-obb | 1024 | 81.0 | 712.5 ± 5.0 | 13.5 ± 0.6 | 26.1 | 231.2 |
| YOLO11x-obb | 1024 | 81.3 | 1408.6 ± 7.7 | 28.6 ± 1.0 | 58.8 | 519.1 |

Usage Examples

This section provides simple YOLO11 training and inference examples. For full documentation on these and other modes, see the Predict, Train, Val, and Export docs pages.

Note that the example below uses YOLO11 Detect models for object detection. For the other supported tasks, see the Segment, Classify, OBB, and Pose docs.

Example

PyTorch pretrained `*.pt` models as well as configuration `*.yaml` files can be passed to the `YOLO()` class to create a model instance in Python:

    from ultralytics import YOLO

    # Load a COCO-pretrained YOLO11n model
    model = YOLO("yolo11n.pt")

    # Train the model on the COCO8 example dataset for 100 epochs
    results = model.train(data="coco8.yaml", epochs=100, imgsz=640)

    # Run inference with the YOLO11n model on the 'bus.jpg' image
    results = model("path/to/bus.jpg")

CLI commands are available to run the models directly:

    # Load a COCO-pretrained YOLO11n model and train it on the COCO8 example dataset for 100 epochs
    yolo train model=yolo11n.pt data=coco8.yaml epochs=100 imgsz=640

    # Load a COCO-pretrained YOLO11n model and run inference on the 'bus.jpg' image
    yolo predict model=yolo11n.pt source=path/to/bus.jpg
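The examples above cover the Train and Predict modes. As a complement, the following is a minimal sketch of Val mode for a detection model. The wrapper `validate_detector` is a hypothetical name introduced here; it assumes the `ultralytics` package is installed and that the weights and the `coco8.yaml` dataset can be downloaded on first use.

```python
def validate_detector(weights: str = "yolo11n.pt", data: str = "coco8.yaml") -> dict:
    """Validate a YOLO11 detection model and return its headline box metrics."""
    # Imported lazily so that defining this function does not require ultralytics.
    from ultralytics import YOLO

    model = YOLO(weights)
    # Run Val mode on the validation split described by the dataset YAML.
    metrics = model.val(data=data, imgsz=640)
    return {
        "mAP50-95": float(metrics.box.map),  # mean AP averaged over IoU 0.50 to 0.95
        "mAP50": float(metrics.box.map50),   # mean AP at IoU 0.50
    }
```

Calling `validate_detector()` downloads the weights and dataset if needed and returns a small dictionary. The values depend on the dataset, so they will not match the COCO numbers in the tables above when run on COCO8.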

Citations and Acknowledgments

Ultralytics YOLO11 Publication

Ultralytics has not published a formal research paper for YOLO11 because the models evolve rapidly. We focus on advancing the technology and making it easier to use rather than on producing static documentation. For the most up-to-date information on YOLO architecture, features, and usage, please refer to our GitHub repository and documentation.

If you use YOLO11 or any other software from this repository in your work, please cite it using the following format:

    @software{yolo11_ultralytics,
      author = {Glenn Jocher and Jing Qiu},
      title = {Ultralytics YOLO11},
      version = {11.0.0},
      year = {2024},
      url = {https://github.com/ultralytics/ultralytics},
      orcid = {0000-0001-5950-6979, 0000-0003-3783-7069},
      license = {AGPL-3.0}
    }

Please note that the DOI is pending and will be added to the citation once it is available. YOLO11 models are provided under AGPL-3.0 and Enterprise licenses.

FAQ

What are the key improvements in Ultralytics YOLO11 compared to YOLOv8?

Ultralytics YOLO11 introduces several significant advances over YOLOv8. The key improvements are:

- Enhanced Feature Extraction: YOLO11 uses an improved backbone and neck architecture that strengthens feature extraction for more precise object detection.

- Optimized Efficiency and Speed: Refined architectural designs and optimized training pipelines deliver faster processing while balancing accuracy and performance.

- Greater Accuracy with Fewer Parameters: YOLO11m achieves a higher mean Average Precision (mAP) on the COCO dataset with 22% fewer parameters than YOLOv8m, making it computationally efficient without sacrificing accuracy.

- Adaptability Across Environments: YOLO11 can be deployed across a variety of environments, including edge devices, cloud platforms, and systems with NVIDIA GPUs.

- Broad Range of Supported Tasks: YOLO11 supports diverse computer vision tasks such as object detection, instance segmentation, image classification, pose estimation, and oriented object detection (OBB).

How do I train a YOLO11 model for object detection?

A YOLO11 model can be trained for object detection using Python or CLI commands. Examples of both methods follow:

Example

Python:

    from ultralytics import YOLO

    # Load a COCO-pretrained YOLO11n model
    model = YOLO("yolo11n.pt")

    # Train the model on the COCO8 example dataset for 100 epochs
    results = model.train(data="coco8.yaml", epochs=100, imgsz=640)

CLI:

    # Load a COCO-pretrained YOLO11n model and train it on the COCO8 example dataset for 100 epochs
    yolo train model=yolo11n.pt data=coco8.yaml epochs=100 imgsz=640

For more detailed instructions, see the Train docs.

What tasks can YOLO11 models perform?

YOLO11 models are versatile and support a wide range of computer vision tasks, including:

- Object Detection: Identifying and locating objects within an image.

- Instance Segmentation: Detecting objects and delineating their boundaries.

- Image Classification: Assigning images to predefined classes.

- Pose Estimation: Detecting and tracking keypoints on the human body.

- Oriented Object Detection (OBB): Detecting objects with rotation for higher precision.

For more information on each task, see the Detection, Instance Segmentation, Classification, Pose Estimation, and Oriented Detection docs.
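Each task produces a different kind of output. In the `ultralytics` package, a prediction result exposes these outputs through the attributes `boxes`, `masks`, `probs`, `keypoints`, and `obb`. The sketch below maps each task to its attribute. The helper `task_output`, the task keys, and the stand-in result object are illustrative assumptions so that the example runs without downloading a model.

```python
from types import SimpleNamespace

# Attribute of an Ultralytics result object that holds each task's main output.
# The dictionary keys are names chosen for this sketch.
TASK_OUTPUT = {
    "detect": "boxes",
    "segment": "masks",
    "classify": "probs",
    "pose": "keypoints",
    "obb": "obb",
}


def task_output(result, task: str):
    """Return the task-specific output from a result object, or None if absent."""
    return getattr(result, TASK_OUTPUT[task], None)


# Stand-in object that mimics a detection result; it is not a real model output.
fake_result = SimpleNamespace(boxes=["box-0", "box-1"], masks=None)
print(task_output(fake_result, "detect"))   # ['box-0', 'box-1']
print(task_output(fake_result, "segment"))  # None
```

With a real model, `result` would be one element of the list returned by `model("path/to/bus.jpg")`. Segmentation and pose results also carry `boxes` alongside their masks or keypoints.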

How does YOLO11 achieve greater accuracy with fewer parameters?

YOLO11 achieves greater accuracy with fewer parameters through advances in model design and optimization techniques. The improved architecture enables efficient feature extraction and processing, yielding a higher mean Average Precision (mAP) on datasets such as COCO while using 22% fewer parameters than YOLOv8m. This makes YOLO11 computationally efficient without sacrificing accuracy, and therefore suitable for deployment on resource-constrained devices.
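The 22% figure can be checked with simple arithmetic. The sketch below uses the YOLO11m parameter count from the detection table (20.1 M). The YOLOv8m count of 25.9 M is an assumption taken from outside this page, so verify it against the YOLOv8 documentation before relying on the result.

```python
YOLO11M_PARAMS_M = 20.1  # from the detection table in this document
YOLOV8M_PARAMS_M = 25.9  # ASSUMPTION: not stated in this document


def percent_fewer(new: float, old: float) -> float:
    """Percentage by which `new` is smaller than `old`."""
    return (old - new) / old * 100.0


reduction = percent_fewer(YOLO11M_PARAMS_M, YOLOV8M_PARAMS_M)
print(f"{reduction:.1f}% fewer parameters")  # 22.4% fewer parameters
```

Under that assumption the reduction comes out at roughly 22%, consistent with the claim above.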

Can YOLO11 be deployed on edge devices?

Yes, YOLO11 is designed to adapt to a variety of environments, including edge devices. Its optimized architecture and efficient processing make it suitable for deployment on edge devices, cloud platforms, and systems with NVIDIA GPUs. This flexibility allows YOLO11 to be used in applications ranging from real-time detection on mobile devices to complex segmentation tasks in cloud environments. For more details on deployment options, see the Export docs.
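As a starting point for deployment, a model can be converted with Export mode. The following is a minimal sketch that exports to ONNX, the format used for the CPU speed column in the performance tables. The wrapper `export_to_onnx` is a hypothetical name; it assumes the `ultralytics` package and its ONNX export dependencies are installed. Other target formats are listed in the Export docs.

```python
def export_to_onnx(weights: str = "yolo11n.pt", imgsz: int = 640) -> str:
    """Export a YOLO11 model to ONNX and return the path of the exported file."""
    # Imported lazily so that defining this function does not require ultralytics.
    from ultralytics import YOLO

    model = YOLO(weights)
    # Export mode writes the converted model next to the weights and returns its path.
    exported_path = model.export(format="onnx", imgsz=imgsz)
    return str(exported_path)
```

The returned path can then be passed to `YOLO(...)` to run inference with the exported model, in the same way as the `.pt` weights in the usage examples.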