Instance Segmentation

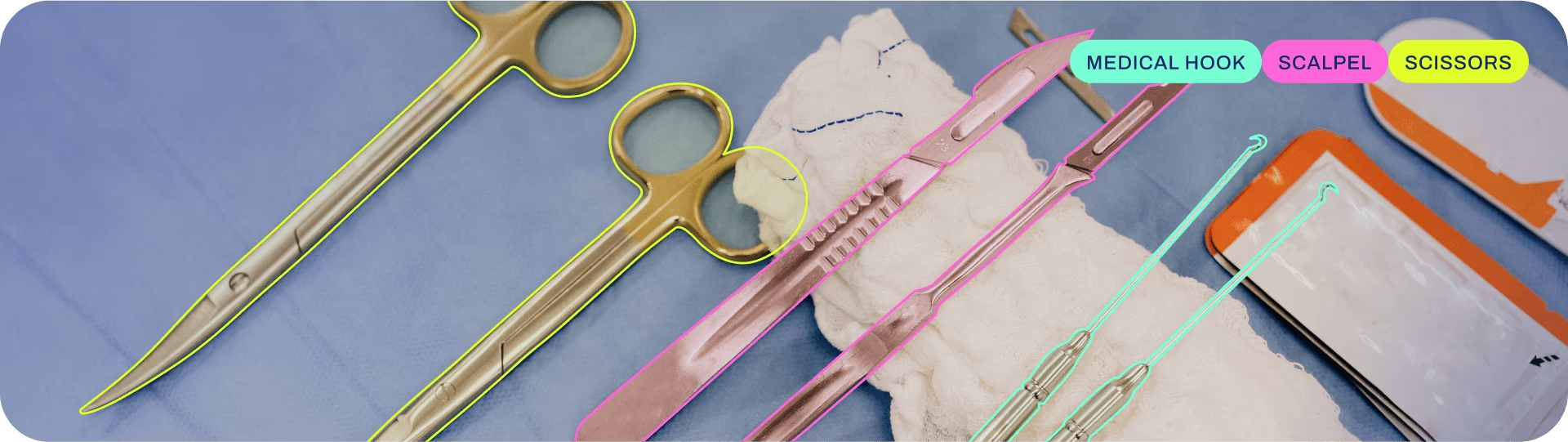

Instance segmentation goes a step beyond object detection: it identifies individual objects in an image and segments them from the rest of the image.

The output of an instance segmentation model is a set of masks or contours that outline each object in the image, along with a class label and confidence score for each object. Instance segmentation is useful when you need to know not only where objects are in an image, but also their exact shape.

Watch: Run segmentation with a pretrained Ultralytics YOLO model in Python.

Tip

YOLO26 Segment models use the -seg suffix, e.g. yolo26n-seg.pt, and are pretrained on COCO.

Models

The pretrained YOLO26 Segment models are shown here. Detect, Segment, and Pose models are pretrained on the COCO dataset, while Classify models are pretrained on the ImageNet dataset.

Models download automatically from the latest Ultralytics release on first use.

| Model | size (pixels) | mAPbox 50-95 (e2e) | mAPmask 50 (e2e) | Speed CPU ONNX (ms) | Speed T4 TensorRT10 (ms) | params (M) | FLOPs (B) |
|---|---|---|---|---|---|---|---|
| YOLO26n-seg | 640 | 39.6 | 33.9 | 53.3 ± 0.5 | 2.1 ± 0.0 | 2.7 | 9.1 |
| YOLO26s-seg | 640 | 47.3 | 40.0 | 118.4 ± 0.9 | 3.3 ± 0.0 | 10.4 | 34.2 |
| YOLO26m-seg | 640 | 52.5 | 44.1 | 328.2 ± 2.4 | 6.7 ± 0.1 | 23.6 | 121.5 |
| YOLO26l-seg | 640 | 54.4 | 45.5 | 387.0 ± 3.7 | 8.0 ± 0.1 | 28.0 | 139.8 |
| YOLO26x-seg | 640 | 56.5 | 47.0 | 787.0 ± 6.8 | 16.4 ± 0.1 | 62.8 | 313.5 |

- mAPval values are for single-model, single-scale evaluation on the COCO val2017 dataset. Reproduce with: yolo val segment data=coco.yaml device=0

- Speed is averaged over COCO val images using an Amazon EC2 P4d instance. Reproduce with: yolo val segment data=coco.yaml batch=1 device=0|cpu

- Params and FLOPs values are for the fused model after model.fuse(), which merges the Conv and BatchNorm layers and, for end-to-end models, removes the auxiliary one-to-many detection head. Pretrained checkpoints retain the full training architecture and may show higher counts.
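The Conv and BatchNorm fusion mentioned in the last note can be illustrated with scalar arithmetic. The sketch below is not the Ultralytics implementation: it assumes a single-channel 1x1 convolution (a plain multiply-add) followed by inference-mode batch normalization, and every name and number in it is made up for the illustration.

```python
import math


def conv_bn(x, w, b, gamma, beta, mean, var, eps=1e-5):
    """Apply a 1x1 single-channel conv (w * x + b), then inference-mode BatchNorm."""
    y = w * x + b
    return gamma * (y - mean) / math.sqrt(var + eps) + beta


def fuse(w, b, gamma, beta, mean, var, eps=1e-5):
    """Fold the BatchNorm statistics into the conv weight and bias."""
    scale = gamma / math.sqrt(var + eps)
    return w * scale, (b - mean) * scale + beta


# Hypothetical layer parameters, chosen only for this demonstration.
params = dict(w=0.8, b=0.1, gamma=1.5, beta=-0.2, mean=0.3, var=0.25)
w_fused, b_fused = fuse(**params)

x = 2.0
unfused = conv_bn(x, **params)   # two operations: conv, then BatchNorm
fused = w_fused * x + b_fused    # one operation with folded parameters
print(unfused, fused)
```

Both paths produce the same output up to floating-point error, which is why a fused model can report fewer parameters and FLOPs without changing its predictions.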

Train

Train YOLO26n-seg on the COCO8-seg dataset for 100 epochs at image size 640. For a full list of available arguments, see the Configuration page.

Example

from ultralytics import YOLO

# Load a model

model = YOLO("yolo26n-seg.yaml") # build a new model from YAML

model = YOLO("yolo26n-seg.pt") # load a pretrained model (recommended for training)

model = YOLO("yolo26n-seg.yaml").load("yolo26n-seg.pt") # build from YAML and transfer weights

# Train the model

results = model.train(data="coco8-seg.yaml", epochs=100, imgsz=640)

# Build a new model from YAML and start training from scratch

yolo segment train data=coco8-seg.yaml model=yolo26n-seg.yaml epochs=100 imgsz=640

# Start training from a pretrained *.pt model

yolo segment train data=coco8-seg.yaml model=yolo26n-seg.pt epochs=100 imgsz=640

# Build a new model from YAML, transfer pretrained weights to it and start training

yolo segment train data=coco8-seg.yaml model=yolo26n-seg.yaml pretrained=yolo26n-seg.pt epochs=100 imgsz=640

See full train mode details on the Train page. Segmentation models can also be trained on cloud GPUs through Ultralytics Platform.

Dataset format

The YOLO segmentation dataset format is described in detail in the Dataset Guide. To convert an existing dataset from another format (such as COCO) to YOLO format, use the Ultralytics JSON2YOLO tool. You can also create segmentation masks in Ultralytics using polygon tools and SAM smart annotation.
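As a rough illustration of the target format: a YOLO segmentation label file holds one object per line, written as a class index followed by the polygon's x y pairs normalized to the image width and height. The helper below is a hypothetical sketch of that conversion for a single polygon; it is not part of JSON2YOLO, and the function name and sample triangle are assumptions.

```python
def polygon_to_yolo_line(class_id, polygon, img_w, img_h):
    """Return one YOLO segmentation label line: class index, then normalized x y pairs."""
    coords = []
    for x, y in polygon:
        coords.append(f"{x / img_w:.6f}")  # normalize x by image width
        coords.append(f"{y / img_h:.6f}")  # normalize y by image height
    return " ".join([str(class_id), *coords])


# Hypothetical triangle in pixel coordinates on a 640x480 image.
line = polygon_to_yolo_line(0, [(64, 48), (320, 48), (320, 240)], 640, 480)
print(line)  # 0 0.100000 0.100000 0.500000 0.100000 0.500000 0.500000
```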

Val

Validate the accuracy of the trained YOLO26n-seg model on the COCO8-seg dataset. No arguments are needed, because the model retains its training data and arguments as model attributes.

Example

from ultralytics import YOLO

# Load a model

model = YOLO("yolo26n-seg.pt") # load an official model

model = YOLO("path/to/best.pt") # load a custom model

# Validate the model

metrics = model.val() # no arguments needed, dataset and settings remembered

metrics.box.map # map50-95(B)

metrics.box.map50 # map50(B)

metrics.box.map75 # map75(B)

metrics.box.maps # a list containing mAP50-95(B) for each category

metrics.box.image_metrics # per-image metrics dictionary for det with precision, recall, F1, TP, FP, and FN

metrics.seg.map # map50-95(M)

metrics.seg.map50 # map50(M)

metrics.seg.map75 # map75(M)

metrics.seg.maps # a list containing mAP50-95(M) for each category

metrics.seg.image_metrics # per-image metrics dictionary for seg with precision, recall, F1, TP, FP, and FN

yolo segment val model=yolo26n-seg.pt # val official model

yolo segment val model=path/to/best.pt # val custom model
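The mask metrics above (the values marked M) depend on how closely each predicted mask overlaps its ground-truth mask, usually measured as intersection over union (IoU). The following is a minimal sketch of mask IoU on plain nested lists; it is not the validator's own code, and the two small masks are invented for the example.

```python
def mask_iou(mask_a, mask_b):
    """Intersection over union of two equally sized binary masks (rows of 0/1)."""
    inter = union = 0
    for row_a, row_b in zip(mask_a, mask_b):
        for a, b in zip(row_a, row_b):
            inter += a & b  # pixel set in both masks
            union += a | b  # pixel set in at least one mask
    return inter / union if union else 0.0


# Hypothetical 4x4 masks: the prediction is shifted one column from the ground truth.
pred = [[0, 1, 1, 0], [0, 1, 1, 0], [0, 0, 0, 0], [0, 0, 0, 0]]
gt = [[0, 0, 1, 1], [0, 0, 1, 1], [0, 0, 0, 0], [0, 0, 0, 0]]
iou = mask_iou(pred, gt)
print(round(iou, 3))  # 0.333
```

A prediction counts as a match at a given threshold (for example 0.5 for mAP50) only if its IoU with a ground-truth mask reaches that threshold.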

Predict

Use a trained YOLO26n-seg model to run predictions on images.

Example

from ultralytics import YOLO

# Load a model

model = YOLO("yolo26n-seg.pt") # load an official model

model = YOLO("path/to/best.pt") # load a custom model

# Predict with the model

results = model("https://ultralytics.com/images/bus.jpg") # predict on an image

# Access the results

for result in results:
    xy = result.masks.xy  # mask in polygon format
    xyn = result.masks.xyn  # normalized
    masks = result.masks.data  # mask in matrix format (num_objects x H x W)

yolo segment predict model=yolo26n-seg.pt source='https://ultralytics.com/images/bus.jpg' # predict with official model

yolo segment predict model=path/to/best.pt source='https://ultralytics.com/images/bus.jpg' # predict with custom model

See full predict mode details on the Predict page.
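Once you have a mask in polygon format, a common next step is to measure the object. The sketch below computes a polygon's area with the shoelace formula; the sample polygon is a hypothetical stand-in for one entry of result.masks.xy (a sequence of pixel-coordinate x, y pairs), so the code runs without a model.

```python
def polygon_area(points):
    """Area of a simple polygon from its (x, y) vertices, via the shoelace formula."""
    total = 0.0
    n = len(points)
    for i in range(n):
        x0, y0 = points[i]
        x1, y1 = points[(i + 1) % n]  # wrap around to close the polygon
        total += x0 * y1 - x1 * y0
    return abs(total) / 2.0


# Hypothetical polygon: a 100x50 pixel rectangle.
polygon = [(10.0, 10.0), (110.0, 10.0), (110.0, 60.0), (10.0, 60.0)]
area = polygon_area(polygon)
print(area)  # 5000.0
```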

Export

Export a YOLO26n-seg model to a different format such as ONNX or CoreML.

Example

from ultralytics import YOLO

# Load a model

model = YOLO("yolo26n-seg.pt") # load an official model

model = YOLO("path/to/best.pt") # load a custom-trained model

# Export the model

model.export(format="onnx")

yolo export model=yolo26n-seg.pt format=onnx # export official model

yolo export model=path/to/best.pt format=onnx # export custom-trained model

The available YOLO26-seg export formats are listed in the table below. You can export to any format using the format argument, e.g. format='onnx' or format='engine'. You can predict or validate directly on exported models, e.g. yolo predict model=yolo26n-seg.onnx. Usage examples for your model are shown after the export completes.

| Format | format Argument | Model | Metadata | Arguments |
|---|---|---|---|---|
| PyTorch | - | yolo26n-seg.pt | ✅ | - |
| TorchScript | torchscript | yolo26n-seg.torchscript | ✅ | imgsz, half, dynamic, optimize, nms, batch, device |
| ONNX | onnx | yolo26n-seg.onnx | ✅ | imgsz, half, dynamic, simplify, opset, nms, batch, device |
| OpenVINO | openvino | yolo26n-seg_openvino_model/ | ✅ | imgsz, half, dynamic, int8, nms, batch, data, fraction, device |
| TensorRT | engine | yolo26n-seg.engine | ✅ | imgsz, half, dynamic, simplify, workspace, int8, nms, batch, data, fraction, device |
| CoreML | coreml | yolo26n-seg.mlpackage | ✅ | imgsz, dynamic, half, int8, nms, batch, device |
| TF SavedModel | saved_model | yolo26n-seg_saved_model/ | ✅ | imgsz, keras, int8, nms, batch, device |
| TF GraphDef | pb | yolo26n-seg.pb | ❌ | imgsz, batch, device |
| TF Lite | tflite | yolo26n-seg.tflite | ✅ | imgsz, half, int8, nms, batch, data, fraction, device |
| TF Edge TPU | edgetpu | yolo26n-seg_edgetpu.tflite | ✅ | imgsz, device |
| TF.js | tfjs | yolo26n-seg_web_model/ | ✅ | imgsz, half, int8, nms, batch, device |
| PaddlePaddle | paddle | yolo26n-seg_paddle_model/ | ✅ | imgsz, batch, device |
| MNN | mnn | yolo26n-seg.mnn | ✅ | imgsz, batch, int8, half, device |
| NCNN | ncnn | yolo26n-seg_ncnn_model/ | ✅ | imgsz, half, batch, device |
| IMX500 | imx | yolo26n-seg_imx_model/ | ✅ | imgsz, int8, data, fraction, nms, device |
| RKNN | rknn | yolo26n-seg_rknn_model/ | ✅ | imgsz, batch, name, device |
| ExecuTorch | executorch | yolo26n-seg_executorch_model/ | ✅ | imgsz, batch, device |
| Axelera | axelera | yolo26n-seg_axelera_model/ | ✅ | imgsz, batch, int8, data, fraction, device |

See full export details on the Export page.

FAQ

How do I train a YOLO26 segmentation model on a custom dataset?

To train a YOLO26 segmentation model on a custom dataset, first prepare the dataset in the YOLO segmentation format. You can use tools such as JSON2YOLO to convert datasets from other formats. Once the dataset is ready, train the model using Python or CLI commands:

Example

from ultralytics import YOLO

# Load a pretrained YOLO26 segment model

model = YOLO("yolo26n-seg.pt")

# Train the model

results = model.train(data="path/to/your_dataset.yaml", epochs=100, imgsz=640)

yolo segment train data=path/to/your_dataset.yaml model=yolo26n-seg.pt epochs=100 imgsz=640

See the Configuration page for more available arguments.
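The data argument points at a dataset YAML file. As a hedged sketch, the snippet below builds the text of such a file with the keys Ultralytics dataset configs commonly use (path, train, val, names); the directory layout and the class names are placeholders, not values taken from this page.

```python
def build_dataset_yaml(root, train_dir, val_dir, class_names):
    """Return dataset YAML text with a root path, image folders, and indexed class names."""
    lines = [
        f"path: {root}",        # dataset root directory
        f"train: {train_dir}",  # training images, relative to the root
        f"val: {val_dir}",      # validation images, relative to the root
        "names:",
    ]
    lines += [f"  {i}: {name}" for i, name in enumerate(class_names)]
    return "\n".join(lines) + "\n"


# Hypothetical dataset layout and classes.
yaml_text = build_dataset_yaml(
    "path/to/dataset", "images/train", "images/val", ["scratch", "dent"]
)
print(yaml_text)
```

Saving this text as your_dataset.yaml gives a file you could pass as data=path/to/your_dataset.yaml, provided the folders and label files actually exist at those locations.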

What is the difference between object detection and instance segmentation in YOLO26?

Object detection identifies and localizes objects in an image by drawing bounding boxes around them, whereas instance segmentation also outlines the exact shape of each object. YOLO26 instance segmentation models provide masks or contours that outline each detected object, which is particularly useful for tasks where the precise shape of objects matters, such as medical imaging or autonomous driving.
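To make the difference concrete, the sketch below derives the tightest bounding box from a binary mask and compares the two areas. The 5x5 mask is an invented example and the helper name is hypothetical; the point is only that a box covers background pixels that a mask excludes.

```python
def mask_to_box(mask):
    """Tightest axis-aligned box (x_min, y_min, x_max, y_max) around the nonzero pixels."""
    ys = [y for y, row in enumerate(mask) if any(row)]
    xs = [x for row in mask for x, v in enumerate(row) if v]
    return min(xs), min(ys), max(xs), max(ys)


# Hypothetical 5x5 mask of a triangular object.
mask = [
    [1, 0, 0, 0, 0],
    [1, 1, 0, 0, 0],
    [1, 1, 1, 0, 0],
    [1, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
]
x0, y0, x1, y1 = mask_to_box(mask)
box_area = (x1 - x0 + 1) * (y1 - y0 + 1)  # pixels covered by the box
mask_area = sum(map(sum, mask))           # pixels that belong to the object
print(box_area, mask_area)  # 16 10
```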

Why use YOLO26 for instance segmentation?

Ultralytics YOLO26 is a state-of-the-art model known for its high accuracy and real-time performance, which makes it well suited to instance segmentation tasks. YOLO26 Segment models come pretrained on the COCO dataset, which supports robust performance across a variety of objects. YOLO also supports training, validation, prediction, and export with seamless integration, making it versatile for both research and industry applications.

How do I load and validate a pretrained YOLO segmentation model?

Loading and validating a pretrained YOLO segmentation model is straightforward. Here is how to do it using Python and the CLI:

Example

from ultralytics import YOLO

# Load a pretrained model

model = YOLO("yolo26n-seg.pt")

# Validate the model

metrics = model.val()

print("Mean Average Precision for boxes:", metrics.box.map)

print("Mean Average Precision for masks:", metrics.seg.map)

yolo segment val model=yolo26n-seg.pt

These steps give you validation metrics such as mean Average Precision (mAP), which is crucial for evaluating model performance.

How can I export a YOLO segmentation model to ONNX format?

Exporting a YOLO segmentation model to ONNX format is simple and can be done using Python or CLI commands:

Example

from ultralytics import YOLO

# Load a pretrained model

model = YOLO("yolo26n-seg.pt")

# Export the model to ONNX format

model.export(format="onnx")

yolo export model=yolo26n-seg.pt format=onnx

For more details on exporting to various formats, see the Export page.