MNN Export for YOLO26 Models and Deployment

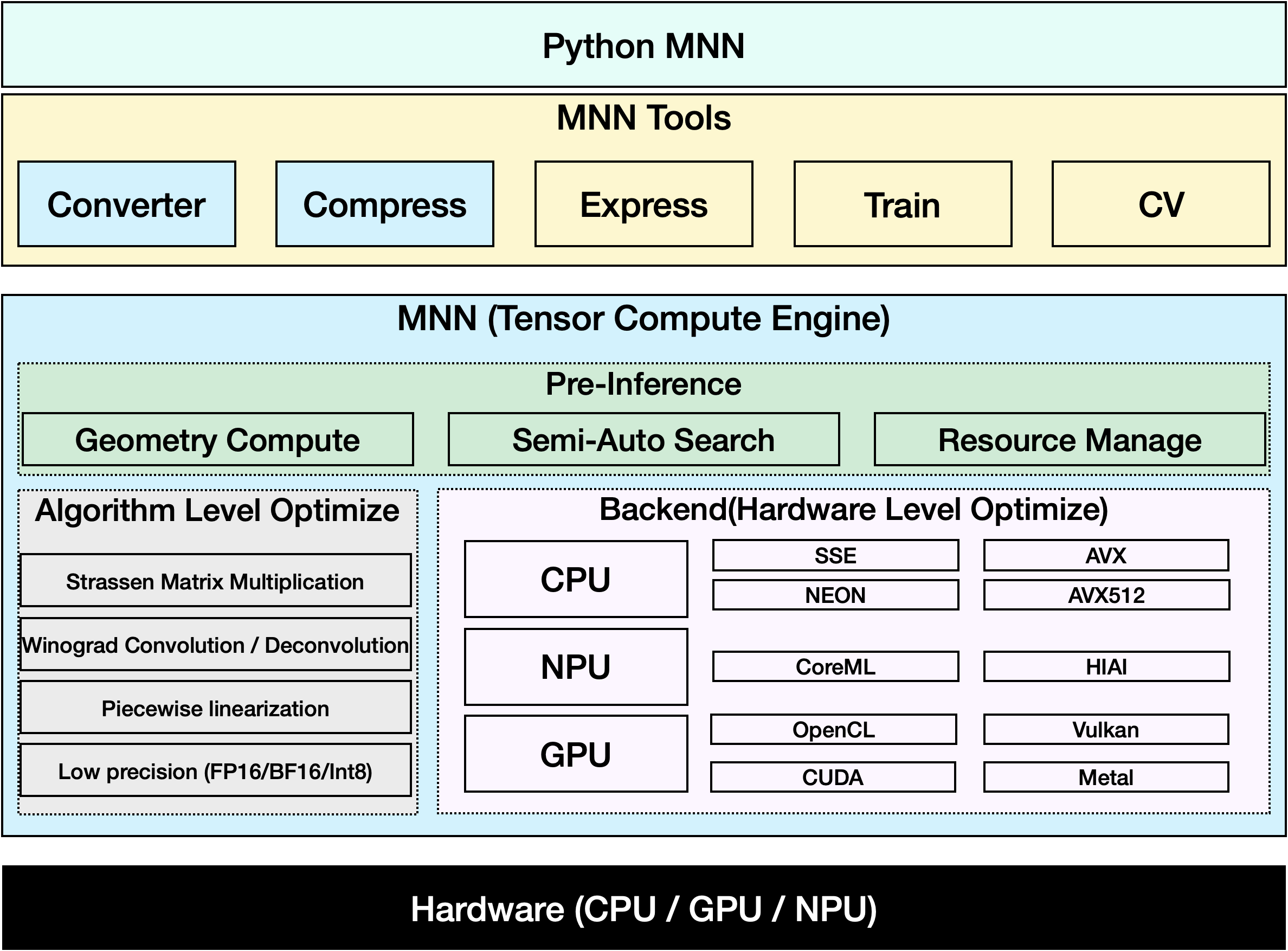

MNN

MNN is a highly efficient and lightweight deep learning framework. It supports both inference and training of deep learning models and offers industry-leading performance for on-device inference and training. At present, MNN is integrated into more than 30 Alibaba Inc apps, such as Taobao, Tmall, Youku, DingTalk, and Xianyu, covering more than 70 usage scenarios including live streaming, short video capture, search recommendation, product search by image, interactive marketing, equity distribution, and security risk control. MNN is also used on embedded devices, such as IoT devices.

Watch: How to Export Ultralytics YOLO26 to MNN Format | Speed Up Inference on Mobile Devices📱

Export to MNN: Converting Your YOLO26 Model

You can expand model compatibility and deployment flexibility by converting Ultralytics YOLO models to MNN format. The conversion optimizes your models for mobile and embedded environments so that they run efficiently on resource-constrained devices.

Installation

To install the required packages, run:


# Install the required package for YOLO26 and MNN

pip install ultralytics

pip install MNN
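After installing, it can be useful to confirm that both packages are visible to the Python interpreter you plan to use. The following is a minimal sketch using only the standard library; the helper name `missing_packages` is hypothetical and not part of either package, and it assumes the import names are `ultralytics` and `MNN`, as used elsewhere in this guide.

```python
import importlib.util


def missing_packages(names=("ultralytics", "MNN")):
    """Return the names from `names` that cannot be located for import."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_packages()
    if missing:
        print("Missing packages:", ", ".join(missing))
    else:
        print("ultralytics and MNN are importable.")
```

The check only looks the packages up and does not import them, so it stays fast even in environments where importing a deep learning framework is slow.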

Usage

All Ultralytics YOLO26 models are designed to support export natively, which makes it straightforward to integrate them into your preferred deployment workflow. You can view the full list of supported export formats and configuration options to choose the best setup for your application.


from ultralytics import YOLO

# Load the YOLO26 model

model = YOLO("yolo26n.pt")

# Export the model to MNN format

model.export(format="mnn") # creates 'yolo26n.mnn'

# Load the exported MNN model

mnn_model = YOLO("yolo26n.mnn")

# Run inference

results = mnn_model("https://ultralytics.com/images/bus.jpg")

# Export a YOLO26n PyTorch model to MNN format

yolo export model=yolo26n.pt format=mnn # creates 'yolo26n.mnn'

# Run inference with the exported model

yolo predict model='yolo26n.mnn' source='https://ultralytics.com/images/bus.jpg'

Export Arguments

| Argument | Type | Default | Description |
|---|---|---|---|
| `format` | `str` | `'mnn'` | Target format for the exported model, defining compatibility with various deployment environments. |
| `imgsz` | `int` or `tuple` | `640` | Desired image size for the model input. Can be an integer for square images or a tuple `(height, width)` for specific dimensions. |
| `half` | `bool` | `False` | Enables FP16 (half-precision) quantization, reducing model size and potentially speeding up inference on supported hardware. |
| `int8` | `bool` | `False` | Enables INT8 quantization, further compressing the model and speeding up inference, usually with only a small accuracy loss; intended primarily for edge devices. |
| `batch` | `int` | `1` | Batch size used for inference with the exported model, or the maximum number of images the exported model processes concurrently in `predict` mode. |
| `device` | `str` | `None` | Device used for the export: GPU (`device=0`), CPU (`device=cpu`), or MPS for Apple silicon (`device=mps`). |

For more details about the export process, visit the Ultralytics documentation page on exporting.
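One way to keep export settings explicit is to merge overrides into the defaults from the table and reject anything the table does not list. The sketch below is illustrative only: `MNN_EXPORT_DEFAULTS` and `mnn_export_args` are hypothetical names, and the real exporter may accept arguments beyond those shown in the table.

```python
# Defaults copied from the export arguments table in this guide.
MNN_EXPORT_DEFAULTS = {
    "format": "mnn",
    "imgsz": 640,
    "half": False,
    "int8": False,
    "batch": 1,
    "device": None,
}


def mnn_export_args(**overrides):
    """Merge overrides into the table defaults, rejecting unlisted names."""
    unknown = sorted(set(overrides) - set(MNN_EXPORT_DEFAULTS))
    if unknown:
        raise ValueError(f"Unsupported MNN export argument(s): {', '.join(unknown)}")
    return {**MNN_EXPORT_DEFAULTS, **overrides}


if __name__ == "__main__":
    args = mnn_export_args(imgsz=(320, 640), half=True)
    print(args)
    # With Ultralytics installed, the result could be passed on as:
    #   YOLO("yolo26n.pt").export(**args)
```

Failing early on an unlisted name catches typos such as `halff=True` before a potentially slow export starts.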

MNN-Only Inference

A function that relies solely on MNN for YOLO26 inference and preprocessing is available in both Python and C++ versions, for easy deployment in any scenario.

MNN

import argparse

import MNN
import MNN.cv as cv2
import MNN.numpy as np


def inference(model, img, precision, backend, thread):
    # Configure the MNN runtime (precision mode, backend, thread count)
    config = {}
    config["precision"] = precision
    config["backend"] = backend
    config["numThread"] = thread
    rt = MNN.nn.create_runtime_manager((config,))
    # net = MNN.nn.load_module_from_file(model, ['images'], ['output0'], runtime_manager=rt)
    net = MNN.nn.load_module_from_file(model, [], [], runtime_manager=rt)

    # Pad the image to a square, then resize to 640x640 and scale pixels to [0, 1]
    original_image = cv2.imread(img)
    ih, iw, _ = original_image.shape
    length = max((ih, iw))
    scale = length / 640
    image = np.pad(original_image, [[0, length - ih], [0, length - iw], [0, 0]], "constant")
    image = cv2.resize(
        image, (640, 640), 0.0, 0.0, cv2.INTER_LINEAR, -1, [0.0, 0.0, 0.0], [1.0 / 255.0, 1.0 / 255.0, 1.0 / 255.0]
    )
    image = image[..., ::-1]  # BGR to RGB

    # Add the batch dimension and run the network
    input_var = image[None]
    input_var = MNN.expr.convert(input_var, MNN.expr.NC4HW4)
    output_var = net.forward(input_var)
    output_var = MNN.expr.convert(output_var, MNN.expr.NCHW)
    output_var = output_var.squeeze()

    # output_var shape: [84, 8400]; 84 means: [cx, cy, w, h, prob * 80]
    cx = output_var[0]
    cy = output_var[1]
    w = output_var[2]
    h = output_var[3]
    probs = output_var[4:]

    # [cx, cy, w, h] -> [x0, y0, x1, y1]
    x0 = cx - w * 0.5
    y0 = cy - h * 0.5
    x1 = cx + w * 0.5
    y1 = cy + h * 0.5
    boxes = np.stack([x0, y0, x1, y1], axis=1)
    # ensure ratio is within the valid range [0.0, 1.0]
    boxes = np.clip(boxes, 0, 1)

    # get max prob and idx
    scores = np.max(probs, 0)
    class_ids = np.argmax(probs, 0)
    result_ids = MNN.expr.nms(boxes, scores, 100, 0.45, 0.25)
    print(result_ids.shape)

    # nms result box, score, ids
    result_boxes = boxes[result_ids]
    result_scores = scores[result_ids]
    result_class_ids = class_ids[result_ids]
    for i in range(len(result_boxes)):
        x0, y0, x1, y1 = result_boxes[i].read_as_tuple()
        y0 = int(y0 * scale)
        y1 = int(y1 * scale)
        x0 = int(x0 * scale)
        x1 = int(x1 * scale)
        # clamp to the original image size to handle cases where padding was applied
        x1 = min(iw, x1)
        y1 = min(ih, y1)
        print(result_class_ids[i])
        cv2.rectangle(original_image, (x0, y0), (x1, y1), (0, 0, 255), 2)
    cv2.imwrite("res.jpg", original_image)


if __name__ == "__main__":
    parser = argparse.ArgumentParser()
    parser.add_argument("--model", type=str, required=True, help="the yolo26 model path")
    parser.add_argument("--img", type=str, required=True, help="the input image path")
    parser.add_argument("--precision", type=str, default="normal", help="inference precision: normal, low, high, lowBF")
    parser.add_argument(
        "--backend",
        type=str,
        default="CPU",
        help="inference backend: CPU, OPENCL, OPENGL, NN, VULKAN, METAL, TRT, CUDA, HIAI",
    )
    parser.add_argument("--thread", type=int, default=4, help="inference using thread: int")
    args = parser.parse_args()
    inference(args.model, args.img, args.precision, args.backend, args.thread)
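The geometry in the script above (pad to a square, resize to 640, convert centre-format boxes to corners, then map back to the original image) can be sketched in plain Python. This is an illustrative sketch, not part of MNN or Ultralytics: the helper names `pad_scale`, `cxcywh_to_xyxy`, and `to_original` are hypothetical, and it assumes box coordinates are expressed in pixels of the 640x640 model input, which is what the `length / 640` scale factor suggests.

```python
def pad_scale(ih, iw, size=640):
    """Return the padded square side and the factor mapping model pixels back to it."""
    length = max(ih, iw)
    return length, length / size


def cxcywh_to_xyxy(cx, cy, w, h):
    """Convert a centre/size box to corner coordinates (x0, y0, x1, y1)."""
    return cx - w * 0.5, cy - h * 0.5, cx + w * 0.5, cy + h * 0.5


def to_original(box, scale, iw, ih):
    """Scale a model-space box to the original image and clamp x1/y1 to its size."""
    x0, y0, x1, y1 = (int(v * scale) for v in box)
    return x0, y0, min(iw, x1), min(ih, y1)


if __name__ == "__main__":
    ih, iw = 1280, 960  # hypothetical portrait image (height, width)
    length, scale = pad_scale(ih, iw)
    box = cxcywh_to_xyxy(320, 320, 100, 200)
    print(length, scale)                      # 1280 2.0
    print(box)                                # (270.0, 220.0, 370.0, 420.0)
    print(to_original(box, scale, iw, ih))    # (540, 440, 740, 840)
```

Because the padding is added only on the right and bottom, boxes need no offset correction, only the single scale factor; the clamp handles boxes that extend into the padded area.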

#include <stdio.h>
#include <algorithm>
#include <cstdlib>
#include <memory>
#include <string>
#include <vector>
#include <MNN/ImageProcess.hpp>
#include <MNN/expr/Module.hpp>
#include <MNN/expr/Executor.hpp>
#include <MNN/expr/ExprCreator.hpp>
#include <cv/cv.hpp>

using namespace MNN;
using namespace MNN::Express;
using namespace MNN::CV;

int main(int argc, const char* argv[]) {
    if (argc < 3) {
        MNN_PRINT("Usage: ./yolo26_demo.out model.mnn input.jpg [forwardType] [precision] [thread]\n");
        return 0;
    }
    // Optional arguments: backend type, precision mode, thread count
    int thread = 4;
    int precision = 0;
    int forwardType = MNN_FORWARD_CPU;
    if (argc >= 4) {
        forwardType = atoi(argv[3]);
    }
    if (argc >= 5) {
        precision = atoi(argv[4]);
    }
    if (argc >= 6) {
        thread = atoi(argv[5]);
    }
    MNN::ScheduleConfig sConfig;
    sConfig.type = static_cast<MNNForwardType>(forwardType);
    sConfig.numThread = thread;
    BackendConfig bConfig;
    bConfig.precision = static_cast<BackendConfig::PrecisionMode>(precision);
    sConfig.backendConfig = &bConfig;
    std::shared_ptr<Executor::RuntimeManager> rtmgr = std::shared_ptr<Executor::RuntimeManager>(Executor::RuntimeManager::createRuntimeManager(sConfig));
    if (rtmgr == nullptr) {
        MNN_ERROR("Empty RuntimeManager\n");
        return 0;
    }
    rtmgr->setCache(".cachefile");

    std::shared_ptr<Module> net(Module::load(std::vector<std::string>{}, std::vector<std::string>{}, argv[1], rtmgr));

    // Pad the image to a square, then resize to 640x640 and scale pixels to [0, 1]
    auto original_image = imread(argv[2]);
    auto dims = original_image->getInfo()->dim;
    int ih = dims[0];
    int iw = dims[1];
    int len = ih > iw ? ih : iw;
    float scale = len / 640.0;
    std::vector<int> padvals { 0, len - ih, 0, len - iw, 0, 0 };
    auto pads = _Const(static_cast<void*>(padvals.data()), {3, 2}, NCHW, halide_type_of<int>());
    auto image = _Pad(original_image, pads, CONSTANT);
    image = resize(image, Size(640, 640), 0, 0, INTER_LINEAR, -1, {0., 0., 0.}, {1./255., 1./255., 1./255.});
    image = cvtColor(image, COLOR_BGR2RGB);

    // Add the batch dimension and run the network
    auto input = _Unsqueeze(image, {0});
    input = _Convert(input, NC4HW4);
    auto outputs = net->onForward({input});
    auto output = _Convert(outputs[0], NCHW);
    output = _Squeeze(output);

    // output shape: [84, 8400]; 84 means: [cx, cy, w, h, prob * 80]
    auto cx = _Gather(output, _Scalar<int>(0));
    auto cy = _Gather(output, _Scalar<int>(1));
    auto w = _Gather(output, _Scalar<int>(2));
    auto h = _Gather(output, _Scalar<int>(3));
    std::vector<int> startvals { 4, 0 };
    auto start = _Const(static_cast<void*>(startvals.data()), {2}, NCHW, halide_type_of<int>());
    std::vector<int> sizevals { -1, -1 };
    auto size = _Const(static_cast<void*>(sizevals.data()), {2}, NCHW, halide_type_of<int>());
    auto probs = _Slice(output, start, size);

    // [cx, cy, w, h] -> [x0, y0, x1, y1]
    auto x0 = cx - w * _Const(0.5);
    auto y0 = cy - h * _Const(0.5);
    auto x1 = cx + w * _Const(0.5);
    auto y1 = cy + h * _Const(0.5);
    auto boxes = _Stack({x0, y0, x1, y1}, 1);
    // ensure ratio is within the valid range [0.0, 1.0]
    boxes = _Maximum(boxes, _Scalar<float>(0.0f));
    boxes = _Minimum(boxes, _Scalar<float>(1.0f));

    // Best class score and class index per candidate, then NMS
    auto scores = _ReduceMax(probs, {0});
    auto ids = _ArgMax(probs, 0);
    auto result_ids = _Nms(boxes, scores, 100, 0.45, 0.25);
    auto result_ptr = result_ids->readMap<int>();
    auto box_ptr = boxes->readMap<float>();
    auto ids_ptr = ids->readMap<int>();
    auto score_ptr = scores->readMap<float>();
    for (int i = 0; i < 100; i++) {
        auto idx = result_ptr[i];
        if (idx < 0) break;
        auto x0 = box_ptr[idx * 4 + 0] * scale;
        auto y0 = box_ptr[idx * 4 + 1] * scale;
        auto x1 = box_ptr[idx * 4 + 2] * scale;
        auto y1 = box_ptr[idx * 4 + 3] * scale;
        // clamp to the original image size to handle cases where padding was applied
        x1 = std::min(static_cast<float>(iw), x1);
        y1 = std::min(static_cast<float>(ih), y1);
        auto class_idx = ids_ptr[idx];
        auto score = score_ptr[idx];
        rectangle(original_image, {x0, y0}, {x1, y1}, {0, 0, 255}, 2);
    }
    if (imwrite("res.jpg", original_image)) {
        MNN_PRINT("result image write to `res.jpg`.\n");
    }
    rtmgr->updateCache();
    return 0;
}
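Both scripts delegate non-maximum suppression to MNN's built-in operator. As a rough illustration of what that step does, here is a plain-Python greedy NMS sketch. It assumes the trailing arguments in the calls above (100, 0.45, 0.25) are the maximum number of detections, the IoU threshold, and the score threshold; the names `iou` and `greedy_nms` are hypothetical, and MNN's implementation may differ in details such as tie handling.

```python
def iou(a, b):
    """Intersection over union of two (x0, y0, x1, y1) boxes."""
    ix0, iy0 = max(a[0], b[0]), max(a[1], b[1])
    ix1, iy1 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix1 - ix0) * max(0.0, iy1 - iy0)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def greedy_nms(boxes, scores, max_detections=100, iou_threshold=0.45, score_threshold=0.25):
    """Return indices of kept boxes, highest score first."""
    # Drop low-confidence candidates, then visit the rest by descending score.
    order = sorted(
        (i for i, s in enumerate(scores) if s > score_threshold),
        key=lambda i: scores[i],
        reverse=True,
    )
    keep = []
    for i in order:
        if len(keep) >= max_detections:
            break
        # Keep a box only if it does not overlap too much with any kept box.
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep


if __name__ == "__main__":
    boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (20, 20, 30, 30), (0, 0, 5, 5)]
    scores = [0.9, 0.8, 0.7, 0.1]
    print(greedy_nms(boxes, scores))  # [0, 2]
```

In the example, the second box overlaps the first with an IoU of about 0.68 and is suppressed, while the last box falls below the score threshold before overlap is even considered.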

Summary

This guide showed how to export an Ultralytics YOLO26 model to MNN and how to run inference with MNN. The MNN format is built for efficient inference in edge AI applications, which makes it well suited to deploying computer vision models on resource-constrained devices.

For more information about usage, see the MNN documentation.

FAQ

How do I export Ultralytics YOLO26 models to MNN format?

To export your Ultralytics YOLO26 model to MNN format, follow these steps:

Export

from ultralytics import YOLO

# Load the YOLO26 model

model = YOLO("yolo26n.pt")

# Export to MNN format

model.export(format="mnn") # creates 'yolo26n.mnn' with fp32 weight

model.export(format="mnn", half=True) # creates 'yolo26n.mnn' with fp16 weight

model.export(format="mnn", int8=True) # creates 'yolo26n.mnn' with int8 weight

yolo export model=yolo26n.pt format=mnn # creates 'yolo26n.mnn' with fp32 weight

yolo export model=yolo26n.pt format=mnn half=True # creates 'yolo26n.mnn' with fp16 weight

yolo export model=yolo26n.pt format=mnn int8=True # creates 'yolo26n.mnn' with int8 weight

For detailed export options, see the Export page in the documentation.
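The comments in the export commands above indicate that the FP32, FP16, and INT8 exports all write to the same `yolo26n.mnn` file name, so a later export would replace an earlier one. If you want to keep several variants side by side, one option is to rename each file right after it is exported. The sketch below uses only the standard library; `keep_variant` is a hypothetical helper, and the temporary file merely stands in for a real export.

```python
import shutil
import tempfile
from pathlib import Path


def keep_variant(exported, tag):
    """Move an exported file to a tagged name, e.g. yolo26n.mnn -> yolo26n_fp16.mnn."""
    src = Path(exported)
    dst = src.with_name(f"{src.stem}_{tag}{src.suffix}")
    shutil.move(str(src), str(dst))
    return dst


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        fake_export = Path(tmp) / "yolo26n.mnn"
        fake_export.write_bytes(b"")  # stand-in for a real exported model
        print(keep_variant(fake_export, "fp16").name)  # yolo26n_fp16.mnn
```

In a real workflow you would call `keep_variant("yolo26n.mnn", "fp16")` after the `half=True` export and `keep_variant("yolo26n.mnn", "int8")` after the `int8=True` export.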

How do I predict with an exported YOLO26 MNN model?

To predict with an exported YOLO26 MNN model, use the `predict` function of the YOLO class.

Predict

from ultralytics import YOLO

# Load the YOLO26 MNN model

model = YOLO("yolo26n.mnn")

# Run inference

results = model("https://ultralytics.com/images/bus.jpg") # predict with `fp32`

results = model("https://ultralytics.com/images/bus.jpg", half=True) # predict with `fp16` if device support

for result in results:

result.show() # display to screen

result.save(filename="result.jpg") # save to disk

yolo predict model='yolo26n.mnn' source='https://ultralytics.com/images/bus.jpg' # predict with `fp32`

yolo predict model='yolo26n.mnn' source='https://ultralytics.com/images/bus.jpg' half=True # predict with `fp16` if device support

Which platforms does MNN support?

MNN is versatile and supports a range of platforms:

- Mobile: Android, iOS, Harmony.

- Embedded Systems and IoT Devices: Devices such as Raspberry Pi and NVIDIA Jetson.

- Desktop and Servers: Linux, Windows, and macOS.

How can I deploy Ultralytics YOLO26 MNN models on mobile devices?

To deploy your YOLO26 models on mobile devices:

- Build for Android: Follow the MNN Android guide.

- Build for iOS: Follow the MNN iOS guide.

- Build for Harmony: Follow the MNN Harmony guide.