Machine Learning Best Practices and Tips for Model Training

Introduction

One of the most important steps when working on a computer vision project is model training. Before reaching this step, you need to define your goals and collect and annotate your data. After preprocessing the data to make sure it is clean and consistent, you can move on to training your model.

Watch: Model Training Tips | How to Handle Large Datasets | Batch Size, GPU Utilization and Mixed Precision

So, what is model training? Model training is the process of teaching your model to recognize visual patterns and make predictions based on your data. It directly impacts the performance and accuracy of your application. In this guide, we'll cover best practices, optimization techniques, and troubleshooting tips to help you train your computer vision models effectively.

How to Train a Machine Learning Model

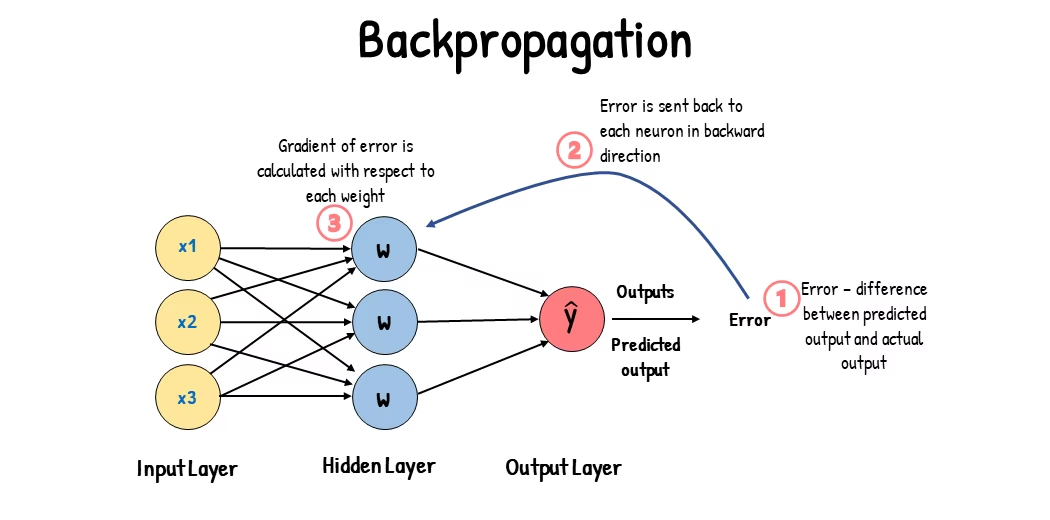

A computer vision model is trained by adjusting its internal parameters to minimize errors. Initially, the model is fed a large set of labeled images. It makes predictions about what is in these images, and the predictions are compared to the actual labels or contents to calculate errors. These errors show how far off the model's predictions are from the true values.

During training, the model iteratively makes predictions, calculates errors, and updates its parameters through a process called backpropagation. In this process, the model adjusts its internal parameters (weights and biases) to reduce the errors. By repeating this cycle many times, the model gradually improves its accuracy. Over time, it learns to recognize complex patterns such as shapes, colors, and textures.

This learning process makes it possible for the computer vision model to perform various tasks, including object detection, instance segmentation, semantic segmentation, and image classification. The ultimate goal is to create a model that can generalize its learning to new, unseen images so that it can accurately understand visual data in real-world applications.

Now that we know what is happening behind the scenes when we train a model, let's look at points to consider when training a model.

Training on Large Datasets

There are a few different aspects to think about when you are planning on using a large dataset to train a model. For example, you can adjust the batch size, control the GPU utilization, choose to use multiscale training, etc. Let's walk through each of these options in detail.

Batch Size and GPU Utilization

When training models on large datasets, efficiently utilizing your GPU is key. Batch size is an important factor. It is the number of data samples that a machine learning model processes in a single training iteration. Using the maximum batch size supported by your GPU, you can fully take advantage of its capabilities and reduce the time model training takes. However, you want to avoid running out of GPU memory. If you encounter memory errors, reduce the batch size incrementally until the model trains smoothly.

Watch: How to Use Batch Inference with Ultralytics YOLO26 | Speed Up Object Detection in Python 🎉

With respect to YOLO26, you can set the batch_size parameter in the training configuration to match your GPU capacity. Also, setting batch=-1 in your training script will automatically determine the batch size that can be efficiently processed based on your device's capabilities. By fine-tuning the batch size, you can make the most of your GPU resources and improve the overall training process.

Subset Training

Subset training is a smart strategy that involves training your model on a smaller set of data that represents the larger dataset. It can save time and resources, especially during initial model development and testing. If you are running short on time or experimenting with different model configurations, subset training is a good option.

When it comes to YOLO26, you can easily implement subset training by using the fraction parameter. This parameter lets you specify what fraction of your dataset to use for training. For example, setting fraction=0.1 will train your model on 10% of the data. You can use this technique for quick iterations and tuning your model before committing to training a model using a full dataset. Subset training helps you make rapid progress and identify potential issues early on.

Multi-scale Training

Multiscale training is a technique that improves your model's ability to generalize by training it on images of varying sizes. Your model can learn to detect objects at different scales and distances and become more robust.

For example, when you train YOLO26, you can enable multiscale training by setting the scale parameter. This parameter adjusts the size of training images by a specified factor, simulating objects at different distances. For example, setting scale=0.5 randomly zooms training images by a factor between 0.5 and 1.5 during training. Configuring this parameter allows your model to experience a variety of image scales and improve its detection capabilities across different object sizes and scenarios.

Ultralytics also supports image-size multi-scale training via the multi_scale parameter. Unlike scale, which zooms images and then pads/crops back to imgsz, multi_scale changes imgsz itself each batch (rounded to the model stride). For example, with imgsz=640 and multi_scale=0.25, the training size is sampled from 480 up to 800 in stride steps (e.g., 480, 512, 544, ..., 800), while multi_scale=0.0 keeps a fixed size.

Caching

Caching is an important technique to improve the efficiency of training machine learning models. By storing preprocessed images in memory, caching reduces the time the GPU spends waiting for data to be loaded from the disk. The model can continuously receive data without delays caused by disk I/O operations.

Caching can be controlled when training YOLO26 using the cache parameter:

cache=True: Stores dataset images in RAM, providing the fastest access speed but at the cost of increased memory usage.cache='disk': Stores the images on disk, slower than RAM but faster than loading fresh data each time.cache=False: Disables caching, relying entirely on disk I/O, which is the slowest option.

Mixed Precision Training

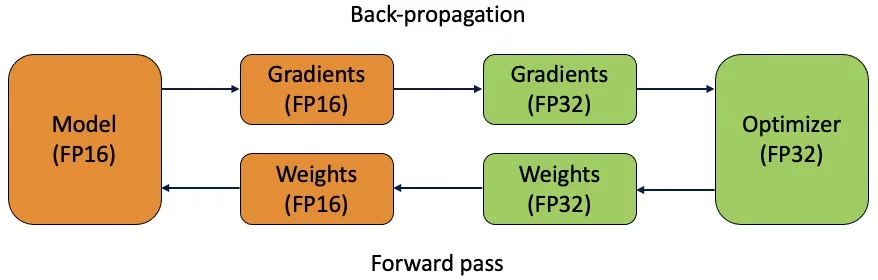

Mixed precision training uses both 16-bit (FP16) and 32-bit (FP32) floating-point types. The strengths of both FP16 and FP32 are leveraged by using FP16 for faster computation and FP32 to maintain precision where needed. Most of the neural network's operations are done in FP16 to benefit from faster computation and lower memory usage. However, a master copy of the model's weights is kept in FP32 to ensure accuracy during the weight update steps. You can handle larger models or larger batch sizes within the same hardware constraints.

To implement mixed precision training, you'll need to modify your training scripts and ensure your hardware (like GPUs) supports it. Many modern deep learning frameworks, such as PyTorch and TensorFlow, offer built-in support for mixed precision.

Mixed precision training is straightforward when working with YOLO26. You can use the amp flag in your training configuration. Setting amp=True enables Automatic Mixed Precision (AMP) training. Mixed precision training is a simple yet effective way to optimize your model training process.

Pretrained Weights

Using pretrained weights is a smart way to speed up your model's training process. Pretrained weights come from models already trained on large datasets, giving your model a head start. Transfer learning adapts pretrained models to new, related tasks. Fine-tuning a pretrained model involves starting with these weights and then continuing training on your specific dataset. This method of training results in faster training times and often better performance because the model starts with a solid understanding of basic features.

The pretrained parameter makes transfer learning easy with YOLO26. Setting pretrained=True will use default pretrained weights, or you can specify a path to a custom pretrained model. Using pretrained weights and transfer learning effectively boosts your model's capabilities and reduces training costs.

Other Techniques to Consider When Handling a Large Dataset

There are a couple of other techniques to consider when handling a large dataset:

- Learning Rate Schedulers: Implementing learning rate schedulers dynamically adjusts the learning rate during training. A well-tuned learning rate can prevent the model from overshooting minima and improve stability. When training YOLO26, the

lrfparameter helps manage learning rate scheduling by setting the final learning rate as a fraction of the initial rate. - Distributed Training: For handling large datasets, distributed training can be a game-changer. You can reduce the training time by spreading the training workload across multiple GPUs or machines. This approach is particularly valuable for enterprise-scale projects with substantial computational resources.

The Number of Epochs To Train For

When training a model, an epoch refers to one complete pass through the entire training dataset. During an epoch, the model processes each example in the training set once and updates its parameters based on the learning algorithm. Multiple epochs are usually needed to allow the model to learn and refine its parameters over time.

A common question that comes up is how to determine the number of epochs to train the model for. A good starting point is 300 epochs. If the model overfits early, you can reduce the number of epochs. If overfitting does not occur after 300 epochs, you can extend the training to 600, 1200, or more epochs.

However, the ideal number of epochs can vary based on your dataset's size and project goals. Larger datasets might require more epochs for the model to learn effectively, while smaller datasets might need fewer epochs to avoid overfitting. With respect to YOLO26, you can set the epochs parameter in your training script.

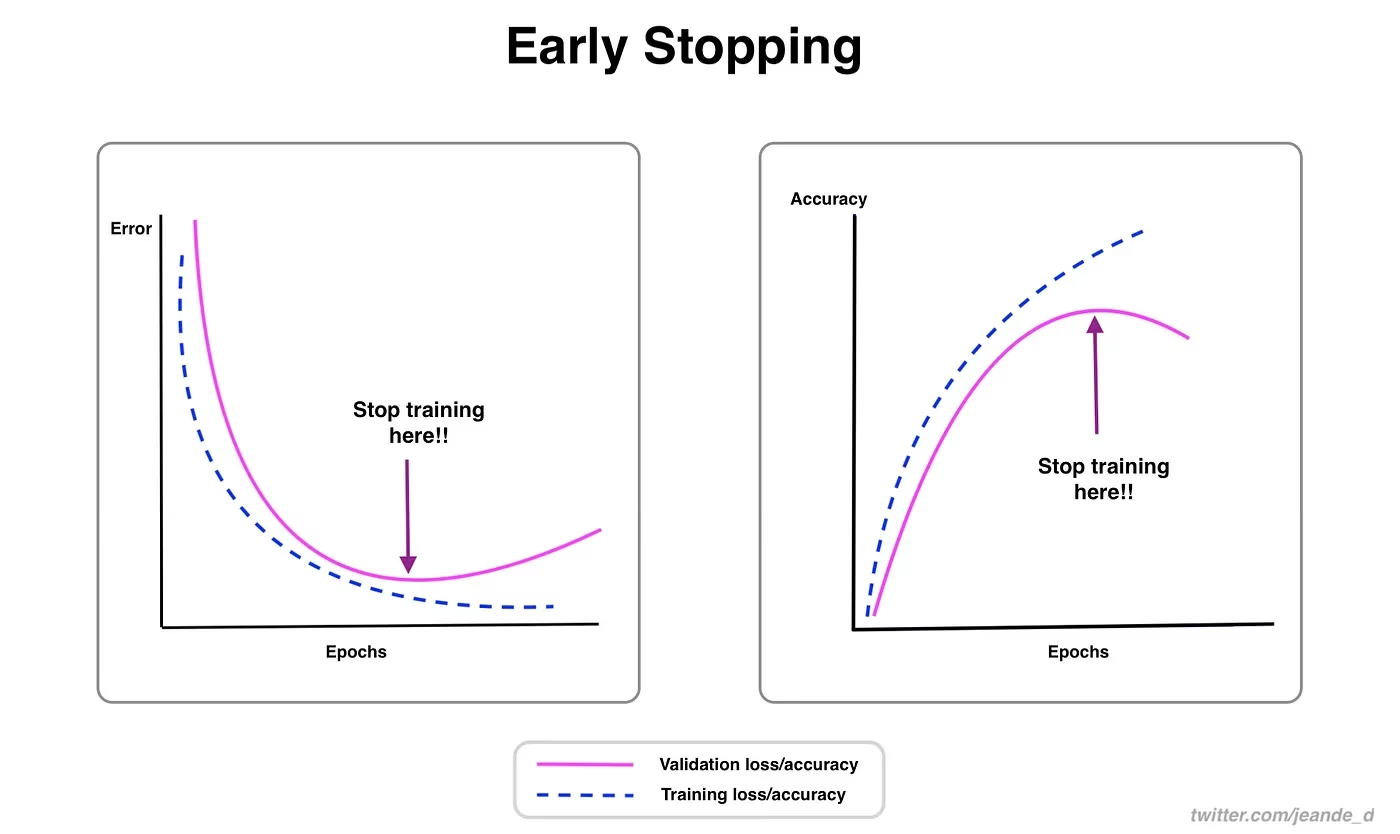

Early Stopping

Early stopping is a valuable technique for optimizing model training. By monitoring validation performance, you can halt training once the model stops improving. You can save computational resources and prevent overfitting.

The process involves setting a patience parameter that determines how many epochs to wait for an improvement in validation metrics before stopping training. If the model's performance does not improve within these epochs, training is stopped to avoid wasting time and resources.

For YOLO26, you can enable early stopping by setting the patience parameter in your training configuration. For example, patience=5 means training will stop if there's no improvement in validation metrics for 5 consecutive epochs. Using this method ensures the training process remains efficient and achieves optimal performance without excessive computation.

Choosing Between Cloud and Local Training

There are two options for training your model: cloud training and local training.

Cloud training offers scalability and powerful hardware and is ideal for handling large datasets and complex models. Platforms like Google Cloud, AWS, and Azure provide on-demand access to high-performance GPUs and TPUs, speeding up training times and enabling experiments with larger models. However, cloud training can be expensive, especially for long periods, and data transfer can add to costs and latency.

Local training provides greater control and customization, letting you tailor your environment to specific needs and avoid ongoing cloud costs. It can be more economical for long-term projects, and since your data stays on-premises, it's more secure. However, local hardware may have resource limitations and require maintenance, which can lead to longer training times for large models.

Selecting an Optimizer

An optimizer is an algorithm that adjusts the weights of your neural network to minimize the loss function, which measures how well the model is performing. In simpler terms, the optimizer helps the model learn by tweaking its parameters to reduce errors. Choosing the right optimizer directly affects how quickly and accurately the model learns.

You can also fine-tune optimizer parameters to improve model performance. Adjusting the learning rate sets the size of the steps when updating parameters. For stability, you might start with a moderate learning rate and gradually decrease it over time to improve long-term learning. Additionally, setting the momentum determines how much influence past updates have on current updates. A common value for momentum is around 0.9. It generally provides a good balance.

Common Optimizers

Different optimizers have various strengths and weaknesses. Let's take a glimpse at a few common optimizers.

-

SGD (Stochastic Gradient Descent):

- Updates model parameters using the gradient of the loss function with respect to the parameters.

- Simple and efficient but can be slow to converge and might get stuck in local minima.

-

Adam (Adaptive Moment Estimation):

- Combines the benefits of both SGD with momentum and RMSProp.

- Adjusts the learning rate for each parameter based on estimates of the first and second moments of the gradients.

- Well-suited for noisy data and sparse gradients.

- Efficient and generally requires less tuning, making it a recommended optimizer for YOLO26.

-

RMSProp (Root Mean Square Propagation):

- Adjusts the learning rate for each parameter by dividing the gradient by a running average of the magnitudes of recent gradients.

- Helps in handling the vanishing gradient problem and is effective for recurrent neural networks.

-

MuSGD (Muon + SGD hybrid):

- Combines SGD-style updates with Muon-inspired behavior for improved stability in large-scale training.

- A good choice when you want SGD-like generalization but need smoother convergence than vanilla SGD.

- Especially relevant for YOLO26 training recipes; if unsure, start with

optimizer=autoand compare against MuSGD on your dataset.

For YOLO26, the optimizer parameter lets you choose from various optimizers, including SGD, MuSGD, Adam, AdamW, NAdam, RAdam, and RMSProp, or you can set it to auto for automatic selection based on model configuration.

yolo train model=yolo26n.pt data=coco8.yaml optimizer=MuSGDConnecting with the Community

Being part of a community of computer vision enthusiasts can help you solve problems and learn faster. Here are some ways to connect, get help, and share ideas.

Community Resources

- GitHub Issues: Visit the YOLO26 GitHub repository and use the Issues tab to ask questions, report bugs, and suggest new features. The community and maintainers are very active and ready to help.

- Ultralytics Discord Server: Join the Ultralytics Discord server to chat with other users and developers, get support, and share your experiences.

Official Documentation

- Ultralytics YOLO26 Documentation: Check out the official YOLO26 documentation for detailed guides and helpful tips on various computer vision projects.

Using these resources will help you solve challenges and stay up-to-date with the latest trends and practices in the computer vision community.

Key Takeaways

Training computer vision models involves following good practices, optimizing your strategies, and solving problems as they arise. Techniques like adjusting batch sizes, mixed precision training, and starting with pretrained weights can make your models work better and train faster. Methods like subset training and early stopping help you save time and resources. Staying connected with the community and keeping up with new trends will help you keep improving your model training skills.

FAQ

How can I improve GPU utilization when training a large dataset with Ultralytics YOLO?

To improve GPU utilization, set the batch_size parameter in your training configuration to the maximum size supported by your GPU. This ensures that you make full use of the GPU's capabilities, reducing training time. If you encounter memory errors, incrementally reduce the batch size until training runs smoothly. For YOLO26, setting batch=-1 in your training script will automatically determine the optimal batch size for efficient processing. For further information, refer to the training configuration.

What is mixed precision training, and how do I enable it in YOLO26?

Mixed precision training utilizes both 16-bit (FP16) and 32-bit (FP32) floating-point types to balance computational speed and precision. This approach speeds up training and reduces memory usage without sacrificing model accuracy. To enable mixed precision training in YOLO26, set the amp parameter to True in your training configuration. This activates Automatic Mixed Precision (AMP) training. For more details on this optimization technique, see the training configuration.

How does multiscale training enhance YOLO26 model performance?

Multiscale training enhances model performance by training on images of varying sizes, allowing the model to better generalize across different scales and distances. In YOLO26, you can enable multiscale training by setting the scale parameter in the training configuration. For example, scale=0.5 samples a zoom factor between 0.5 and 1.5, then pads/crops back to imgsz. This technique simulates objects at different distances, making the model more robust across various scenarios. For settings and more details, check out the training configuration.

How can I use pretrained weights to speed up training in YOLO26?

Using pretrained weights can greatly accelerate training and enhance model accuracy by leveraging a model already familiar with foundational visual features. In YOLO26, simply set the pretrained parameter to True or provide a path to your custom pretrained weights in the training configuration. This method, called transfer learning, allows models trained on large datasets to be effectively adapted to your specific application. Learn more about how to use pretrained weights and their benefits in the training configuration guide.

What is the recommended number of epochs for training a model, and how do I set this in YOLO26?

The number of epochs refers to the complete passes through the training dataset during model training. A typical starting point is 300 epochs. If your model overfits early, you can reduce the number. Alternatively, if overfitting isn't observed, you might extend training to 600, 1200, or more epochs. To set this in YOLO26, use the epochs parameter in your training script. For additional advice on determining the ideal number of epochs, refer to this section on number of epochs.