YOLOv10: Real-Time End-to-End Object Detection

YOLOv10, released in May 2024 and built on the Ultralytics Python package by researchers at Tsinghua University, introduces a new approach to real-time object detection, addressing both the post-processing and model architecture deficiencies found in previous YOLO versions. By eliminating non-maximum suppression (NMS) and optimizing various model components, YOLOv10 achieved excellent performance with significantly reduced computational overhead at its time of release. Its NMS-free end-to-end design pioneered an approach that has been further developed in YOLO26.

Watch: How to Train YOLOv10 on SKU-110k Dataset using Ultralytics | Retail Dataset

Overview

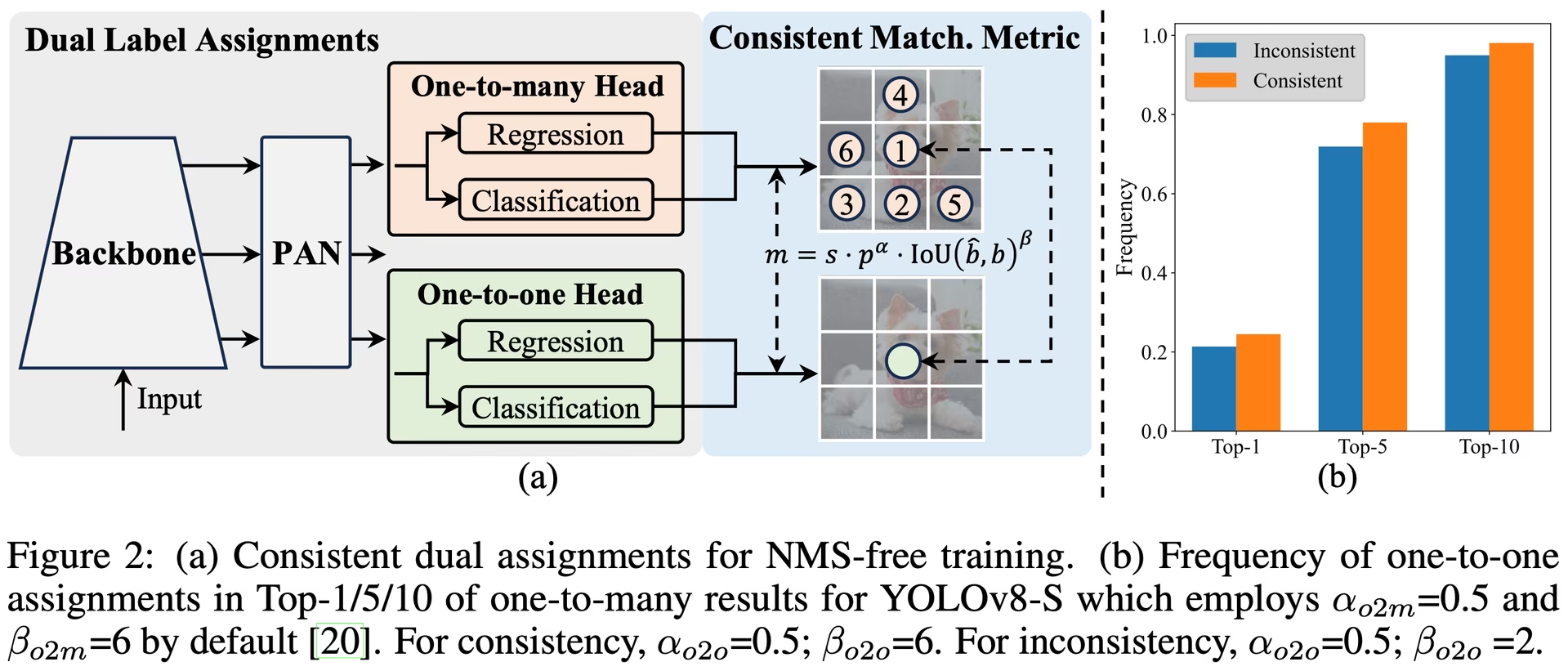

Real-time object detection aims to accurately predict object categories and positions in images with low latency. The YOLO series has been at the forefront of this research due to its balance between performance and efficiency. However, reliance on NMS and architectural inefficiencies have hindered optimal performance. YOLOv10 addresses these issues by introducing consistent dual assignments for NMS-free training and a holistic efficiency-accuracy driven model design strategy.

Architecture

The architecture of YOLOv10 builds upon the strengths of previous YOLO models while introducing several key innovations. The model architecture consists of the following components:

- Backbone: Responsible for feature extraction, the backbone in YOLOv10 uses an enhanced version of CSPNet (Cross Stage Partial Network) to improve gradient flow and reduce computational redundancy.

- Neck: The neck is designed to aggregate features from different scales and passes them to the head. It includes PAN (Path Aggregation Network) layers for effective multiscale feature fusion.

- One-to-Many Head: Generates multiple predictions per object during training to provide rich supervisory signals and improve learning accuracy.

- One-to-One Head: Generates a single best prediction per object during inference to eliminate the need for NMS, thereby reducing latency and improving efficiency.

Key Features

- NMS-Free Training: Utilizes consistent dual assignments to eliminate the need for NMS, reducing inference latency.

- Holistic Model Design: Comprehensive optimization of various components from both efficiency and accuracy perspectives, including lightweight classification heads, spatial-channel decoupled down sampling, and rank-guided block design.

- Enhanced Model Capabilities: Incorporates large-kernel convolutions and partial self-attention modules to improve performance without significant computational cost.

Model Variants

YOLOv10 comes in various model scales to cater to different application needs:

- YOLOv10n: Nano version for extremely resource-constrained environments.

- YOLOv10s: Small version balancing speed and accuracy.

- YOLOv10m: Medium version for general-purpose use.

- YOLOv10b: Balanced version with increased width for higher accuracy.

- YOLOv10l: Large version for higher accuracy at the cost of increased computational resources.

- YOLOv10x: Extra-large version for maximum accuracy and performance.

Performance

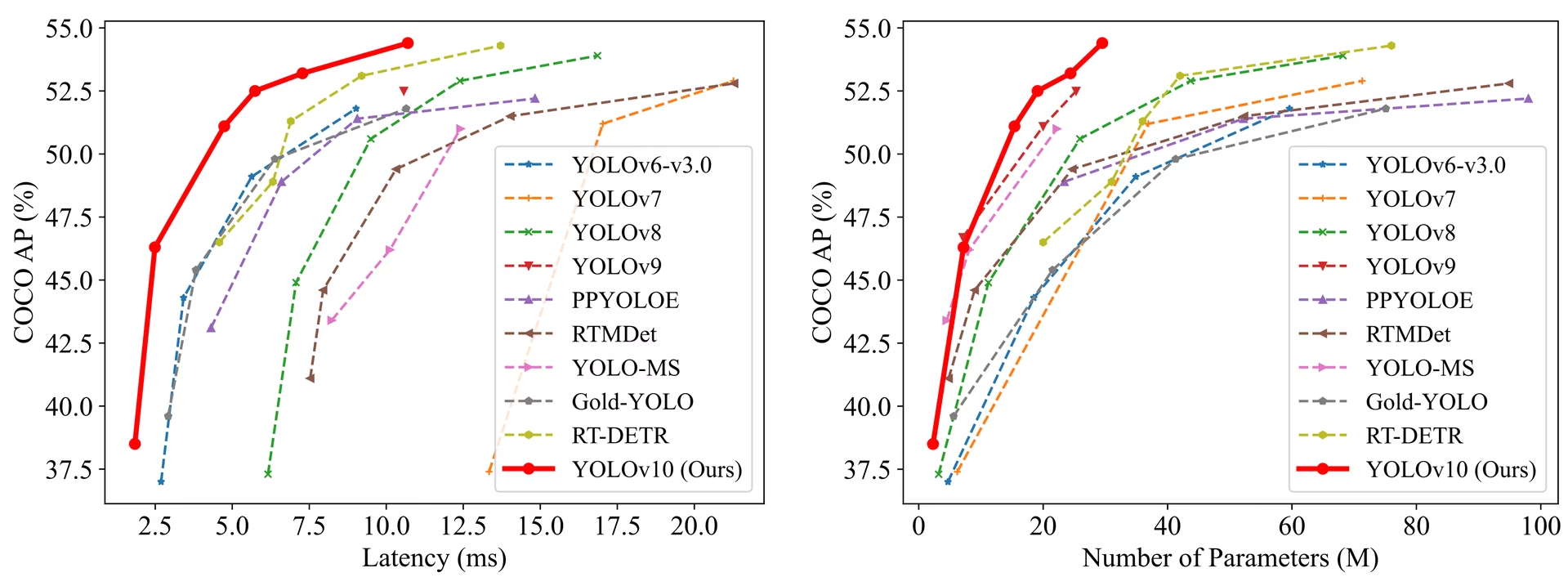

YOLOv10 outperforms previous YOLO versions and other state-of-the-art models in terms of accuracy and efficiency. For example, YOLOv10s is 1.8x faster than RT-DETR-R18 with similar AP on the COCO dataset, and YOLOv10b has 46% less latency and 25% fewer parameters than YOLOv9-C with the same performance.

Latency measured with TensorRT FP16 on T4 GPU.

| Model | Input Size | APval | FLOPs (G) | Latency (ms) |

|---|---|---|---|---|

| [YOLOv10n][1] | 640 | 38.5 | 6.7 | 1.84 |

| [YOLOv10s][2] | 640 | 46.3 | 21.6 | 2.49 |

| [YOLOv10m][3] | 640 | 51.1 | 59.1 | 4.74 |

| [YOLOv10b][4] | 640 | 52.5 | 92.0 | 5.74 |

| [YOLOv10l][5] | 640 | 53.2 | 120.3 | 7.28 |

| [YOLOv10x][6] | 640 | 54.4 | 160.4 | 10.70 |

Methodology

Consistent Dual Assignments for NMS-Free Training

YOLOv10 employs dual label assignments, combining one-to-many and one-to-one strategies during training to ensure rich supervision and efficient end-to-end deployment. The consistent matching metric aligns the supervision between both strategies, enhancing the quality of predictions during inference.

Holistic Efficiency-Accuracy Driven Model Design

Efficiency Enhancements

- Lightweight Classification Head: Reduces the computational overhead of the classification head by using depth-wise separable convolutions.

- Spatial-Channel Decoupled Down sampling: Decouples spatial reduction and channel modulation to minimize information loss and computational cost.

- Rank-Guided Block Design: Adapts block design based on intrinsic stage redundancy, ensuring optimal parameter utilization.

Accuracy Enhancements

- Large-Kernel Convolution: Enlarges the receptive field to enhance feature extraction capability.

- Partial Self-Attention (PSA): Incorporates self-attention modules to improve global representation learning with minimal overhead.

Experiments and Results

YOLOv10 has been extensively tested on standard benchmarks like COCO, demonstrating superior performance and efficiency. The model achieves state-of-the-art results across different variants, showcasing significant improvements in latency and accuracy compared to previous versions and other contemporary detectors.

Comparisons

Compared to other state-of-the-art detectors:

- YOLOv10s / x are 1.8× / 1.3× faster than RT-DETR-R18 / R101 with similar accuracy

- YOLOv10b has 25% fewer parameters and 46% lower latency than YOLOv9-C at same accuracy

- YOLOv10l / x outperform YOLOv8l / x by 0.3 AP / 0.5 AP with 1.8× / 2.3× fewer parameters

Here is a detailed comparison of YOLOv10 variants with other state-of-the-art models:

| Model | Params (M) | FLOPs (G) | mAPval 50-95 | Latency (ms) | Latency-forward (ms) |

|---|---|---|---|---|---|

| YOLOv6-3.0-N | 4.7 | 11.4 | 37.0 | 2.69 | 1.76 |

| Gold-YOLO-N | 5.6 | 12.1 | 39.6 | 2.92 | 1.82 |

| YOLOv8n | 3.2 | 8.7 | 37.3 | 6.16 | 1.77 |

| YOLOv10n | 2.3 | 6.7 | 39.5 | 1.84 | 1.79 |

| YOLOv6-3.0-S | 18.5 | 45.3 | 44.3 | 3.42 | 2.35 |

| Gold-YOLO-S | 21.5 | 46.0 | 45.4 | 3.82 | 2.73 |

| YOLOv8s | 11.2 | 28.6 | 44.9 | 7.07 | 2.33 |

| YOLOv10s | 7.2 | 21.6 | 46.8 | 2.49 | 2.39 |

| RT-DETR-R18 | 20.0 | 60.0 | 46.5 | 4.58 | 4.49 |

| YOLOv6-3.0-M | 34.9 | 85.8 | 49.1 | 5.63 | 4.56 |

| Gold-YOLO-M | 41.3 | 87.5 | 49.8 | 6.38 | 5.45 |

| YOLOv8m | 25.9 | 78.9 | 50.6 | 9.50 | 5.09 |

| YOLOv10m | 15.4 | 59.1 | 51.3 | 4.74 | 4.63 |

| YOLOv6-3.0-L | 59.6 | 150.7 | 51.8 | 9.02 | 7.90 |

| Gold-YOLO-L | 75.1 | 151.7 | 51.8 | 10.65 | 9.78 |

| YOLOv8l | 43.7 | 165.2 | 52.9 | 12.39 | 8.06 |

| RT-DETR-R50 | 42.0 | 136.0 | 53.1 | 9.20 | 9.07 |

| YOLOv10l | 24.4 | 120.3 | 53.4 | 7.28 | 7.21 |

| YOLOv8x | 68.2 | 257.8 | 53.9 | 16.86 | 12.83 |

| RT-DETR-R101 | 76.0 | 259.0 | 54.3 | 13.71 | 13.58 |

| YOLOv10x | 29.5 | 160.4 | 54.4 | 10.70 | 10.60 |

Params and FLOPs values are for the fused model after model.fuse(), which merges Conv and BatchNorm layers and removes the auxiliary one-to-many detection head. Pretrained checkpoints retain the full training architecture and may show higher counts.

Usage Examples

For predicting new images with YOLOv10. Models can also be trained on cloud GPUs through Ultralytics Platform:

from ultralytics import YOLO

# Load a pretrained YOLOv10n model

model = YOLO("yolov10n.pt")

# Perform object detection on an image

results = model("image.jpg")

# Display the results

results[0].show()For training YOLOv10 on a custom dataset:

from ultralytics import YOLO

# Load YOLOv10n model from scratch

model = YOLO("yolov10n.yaml")

# Train the model

model.train(data="coco8.yaml", epochs=100, imgsz=640)Supported Tasks and Modes

The YOLOv10 model series offers a range of models, each optimized for high-performance Object Detection. These models cater to varying computational needs and accuracy requirements, making them versatile for a wide array of applications.

| Model | Filenames | Tasks | Inference | Validation | Training | Export |

|---|---|---|---|---|---|---|

| YOLOv10 | yolov10n.pt yolov10s.pt yolov10m.pt yolov10l.pt yolov10x.pt | Object Detection | ✅ | ✅ | ✅ | ✅ |

Exporting YOLOv10

Due to the new operations introduced with YOLOv10, not all export formats provided by Ultralytics are currently supported. The following table outlines which formats have been successfully converted using Ultralytics for YOLOv10. Feel free to open a pull request if you're able to provide a contribution change for adding export support of additional formats for YOLOv10.

| Export Format | Export Support | Exported Model Inference | Notes |

|---|---|---|---|

| TorchScript | ✅ | ✅ | Standard PyTorch model format. |

| ONNX | ✅ | ✅ | Widely supported for deployment. |

| OpenVINO | ✅ | ✅ | Optimized for Intel hardware. |

| TensorRT | ✅ | ✅ | Optimized for NVIDIA GPUs. |

| CoreML | ✅ | ✅ | Limited to Apple devices. |

| TF SavedModel | ✅ | ✅ | TensorFlow's standard model format. |

| TF GraphDef | ✅ | ✅ | Legacy TensorFlow format. |

| TF Lite | ✅ | ✅ | Optimized for mobile and embedded. |

| TF Edge TPU | ✅ | ✅ | Specific to Google's Edge TPU devices. |

| TF.js | ✅ | ✅ | JavaScript environment for browser use. |

| PaddlePaddle | ❌ | ❌ | Popular in China; less global support. |

| NCNN | ✅ | ❌ | Layer torch.topk not exists or registered |

Conclusion

YOLOv10 set a new standard in real-time object detection at its release by addressing the shortcomings of previous YOLO versions and incorporating innovative design strategies. Its NMS-free approach pioneered end-to-end object detection in the YOLO family. For the latest Ultralytics model with improved performance and NMS-free inference, see YOLO26.

Citations and Acknowledgments

We would like to acknowledge the YOLOv10 authors from Tsinghua University for their extensive research and significant contributions to the Ultralytics framework:

@article{THU-MIGyolov10,

title={YOLOv10: Real-Time End-to-End Object Detection},

author={Ao Wang, Hui Chen, Lihao Liu, et al.},

journal={arXiv preprint arXiv:2405.14458},

year={2024},

institution={Tsinghua University},

license = {AGPL-3.0}

}For detailed implementation, architectural innovations, and experimental results, please refer to the YOLOv10 research paper and GitHub repository by the Tsinghua University team.

FAQ

What is YOLOv10 and how does it differ from previous YOLO versions?

YOLOv10, developed by researchers at Tsinghua University, introduces several key innovations to real-time object detection. It eliminates the need for non-maximum suppression (NMS) by employing consistent dual assignments during training and optimized model components for superior performance with reduced computational overhead. For more details on its architecture and key features, check out the YOLOv10 overview section.

How can I get started with running inference using YOLOv10?

For easy inference, you can use the Ultralytics YOLO Python library or the command line interface (CLI). Below are examples of predicting new images using YOLOv10:

from ultralytics import YOLO

# Load the pretrained YOLOv10n model

model = YOLO("yolov10n.pt")

results = model("image.jpg")

results[0].show()For more usage examples, visit our Usage Examples section.

Which model variants does YOLOv10 offer and what are their use cases?

YOLOv10 offers several model variants to cater to different use cases:

- YOLOv10n: Suitable for extremely resource-constrained environments

- YOLOv10s: Balances speed and accuracy

- YOLOv10m: General-purpose use

- YOLOv10b: Higher accuracy with increased width

- YOLOv10l: High accuracy at the cost of computational resources

- YOLOv10x: Maximum accuracy and performance

Each variant is designed for different computational needs and accuracy requirements, making them versatile for a variety of applications. Explore the Model Variants section for more information.

How does the NMS-free approach in YOLOv10 improve performance?

YOLOv10 eliminates the need for non-maximum suppression (NMS) during inference by employing consistent dual assignments for training. This approach reduces inference latency and enhances prediction efficiency. The architecture also includes a one-to-one head for inference, ensuring that each object gets a single best prediction. For a detailed explanation, see the Consistent Dual Assignments for NMS-Free Training section.

Where can I find the export options for YOLOv10 models?

YOLOv10 supports several export formats, including TorchScript, ONNX, OpenVINO, and TensorRT. However, not all export formats provided by Ultralytics are currently supported for YOLOv10 due to its new operations. For details on the supported formats and instructions on exporting, visit the Exporting YOLOv10 section.

What are the performance benchmarks for YOLOv10 models?

YOLOv10 outperforms previous YOLO versions and other state-of-the-art models in both accuracy and efficiency. For example, YOLOv10s is 1.8x faster than RT-DETR-R18 with a similar AP on the COCO dataset. YOLOv10b shows 46% less latency and 25% fewer parameters than YOLOv9-C with the same performance. Detailed benchmarks can be found in the Comparisons section.