Fast Segment Anything Model (FastSAM)

The Fast Segment Anything Model (FastSAM) is a novel, real-time CNN-based solution for the Segment Anything task. This task is designed to segment any object within an image based on various possible user interaction prompts. FastSAM significantly reduces computational demands while maintaining competitive performance, making it a practical choice for a variety of vision tasks.

Watch: Object Tracking using FastSAM with Ultralytics

Model Architecture

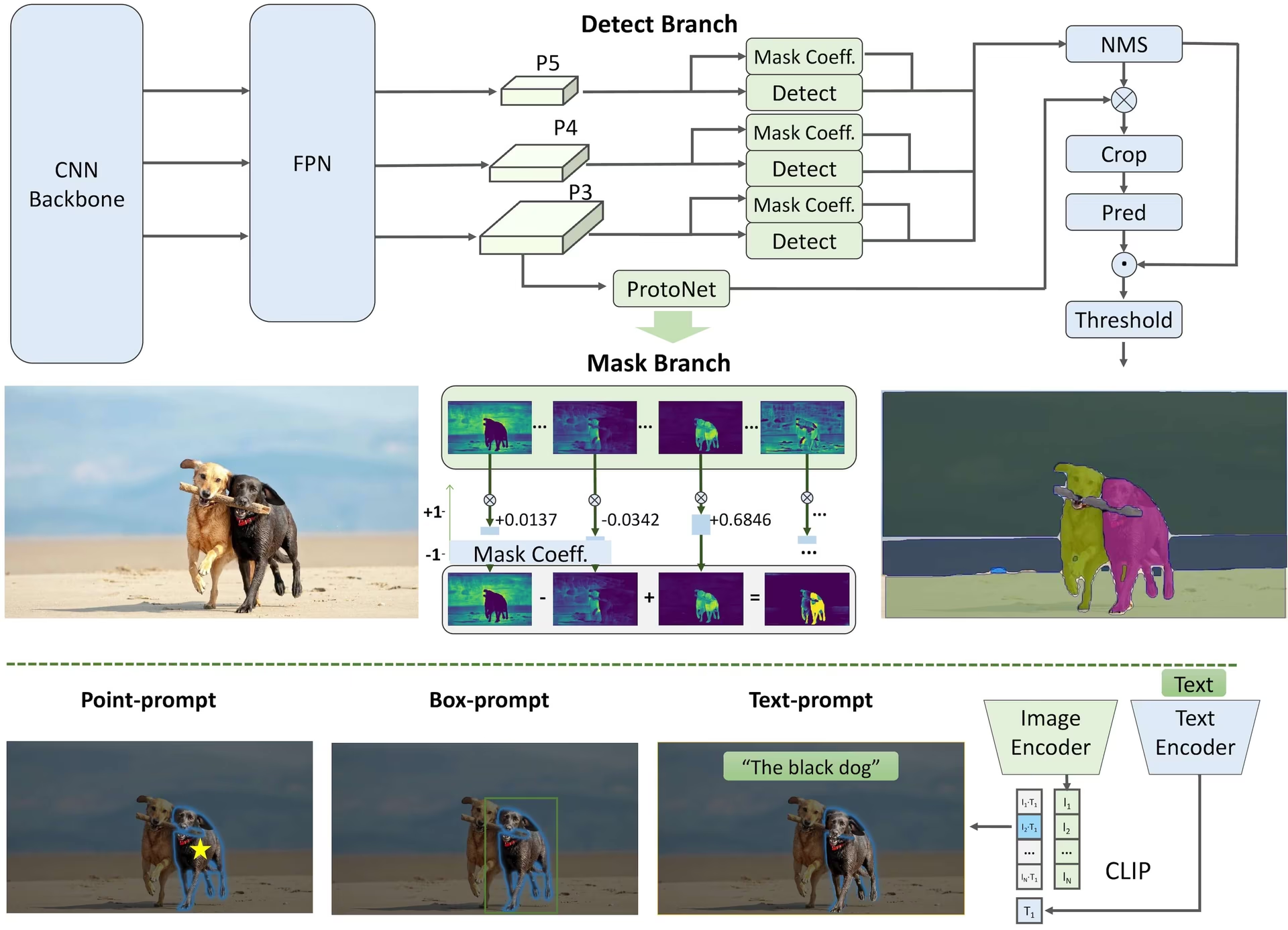

Overview

FastSAM is designed to address the limitations of the Segment Anything Model (SAM), a heavy Transformer model with substantial computational resource requirements. FastSAM decouples the segment anything task into two sequential stages: all-instance segmentation and prompt-guided selection. The first stage uses YOLOv8-seg to produce segmentation masks for all instances in the image. The second stage outputs the region of interest that corresponds to the prompt.
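As a rough illustration of the second stage, the sketch below selects from a set of precomputed masks using a point or a box prompt. It is a toy model under stated assumptions, not FastSAM's actual implementation: masks are represented as plain sets of pixels, and the helper names (`select_by_point`, `select_by_box`, `box_iou`, `rect`) are hypothetical.

```python
from typing import List, Set, Tuple

Pixel = Tuple[int, int]  # (x, y) pixel coordinate
Mask = Set[Pixel]        # toy mask: the set of pixels it covers (assumed non-empty)
Box = Tuple[int, int, int, int]  # (x1, y1, x2, y2), exclusive on the right/bottom


def select_by_point(masks: List[Mask], point: Pixel) -> List[int]:
    """Return the indices of all masks that contain the prompted point."""
    return [i for i, mask in enumerate(masks) if point in mask]


def box_iou(mask: Mask, box: Box) -> float:
    """IoU between the mask's bounding box and the box prompt."""
    xs = [x for x, _ in mask]
    ys = [y for _, y in mask]
    mx1, my1, mx2, my2 = min(xs), min(ys), max(xs) + 1, max(ys) + 1
    x1, y1, x2, y2 = box
    inter_w = max(0, min(mx2, x2) - max(mx1, x1))
    inter_h = max(0, min(my2, y2) - max(my1, y1))
    inter = inter_w * inter_h
    union = (mx2 - mx1) * (my2 - my1) + (x2 - x1) * (y2 - y1) - inter
    return inter / union if union else 0.0


def select_by_box(masks: List[Mask], box: Box) -> int:
    """Return the index of the mask that best overlaps the box prompt."""
    return max(range(len(masks)), key=lambda i: box_iou(masks[i], box))


def rect(x1: int, y1: int, x2: int, y2: int) -> Mask:
    """Build a rectangular toy mask standing in for a stage-one output."""
    return {(x, y) for x in range(x1, x2) for y in range(y1, y2)}


# Stage one (all-instance segmentation) is assumed to have produced these masks.
masks = [rect(0, 0, 4, 4), rect(10, 10, 20, 20)]

# Stage two: pick masks according to the prompt.
point_hits = select_by_point(masks, (12, 15))
box_hit = select_by_box(masks, (9, 9, 21, 21))
print(point_hits, box_hit)
```

The point of the split is that stage one runs once per image, while stage two is a cheap lookup that can be repeated for each new prompt.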

Key Features

- Real-time Solution: By leveraging the computational efficiency of CNNs, FastSAM provides a real-time solution for the segment anything task, making it valuable for industrial applications that require quick results.

- Efficiency and Performance: FastSAM significantly reduces computational and resource demands without compromising performance quality. It achieves performance comparable to SAM with drastically reduced computational resources, enabling real-time application.

- Prompt-guided Segmentation: FastSAM can segment any object within an image guided by various possible user interaction prompts, providing flexibility and adaptability in different scenarios.

- Based on YOLOv8-seg: FastSAM is based on YOLOv8-seg, an object detector equipped with an instance segmentation branch. This allows it to effectively produce the segmentation masks of all instances in an image.

- Competitive Results on Benchmarks: On the object proposal task on MS COCO, FastSAM achieves high scores at a significantly faster speed than SAM on a single NVIDIA RTX 3090, demonstrating its efficiency and capability.

- Practical Applications: The proposed approach provides a new, practical solution for a large number of vision tasks at very high speed, tens or hundreds of times faster than current methods.

- Model Compression Feasibility: FastSAM demonstrates the feasibility of a path that can significantly reduce computational effort by introducing an artificial prior to the structure, opening new possibilities for large model architectures for general vision tasks.

Available Models, Supported Tasks, and Operating Modes

This table presents the available models with their specific pretrained weights, the tasks they support, and their compatibility with different operating modes like Inference, Validation, Training, and Export, indicated by ✅ emojis for supported modes and ❌ emojis for unsupported modes.

| Model Type | Pretrained Weights | Tasks Supported | Inference | Validation | Training | Export |
|---|---|---|---|---|---|---|
| FastSAM-s | FastSAM-s.pt | Instance Segmentation | ✅ | ❌ | ❌ | ✅ |
| FastSAM-x | FastSAM-x.pt | Instance Segmentation | ✅ | ❌ | ❌ | ✅ |

FastSAM Comparison vs YOLO

Here we compare Meta's SAM and SAM 2 models (including the smallest SAM2-t variant), MobileSAM, and FastSAM-s with Ultralytics segmentation models, including YOLO26n-seg:

| Model | Size (MB) | Parameters (M) | Speed (CPU) (ms/im) |
|---|---|---|---|
| Meta SAM-b | 375 | 93.7 | 41703 |
| Meta SAM2-b | 162 | 80.8 | 28867 |
| Meta SAM2-t | 78.1 | 38.9 | 23430 |
| MobileSAM | 40.7 | 10.1 | 23802 |
| FastSAM-s with YOLOv8 backbone | 23.9 | 11.8 | 58.0 |
| Ultralytics YOLOv8n-seg | 7.1 (11.0x smaller) | 3.4 (11.4x less) | 24.8 (945x faster) |
| Ultralytics YOLO11n-seg | 6.2 (12.6x smaller) | 2.9 (13.4x less) | 24.3 (964x faster) |
| Ultralytics YOLO26n-seg | 6.7 (11.7x smaller) | 2.7 (14.4x less) | 25.2 (930x faster) |

This comparison demonstrates the substantial differences in model size and speed between SAM variants and YOLO segmentation models. The ratios in parentheses match a comparison against Meta SAM2-t, the smallest SAM 2 variant. While SAM provides unique automatic segmentation capabilities, YOLO models, particularly YOLOv8n-seg, YOLO11n-seg, and YOLO26n-seg, are significantly smaller, faster, and more computationally efficient.
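The parenthesized ratios in the table can be checked with a few lines of arithmetic. The sketch below assumes Meta SAM2-t is the baseline (the table does not state this, but the numbers agree) and copies the values from the rows above; the names `baseline`, `yolo_models`, and `ratios` are illustrative only.

```python
# Values copied from the comparison table: (size in MB, parameters in M, CPU ms/im).
baseline = (78.1, 38.9, 23430)  # Meta SAM2-t, assumed to be the reference row

yolo_models = {
    "YOLOv8n-seg": (7.1, 3.4, 24.8),
    "YOLO11n-seg": (6.2, 2.9, 24.3),
    "YOLO26n-seg": (6.7, 2.7, 25.2),
}


def ratios(model):
    """Return (size ratio, parameter ratio, speed ratio) versus the baseline."""
    size, params, speed = model
    return (
        round(baseline[0] / size, 1),
        round(baseline[1] / params, 1),
        round(baseline[2] / speed),
    )


table_ratios = {name: ratios(values) for name, values in yolo_models.items()}
for name, (size_x, params_x, speed_x) in table_ratios.items():
    print(f"{name}: {size_x}x smaller, {params_x}x fewer parameters, {speed_x}x faster")
```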

SAM speeds measured with PyTorch, YOLO speeds measured with ONNX Runtime. Tests run on a 2025 Apple M4 Air with 16GB of RAM using torch==2.10.0, ultralytics==8.4.31, and onnxruntime==1.24.4. To reproduce this test:

from ultralytics import ASSETS, SAM, YOLO, FastSAM

# Profile SAM-b, SAM2-b, SAM2-t, MobileSAM
for file in ["sam_b.pt", "sam2_b.pt", "sam2_t.pt", "mobile_sam.pt"]:
    model = SAM(file)
    model.info()
    model(ASSETS)

# Profile FastSAM-s
model = FastSAM("FastSAM-s.pt")
model.info()
model(ASSETS)

# Profile YOLO models (ONNX)
for file_name in ["yolov8n-seg.pt", "yolo11n-seg.pt", "yolo26n-seg.pt"]:
    model = YOLO(file_name)
    model.info()
    # Export to ONNX, then reload the exported model for inference
    onnx_path = model.export(format="onnx", dynamic=True)
    model = YOLO(onnx_path)
    model(ASSETS)

Usage Examples

The FastSAM models are easy to integrate into your Python applications. Ultralytics provides a user-friendly Python API and CLI commands to streamline development.

Predict Usage

To segment objects in an image, run prediction with the model as shown below:

from ultralytics import FastSAM

# Define an inference source

source = "path/to/bus.jpg"

# Create a FastSAM model

model = FastSAM("FastSAM-s.pt") # or FastSAM-x.pt

# Run inference on an image

everything_results = model(source, device="cpu", retina_masks=True, imgsz=1024, conf=0.4, iou=0.9)

# Run inference with bboxes prompt

results = model(source, bboxes=[439, 437, 524, 709])

# Run inference with points prompt

results = model(source, points=[[200, 200]], labels=[1])

# Run inference with texts prompt

results = model(source, texts="a photo of a dog")

# Run inference with bboxes and points and texts prompt at the same time

results = model(source, bboxes=[439, 437, 524, 709], points=[[200, 200]], labels=[1], texts="a photo of a dog")

This snippet demonstrates the simplicity of loading a pretrained model and running a prediction on an image.

Alternatively, you can run inference on an image once to obtain the segment-everything results, then apply prompts to those results as many times as needed without running inference again:

from ultralytics.models.fastsam import FastSAMPredictor

# Create FastSAMPredictor

overrides = dict(conf=0.25, task="segment", mode="predict", model="FastSAM-s.pt", save=False, imgsz=1024)

predictor = FastSAMPredictor(overrides=overrides)

# Segment everything

everything_results = predictor("ultralytics/assets/bus.jpg")

# Prompt inference

bbox_results = predictor.prompt(everything_results, bboxes=[[200, 200, 300, 300]])

point_results = predictor.prompt(everything_results, points=[200, 200])

text_results = predictor.prompt(everything_results, texts="a photo of a dog")

All results returned in the examples above are Results objects, which give easy access to the predicted masks and the source image.

Val Usage

Validation of the model on a dataset can be done as follows:

from ultralytics import FastSAM

# Create a FastSAM model

model = FastSAM("FastSAM-s.pt") # or FastSAM-x.pt

# Validate the model

results = model.val(data="coco8-seg.yaml")

Note that FastSAM supports detection and segmentation of only a single object class: it recognizes and segments all objects as the same class. Therefore, when preparing the dataset, you need to convert all object category IDs to 0.
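One way to do the category conversion is to rewrite the label files before validation. The sketch below assumes YOLO-style text labels, with one object per line and the class ID as the first token; `to_single_class` is a hypothetical helper, not part of the Ultralytics API, and the sample values are made up for illustration.

```python
def to_single_class(label_text: str) -> str:
    """Rewrite every annotation line so that its class ID becomes 0."""
    converted_lines = []
    for line in label_text.splitlines():
        parts = line.split()
        if not parts:
            continue  # skip blank lines
        parts[0] = "0"  # first token is assumed to be the class ID
        converted_lines.append(" ".join(parts))
    return "\n".join(converted_lines)


# Two made-up segmentation annotations with class IDs 5 and 17.
sample = "5 0.10 0.20 0.30 0.20 0.30 0.40\n17 0.50 0.50 0.60 0.50 0.60 0.60\n"
converted = to_single_class(sample)
print(converted)
```

In practice you would apply the function to each label file in a copy of the dataset, leaving the polygon coordinates untouched.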

Track Usage

To perform object tracking on a video, use the track method as shown below:

from ultralytics import FastSAM

# Create a FastSAM model

model = FastSAM("FastSAM-s.pt") # or FastSAM-x.pt

# Track with a FastSAM model on a video

results = model.track(source="path/to/video.mp4", imgsz=640)

FastSAM Official Usage

FastSAM is also available directly from the https://github.com/CASIA-IVA-Lab/FastSAM repository. Here is a brief overview of the typical steps you might take to use FastSAM:

Installation

- Clone the FastSAM repository:

    git clone https://github.com/CASIA-IVA-Lab/FastSAM.git

- Create and activate a Conda environment with Python 3.9:

    conda create -n FastSAM python=3.9
    conda activate FastSAM

- Navigate to the cloned repository and install the required packages:

    cd FastSAM
    pip install -r requirements.txt

- Install the CLIP model:

    pip install git+https://github.com/ultralytics/CLIP.git

Example Usage

- Download a model checkpoint.

- Use FastSAM for inference. Example commands:

  - Segment everything in an image:

      python Inference.py --model_path ./weights/FastSAM.pt --img_path ./images/dogs.jpg

  - Segment specific objects using text prompt:

      python Inference.py --model_path ./weights/FastSAM.pt --img_path ./images/dogs.jpg --text_prompt "the yellow dog"

  - Segment objects within a bounding box (provide box coordinates in xywh format):

      python Inference.py --model_path ./weights/FastSAM.pt --img_path ./images/dogs.jpg --box_prompt "[570,200,230,400]"

  - Segment objects near specific points:

      python Inference.py --model_path ./weights/FastSAM.pt --img_path ./images/dogs.jpg --point_prompt "[[520,360],[620,300]]" --point_label "[1,0]"

- Additionally, you can try FastSAM through the CASIA-IVA-Lab Colab demo.
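The box prompt above is given in xywh format. If you want to reuse such a box with an interface that expects corner coordinates (x1, y1, x2, y2), a conversion along these lines may help. This is a minimal sketch that assumes (x, y) is the top-left corner of the box; `xywh_to_xyxy` is a hypothetical helper, so check the convention of the tool you are targeting before relying on it.

```python
def xywh_to_xyxy(box):
    """Convert [x, y, width, height] to [x1, y1, x2, y2].

    Assumes (x, y) is the top-left corner of the box.
    """
    x, y, w, h = box
    return [x, y, x + w, y + h]


# The box used in the command-line example above.
corner_box = xywh_to_xyxy([570, 200, 230, 400])
print(corner_box)
```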

Citations and Acknowledgments

We would like to acknowledge the FastSAM authors for their significant contributions in the field of real-time instance segmentation:

@misc{zhao2023fast,
      title={Fast Segment Anything},
      author={Xu Zhao and Wenchao Ding and Yongqi An and Yinglong Du and Tao Yu and Min Li and Ming Tang and Jinqiao Wang},
      year={2023},
      eprint={2306.12156},
      archivePrefix={arXiv},
      primaryClass={cs.CV}
}

The original FastSAM paper can be found on arXiv. The authors have made their work publicly available, and the codebase can be accessed on GitHub. We appreciate their efforts in advancing the field and making their work accessible to the broader community.

FAQ

What is FastSAM and how does it differ from SAM?

FastSAM, short for Fast Segment Anything Model, is a real-time convolutional neural network (CNN)-based solution designed to reduce computational demands while maintaining high performance in object segmentation tasks. Unlike the Segment Anything Model (SAM), which uses a heavier Transformer-based architecture, FastSAM leverages Ultralytics YOLOv8-seg for efficient instance segmentation in two stages: all-instance segmentation followed by prompt-guided selection.

How does FastSAM achieve real-time segmentation performance?

FastSAM achieves real-time segmentation by decoupling the segmentation task into two stages: all-instance segmentation with YOLOv8-seg, followed by prompt-guided selection. By utilizing the computational efficiency of CNNs, FastSAM significantly reduces computational and resource demands while maintaining competitive performance. This two-stage approach enables FastSAM to deliver fast and efficient segmentation suitable for applications requiring quick results.

What are the practical applications of FastSAM?

FastSAM is practical for a variety of computer vision tasks that require real-time segmentation performance. Applications include:

- Industrial automation for quality control and assurance

- Real-time video analysis for security and surveillance

- Autonomous vehicles for object detection and segmentation

- Medical imaging for precise and quick segmentation tasks

Its ability to handle various user interaction prompts makes FastSAM adaptable and flexible for diverse scenarios.

How do I use the FastSAM model for inference in Python?

To use FastSAM for inference in Python, you can follow the example below:

from ultralytics import FastSAM

# Define an inference source

source = "path/to/bus.jpg"

# Create a FastSAM model

model = FastSAM("FastSAM-s.pt") # or FastSAM-x.pt

# Run inference on an image

everything_results = model(source, device="cpu", retina_masks=True, imgsz=1024, conf=0.4, iou=0.9)

# Run inference with bboxes prompt

results = model(source, bboxes=[439, 437, 524, 709])

# Run inference with points prompt

results = model(source, points=[[200, 200]], labels=[1])

# Run inference with texts prompt

results = model(source, texts="a photo of a dog")

# Run inference with bboxes and points and texts prompt at the same time

results = model(source, bboxes=[439, 437, 524, 709], points=[[200, 200]], labels=[1], texts="a photo of a dog")

For more details on inference methods, check the Predict Usage section of the documentation.

What types of prompts does FastSAM support for segmentation tasks?

FastSAM supports multiple prompt types for guiding the segmentation tasks:

- Everything Prompt: Generates segmentation for all visible objects.

- Bounding Box (BBox) Prompt: Segments objects within a specified bounding box.

- Text Prompt: Uses a descriptive text to segment objects matching the description.

- Point Prompt: Segments objects near specific user-defined points.

This flexibility allows FastSAM to adapt to a wide range of user interaction scenarios, enhancing its utility across different applications. For more information on using these prompts, refer to the Key Features section.
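To make the text prompt more concrete, the sketch below ranks candidate regions by cosine similarity between a text embedding and per-region image embeddings. It rests on an assumption: the installation steps mention CLIP, which suggests an embedding comparison of this kind, but the vectors here are toy values and `select_by_text` is a hypothetical helper, not FastSAM's actual code.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def select_by_text(region_embeddings, text_embedding):
    """Return the index of the best-matching region and all similarity scores."""
    scores = [cosine(emb, text_embedding) for emb in region_embeddings]
    best = max(range(len(scores)), key=scores.__getitem__)
    return best, scores


# Toy embeddings standing in for encoded mask crops and an encoded text prompt.
region_embeddings = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.6, 0.8, 0.0]]
text_embedding = [0.0, 1.0, 0.0]

best_index, scores = select_by_text(region_embeddings, text_embedding)
print(best_index, scores)
```

In a real pipeline the embeddings would come from an image-text model applied to each segmented region and to the prompt string; only the ranking step is shown here.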