Coral Edge TPU on a Raspberry Pi with Ultralytics YOLO26 🚀

What is a Coral Edge TPU?

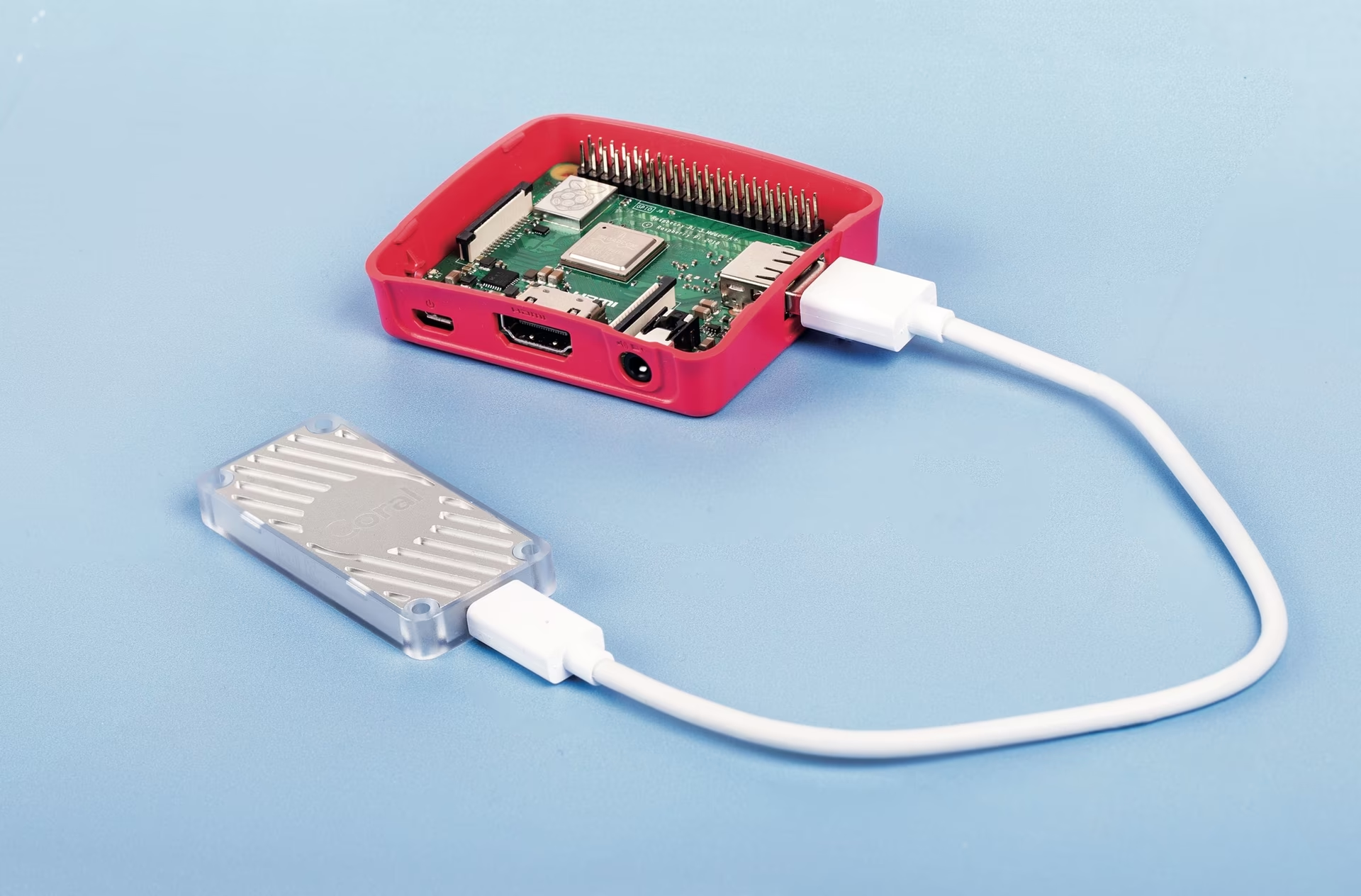

The Coral Edge TPU is a compact device that adds an Edge TPU coprocessor to your system. It enables low-power, high-performance ML inference for TensorFlow Lite models. Read more on the Coral Edge TPU home page.

Watch: How to Run Inference on Raspberry Pi using Google Coral Edge TPU

Boost Raspberry Pi Performance with the Coral Edge TPU

Many people want to run their models on an embedded or mobile device such as a Raspberry Pi, since these devices are very power efficient and suit a wide range of applications. However, inference performance on these devices is usually poor, even with formats like ONNX or OpenVINO. The Coral Edge TPU is a great solution to this problem, since it can be used with a Raspberry Pi to speed up inference considerably.

Edge TPU on Raspberry Pi with TensorFlow Lite (New)⭐

The existing Coral guide on using the Edge TPU with a Raspberry Pi is outdated, and the current Coral Edge TPU runtime builds no longer work with current TensorFlow Lite runtime versions. In addition, Google appears to have abandoned the Coral project entirely, with no updates between 2021 and 2025. This guide shows you how to get the Edge TPU working with the latest versions of the TensorFlow Lite runtime and an updated Coral Edge TPU runtime on a Raspberry Pi single-board computer (SBC).

Prerequisites

- Raspberry Pi 4B (2 GB or more recommended) or Raspberry Pi 5 (Recommended)

- Raspberry Pi OS Bullseye/Bookworm (64-bit) with desktop (Recommended)

- Coral USB Accelerator

- A non-ARM based platform for exporting an Ultralytics PyTorch model

Installation Walkthrough

This guide assumes that you already have a working Raspberry Pi OS installation and have installed ultralytics and all its dependencies. To install ultralytics, follow the quickstart guide to get set up before continuing here.

Installing the Edge TPU runtime

First, we need to install the Edge TPU runtime. Many versions are available, so you need to choose the one that matches your operating system. The high-frequency version runs the Edge TPU at a higher clock speed, which improves performance. However, it can cause the Edge TPU to thermally throttle, so some form of cooling is recommended.

| Raspberry Pi OS | High-frequency mode | Version to download |
|---|---|---|
| Bullseye 32-bit | No | libedgetpu1-std_ ... .bullseye_armhf.deb |
| Bullseye 64-bit | No | libedgetpu1-std_ ... .bullseye_arm64.deb |
| Bullseye 32-bit | Yes | libedgetpu1-max_ ... .bullseye_armhf.deb |
| Bullseye 64-bit | Yes | libedgetpu1-max_ ... .bullseye_arm64.deb |
| Bookworm 32-bit | No | libedgetpu1-std_ ... .bookworm_armhf.deb |
| Bookworm 64-bit | No | libedgetpu1-std_ ... .bookworm_arm64.deb |
| Bookworm 32-bit | Yes | libedgetpu1-max_ ... .bookworm_armhf.deb |
| Bookworm 64-bit | Yes | libedgetpu1-max_ ... .bookworm_arm64.deb |
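The table can also be read as a simple lookup from OS release, architecture, and clock mode to a file-name pattern. The sketch below is only an illustration of that mapping: the function name `edgetpu_package_pattern` is hypothetical, and the version part of the file name stays elided exactly as in the table.

```python
def edgetpu_package_pattern(os_codename: str, arch: str, high_frequency: bool) -> str:
    """Return the file-name pattern from the table for a given setup.

    The version segment is elided in the table and is left elided here.
    """
    codename = os_codename.lower()
    if codename not in {"bullseye", "bookworm"}:
        raise ValueError(f"Release not covered by the table: {os_codename}")
    if arch not in {"armhf", "arm64"}:  # armhf = 32-bit, arm64 = 64-bit
        raise ValueError(f"Architecture not covered by the table: {arch}")
    variant = "max" if high_frequency else "std"  # max = high-frequency build
    return f"libedgetpu1-{variant}_ ... .{codename}_{arch}.deb"


# Example: 64-bit Bookworm, standard clock speed
print(edgetpu_package_pattern("bookworm", "arm64", high_frequency=False))
```

On the Raspberry Pi itself, `dpkg --print-architecture` reports `armhf` or `arm64`, which can be passed in as `arch`.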

Download the latest version here.

After downloading the file, you can install it with the following command:

sudo dpkg -i path/to/package.deb

After installing the runtime, plug your Coral Edge TPU into a USB 3.0 port on the Raspberry Pi so that the new udev rule can take effect.
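Once the device is plugged in, one way to confirm that the system sees it is to look for it in the `lsusb` output. The sketch below is an illustration only. The USB IDs are an assumption not stated in this guide: the Coral USB Accelerator is commonly reported as `1a6e:089a` before its first use and as `18d1:9302` after the runtime has initialized it. The helper name `find_coral_devices` is hypothetical.

```python
import re

# Assumed USB vendor:product IDs for the Coral USB Accelerator (not from this guide).
CORAL_USB_IDS = {"1a6e:089a", "18d1:9302"}


def find_coral_devices(lsusb_output: str) -> list[str]:
    """Return the lsusb lines whose ID matches one of the assumed Coral IDs."""
    matches = []
    for line in lsusb_output.splitlines():
        found = re.search(r"ID ([0-9a-fA-F]{4}:[0-9a-fA-F]{4})", line)
        if found and found.group(1).lower() in CORAL_USB_IDS:
            matches.append(line.strip())
    return matches


# Illustrative sample text; real output will differ per machine.
sample = (
    "Bus 002 Device 002: ID 1a6e:089a Global Unichip Corp.\n"
    "Bus 001 Device 001: ID 1d6b:0002 Linux Foundation 2.0 root hub"
)
print(find_coral_devices(sample))
```

On the Raspberry Pi, the real text can be obtained with `subprocess.run(["lsusb"], capture_output=True, text=True).stdout` and passed to the same function.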

Important

If you already have the Coral Edge TPU runtime installed, uninstall it using the following command.

# If you installed the standard version

sudo apt remove libedgetpu1-std

# If you installed the high-frequency version

sudo apt remove libedgetpu1-max

Export to Edge TPU

To use the Edge TPU, you need to convert your model into a compatible format. Because the Edge TPU compiler is not available on ARM, it is recommended that you run the export on Google Colab, on an x86_64 Linux machine, in the Ultralytics Docker container, or on the Ultralytics Platform. See the Export mode for the available arguments.
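Since the compiler is not available on ARM, a small guard can catch an export attempted on the wrong machine. This is a minimal sketch, assuming that "Linux on x86_64" is the condition to test; the function name `can_run_edgetpu_export` is hypothetical and not part of Ultralytics.

```python
import platform


def can_run_edgetpu_export(system: str, machine: str) -> bool:
    """Return True for an x86_64 Linux host, the setup this guide recommends."""
    return system == "Linux" and machine.lower() in {"x86_64", "amd64"}


if can_run_edgetpu_export(platform.system(), platform.machine()):
    print("This machine matches the recommended export platform.")
else:
    print("Export on Google Colab, an x86_64 Linux machine, or in Docker instead.")
```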

from ultralytics import YOLO

# Load a model

model = YOLO("path/to/model.pt") # Load an official model or custom model

# Export the model

model.export(format="edgetpu")

The exported model will be saved in the <model_name>_saved_model/ folder with the name <model_name>_full_integer_quant_edgetpu.tflite. Make sure the file name ends with the _edgetpu.tflite suffix; otherwise, Ultralytics will not detect that you are using an Edge TPU model.
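The naming rule is easy to check before copying a file to the Raspberry Pi. The sketch below builds the expected output path from the pattern described above and tests a file name for the required suffix. Both helper names and the model name `my_model` are hypothetical.

```python
from pathlib import Path

EDGETPU_SUFFIX = "_edgetpu.tflite"  # suffix Ultralytics relies on, per this guide


def expected_export_path(model_name: str) -> Path:
    """Build <model_name>_saved_model/<model_name>_full_integer_quant_edgetpu.tflite."""
    return Path(f"{model_name}_saved_model") / f"{model_name}_full_integer_quant{EDGETPU_SUFFIX}"


def looks_like_edgetpu_model(path) -> bool:
    """Return True if the file name ends with the _edgetpu.tflite suffix."""
    return Path(path).name.endswith(EDGETPU_SUFFIX)


exported = expected_export_path("my_model")  # "my_model" is a placeholder name
print(exported.as_posix(), looks_like_edgetpu_model(exported))
```

A file that was renamed and lost the suffix, such as `my_model.tflite`, fails the check.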

Running the model

Before you can actually run the model, you will need to install the correct libraries.
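To see which of the two packages is currently present in the active Python environment, a quick check like the one below can help. It is a sketch using only the standard library; the function name `installed_tflite_backends` is hypothetical. It looks the modules up without importing them.

```python
from importlib.util import find_spec


def installed_tflite_backends() -> dict:
    """Report whether tensorflow and tflite_runtime can be found, without importing them."""
    return {name: find_spec(name) is not None for name in ("tensorflow", "tflite_runtime")}


status = installed_tflite_backends()
print(status)
if status["tensorflow"]:
    print("TensorFlow is installed; this guide recommends uninstalling it first.")
```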

If TensorFlow is already installed, uninstall it with the following command:

pip uninstall tensorflow tensorflow-aarch64

Then install or update tflite-runtime:

pip install -U tflite-runtime

Now you can run inference using the following code:

from ultralytics import YOLO

# Load a model

model = YOLO("path/to/<model_name>_full_integer_quant_edgetpu.tflite") # Load an official model or custom model

# Run Prediction

model.predict("path/to/source.png")

See the Predict page for full details on prediction mode.

If you have multiple Edge TPUs, you can use the following code to select a specific one.

from ultralytics import YOLO

# Load a model

model = YOLO("path/to/<model_name>_full_integer_quant_edgetpu.tflite") # Load an official model or custom model

# Run Prediction

model.predict("path/to/source.png") # Inference defaults to the first TPU

model.predict("path/to/source.png", device="tpu:0") # Select the first TPU

model.predict("path/to/source.png", device="tpu:1") # Select the second TPU

Benchmarks

Tested with Raspberry Pi OS Bookworm 64-bit and a USB Coral Edge TPU.

The figures are inference time only; pre- and post-processing are not included.

| Image size | Model | Standard inference time (ms) | High-frequency inference time (ms) |
|---|---|---|---|
| 320 | YOLOv8n | 32.2 | 26.7 |
| 320 | YOLOv8s | 47.1 | 39.8 |
| 512 | YOLOv8n | 73.5 | 60.7 |
| 512 | YOLOv8s | 149.6 | 125.3 |

On average:

- The Raspberry Pi 5 is 22% faster than the Raspberry Pi 4B in standard mode.

- The Raspberry Pi 5 is 30.2% faster than the Raspberry Pi 4B in high-frequency mode.

- High-frequency mode is 28.4% faster than standard mode.
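To collect comparable numbers on your own hardware, one option is to average the inference time that Ultralytics reports for each prediction. The sketch below assumes that each result exposes a `speed` dictionary with an `"inference"` entry in milliseconds. The helper names `mean_inference_ms` and `benchmark`, and the run count of 20, are illustrative choices rather than the method used for the table above.

```python
from statistics import mean


def mean_inference_ms(speeds) -> float:
    """Average the 'inference' entry (ms) over a list of per-run speed dicts."""
    return mean(run["inference"] for run in speeds)


def benchmark(model_path: str, source: str, runs: int = 20) -> float:
    """Run repeated predictions and return the mean inference time in ms."""
    from ultralytics import YOLO  # imported lazily so the helper above has no dependency

    model = YOLO(model_path)
    model.predict(source, verbose=False)  # warm-up run, not counted
    speeds = [model.predict(source, verbose=False)[0].speed for _ in range(runs)]
    return mean_inference_ms(speeds)


# Example with made-up numbers, only to show the averaging step
print(mean_inference_ms([{"inference": 30.0}, {"inference": 34.0}]))
```

Like the table, this counts inference time only and leaves out pre- and post-processing.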

FAQ

What is a Coral Edge TPU and how does it improve Raspberry Pi performance with Ultralytics YOLO26?

The Coral Edge TPU is a compact device designed to add an Edge TPU coprocessor to your system. This coprocessor enables low-power, high-performance machine learning inference and is optimized for TensorFlow Lite models. When used with a Raspberry Pi, the Edge TPU accelerates ML model inference and significantly boosts performance, especially for Ultralytics YOLO26 models. You can read more about the Coral Edge TPU on its home page.

How do I install the Coral Edge TPU runtime on a Raspberry Pi?

To install the Coral Edge TPU runtime on your Raspberry Pi, download the appropriate .deb package for your Raspberry Pi OS version from this link. Once downloaded, use the following command to install it:

sudo dpkg -i path/to/package.deb

Make sure to uninstall any previous Coral Edge TPU runtime versions by following the steps outlined in the Installation Walkthrough section.

Can I export my Ultralytics YOLO26 model to be compatible with the Coral Edge TPU?

Yes, you can export your Ultralytics YOLO26 model to be compatible with the Coral Edge TPU. It is recommended that you run the export on Google Colab, on an x86_64 Linux machine, or in the Ultralytics Docker container. You can also use the Ultralytics Platform to export. Here is how to export your model using Python:

from ultralytics import YOLO

# Load a model

model = YOLO("path/to/model.pt") # Load an official model or custom model

# Export the model

model.export(format="edgetpu")

For more information, refer to the Export mode documentation.

What should I do if TensorFlow is already installed on my Raspberry Pi, but I want to use tflite-runtime instead?

If you have TensorFlow installed on your Raspberry Pi and need to switch to tflite-runtime, first uninstall TensorFlow using:

pip uninstall tensorflow tensorflow-aarch64

Then install or update tflite-runtime with the following command:

pip install -U tflite-runtime

For detailed instructions, refer to the Running the model section.

How do I run inference with an exported YOLO26 model on a Raspberry Pi using the Coral Edge TPU?

After exporting your YOLO26 model to an Edge TPU-compatible format, you can run inference using the following code:

from ultralytics import YOLO

# Load a model

model = YOLO("path/to/edgetpu_model.tflite") # Load an official model or custom model

# Run Prediction

model.predict("path/to/source.png")

Full details on prediction mode are available on the Predict page.