Ultralytics Docs: Using YOLO26 with SAHI for Sliced Inference

Welcome to the Ultralytics documentation on using YOLO26 with SAHI (Slicing Aided Hyper Inference). This guide explains what SAHI is, why sliced inference matters for large, high-resolution images, and how to combine it with YOLO26 to improve object detection, particularly for small objects.

Introduction to SAHI

SAHI (Slicing Aided Hyper Inference) is an open-source library for running object detection on large, high-resolution images. It partitions each image into smaller, overlapping slices, runs the detector on every slice, and then maps the detections back onto the original image, merging duplicates along the way. SAHI works with a range of detection models, including the Ultralytics YOLO family, so you can keep your existing model while controlling how much of the image is processed in each pass.


Key Features of SAHI

- Seamless Integration: SAHI works directly with YOLO models, so you can add slicing to an existing detection pipeline with only a few lines of code.

- Resource Efficiency: Because the detector only sees one small slice at a time, memory use per inference pass stays low, which makes high-resolution detection feasible on hardware with limited resources.

- High Accuracy: When stitching results together, SAHI merges overlapping detections from neighboring slices so that an object seen in two slices is not reported twice.
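To make the merging step concrete, here is a minimal sketch of one way to resolve duplicate boxes produced by overlapping slices. SAHI provides several merging strategies (by default it merges matched boxes rather than only discarding the weaker one); this sketch instead uses plain greedy non-maximum suppression (NMS), class-agnostic for brevity. The helpers iou and greedy_nms are hypothetical and not part of SAHI's API.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in full-image pixels


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def greedy_nms(boxes: List[Box], scores: List[float], iou_threshold: float = 0.5) -> List[int]:
    """Return indices of the boxes kept by greedy NMS, highest score first."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep: List[int] = []
    for i in order:
        # Keep a box only if it does not overlap an already-kept box too strongly
        if all(iou(boxes[i], boxes[k]) < iou_threshold for k in keep):
            keep.append(i)
    return keep


# Two neighboring slices both detected the same vehicle; the third box is a different vehicle
boxes = [(100, 100, 140, 130), (102, 101, 141, 131), (300, 300, 330, 320)]
scores = [0.9, 0.8, 0.7]
print(greedy_nms(boxes, scores))  # [0, 2]
```

Suppression simply drops the lower-scoring duplicate; merging strategies instead combine matched boxes into one, which can give tighter boxes for objects cut by a slice border.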

What is Sliced Inference?

Sliced inference is the practice of dividing a large or high-resolution image into smaller, usually overlapping segments (slices), running object detection on each slice, and then translating the slice-level detections back into the coordinates of the original image, where overlapping results are merged. The technique is useful when computational resources are limited, or when an image is so large that processing it in a single pass would exhaust memory or shrink small objects until they can no longer be detected.
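The slicing step can be sketched as computing a grid of overlapping windows. The sketch below reuses the parameter names that appear later in this guide (slice_height, slice_width, overlap_height_ratio, overlap_width_ratio), but it is not SAHI's own code: SAHI computes its slice grid internally, and its exact rounding and edge handling may differ. The helpers axis_starts and slice_windows are hypothetical.

```python
from typing import List, Tuple


def axis_starts(length: int, size: int, overlap: int) -> List[int]:
    """Start offsets of windows along one axis; the last window ends exactly at `length`."""
    if size >= length:
        return [0]  # a single window covers the whole axis
    if not 0 <= overlap < size:
        raise ValueError("overlap must be in [0, size)")
    stride = size - overlap
    starts = list(range(0, length - size, stride))
    starts.append(length - size)  # shift the final window back inside the image
    return starts


def slice_windows(
    image_height: int,
    image_width: int,
    slice_height: int = 256,
    slice_width: int = 256,
    overlap_height_ratio: float = 0.2,
    overlap_width_ratio: float = 0.2,
) -> List[Tuple[int, int, int, int]]:
    """Return (x_min, y_min, x_max, y_max) windows that tile the image with overlap."""
    ys = axis_starts(image_height, slice_height, int(overlap_height_ratio * slice_height))
    xs = axis_starts(image_width, slice_width, int(overlap_width_ratio * slice_width))
    return [
        (x, y, min(x + slice_width, image_width), min(y + slice_height, image_height))
        for y in ys
        for x in xs
    ]


windows = slice_windows(1000, 1000)
print(len(windows), windows[0], windows[-1])  # 25 (0, 0, 256, 256) (744, 744, 1000, 1000)
```

With 256-pixel slices and a 0.2 overlap ratio, consecutive slices share 51 pixels, so a 1000 x 1000 image needs a 5 x 5 grid of slices.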

Benefits of Sliced Inference

- Reduced Computational Burden: Each slice is small, so a single forward pass needs little memory, which allows very large images to be processed on lower-end hardware. Note that total inference time grows with the number of slices.

- Preserved Detection Quality: Each slice is processed without first shrinking the whole image to the model's input size, so small objects keep enough pixels to be detected, provided the slices are large enough to contain the objects of interest. Overlap between slices helps catch objects that straddle slice borders.

- Enhanced Scalability: The same model can be applied to images of very different sizes and resolutions, which makes the technique suitable for applications ranging from satellite imagery to medical diagnostics.
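Simple arithmetic shows why slicing helps with small objects. The sketch assumes the detector resizes every input to a fixed 640-pixel side (ignoring letterbox padding), and the 4000-pixel image and 20-pixel vehicle are hypothetical numbers chosen for illustration; apparent_size is a hypothetical helper.

```python
def apparent_size(object_px: float, input_px: int, model_input_px: int = 640) -> float:
    """Object size in pixels after resizing an input of `input_px` to `model_input_px`.

    Assumes uniform scaling to a fixed model input size (letterbox padding ignored).
    """
    return object_px * model_input_px / input_px


# Hypothetical: a 20-pixel vehicle in a 4000-pixel-wide aerial image
full_image = apparent_size(20, 4000)  # whole image squeezed into 640 px
sliced = apparent_size(20, 256)       # one 256 px slice scaled up to 640 px
print(f"{full_image:.1f} px vs {sliced:.1f} px")  # 3.2 px vs 50.0 px
```

Under these assumptions the vehicle spans about 3 pixels when the full image is resized at once, but about 50 pixels inside a single slice, which is far easier for the detector to recognize.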

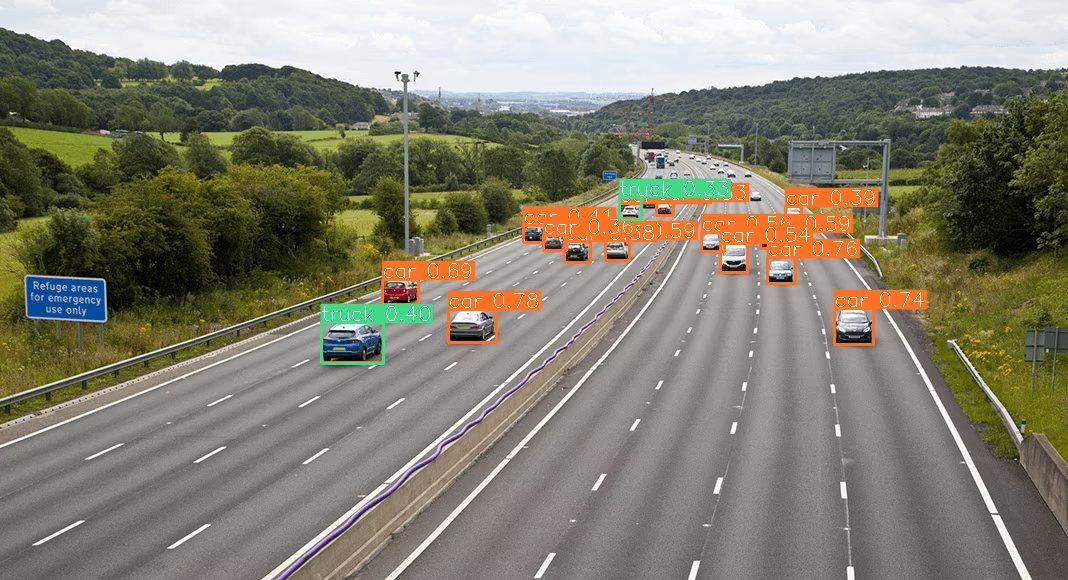

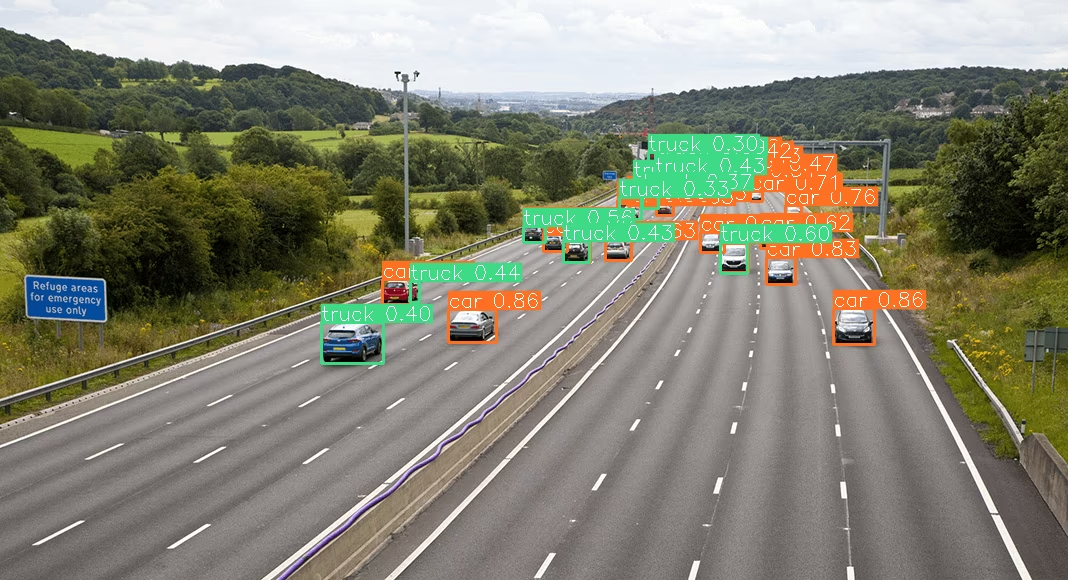

| YOLO26 without SAHI | YOLO26 with SAHI |

|---|---|

|  |

Installation and Preparation

Installation

To get started, install the latest versions of SAHI and Ultralytics:

```bash
pip install -U ultralytics sahi
```
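Because the examples in this guide assume recent releases of both packages, it can save debugging time to confirm what is actually installed. This standard-library sketch (installed_versions is a hypothetical helper, not part of either library) reads the installed package metadata and reports None for any package that is missing.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Dict, Iterable, Optional


def installed_versions(packages: Iterable[str] = ("ultralytics", "sahi")) -> Dict[str, Optional[str]]:
    """Map each package name to its installed version string, or None if not installed."""
    result: Dict[str, Optional[str]] = {}
    for name in packages:
        try:
            result[name] = version(name)
        except PackageNotFoundError:
            result[name] = None  # package is not installed in this environment
    return result


print(installed_versions())
```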

Import Modules and Download Resources

Here's how to download some test images:

```python
from sahi.utils.file import download_from_url

# Download test images
download_from_url(
    "https://raw.githubusercontent.com/obss/sahi/main/demo/demo_data/small-vehicles1.jpeg",
    "demo_data/small-vehicles1.jpeg",
)
download_from_url(
    "https://raw.githubusercontent.com/obss/sahi/main/demo/demo_data/terrain2.png",
    "demo_data/terrain2.png",
)
```

Standard Inference with YOLO26

Instantiate the Model

You can instantiate a YOLO26 model for object detection like this:

```python
from sahi import AutoDetectionModel

detection_model = AutoDetectionModel.from_pretrained(
    model_type="ultralytics",
    model_path="yolo26n.pt",
    confidence_threshold=0.3,
    device="cpu",  # or 'cuda:0'
)
```
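The device argument above is hard-coded to "cpu". If the same script should use a GPU whenever one is present, a small helper can choose the value at runtime. This is a sketch: pick_device is hypothetical and not part of SAHI, and it assumes PyTorch (an Ultralytics dependency) is importable, falling back to CPU otherwise.

```python
def pick_device() -> str:
    """Return 'cuda:0' when PyTorch reports a usable CUDA GPU, otherwise 'cpu'."""
    try:
        import torch  # normally installed alongside ultralytics
    except ImportError:
        return "cpu"
    return "cuda:0" if torch.cuda.is_available() else "cpu"


device = pick_device()
print(device)
```

You would then pass device=pick_device() to AutoDetectionModel.from_pretrained instead of a fixed string.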

Perform Standard Prediction

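Run standard (non-sliced) inference on the full image by passing its path:

```python
from sahi.predict import get_prediction

result = get_prediction("demo_data/small-vehicles1.jpeg", detection_model)

# Save a visualization of the predictions to demo_data/prediction_visual.png
result.export_visuals(export_dir="demo_data/", hide_conf=True)
```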

Visualize Results

Open the exported image to inspect the predicted bounding boxes (and masks, if the model predicts them):

```python
from PIL import Image

# Open the predicted image
processed_image = Image.open("demo_data/prediction_visual.png")

# Display the predicted image
processed_image.show()
```

Sliced Inference with YOLO26

Perform sliced inference by specifying the slice dimensions and overlap ratios:

```python
from PIL import Image
from sahi.predict import get_sliced_prediction

result = get_sliced_prediction(
    "demo_data/small-vehicles1.jpeg",
    detection_model,
    slice_height=256,
    slice_width=256,
    overlap_height_ratio=0.2,
    overlap_width_ratio=0.2,
)

# Export results
result.export_visuals(export_dir="demo_data/", hide_conf=True)

# Open the predicted image
processed_image = Image.open("demo_data/prediction_visual.png")

# Display the predicted image
processed_image.show()
```
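get_sliced_prediction handles coordinate bookkeeping internally. Conceptually, each box predicted on a slice is offset by that slice's top-left corner so that all detections live in the original image's pixel space before overlapping ones are merged. A minimal sketch of that translation step (to_full_image is a hypothetical helper, not SAHI's API):

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def to_full_image(boxes: List[Box], slice_origin: Tuple[int, int]) -> List[Box]:
    """Translate boxes predicted on a slice into full-image pixel coordinates."""
    ox, oy = slice_origin  # top-left corner (x_min, y_min) of the slice
    return [(x1 + ox, y1 + oy, x2 + ox, y2 + oy) for x1, y1, x2, y2 in boxes]


# A vehicle detected at (10, 20)-(50, 45) inside the slice whose top-left corner is (205, 410)
print(to_full_image([(10, 20, 50, 45)], (205, 410)))  # [(215, 430, 255, 455)]
```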

Handling Prediction Results

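The result returned by get_prediction or get_sliced_prediction is a PredictionResult object. Its object_prediction_list holds the individual detections, and the object can be converted into several annotation formats: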

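```python
# Access the list of individual object predictions
object_prediction_list = result.object_prediction_list

# Convert to COCO annotation, COCO prediction, imantics, and FiftyOne formats
# and print the first three entries of each (the imantics and FiftyOne
# conversions may require those optional packages to be installed)
print(result.to_coco_annotations()[:3])
print(result.to_coco_predictions(image_id=1)[:3])
print(result.to_imantics_annotations()[:3])
print(result.to_fiftyone_detections()[:3])
```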
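The COCO formats store each box as [x, y, width, height] with (x, y) at the top-left corner, whereas detectors commonly report corner coordinates [x1, y1, x2, y2]. The sketch below shows that conversion and a single record in the COCO results layout (image_id, category_id, bbox, score). It is an illustration only; xyxy_to_coco and coco_prediction are hypothetical helpers, and SAHI's to_coco_predictions does this for you.

```python
from typing import Dict, List, Sequence


def xyxy_to_coco(box: Sequence[float]) -> List[float]:
    """Convert [x1, y1, x2, y2] corners to COCO's [x, y, width, height]."""
    x1, y1, x2, y2 = box
    return [x1, y1, x2 - x1, y2 - y1]


def coco_prediction(box: Sequence[float], score: float, category_id: int, image_id: int) -> Dict:
    """Build one record in the COCO detection-results layout."""
    return {
        "image_id": image_id,
        "category_id": category_id,
        "bbox": xyxy_to_coco(box),
        "score": round(score, 4),
    }


print(coco_prediction([215, 430, 255, 455], 0.87, category_id=3, image_id=1))
# {'image_id': 1, 'category_id': 3, 'bbox': [215, 430, 40, 25], 'score': 0.87}
```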

Batch Prediction

For batch prediction on a directory of images:

```python
from sahi.predict import predict

predict(
    model_type="ultralytics",
    model_path="yolo26n.pt",
    model_device="cpu",  # or 'cuda:0'
    model_confidence_threshold=0.4,
    source="path/to/dir",
    slice_height=256,
    slice_width=256,
    overlap_height_ratio=0.2,
    overlap_width_ratio=0.2,
)
```
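Batch runs over large directories can take a while, so it may help to check which files in the source directory look like images before starting. This standard-library sketch is illustrative only: list_images is a hypothetical helper, the extension set is an assumption, and SAHI applies its own file filtering when you pass source to predict.

```python
from pathlib import Path
from typing import List

# Assumed set of image extensions (SAHI's own list may differ)
IMAGE_EXTENSIONS = {".jpg", ".jpeg", ".png", ".bmp", ".tif", ".tiff", ".webp"}


def list_images(source: str) -> List[Path]:
    """Return image files directly inside `source`, sorted by path."""
    return sorted(
        p for p in Path(source).iterdir() if p.is_file() and p.suffix.lower() in IMAGE_EXTENSIONS
    )


images = list_images(".")  # replace "." with the directory you pass as source=
print(f"{len(images)} image(s) found")
```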

You are now ready to use YOLO26 with SAHI for both standard and sliced inference.

Citations and Acknowledgments

If you use SAHI in your research or development work, please cite the original SAHI paper and acknowledge the authors:

```bibtex
@article{akyon2022sahi,
  title={Slicing Aided Hyper Inference and Fine-tuning for Small Object Detection},
  author={Akyon, Fatih Cagatay and Altinuc, Sinan Onur and Temizel, Alptekin},
  journal={2022 IEEE International Conference on Image Processing (ICIP)},
  doi={10.1109/ICIP46576.2022.9897990},
  pages={966-970},
  year={2022}
}
```

We extend our thanks to the SAHI research group for creating and maintaining this invaluable resource for the computer vision community. For more information about SAHI and its creators, visit the SAHI GitHub repository.

FAQ

How can I integrate YOLO26 with SAHI for sliced inference in object detection?

Integrating Ultralytics YOLO26 with SAHI (Slicing Aided Hyper Inference) lets you run object detection on high-resolution images by partitioning them into manageable slices. This keeps memory usage per inference pass low while preserving detection accuracy, especially for small objects. To get started, install the ultralytics and sahi libraries:

```bash
pip install -U ultralytics sahi
```

Then, download test images:

```python
from sahi.utils.file import download_from_url

# Download test images
download_from_url(
    "https://raw.githubusercontent.com/obss/sahi/main/demo/demo_data/small-vehicles1.jpeg",
    "demo_data/small-vehicles1.jpeg",
)
download_from_url(
    "https://raw.githubusercontent.com/obss/sahi/main/demo/demo_data/terrain2.png",
    "demo_data/terrain2.png",
)
```

For more detailed instructions, refer to our Sliced Inference guide.

Why should I use SAHI with YOLO26 for object detection on large images?

Using SAHI with Ultralytics YOLO26 for object detection on large images offers several benefits:

- Reduced Computational Burden: Each slice needs little memory to process, making it feasible to run high-quality detection on hardware with limited resources.

- Maintained Detection Accuracy: SAHI merges overlapping detections from adjacent slices, so objects near slice borders are neither lost nor counted twice.

- Enhanced Scalability: Because the same slicing approach works across image sizes and resolutions, SAHI suits a wide range of applications, such as satellite imagery analysis and medical diagnostics.

Learn more about the benefits of sliced inference in our documentation.

Can I visualize prediction results when using YOLO26 with SAHI?

Yes, you can visualize prediction results when using YOLO26 with SAHI. Here's how you can export and visualize the results:

```python
from PIL import Image

result.export_visuals(export_dir="demo_data/", hide_conf=True)

processed_image = Image.open("demo_data/prediction_visual.png")
processed_image.show()
```

This code saves the visualized predictions to the specified directory, after which you can open the image in your notebook or application. For a detailed guide, check out the Standard Inference section.

What features does SAHI offer for improving YOLO26 object detection?

SAHI (Slicing Aided Hyper Inference) offers several features that complement Ultralytics YOLO26 for object detection:

- Seamless Integration: SAHI easily integrates with YOLO models, requiring minimal code adjustments.

- Resource Efficiency: It partitions large images into smaller slices, which keeps memory usage per inference pass low.

- High Accuracy: By effectively merging overlapping detection boxes during the stitching process, SAHI maintains high detection accuracy.

For a deeper understanding, read about SAHI's key features.

How do I handle large-scale inference projects using YOLO26 and SAHI?

To handle large-scale inference projects using YOLO26 and SAHI, follow these best practices:

- Install Required Libraries: Ensure that you have the latest versions of ultralytics and sahi.

- Configure Sliced Inference: Determine the optimal slice dimensions and overlap ratios for your specific project.

- Run Batch Predictions: Use SAHI's predict function to process an entire directory of images in a single call instead of looping over files yourself.

Example for batch prediction:

```python
from sahi.predict import predict

predict(
    model_type="ultralytics",
    model_path="path/to/yolo26n.pt",
    model_device="cpu",  # or 'cuda:0'
    model_confidence_threshold=0.4,
    source="path/to/dir",
    slice_height=256,
    slice_width=256,
    overlap_height_ratio=0.2,
    overlap_width_ratio=0.2,
)
```
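When choosing overlap ratios, one rule of thumb follows from the slicing geometry. If slices advance by a fixed stride of slice size minus overlap, an object of width w starting at position a falls into the slice that starts at the largest stride multiple not exceeding a, and it ends inside that slice whenever w is at most the overlap. So an overlap ratio of at least (largest object size) / (slice size) lets every object fit wholly inside some slice. This sketch assumes uniform strides and ignores the clamped final slice; min_overlap_ratio is a hypothetical helper.

```python
def min_overlap_ratio(max_object_px: float, slice_px: int) -> float:
    """Overlap ratio at which objects up to `max_object_px` fit inside at least one slice.

    Assumes slices advance by a fixed stride of slice_px * (1 - ratio).
    """
    if max_object_px >= slice_px:
        raise ValueError("objects this large cannot fit in one slice; use larger slices")
    return max_object_px / slice_px


ratio = min_overlap_ratio(40, 256)
print(ratio)  # 0.15625
```

For 256-pixel slices and objects up to about 40 pixels across, this gives roughly 0.16, so the 0.2 overlap used above would satisfy the rule.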

For more detailed steps, visit our section on Batch Prediction.