TensorRT Export for YOLO26 Models

Deploying computer vision models in high-performance environments can require a format that maximizes speed and efficiency. This is especially true when you are deploying your model on NVIDIA GPUs.

By using the TensorRT export format, you can enhance your Ultralytics YOLO26 models for swift and efficient inference on NVIDIA hardware. This guide will give you easy-to-follow steps for the conversion process and help you make the most of NVIDIA's advanced technology in your deep learning projects.

TensorRT

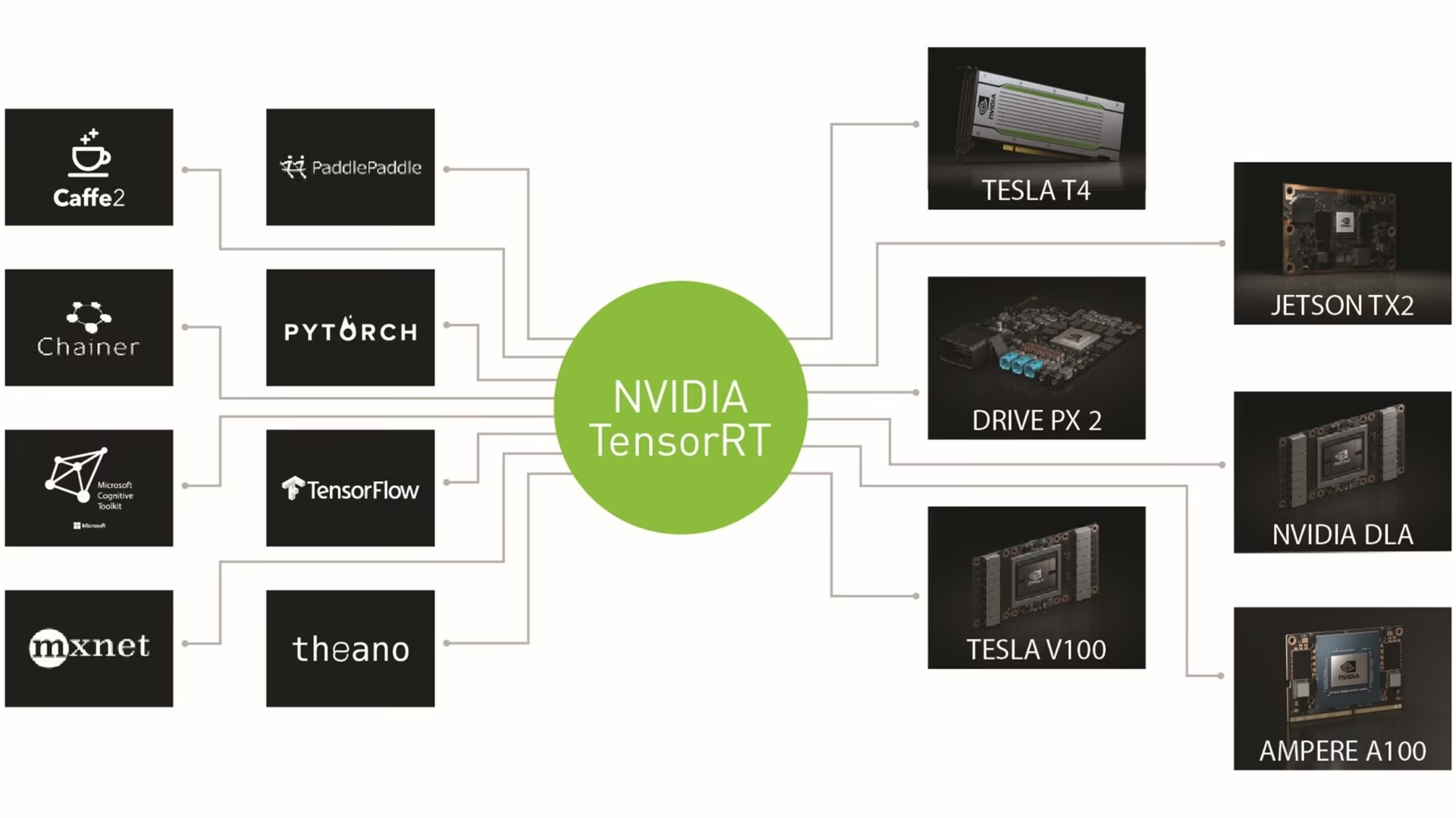

TensorRT, developed by NVIDIA, is an advanced software development kit (SDK) designed for high-speed deep learning inference. It's well-suited for real-time applications like object detection.

This toolkit optimizes deep learning models for NVIDIA GPUs and results in faster and more efficient operations. TensorRT models undergo TensorRT optimization, which includes techniques like layer fusion, precision calibration (INT8 and FP16), dynamic tensor memory management, and kernel auto-tuning. Converting deep learning models into the TensorRT format allows developers to realize the potential of NVIDIA GPUs fully.

TensorRT is known for its compatibility with various model formats, including TensorFlow, PyTorch, and ONNX, providing developers with a flexible solution for integrating and optimizing models from different frameworks. This versatility enables efficient model deployment across diverse hardware and software environments.

Key Features of TensorRT Models

TensorRT models offer a range of key features that contribute to their efficiency and effectiveness in high-speed deep learning inference:

-

Precision Calibration: TensorRT supports precision calibration, allowing models to be fine-tuned for specific accuracy requirements. This includes support for reduced precision formats like INT8 and FP16, which can further boost inference speed while maintaining acceptable accuracy levels.

-

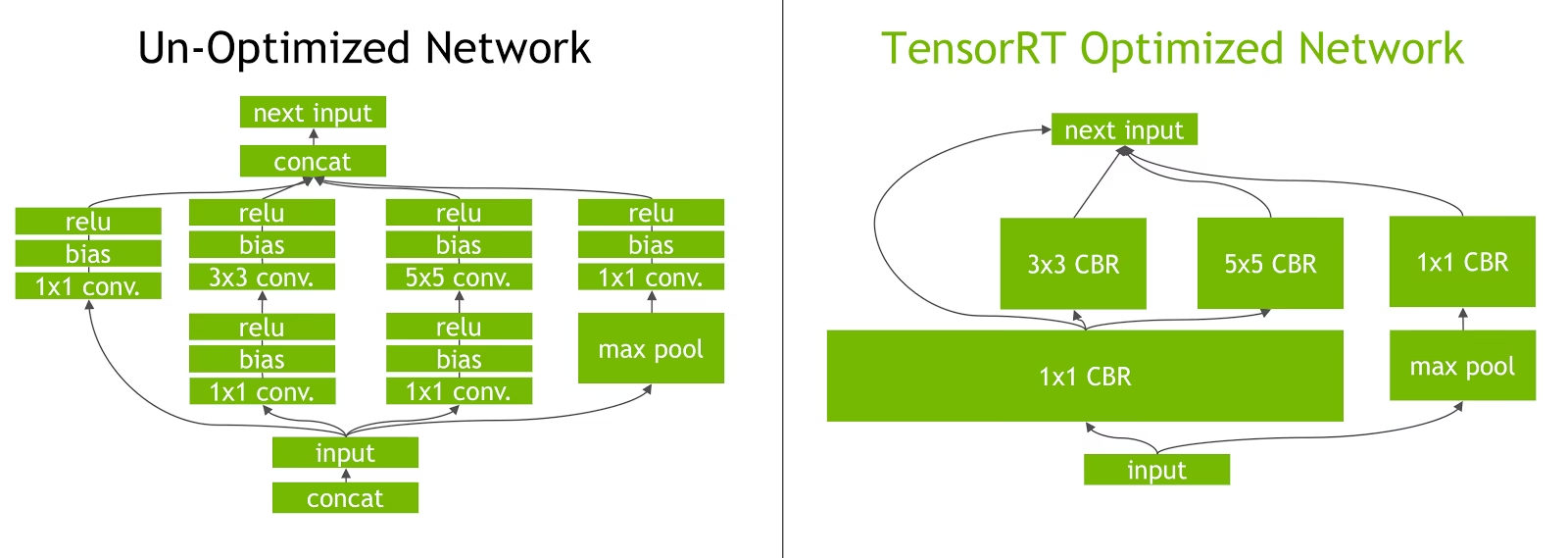

Layer Fusion: The TensorRT optimization process includes layer fusion, where multiple layers of a neural network are combined into a single operation. This reduces computational overhead and improves inference speed by minimizing memory access and computation.

-

Dynamic Tensor Memory Management: TensorRT efficiently manages tensor memory usage during inference, reducing memory overhead and optimizing memory allocation. This results in more efficient GPU memory utilization.

-

Automatic Kernel Tuning: TensorRT applies automatic kernel tuning to select the most optimized GPU kernel for each layer of the model. This adaptive approach ensures that the model takes full advantage of the GPU's computational power.

Deployment Options in TensorRT

Before we look at the code for exporting YOLO26 models to the TensorRT format, let's understand where TensorRT models are normally used.

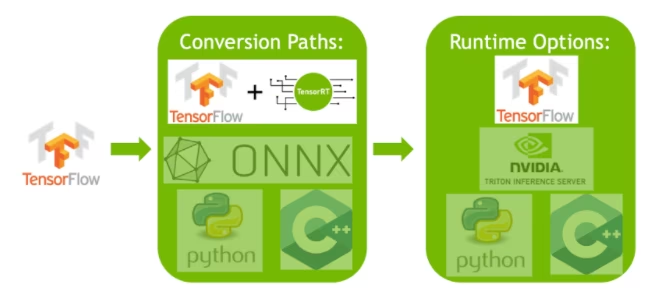

TensorRT offers several deployment options, and each option balances ease of integration, performance optimization, and flexibility differently:

- Deploying within TensorFlow: This method integrates TensorRT into TensorFlow, allowing optimized models to run in a familiar TensorFlow environment. It's useful for models with a mix of supported and unsupported layers, as TF-TRT can handle these efficiently.

-

Standalone TensorRT Runtime API: Offers granular control, ideal for performance-critical applications. It's more complex but allows for custom implementation of unsupported operators.

-

NVIDIA Triton Inference Server: An option that supports models from various frameworks. Particularly suited for cloud or edge inference, it provides features like concurrent model execution and model analysis.

Exporting YOLO26 Models to TensorRT

You can improve execution efficiency and optimize performance by converting YOLO26 models to TensorRT format.

Installation

To install the required package, run:

# Install the required package for YOLO26

pip install ultralyticsFor detailed instructions and best practices related to the installation process, check our YOLO26 Installation guide. While installing the required packages for YOLO26, if you encounter any difficulties, consult our Common Issues guide for solutions and tips.

Usage

Before diving into the usage instructions, be sure to check out the range of YOLO26 models offered by Ultralytics. This will help you choose the most appropriate model for your project requirements.

from ultralytics import YOLO

# Load the YOLO26 model

model = YOLO("yolo26n.pt")

# Export the model to TensorRT format

model.export(format="engine") # creates 'yolo26n.engine'

# Load the exported TensorRT model

tensorrt_model = YOLO("yolo26n.engine")

# Run inference

results = tensorrt_model("https://ultralytics.com/images/bus.jpg")Export Arguments

| Argument | Type | Default | Description |

|---|---|---|---|

format | str | 'engine' | Target format for the exported model, defining compatibility with various deployment environments. |

imgsz | int or tuple | 640 | Desired image size for the model input. Can be an integer for square images or a tuple (height, width) for specific dimensions. |

half | bool | False | Enables FP16 (half-precision) quantization, reducing model size and potentially speeding up inference on supported hardware. |

int8 | bool | False | Activates INT8 quantization, further compressing the model and speeding up inference with minimal accuracy loss, primarily for edge devices. |

dynamic | bool | False | Allows dynamic input sizes, enhancing flexibility in handling varying image dimensions. |

simplify | bool | True | Simplifies the model graph with onnxslim, potentially improving performance and compatibility. |

workspace | float or None | None | Sets the maximum workspace size in GiB for TensorRT optimizations, balancing memory usage and performance; use None for auto-allocation by TensorRT up to device maximum. |

nms | bool | False | Adds Non-Maximum Suppression (NMS), essential for accurate and efficient detection post-processing. |

batch | int | 1 | Specifies export model batch inference size or the max number of images the exported model will process concurrently in predict mode. |

data | str | 'coco8.yaml' | Path to the dataset configuration file (default: coco8.yaml), essential for quantization. |

fraction | float | 1.0 | Specifies the fraction of the dataset to use for INT8 quantization calibration. Allows for calibrating on a subset of the full dataset, useful for experiments or when resources are limited. If not specified with INT8 enabled, the full dataset will be used. |

device | str | None | Specifies the device for exporting: GPU (device=0), DLA for NVIDIA Jetson (device=dla:0 or device=dla:1). |

Please make sure to use a GPU with CUDA support when exporting to TensorRT.

For more details about the export process, visit the Ultralytics documentation page on exporting.

Exporting TensorRT with INT8 Quantization

Exporting Ultralytics YOLO models using TensorRT with INT8 precision executes post-training quantization (PTQ). TensorRT uses calibration for PTQ, which measures the distribution of activations within each activation tensor as the YOLO model processes inference on representative input data, and then uses that distribution to estimate scale values for each tensor. Each activation tensor that is a candidate for quantization has an associated scale that is deduced by a calibration process.

When processing implicitly quantized networks TensorRT uses INT8 opportunistically to optimize layer execution time. If a layer runs faster in INT8 and has assigned quantization scales on its data inputs and outputs, then a kernel with INT8 precision is assigned to that layer, otherwise TensorRT selects a precision of either FP32 or FP16 for the kernel based on whichever results in faster execution time for that layer.

It is critical to ensure that the same device that will use the TensorRT model weights for deployment is used for exporting with INT8 precision, as the calibration results can vary across devices.

Configuring INT8 Export

The arguments provided when using export for an Ultralytics YOLO model will greatly influence the performance of the exported model. They will also need to be selected based on the device resources available, however the default arguments should work for most Ampere (or newer) NVIDIA discrete GPUs. The calibration algorithm used is "MINMAX_CALIBRATION" and you can read more details about the options available in the TensorRT Developer Guide. Ultralytics tests found that "MINMAX_CALIBRATION" was the best choice and exports are fixed to using this algorithm.

-

workspace: Controls the size (in GiB) of the device memory allocation while converting the model weights.-

Adjust the

workspacevalue according to your calibration needs and resource availability. While a largerworkspacemay increase calibration time, it allows TensorRT to explore a wider range of optimization tactics, potentially enhancing model performance and accuracy. Conversely, a smallerworkspacecan reduce calibration time but may limit the optimization strategies, affecting the quality of the quantized model. -

Default is

workspace=None, which will allow for TensorRT to automatically allocate memory, when configuring manually, this value may need to be increased if calibration crashes (exits without warning). -

TensorRT will report

UNSUPPORTED_STATEduring export if the value forworkspaceis larger than the memory available to the device, which means the value forworkspaceshould be lowered or set toNone. -

If

workspaceis set to max value and calibration fails/crashes, consider usingNonefor auto-allocation or by reducing the values forimgszandbatchto reduce memory requirements. -

Remember calibration for INT8 is specific to each device, borrowing a "high-end" GPU for calibration, might result in poor performance when inference is run on another device.

-

-

batch: The maximum batch-size that will be used for inference. During inference smaller batches can be used, but inference will not accept batches any larger than what is specified.

Using small batches can lead to inaccurate scaling during INT8 calibration. This is because the process adjusts based on the data it sees. Small batches might not capture the full range of values, leading to issues with the final calibration. Using a larger batch size helps ensure more representative calibration results.

Experimentation by NVIDIA led them to recommend using at least 500 calibration images that are representative of the data for your model, with INT8 quantization calibration. This is a guideline and not a hard requirement, and you will need to experiment with what is required to perform well for your dataset. Since the calibration data is required for INT8 calibration with TensorRT, make certain to use the data argument when int8=True for TensorRT and use data="my_dataset.yaml", which will use the images from validation to calibrate with. When no value is passed for data with export to TensorRT with INT8 quantization, the default will be to use one of the "small" example datasets based on the model task instead of throwing an error.

from ultralytics import YOLO

model = YOLO("yolo26n.pt")

model.export(

format="engine",

dynamic=True, # (1)!

batch=8, # (2)!

workspace=4, # (3)!

int8=True,

data="coco.yaml", # (4)!

)

# Load the exported TensorRT INT8 model

model = YOLO("yolo26n.engine", task="detect")

# Run inference

result = model.predict("https://ultralytics.com/images/bus.jpg")- Exports with dynamic axes, this will be enabled by default when exporting with

int8=Trueeven when not explicitly set. See export arguments for additional information. - Sets max batch size of 8 for exported model and INT8 calibration.

- Allocates 4 GiB of memory instead of allocating the entire device for conversion process.

- Uses COCO dataset for calibration, specifically the images used for validation (5,000 total).

Calibration Cache

TensorRT will generate a calibration .cache which can be reused to speed up export of future model weights using the same data, but this may result in poor calibration when the data is vastly different or if the batch value is changed drastically. In these circumstances, the existing .cache should be renamed and moved to a different directory or deleted entirely.

Advantages of using YOLO with TensorRT INT8

-

Reduced model size: Quantization from FP32 to INT8 can reduce the model size by 4x (on disk or in memory), leading to faster download times. lower storage requirements, and reduced memory footprint when deploying a model.

-

Lower power consumption: Reduced precision operations for INT8 exported YOLO models can consume less power compared to FP32 models, especially for battery-powered devices.

-

Improved inference speeds: TensorRT optimizes the model for the target hardware, potentially leading to faster inference speeds on GPUs, embedded devices, and accelerators.

Note on Inference Speeds

The first few inference calls with a model exported to TensorRT INT8 can be expected to have longer than usual preprocessing, inference, and/or postprocessing times. This may also occur when changing imgsz during inference, especially when imgsz is not the same as what was specified during export (export imgsz is set as TensorRT "optimal" profile).

Drawbacks of using YOLO with TensorRT INT8

-

Decreases in evaluation metrics: Using a lower precision will mean that

mAP,Precision,Recallor any other metric used to evaluate model performance is likely to be somewhat worse. See the Performance results section to compare the differences inmAP50andmAP50-95when exporting with INT8 on small sample of various devices. -

Increased development times: Finding the "optimal" settings for INT8 calibration for dataset and device can take a significant amount of testing.

-

Hardware dependency: Calibration and performance gains could be highly hardware dependent and model weights are less transferable.

Ultralytics YOLO TensorRT Export Performance

NVIDIA A100

Tested with Ubuntu 22.04.3 LTS, python 3.10.12, ultralytics==8.2.4, tensorrt==8.6.1.post1

See Detection Docs for usage examples with these models trained on COCO, which include 80 pretrained classes.

Inference times shown for mean, min (fastest), and max (slowest) for each test using pretrained weights yolov8n.engine

| Precision | Eval test | mean (ms) | min | max (ms) | mAPval 50(B) | mAPval 50-95(B) | batch | size (pixels) |

|---|---|---|---|---|---|---|---|

| FP32 | Predict | 0.52 | 0.51 | 0.56 | 8 | 640 | ||

| FP32 | COCOval | 0.52 | 0.52 | 0.37 | 1 | 640 | |

| FP16 | Predict | 0.34 | 0.34 | 0.41 | 8 | 640 | ||

| FP16 | COCOval | 0.33 | 0.52 | 0.37 | 1 | 640 | |

| INT8 | Predict | 0.28 | 0.27 | 0.31 | 8 | 640 | ||

| INT8 | COCOval | 0.29 | 0.47 | 0.33 | 1 | 640 |

Consumer GPUs

Tested with Windows 10.0.19045, python 3.10.9, ultralytics==8.2.4, tensorrt==10.0.0b6

Inference times shown for mean, min (fastest), and max (slowest) for each test using pretrained weights yolov8n.engine

| Precision | Eval test | mean (ms) | min | max (ms) | mAPval 50(B) | mAPval 50-95(B) | batch | size (pixels) |

|---|---|---|---|---|---|---|---|

| FP32 | Predict | 1.06 | 0.75 | 1.88 | 8 | 640 | ||

| FP32 | COCOval | 1.37 | 0.52 | 0.37 | 1 | 640 | |

| FP16 | Predict | 0.62 | 0.75 | 1.13 | 8 | 640 | ||

| FP16 | COCOval | 0.85 | 0.52 | 0.37 | 1 | 640 | |

| INT8 | Predict | 0.52 | 0.38 | 1.00 | 8 | 640 | ||

| INT8 | COCOval | 0.74 | 0.47 | 0.33 | 1 | 640 |

Embedded Devices

Tested with JetPack 6.0 (L4T 36.3) Ubuntu 22.04.4 LTS, python 3.10.12, ultralytics==8.2.16, tensorrt==10.0.1

Inference times shown for mean, min (fastest), and max (slowest) for each test using pretrained weights yolov8n.engine

| Precision | Eval test | mean (ms) | min | max (ms) | mAPval 50(B) | mAPval 50-95(B) | batch | size (pixels) |

|---|---|---|---|---|---|---|---|

| FP32 | Predict | 6.11 | 6.10 | 6.29 | 8 | 640 | ||

| FP32 | COCOval | 6.17 | 0.52 | 0.37 | 1 | 640 | |

| FP16 | Predict | 3.18 | 3.18 | 3.20 | 8 | 640 | ||

| FP16 | COCOval | 3.19 | 0.52 | 0.37 | 1 | 640 | |

| INT8 | Predict | 2.30 | 2.29 | 2.35 | 8 | 640 | ||

| INT8 | COCOval | 2.32 | 0.46 | 0.32 | 1 | 640 |

See our quickstart guide on NVIDIA Jetson with Ultralytics YOLO to learn more about setup and configuration.

See our quickstart guide on NVIDIA DGX Spark with Ultralytics YOLO to learn more about setup and configuration.

Evaluation methods

Expand sections below for information on how these models were exported and tested.

Export configurations

See export mode for details regarding export configuration arguments.

from ultralytics import YOLO

model = YOLO("yolo26n.pt")

# TensorRT FP32

out = model.export(format="engine", imgsz=640, dynamic=True, verbose=False, batch=8, workspace=2)

# TensorRT FP16

out = model.export(format="engine", imgsz=640, dynamic=True, verbose=False, batch=8, workspace=2, half=True)

# TensorRT INT8 with calibration `data` (i.e. COCO, ImageNet, or DOTAv1 for appropriate model task)

out = model.export(

format="engine", imgsz=640, dynamic=True, verbose=False, batch=8, workspace=2, int8=True, data="coco8.yaml"

)Predict loop

See predict mode for additional information.

import cv2

from ultralytics import YOLO

model = YOLO("yolo26n.engine")

img = cv2.imread("path/to/image.jpg")

for _ in range(100):

result = model.predict(

[img] * 8, # batch=8 of the same image

verbose=False,

device="cuda",

)Validation configuration

See val mode to learn more about validation configuration arguments.

from ultralytics import YOLO

model = YOLO("yolo26n.engine")

results = model.val(

data="data.yaml", # COCO, ImageNet, or DOTAv1 for appropriate model task

batch=1,

imgsz=640,

verbose=False,

device="cuda",

)Deploying Exported YOLO26 TensorRT Models

Having successfully exported your Ultralytics YOLO26 models to TensorRT format, you're now ready to deploy them. For in-depth instructions on deploying your TensorRT models in various settings, take a look at the following resources:

-

Deploy Ultralytics with a Triton Server: Our guide on how to use NVIDIA's Triton Inference (formerly TensorRT Inference) Server specifically for use with Ultralytics YOLO models.

-

Deploying Deep Neural Networks with NVIDIA TensorRT: This article explains how to use NVIDIA TensorRT to deploy deep neural networks on GPU-based deployment platforms efficiently.

-

End-to-End AI for NVIDIA-Based PCs: NVIDIA TensorRT Deployment: This blog post explains the use of NVIDIA TensorRT for optimizing and deploying AI models on NVIDIA-based PCs.

-

GitHub Repository for NVIDIA TensorRT:: This is the official GitHub repository that contains the source code and documentation for NVIDIA TensorRT.

Summary

In this guide, we focused on converting Ultralytics YOLO26 models to NVIDIA's TensorRT model format. This conversion step is crucial for improving the efficiency and speed of YOLO26 models, making them more effective and suitable for diverse deployment environments.

For more information on usage details, take a look at the TensorRT official documentation.

If you're curious about additional Ultralytics YOLO26 integrations, our integration guide page provides an extensive selection of informative resources and insights.

FAQ

How do I convert YOLO26 models to TensorRT format?

To convert your Ultralytics YOLO26 models to TensorRT format for optimized NVIDIA GPU inference, follow these steps:

-

Install the required package:

pip install ultralytics -

Export your YOLO26 model:

from ultralytics import YOLO model = YOLO("yolo26n.pt") model.export(format="engine") # creates 'yolo26n.engine' # Run inference model = YOLO("yolo26n.engine") results = model("https://ultralytics.com/images/bus.jpg")

For more details, visit the YOLO26 Installation guide and the export documentation.

What are the benefits of using TensorRT for YOLO26 models?

Using TensorRT to optimize YOLO26 models offers several benefits:

- Faster Inference Speed: TensorRT optimizes the model layers and uses precision calibration (INT8 and FP16) to speed up inference without significantly sacrificing accuracy.

- Memory Efficiency: TensorRT manages tensor memory dynamically, reducing overhead and improving GPU memory utilization.

- Layer Fusion: Combines multiple layers into single operations, reducing computational complexity.

- Kernel Auto-Tuning: Automatically selects optimized GPU kernels for each model layer, ensuring maximum performance.

To learn more, explore the official TensorRT documentation from NVIDIA and our in-depth TensorRT overview.

Can I use INT8 quantization with TensorRT for YOLO26 models?

Yes, you can export YOLO26 models using TensorRT with INT8 quantization. This process involves post-training quantization (PTQ) and calibration:

-

Export with INT8:

from ultralytics import YOLO model = YOLO("yolo26n.pt") model.export(format="engine", batch=8, workspace=4, int8=True, data="coco.yaml") -

Run inference:

from ultralytics import YOLO model = YOLO("yolo26n.engine", task="detect") result = model.predict("https://ultralytics.com/images/bus.jpg")

For more details, refer to the exporting TensorRT with INT8 quantization section.

How do I deploy YOLO26 TensorRT models on an NVIDIA Triton Inference Server?

Deploying YOLO26 TensorRT models on an NVIDIA Triton Inference Server can be done using the following resources:

- Deploy Ultralytics YOLO26 with Triton Server: Step-by-step guidance on setting up and using Triton Inference Server.

- NVIDIA Triton Inference Server Documentation: Official NVIDIA documentation for detailed deployment options and configurations.

These guides will help you integrate YOLO26 models efficiently in various deployment environments.

What are the performance improvements observed with YOLO26 models exported to TensorRT?

Performance improvements with TensorRT can vary based on the hardware used. Here are some typical benchmarks:

-

NVIDIA A100:

- FP32 Inference: ~0.52 ms / image

- FP16 Inference: ~0.34 ms / image

- INT8 Inference: ~0.28 ms / image

- Slight reduction in mAP with INT8 precision, but significant improvement in speed.

-

Consumer GPUs (e.g., RTX 3080):

- FP32 Inference: ~1.06 ms / image

- FP16 Inference: ~0.62 ms / image

- INT8 Inference: ~0.52 ms / image

Detailed performance benchmarks for different hardware configurations can be found in the performance section.

For more comprehensive insights into TensorRT performance, refer to the Ultralytics documentation and our performance analysis reports.