COCO128 Dataset

Introduction

Ultralytics COCO128 is a small but versatile object detection dataset composed of the first 128 images of the COCO train 2017 set. It is well suited to testing and debugging object detection models, or to experimenting with new detection approaches. With 128 images, it is small enough to be easily manageable, yet diverse enough to test training pipelines for errors and act as a sanity check before training on larger datasets.


This dataset is intended for use with Ultralytics Platform and YOLO26.

Dataset YAML

A YAML (YAML Ain't Markup Language) file defines the dataset configuration. It contains the dataset's paths, class names, and other relevant settings. For the COCO128 dataset, the coco128.yaml file is maintained at https://github.com/ultralytics/ultralytics/blob/main/ultralytics/cfg/datasets/coco128.yaml.

# Ultralytics 🚀 AGPL-3.0 License - https://ultralytics.com/license

# COCO128 dataset https://www.kaggle.com/datasets/ultralytics/coco128 (first 128 images from COCO train2017) by Ultralytics
# Documentation: https://docs.ultralytics.com/datasets/detect/coco/
# Example usage: yolo train data=coco128.yaml
# parent
# ├── ultralytics
# └── datasets
#     └── coco128 ← downloads here (7 MB)

# Train/val/test sets as 1) dir: path/to/imgs, 2) file: path/to/imgs.txt, or 3) list: [path/to/imgs1, path/to/imgs2, ..]
path: coco128 # dataset root dir
train: images/train2017 # train images (relative to 'path') 128 images
val: images/train2017 # val images (relative to 'path') 128 images
test: # test images (optional)

# Classes
names:
  0: person
  1: bicycle
  2: car
  3: motorcycle
  4: airplane
  5: bus
  6: train
  7: truck
  8: boat
  9: traffic light
  10: fire hydrant
  11: stop sign
  12: parking meter
  13: bench
  14: bird
  15: cat
  16: dog
  17: horse
  18: sheep
  19: cow
  20: elephant
  21: bear
  22: zebra
  23: giraffe
  24: backpack
  25: umbrella
  26: handbag
  27: tie
  28: suitcase
  29: frisbee
  30: skis
  31: snowboard
  32: sports ball
  33: kite
  34: baseball bat
  35: baseball glove
  36: skateboard
  37: surfboard
  38: tennis racket
  39: bottle
  40: wine glass
  41: cup
  42: fork
  43: knife
  44: spoon
  45: bowl
  46: banana
  47: apple
  48: sandwich
  49: orange
  50: broccoli
  51: carrot
  52: hot dog
  53: pizza
  54: donut
  55: cake
  56: chair
  57: couch
  58: potted plant
  59: bed
  60: dining table
  61: toilet
  62: tv
  63: laptop
  64: mouse
  65: remote
  66: keyboard
  67: cell phone
  68: microwave
  69: oven
  70: toaster
  71: sink
  72: refrigerator
  73: book
  74: clock
  75: vase
  76: scissors
  77: teddy bear
  78: hair drier
  79: toothbrush

# Download script/URL (optional)
download: https://github.com/ultralytics/assets/releases/download/v0.0.0/coco128.zip

Usage
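Before training, it can help to check that a dataset configuration is internally consistent. The following is a minimal sketch and not part of the Ultralytics API: it mirrors a few fields of coco128.yaml in a plain Python dict (class names abbreviated to the first three), resolves each split relative to path as the YAML comments describe, and checks that class IDs run from 0 without gaps. The helper names resolve_splits and check_class_ids are hypothetical.

```python
from pathlib import Path

# Abbreviated mirror of coco128.yaml (only the first three of the 80 classes).
config = {
    "path": "coco128",
    "train": "images/train2017",
    "val": "images/train2017",
    "test": None,  # optional, left empty in the YAML
    "names": {0: "person", 1: "bicycle", 2: "car"},
}


def resolve_splits(cfg: dict) -> dict:
    """Join each non-empty split onto the dataset root given by 'path'."""
    root = Path(cfg["path"])
    return {
        split: root / cfg[split]
        for split in ("train", "val", "test")
        if cfg.get(split)
    }


def check_class_ids(names: dict) -> bool:
    """Return True when the class IDs are exactly 0..N-1."""
    return sorted(names) == list(range(len(names)))


splits = resolve_splits(config)
for split, location in splits.items():
    print(f"{split}: {location.as_posix()}")
print("class IDs contiguous:", check_class_ids(config["names"]))
```

In this configuration train and val resolve to the same directory, so validation metrics on COCO128 are measured on the training images and are best read as a pipeline check rather than a measure of generalization.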

To train a YOLO26n model on the COCO128 dataset for 100 epochs with an image size of 640, you can use the following code snippets. For a comprehensive list of available arguments, refer to the model Training page.

from ultralytics import YOLO

# Load a model
model = YOLO("yolo26n.pt")  # load a pretrained model (recommended for training)

# Train the model
results = model.train(data="coco128.yaml", epochs=100, imgsz=640)

Sample Images and Annotations

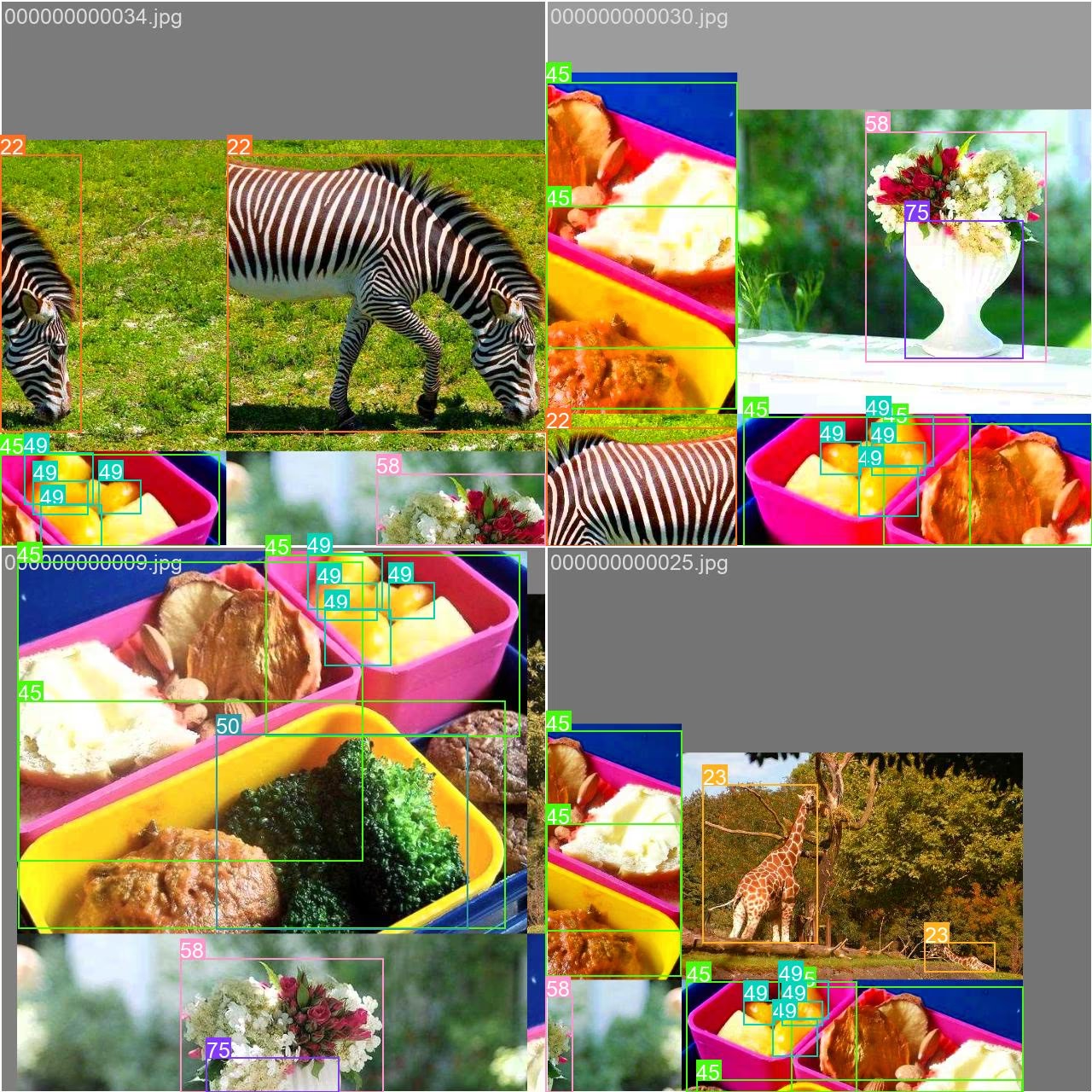

Here are some examples of images from the COCO128 dataset, along with their corresponding annotations:

- Mosaiced Image: This image demonstrates a training batch composed of mosaiced dataset images. Mosaicing is a technique used during training that combines multiple images into a single image to increase the variety of objects and scenes within each training batch. This helps improve the model's ability to generalize to different object sizes, aspect ratios, and contexts.

The example showcases the variety and complexity of the images in the COCO128 dataset and the benefits of using mosaicing during the training process.
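To make the idea of mosaicing concrete, here is a deliberately simplified sketch of what happens to the annotations when four images are tiled into a 2x2 grid. It assumes four equally sized tiles and normalized (cx, cy, w, h) boxes, and it omits the random mosaic centre, rescaling, and cropping that a real training pipeline would typically apply. It is not the Ultralytics implementation, and the names remap_boxes and mosaic_labels are hypothetical.

```python
# Simplified 2x2 mosaic: each source image fills one quadrant of the canvas.
Box = tuple[float, float, float, float]  # normalized (cx, cy, w, h)

# (column, row) position of each tile in the 2x2 grid:
# 0 = top-left, 1 = top-right, 2 = bottom-left, 3 = bottom-right
TILE_OFFSETS = [(0, 0), (1, 0), (0, 1), (1, 1)]


def remap_boxes(tile_index: int, boxes: list[Box]) -> list[Box]:
    """Map boxes from one tile's coordinates into mosaic coordinates."""
    col, row = TILE_OFFSETS[tile_index]
    remapped = []
    for cx, cy, w, h in boxes:
        # Each tile covers half the canvas along both axes, so sizes halve
        # and centres shift by the tile's column/row offset.
        remapped.append(((cx + col) / 2, (cy + row) / 2, w / 2, h / 2))
    return remapped


def mosaic_labels(per_image_boxes: list[list[Box]]) -> list[Box]:
    """Combine the labels of exactly four images into one mosaic label list."""
    if len(per_image_boxes) != 4:
        raise ValueError("a 2x2 mosaic needs exactly four images")
    combined = []
    for tile_index, boxes in enumerate(per_image_boxes):
        combined.extend(remap_boxes(tile_index, boxes))
    return combined


# One box in the top-left image, one full-frame box in the bottom-right image.
labels = mosaic_labels([[(0.5, 0.5, 0.5, 0.5)], [], [], [(0.5, 0.5, 1.0, 1.0)]])
for box in labels:
    print(box)
```

Because every object shrinks to a quarter of its original area and appears next to content from three other images, even this fixed-grid version changes the scales and contexts a model sees.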

Citations and Acknowledgments

If you use the COCO dataset in your research or development work, please cite the following paper:

@misc{lin2015microsoft,
      title={Microsoft COCO: Common Objects in Context},
      author={Tsung-Yi Lin and Michael Maire and Serge Belongie and Lubomir Bourdev and Ross Girshick and James Hays and Pietro Perona and Deva Ramanan and C. Lawrence Zitnick and Piotr Dollár},
      year={2015},
      eprint={1405.0312},
      archivePrefix={arXiv},
      primaryClass={cs.CV}
}

We would like to acknowledge the COCO Consortium for creating and maintaining this valuable resource for the computer vision community. For more information about the COCO dataset and its creators, visit the COCO dataset website.

FAQ

What is the Ultralytics COCO128 dataset used for?

The Ultralytics COCO128 dataset is a compact subset containing the first 128 images from the COCO train 2017 dataset. It's primarily used for testing and debugging object detection models, experimenting with new detection approaches, and validating training pipelines before scaling to larger datasets. Its manageable size makes it perfect for quick iterations while still providing enough diversity to be a meaningful test case.

How do I train a YOLO26 model using the COCO128 dataset?

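To train a YOLO26 model on the COCO128 dataset, you can use either Python or the CLI. The CLI form follows the pattern given in the dataset YAML header (yolo train data=coco128.yaml); the Python version is: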

from ultralytics import YOLO

# Load a pretrained model
model = YOLO("yolo26n.pt")

# Train the model
results = model.train(data="coco128.yaml", epochs=100, imgsz=640)

For more training options and parameters, refer to the Training documentation.

What are the benefits of using mosaic augmentation with COCO128?

Mosaic augmentation, as shown in the sample images, combines multiple training images into a single composite image. This technique offers several benefits when training with COCO128:

- Increases the variety of objects and contexts within each training batch

- Improves model generalization across different object sizes and aspect ratios

- Enhances detection performance for objects at various scales

- Maximizes the utility of a small dataset by creating more diverse training samples

This technique is particularly valuable for smaller datasets like COCO128, helping models learn more robust features from limited data.

How does COCO128 compare to other COCO dataset variants?

COCO128 (128 images) sits between COCO8 (8 images) and the full COCO dataset (118K+ images) in terms of size:

- COCO8: Contains just 8 images (4 train, 4 val) - ideal for quick tests and debugging

- COCO128: Contains 128 images - balanced between size and diversity

- Full COCO: Contains 118K+ training images - comprehensive but resource-intensive

COCO128 provides a good middle ground, offering more diversity than COCO8 while remaining much more manageable than the full COCO dataset for experimentation and initial model development.

Can I use COCO128 for tasks other than object detection?

While COCO128 is primarily designed for object detection, the dataset's annotations can be adapted for other computer vision tasks:

- Instance segmentation: Using the segmentation masks provided in the annotations

- Keypoint detection: For images containing people with keypoint annotations

- Transfer learning: As a starting point for fine-tuning models for custom tasks

For specialized tasks like segmentation, consider using purpose-built variants like COCO8-seg which include the appropriate annotations.