Mastering YOLOv5 Deployment on Google Cloud Platform (GCP) Deep Learning VM

Embarking on the journey of artificial intelligence (AI) and machine learning (ML) can be exhilarating, especially when you leverage the power and flexibility of a cloud computing platform. Google Cloud Platform (GCP) offers robust tools tailored for ML enthusiasts and professionals alike. One such tool is the Deep Learning VM, preconfigured for data science and ML tasks. In this tutorial, we will navigate the process of setting up Ultralytics YOLOv5 on a GCP Deep Learning VM. Whether you're taking your first steps in ML or you're a seasoned practitioner, this guide provides a clear pathway to implementing object detection models powered by YOLOv5.

🆓 Plus, if you're a new GCP user, you're in luck with a $300 free credit offer to kickstart your projects.

In addition to GCP, explore other accessible quickstart options for YOLOv5, such as our Google Colab Notebook for a browser-based experience or Amazon AWS for scalable cloud training. Container aficionados can also use our official Docker image, available on Docker Hub.

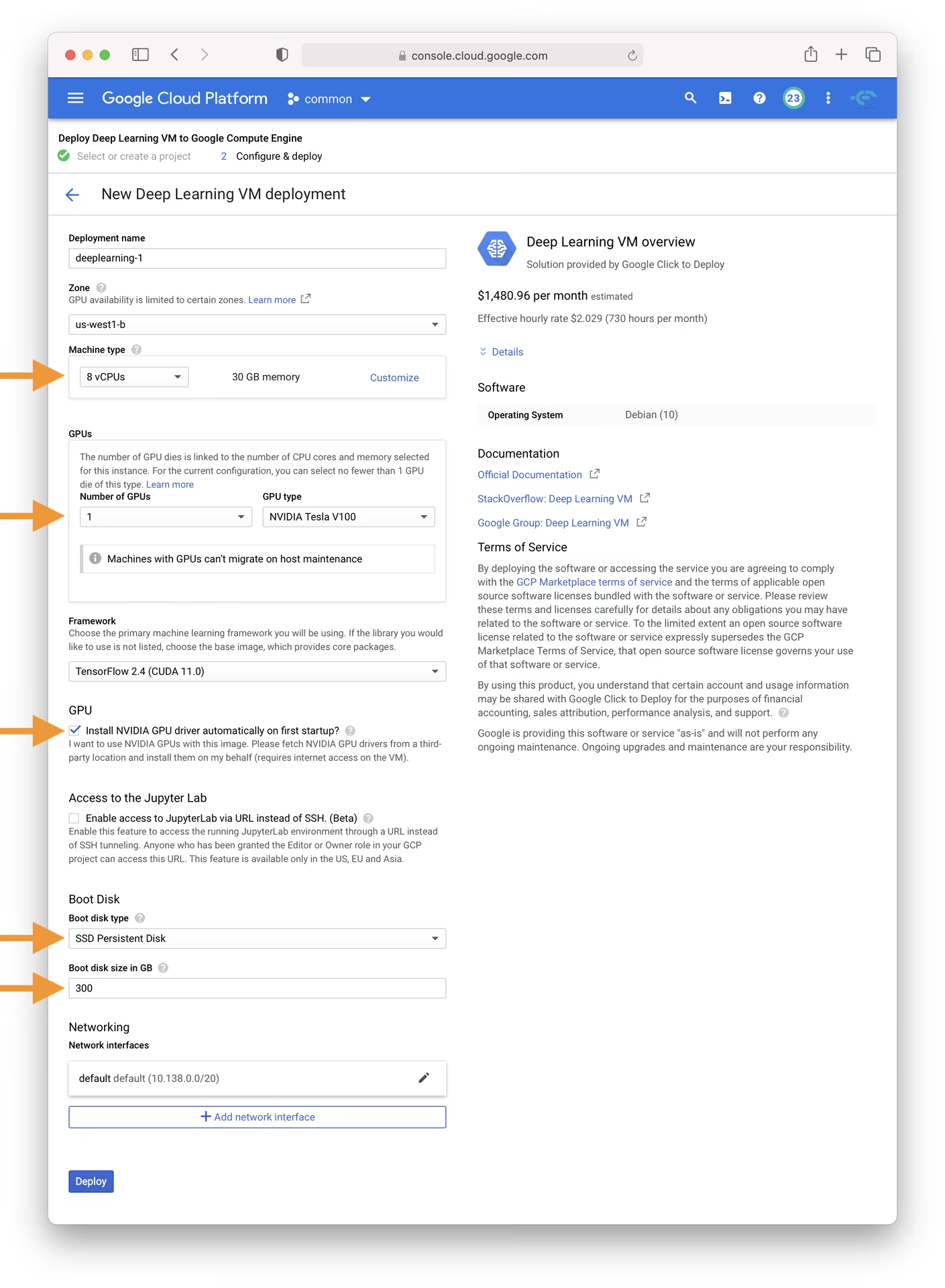

Step 1: Create and Configure Your Deep Learning VM

Let's begin by creating a virtual machine optimized for deep learning:

- Navigate to the GCP marketplace and select the Deep Learning VM.

- Choose an n1-standard-8 instance; it offers a balance of 8 vCPUs and 30 GB of memory, suitable for many ML tasks.

- Select a GPU. The choice depends on your workload; even a basic T4 GPU will significantly accelerate model training.

- Check the box for 'Install NVIDIA GPU driver automatically on first startup?' for a seamless setup.

- Allocate a 300 GB SSD Persistent Disk to help avoid I/O bottlenecks.

- Click 'Deploy' and allow GCP to provision your custom Deep Learning VM.

This VM comes pre-loaded with essential tools and frameworks, including the Anaconda Python distribution, which conveniently bundles many necessary dependencies for YOLOv5.
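If you prefer the command line to the console, the configuration above can also be expressed as a single gcloud compute instances create call. The Python sketch below only assembles that command and, if asked, runs it. The instance name, zone, and especially the image family pytorch-latest-gpu are assumptions for illustration: Deep Learning VM image families change over time, so check the current ones with gcloud compute images list --project deeplearning-platform-release before relying on this.

```python
import shlex
import subprocess
from typing import List, Optional


def dlvm_create_command(
    name: str,
    zone: str,
    machine_type: str = "n1-standard-8",
    gpu_type: str = "nvidia-tesla-t4",
    gpu_count: int = 1,
    disk_gb: int = 300,
    image_family: str = "pytorch-latest-gpu",  # assumption: verify the current family name
) -> List[str]:
    """Build a gcloud argv mirroring the console settings from Step 1."""
    return [
        "gcloud", "compute", "instances", "create", name,
        f"--zone={zone}",
        f"--machine-type={machine_type}",                  # 8 vCPUs, 30 GB memory
        f"--accelerator=type={gpu_type},count={gpu_count}",
        "--maintenance-policy=TERMINATE",                  # GPU VMs cannot live-migrate
        f"--image-family={image_family}",
        "--image-project=deeplearning-platform-release",
        f"--boot-disk-size={disk_gb}GB",
        "--boot-disk-type=pd-ssd",                         # SSD Persistent Disk
        "--metadata=install-nvidia-driver=True",           # same as the driver checkbox
    ]


def create_vm(dry_run: bool = True, **kwargs) -> Optional[subprocess.CompletedProcess]:
    """Print the command (dry run) or execute it with the local gcloud CLI."""
    cmd = dlvm_create_command(**kwargs)
    if dry_run:
        print(shlex.join(cmd))
        return None
    return subprocess.run(cmd, check=True)
```

For example, create_vm(name="yolov5-vm", zone="us-central1-a") (both values hypothetical) prints the command for review; pass dry_run=False to execute it once gcloud is authenticated.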

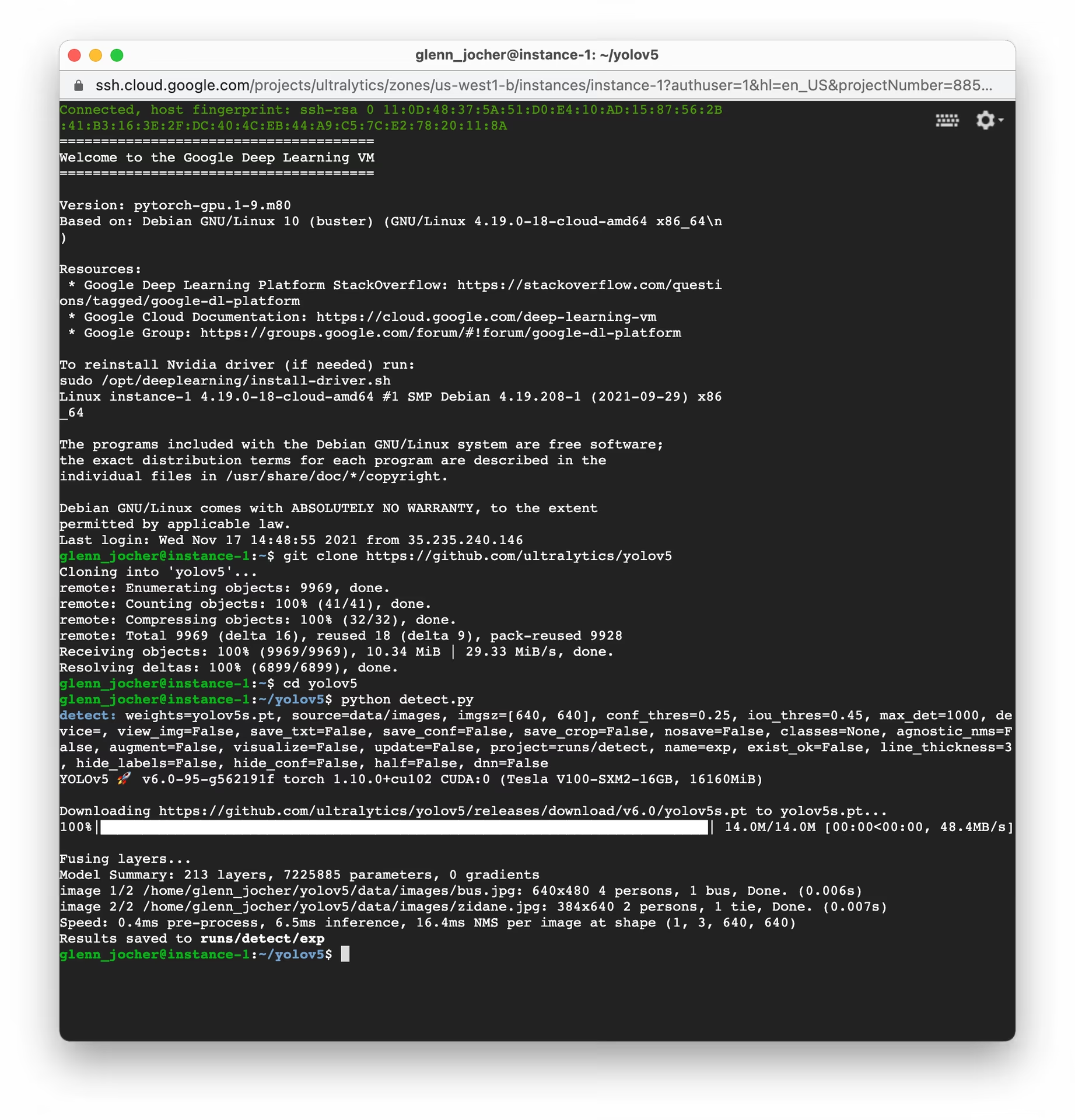

Step 2: Prepare the VM for YOLOv5

After setting up the environment, let's get YOLOv5 installed and ready:

```bash
# Clone the YOLOv5 repository
git clone https://github.com/ultralytics/yolov5
cd yolov5

# Install dependencies
pip install -r requirements.txt
```

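Installing requirements.txt pulls in the packages YOLOv5 depends on, including PyTorch 1.8 or later if a suitable version is not already installed. It does not change Python itself, so make sure the VM's environment runs Python 3.8.0 or newer. Our scripts automatically download models and datasets from the latest YOLOv5 release, simplifying the process of starting model training.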
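To confirm those minimum versions on the VM before training, a quick check like the one below can help. It is a sketch, not a YOLOv5 utility: parse_version compares only the leading numeric part of a version string (so local tags such as +cu117 and pre-release suffixes are ignored), and GPU availability is read from torch.cuda.is_available().

```python
import re
import sys
from importlib import metadata


def parse_version(version: str) -> tuple:
    """Parse the leading numeric release, e.g. '1.13.1+cu117' -> (1, 13, 1)."""
    match = re.match(r"\d+(?:\.\d+)*", version)
    if match is None:
        raise ValueError(f"unrecognized version string: {version!r}")
    return tuple(int(part) for part in match.group(0).split("."))


def check_environment(min_python=(3, 8, 0), min_torch: str = "1.8") -> dict:
    """Report whether Python and PyTorch meet YOLOv5's stated minimums."""
    report = {
        "python": sys.version.split()[0],
        "python_ok": sys.version_info[:3] >= min_python,
    }
    try:
        torch_version = metadata.version("torch")
    except metadata.PackageNotFoundError:
        report.update(torch=None, torch_ok=False, cuda=False)
        return report
    report["torch"] = torch_version
    report["torch_ok"] = parse_version(torch_version) >= parse_version(min_torch)
    import torch  # deferred so the check still runs when torch is absent

    report["cuda"] = torch.cuda.is_available()
    return report
```

Running print(check_environment()) on the VM should show python_ok and torch_ok as True, and cuda as True once the NVIDIA driver from Step 1 is installed.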

Step 3: Train and Deploy Your YOLOv5 Models

With the setup complete, you are ready to train, validate, predict, and export with YOLOv5 on your GCP VM:

```bash
# Train a YOLOv5 model (e.g., yolov5s) on the COCO128 sample dataset, starting from pretrained weights
python train.py --data coco128.yaml --weights yolov5s.pt --img 640

# Validate the trained model to check Precision, Recall, and mAP
# (weights are saved to runs/train/exp/weights/; later runs use exp2, exp3, ...)
python val.py --weights runs/train/exp/weights/best.pt --data coco128.yaml

# Run inference using the trained model on images or videos
python detect.py --weights runs/train/exp/weights/best.pt --source path/to/your/images_or_videos

# Export the trained model to various formats like ONNX, CoreML, TFLite for deployment
python export.py --weights runs/train/exp/weights/best.pt --include onnx coreml tflite
```

Using just a few commands, YOLOv5 enables you to train custom object detection models tailored to your specific needs or utilize pretrained weights for rapid results across various tasks. Explore different model deployment options after exporting.
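Training also records per-epoch metrics in a results.csv file inside the run directory (runs/train/exp/results.csv for the first run). The sketch below finds the epoch with the highest mAP@0.5:0.95. It assumes the column names written by current YOLOv5 releases (epoch, metrics/mAP_0.5, metrics/mAP_0.5:0.95, and so on), which are padded with spaces, so check the header of your own file. YOLOv5 picks best.pt with its own fitness score that weights mAP@0.5:0.95 most heavily, so the epoch reported here usually, but not necessarily always, matches best.pt.

```python
import csv
from typing import Iterable, Tuple


def best_epoch(lines: Iterable[str], metric: str = "metrics/mAP_0.5:0.95") -> Tuple[int, float]:
    """Return (epoch, value) for the row where `metric` is highest."""
    reader = csv.reader(lines)
    # YOLOv5 right-aligns its column names with spaces, so strip them first
    header = [name.strip() for name in next(reader)]
    epoch_col, metric_col = header.index("epoch"), header.index(metric)
    best = None
    for row in reader:
        if not row:
            continue  # skip blank lines
        # float() tolerates the leading spaces in YOLOv5's padded values
        epoch, value = int(float(row[epoch_col])), float(row[metric_col])
        if best is None or value > best[1]:
            best = (epoch, value)
    if best is None:
        raise ValueError("results file has a header but no epoch rows")
    return best


def best_epoch_from_file(
    path: str = "runs/train/exp/results.csv", metric: str = "metrics/mAP_0.5:0.95"
) -> Tuple[int, float]:
    """Open a results.csv file and delegate to best_epoch."""
    with open(path, newline="") as f:
        return best_epoch(f, metric)
```

Pass metric="metrics/mAP_0.5" to rank epochs by mAP@0.5 instead.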

Allocate Swap Space (Optional)

If you are working with particularly large datasets that might exceed your VM's RAM, consider adding swap space to prevent memory errors:

```bash
# Allocate a 64 GB swap file
sudo fallocate -l 64G /swapfile

# Set the correct permissions for the swap file (root read/write only)
sudo chmod 600 /swapfile

# Set up the Linux swap area
sudo mkswap /swapfile

# Enable the swap file
sudo swapon /swapfile

# Verify the swap space allocation (should show increased swap memory)
free -h
```
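free -h is the quickest check, but a script (for example, a pre-training sanity check) can read the same figures from /proc/meminfo, which reports sizes in kB (that is, KiB). A minimal sketch; the function names are hypothetical:

```python
from pathlib import Path
from typing import Dict, Tuple


def parse_meminfo(text: str) -> Dict[str, int]:
    """Parse lines like 'SwapTotal:  67108860 kB' into {'SwapTotal': 67108860}."""
    info = {}
    for line in text.splitlines():
        key, sep, rest = line.partition(":")
        fields = rest.split()
        if sep and fields and fields[0].isdigit():
            info[key.strip()] = int(fields[0])  # value in KiB
    return info


def swap_summary_gib(text: str) -> Tuple[float, float]:
    """Return (total, free) swap in GiB from /proc/meminfo-formatted text."""
    info = parse_meminfo(text)
    kib_per_gib = 1024 ** 2
    return info.get("SwapTotal", 0) / kib_per_gib, info.get("SwapFree", 0) / kib_per_gib
```

On the VM, swap_summary_gib(Path("/proc/meminfo").read_text()) should report a total of roughly 64 GiB after the steps above.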

Training Custom Datasets

To train YOLOv5 on your custom dataset within GCP, follow these general steps:

- Prepare your dataset according to the YOLOv5 format (images and corresponding label files). See our datasets overview for guidance.

- Upload your dataset to your GCP VM using gcloud compute scp or the web console's SSH feature.

- Create a dataset configuration YAML file (custom_dataset.yaml) that specifies the paths to your training and validation data, the number of classes, and the class names.

- Begin the training process using your custom dataset YAML, optionally starting from pretrained weights:

```bash
# Example: Train YOLOv5s on a custom dataset for 100 epochs
python train.py --img 640 --batch 16 --epochs 100 --data custom_dataset.yaml --weights yolov5s.pt
```
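In the YOLOv5 label format, each image has a matching .txt file with one row per object: class x_center y_center width height, where class is a zero-based index and the coordinates are normalized to 0-1 by the image width and height. YOLOv5 finds each label file by swapping the images directory in the image path for labels and the extension for .txt. The sketch below assumes your source annotations are pixel-space corner boxes (x_min, y_min, x_max, y_max); to_yolo_label and label_path_for are hypothetical helpers, not part of YOLOv5.

```python
from pathlib import PurePosixPath


def to_yolo_label(class_id: int, x_min: float, y_min: float, x_max: float, y_max: float,
                  img_w: int, img_h: int) -> str:
    """Convert a pixel corner box into one normalized YOLO label row."""
    if x_max <= x_min or y_max <= y_min:
        raise ValueError("box must have positive width and height")
    x_c = (x_min + x_max) / 2 / img_w  # normalized box center
    y_c = (y_min + y_max) / 2 / img_h
    w = (x_max - x_min) / img_w        # normalized box size
    h = (y_max - y_min) / img_h
    return f"{class_id} {x_c:.6f} {y_c:.6f} {w:.6f} {h:.6f}"


def label_path_for(image_path: str) -> str:
    """Map .../images/.../name.jpg to .../labels/.../name.txt."""
    p = PurePosixPath(image_path)
    dirs = list(p.parent.parts)
    if "images" not in dirs:
        raise ValueError(f"no 'images' directory in {image_path}")
    idx = len(dirs) - 1 - dirs[::-1].index("images")  # last 'images' component
    dirs[idx] = "labels"
    return str(PurePosixPath(*dirs, p.stem + ".txt"))
```

Write all rows for one image, joined by newlines, into the file returned by label_path_for.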

For comprehensive instructions on preparing data and training with custom datasets, consult the Ultralytics YOLOv5 Train documentation.
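The dataset YAML described in the steps above can be generated with a few lines of Python. This is a sketch assuming a layout such as ../datasets/custom/images/train and ../datasets/custom/images/val; the keys mirror YOLOv5's bundled coco128.yaml (path, train, val, names) plus an explicit nc field for the class count. The helper names and paths are hypothetical, so compare the output with the data/*.yaml files in your checkout, since accepted keys can vary between YOLOv5 versions.

```python
import json
from pathlib import Path
from typing import List


def dataset_yaml(root: str, train: str, val: str, names: List[str]) -> str:
    """Build the text of a YOLOv5 dataset YAML file."""
    lines = [
        f"path: {root}",       # dataset root directory
        f"train: {train}",     # training images, relative to 'path'
        f"val: {val}",         # validation images, relative to 'path'
        f"nc: {len(names)}",   # number of classes
        "names:",
    ]
    # JSON-quoted strings are valid YAML scalars, so names with spaces or colons stay safe
    lines += [f"  {i}: {json.dumps(name)}" for i, name in enumerate(names)]
    return "\n".join(lines) + "\n"


def write_dataset_yaml(out_path: str, root: str, train: str, val: str, names: List[str]) -> Path:
    """Write the YAML text to out_path and return the path."""
    path = Path(out_path)
    path.write_text(dataset_yaml(root, train, val, names))
    return path
```

For example, write_dataset_yaml("custom_dataset.yaml", "../datasets/custom", "images/train", "images/val", ["cat", "dog"]) produces a file that can be passed to train.py via --data.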

Leveraging Cloud Storage

For efficient data management, especially with large datasets or numerous experiments, integrate your YOLOv5 workflow with Google Cloud Storage:

```bash
# Ensure the Google Cloud SDK is installed and initialized
# If not installed: curl https://sdk.cloud.google.com/ | bash
# Then initialize: gcloud init

# Example: Copy your dataset from a GCS bucket to your VM
gsutil cp -r gs://your-data-bucket/my_dataset ./datasets/

# Example: Copy trained model weights from your VM to a GCS bucket
gsutil cp -r ./runs/train/exp/weights gs://your-models-bucket/yolov5_custom_weights/
```

This approach allows you to store large datasets and trained models securely and cost-effectively in the cloud, minimizing the storage requirements on your VM instance.
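For scripted transfers, such as uploading weights at the end of a training run, the google-cloud-storage Python client is an alternative to gsutil. This sketch assumes the package is installed (pip install google-cloud-storage) and that the VM's service account can write to the bucket; the bucket name is the placeholder from the example above, and split_gs_uri, plan_upload, and upload_dir are hypothetical helpers. It places files directly under the given prefix, which may differ from the object names gsutil cp -r produces.

```python
from pathlib import Path
from typing import List, Tuple


def split_gs_uri(uri: str) -> Tuple[str, str]:
    """Split 'gs://bucket/some/prefix/' into ('bucket', 'some/prefix')."""
    if not uri.startswith("gs://"):
        raise ValueError(f"not a gs:// URI: {uri}")
    bucket, _, prefix = uri[len("gs://"):].partition("/")
    return bucket, prefix.strip("/")


def plan_upload(local_dir: str, gs_uri: str) -> List[Tuple[str, str, str]]:
    """List (local_path, bucket, object_name) for every file under local_dir."""
    bucket, prefix = split_gs_uri(gs_uri)
    root = Path(local_dir)
    plan = []
    for path in sorted(root.rglob("*")):
        if path.is_file():
            rel = path.relative_to(root).as_posix()
            plan.append((str(path), bucket, f"{prefix}/{rel}" if prefix else rel))
    return plan


def upload_dir(local_dir: str, gs_uri: str) -> int:
    """Upload each planned file and return how many were uploaded."""
    from google.cloud import storage  # imported lazily; requires google-cloud-storage

    client = storage.Client()  # uses the VM's default credentials
    count = 0
    for local_path, bucket_name, object_name in plan_upload(local_dir, gs_uri):
        client.bucket(bucket_name).blob(object_name).upload_from_filename(local_path)
        count += 1
    return count
```

For example, upload_dir("runs/train/exp/weights", "gs://your-models-bucket/yolov5_custom_weights") uploads best.pt and last.pt under that prefix.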

Concluding Thoughts

Congratulations! You are now equipped to harness the capabilities of Ultralytics YOLOv5 combined with the computational power of Google Cloud Platform. This setup provides scalability, efficiency, and versatility for your object detection projects. Whether for personal exploration, academic research, or building industrial solutions, you have taken a significant step into the world of AI and ML on the cloud.

Consider using Ultralytics Platform for a streamlined, no-code experience to train and manage your models.

Remember to document your progress, share insights with the vibrant Ultralytics community, and utilize resources like GitHub discussions for collaboration and support. Now, go forth and innovate with YOLOv5 and GCP!

Want to continue enhancing your ML skills? Dive into our documentation and explore the Ultralytics Blog for more tutorials and insights. Let your AI adventure continue!